Connect to cloud data with no code.

Learn more → CData Software Japan - Knowledge Base

Latest Articles

- How to expose MySQL data as a REST API with no code: CData API Server

- Launching the CData Sync AMI on Amazon Web Services (AWS)

- Connect Cloud Guide: Derived Views, Saved Queries, and Custom Reports

- Connect Cloud Guide: SSO (Single Sign-On) and User-Defined Credentials

- Connect Cloud Quick Start

- How our products are handling the Shopify API version upgrade

Latest KB Entries

- DBAmp: Serial Number Expiration Date Shows 1999 or Expired

- About CData Drivers licensing

- Spring4Shell Overview

- Update Required: HubSpot Connectivity

- Configuring incremental updates in CData Sync

- Apache Log4j2 Overview

ODBC Drivers

- [ article ] Publishing Authorize.Net dashboards to Tableau Server

- [ article ] Using Google Cloud Storage as an external data source in PolyBase

- [ article ] Four ways to connect HDFS data to SQL Server. Which one is right for you?

- [ article ] Using Odoo as an external data source in PolyBase

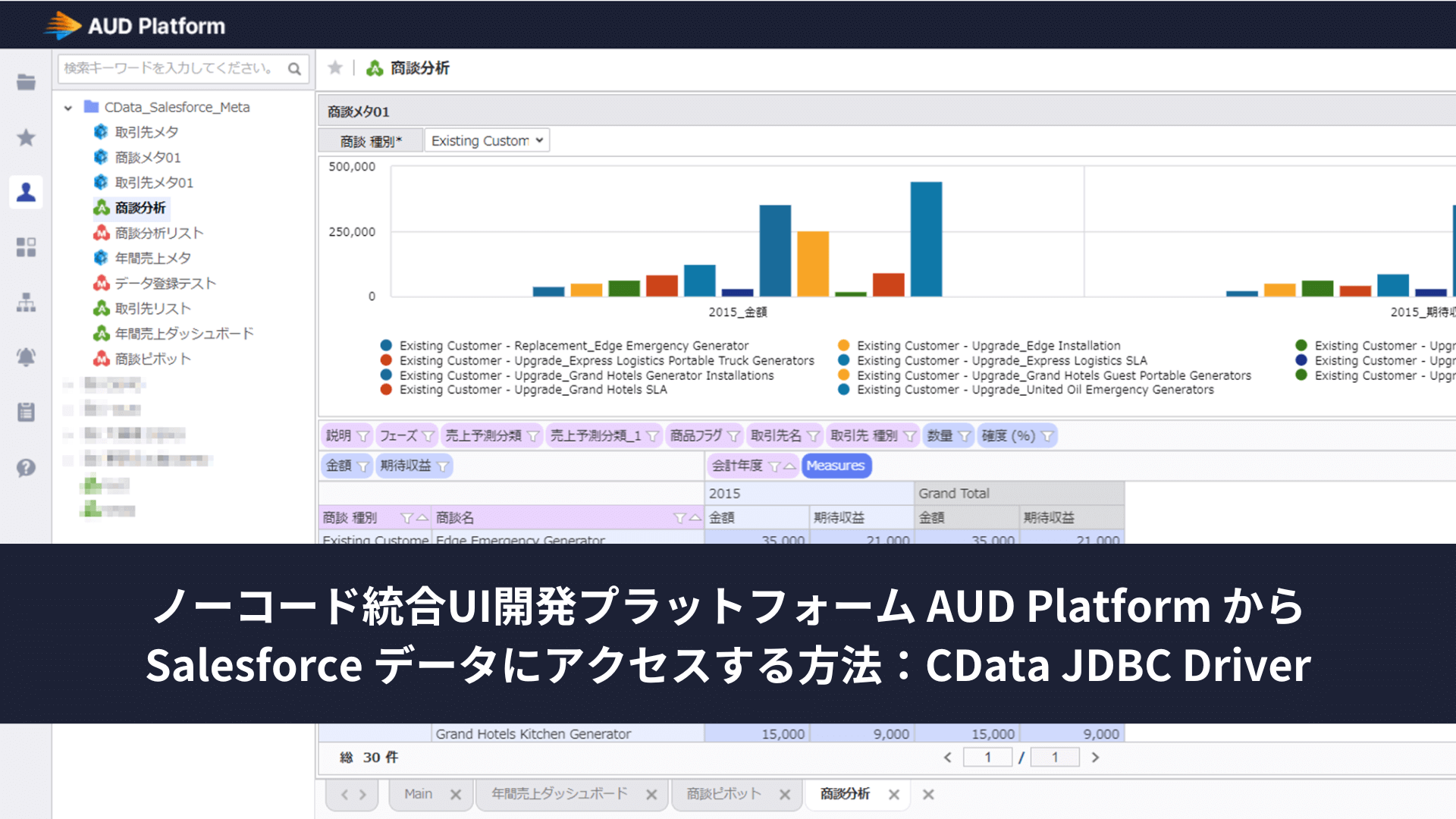

JDBC Drivers

- [ article ] Connecting to BigCommerce from SpagoBI

- [ article ] Creating reports that use Zoho Books data in CROWNIX, a reporting tool

- [ article ] In Informatica Enterprise Data Catalog, Sage 200 ...

- [ article ] In QuickSolution, a full-text search and information utilization system, JDBC-ODBC Bridge ...

SSIS Components

- [ article ] Four ways to connect Gmail data to SQL Server. Which one is right for you?

- [ article ] Backing up Amazon DynamoDB to SQL Server via SSIS

- [ article ] Importing e-Sales Manager data into SQL Server using SSIS

- [ article ] Backing up SurveyMonkey to SQL Server via SSIS

ADO.NET Providers

- [ article ] Replicating multiple Adobe Analytics accounts

- [ article ] Connecting to SAP HANA XS Advanced data from Entity Framework 6

- [ article ] Data binding HarperDB data to the DevExpress Data Grid

- [ article ] Importing data from Adobe Analytics into the FLEXSCHE production scheduler

Excel Add-Ins

- [ article ] Connecting to MySQL data from Microsoft Power Query for use in Excel

- [ article ] From Microsoft Power Query, Tableau CRM Analytics ...

- [ article ] From SharePoint Excel Services, CData ODBC Driver for ...

- [ article ] As a Remote Oracle Database, SharePoint Excel ...

API Server

- [ article ] Connecting to OData data as a virtual RDB in NetBeans IDE

- [ article ] Importing OData data into SQL Server using SSIS

- [ article ] Creating Informatica mappings to and from an OData ODBC data source

- [ article ] How to connect to OData data from Jaspersoft Studio

Data Sync

- [ article ] Replicating multiple Google Campaign Manager accounts

- [ article ] Sage 50 UK data to Vertica: ETL/ELT ...

- [ article ] Google Data Catalog data to Amazon Redshift: ETL/ELT ...

- [ article ] How to build an ETL/ELT pipeline from Tally data to SAP HANA and integrate your data

Windows PowerShell

- [ article ] Replicating Facebook data to SQL Server using PowerShell

- [ article ] Snowflake data, with a PowerShell script, to SQL Server ...

- [ article ] From PowerShell, IBM Informix ...

- [ article ] How to replicate JSON data to MySQL with PowerShell

FireDAC Components

- [ article ] Data-bound controls for Amazon DynamoDB data in Delphi

- [ article ] Data-bound controls for Dropbox data in Delphi

- [ article ] Data-bound controls for Oracle data in Delphi

- [ article ] Data-bound controls for Google Campaign Manager data in Delphi