Connect to HDFS Data in CloverDX (formerly CloverETL)

Transfer HDFS data using the visual workflow in the CloverDX data integration tool.

The CData JDBC Driver for HDFS enables you to use the data transformation components in CloverDX (formerly CloverETL) to work with HDFS as a source. In this article, you will use the JDBC Driver for HDFS to set up a simple transfer into a flat file.

Connect to HDFS as a JDBC Data Source

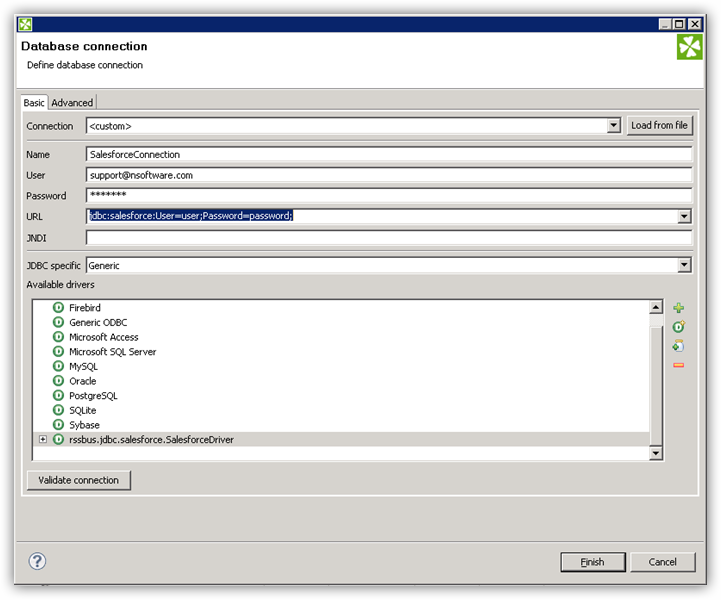

- Create the connection to HDFS data. In a new CloverDX graph, right-click the Connections node in the Outline pane and click Connections -> Create Connection. The Database Connection wizard is displayed.

- Click the plus icon to load a driver from a JAR. Browse to the lib subfolder of the installation directory and select the cdata.jdbc.hdfs.jar file.

- Enter the JDBC URL.

In order to authenticate, set the following connection properties:

- Host: Set this value to the host of your HDFS installation.

- Port: Set this value to the port of your HDFS installation. Default port: 50070

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the HDFS JDBC Driver. Either double-click the JAR file or run it from the command line:

java -jar cdata.jdbc.hdfs.jar

Fill in the connection properties and copy the connection string to the clipboard.

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

A typical JDBC URL is below:

jdbc:hdfs:Host=sandbox-hdp.hortonworks.com;Port=50070;Path=/user/root;User=root;
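The URL above is the `jdbc:hdfs:` prefix followed by semicolon-terminated `Key=Value` pairs. As a minimal sketch of that format, the hypothetical helper below (the class `HdfsJdbcUrl` and its `build` method are illustrative names, not part of the driver) assembles the same string from a map of connection properties. It does no escaping or validation of values.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class HdfsJdbcUrl {
    /** Joins connection properties into a "jdbc:hdfs:Key=Value;..." string, in insertion order. */
    public static String build(Map<String, String> props) {
        StringBuilder url = new StringBuilder("jdbc:hdfs:");
        for (Map.Entry<String, String> entry : props.entrySet()) {
            url.append(entry.getKey()).append('=').append(entry.getValue()).append(';');
        }
        return url.toString();
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the properties in the order they were added.
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Host", "sandbox-hdp.hortonworks.com");
        props.put("Port", "50070");
        props.put("Path", "/user/root");
        props.put("User", "root");
        System.out.println(build(props));
    }
}
```

Running `main` prints the typical URL shown above, which can then be pasted into the CloverDX connection wizard.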

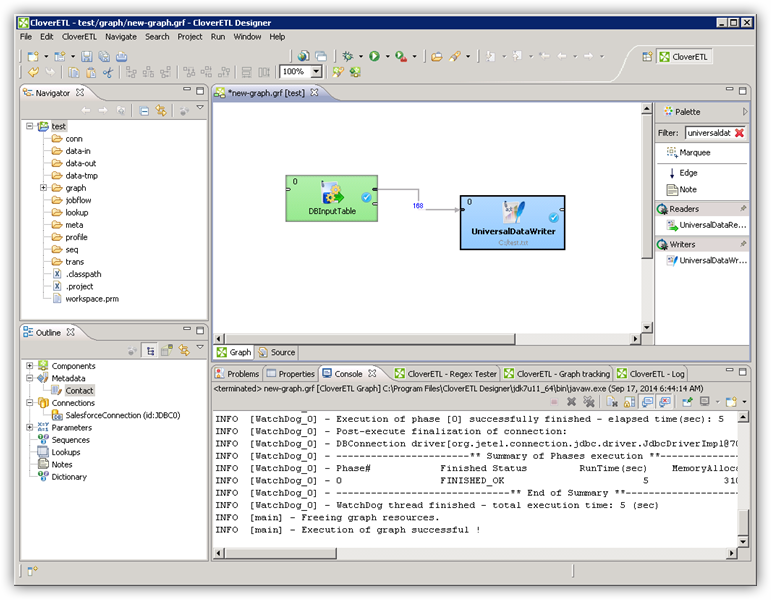

Query HDFS Data with the DBInputTable Component

- Drag a DBInputTable from the Readers section of the Palette onto the job flow and double-click it to open the configuration editor.

- In the DB connection property, select the HDFS JDBC data source from the drop-down menu.

- Enter the SQL query. For example:

SELECT FileId, ChildrenNum FROM Files WHERE FileId = '119116'
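To check the query before wiring it into a graph, it can also be run through plain JDBC. The sketch below assumes that cdata.jdbc.hdfs.jar is on the classpath, that the driver registers itself with `DriverManager`, and that it accepts a bind parameter in place of the `FileId` literal. The class name `HdfsQuerySketch` and the method `fetch` are illustrative, and the URL is the example value from this article.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.List;

public class HdfsQuerySketch {
    // Same query as in the article, with the FileId literal replaced by a bind parameter.
    public static final String QUERY =
            "SELECT FileId, ChildrenNum FROM Files WHERE FileId = ?";

    /** Runs QUERY on an open connection and returns each row as "FileId,ChildrenNum". */
    public static List<String> fetch(Connection conn, String fileId) throws SQLException {
        List<String> rows = new ArrayList<>();
        try (PreparedStatement stmt = conn.prepareStatement(QUERY)) {
            stmt.setString(1, fileId);
            try (ResultSet rs = stmt.executeQuery()) {
                while (rs.next()) {
                    rows.add(rs.getString("FileId") + "," + rs.getString("ChildrenNum"));
                }
            }
        }
        return rows;
    }

    public static void main(String[] args) {
        // Example URL from the article; adjust Host, Port, Path, and User for your cluster.
        String url = "jdbc:hdfs:Host=sandbox-hdp.hortonworks.com;Port=50070;Path=/user/root;User=root;";
        try (Connection conn = DriverManager.getConnection(url)) {
            for (String row : fetch(conn, "119116")) {
                System.out.println(row);
            }
        } catch (SQLException e) {
            // Without the driver JAR on the classpath, DriverManager reports that no suitable driver was found.
            System.err.println("Query failed: " + e.getMessage());
        }
    }
}
```

If the rows printed here look right, the same SQL can be pasted into the DBInputTable configuration editor.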

Write the Output of the Query to a UniversalDataWriter

- Drag a UniversalDataWriter from the Writers section of the Palette onto the job flow.

- Double-click the UniversalDataWriter to open the configuration editor and add a file URL.

- Right-click the DBInputTable and then click Extract Metadata.

- Connect the output port of the DBInputTable to the UniversalDataWriter.

- In the resulting Select Metadata menu for the UniversalDataWriter, choose the Files table. (You can also open this menu by right-clicking the input port for the UniversalDataWriter.)

- Click Run to write to the file.
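For comparison, the flat-file output of this graph can be approximated outside CloverDX. The sketch below is not how UniversalDataWriter is implemented. It assumes a comma-delimited layout with a header row, and the class `FlatFileSketch`, its methods, and the sample row are made up for illustration.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

public class FlatFileSketch {
    /** Builds a header line followed by one delimited line per row. No quoting or escaping is applied. */
    public static List<String> format(String[] header, List<String[]> rows, String delimiter) {
        List<String> lines = new ArrayList<>();
        lines.add(String.join(delimiter, header));
        for (String[] row : rows) {
            lines.add(String.join(delimiter, row));
        }
        return lines;
    }

    /** Writes the formatted lines to the target file as UTF-8. */
    public static void write(Path target, String[] header, List<String[]> rows, String delimiter)
            throws IOException {
        Files.write(target, format(header, rows, delimiter), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) throws IOException {
        List<String[]> rows = new ArrayList<>();
        rows.add(new String[] {"119116", "3"}); // made-up sample row, not real HDFS data
        Path out = Files.createTempFile("hdfs-files", ".csv");
        write(out, new String[] {"FileId", "ChildrenNum"}, rows, ",");
        System.out.println("Wrote " + out);
    }
}
```

The column names match the query used earlier. In the graph itself, the equivalent layout comes from the metadata extracted from the DBInputTable.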