
CData

Hello! I'm Kuwajima, a technical director.

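The Denodo Platform is a data virtualization product that centralizes access to data from enterprise databases. Combined with the CData JDBC Driver for Apache Kafka, it lets Denodo users integrate live Kafka data with their other enterprise data sources. This article walks through creating a virtual data source for Kafka in the Denodo Virtual DataPort Administration Tool.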

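With optimized data processing built in, the CData JDBC Driver delivers high performance when working with live Kafka data. When you issue a SQL query against Kafka, the driver pushes supported SQL operations, such as filters and aggregations, directly to Kafka, and uses its embedded SQL engine to process unsupported operations (mainly SQL functions and JOIN operations) on the client side. Built-in dynamic metadata querying lets you work with and analyze Kafka data using native data types.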

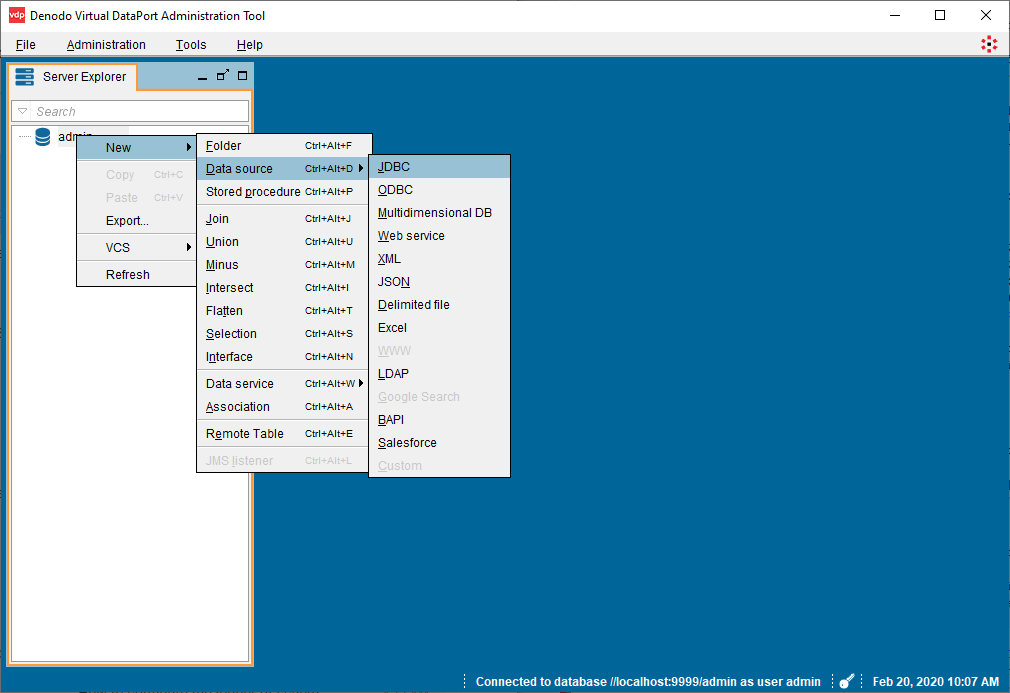

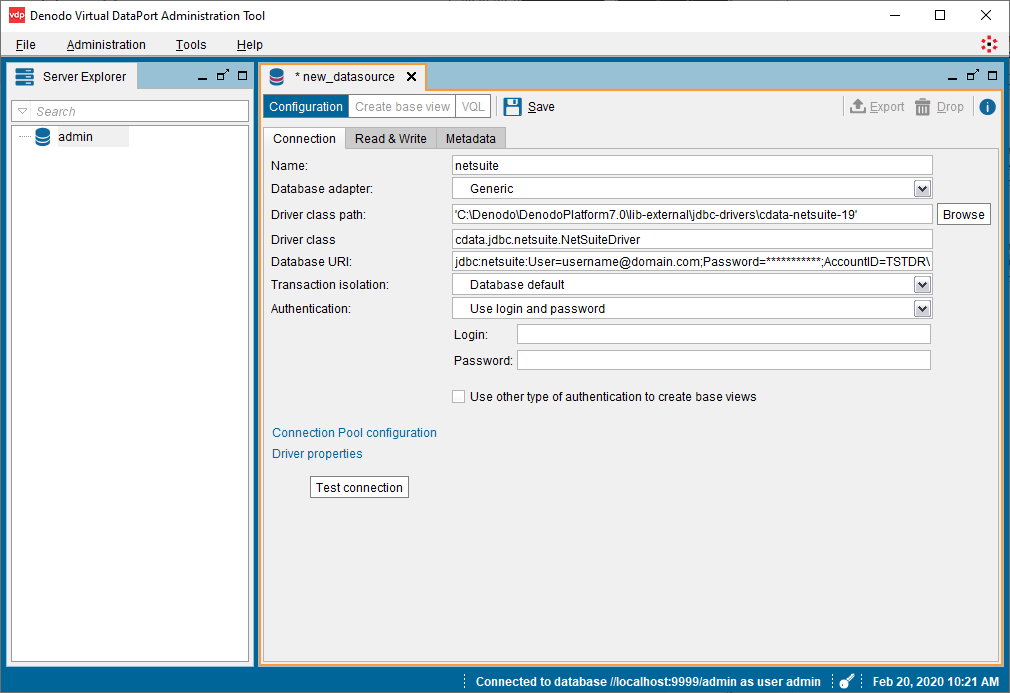

To connect to live Kafka data from Denodo, copy the JDBC driver JAR file into Denodo's external library directory, then create a new JDBC data source in the Virtual DataPort Administration Tool.

Database URI: Set this to a JDBC URL containing the required connection properties. For example:

jdbc:apachekafka:User=admin;Password=pass;BootStrapServers=https://localhost:9091;Topic=MyTopic;

For help building the Database URI, see below.

To help build the JDBC URL, you can use the connection string designer built into the Kafka JDBC Driver. Double-click the JAR file, or run it from the command line:

java -jar cdata.jdbc.apachekafka.jar

Enter the connection properties and copy the connection string to the clipboard.

Set the BootstrapServers and Topic properties to specify the address of your Apache Kafka server and the topic you want to work with.

In some cases you may need to trust the server certificate. If so, also specify TrustStorePath and TrustStorePassword.
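The URL is the prefix `jdbc:apachekafka:` followed by semicolon-terminated `Property=Value` pairs, with BootStrapServers and Topic required and the trust store settings optional. The following is a minimal sketch of assembling such a URL in Java. The class `KafkaJdbcUrl` and its `build` method are hypothetical helpers, not part of the CData driver; the property names are taken from the example above, and values are not escaped, so values containing `;` or `=` are not handled.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not part of the CData driver): assembles a Kafka JDBC URL
// in the "jdbc:apachekafka:Key=Value;Key=Value;" form shown in this article.
public class KafkaJdbcUrl {

    static final String PREFIX = "jdbc:apachekafka:";

    // BootStrapServers and Topic are required; TrustStorePath/TrustStorePassword
    // are appended only when a trust store path is supplied. No escaping is done.
    public static String build(String user, String password,
                               String bootstrapServers, String topic,
                               String trustStorePath, String trustStorePassword) {
        if (bootstrapServers == null || bootstrapServers.isEmpty()) {
            throw new IllegalArgumentException("BootStrapServers is required");
        }
        if (topic == null || topic.isEmpty()) {
            throw new IllegalArgumentException("Topic is required");
        }
        // LinkedHashMap preserves insertion order, so the URL is deterministic.
        Map<String, String> props = new LinkedHashMap<>();
        if (user != null) props.put("User", user);
        if (password != null) props.put("Password", password);
        props.put("BootStrapServers", bootstrapServers);
        props.put("Topic", topic);
        if (trustStorePath != null) {
            props.put("TrustStorePath", trustStorePath);
            if (trustStorePassword != null) props.put("TrustStorePassword", trustStorePassword);
        }
        StringBuilder url = new StringBuilder(PREFIX);
        for (Map.Entry<String, String> e : props.entrySet()) {
            url.append(e.getKey()).append('=').append(e.getValue()).append(';');
        }
        return url.toString();
    }

    public static void main(String[] args) {
        // Reproduces the example Database URI from this article.
        System.out.println(build("admin", "pass", "https://localhost:9091", "MyTopic", null, null));
    }
}
```

Keeping the properties in an ordered map makes the generated URL match what you would type by hand, which makes it easy to compare against the string copied from the connection string designer.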

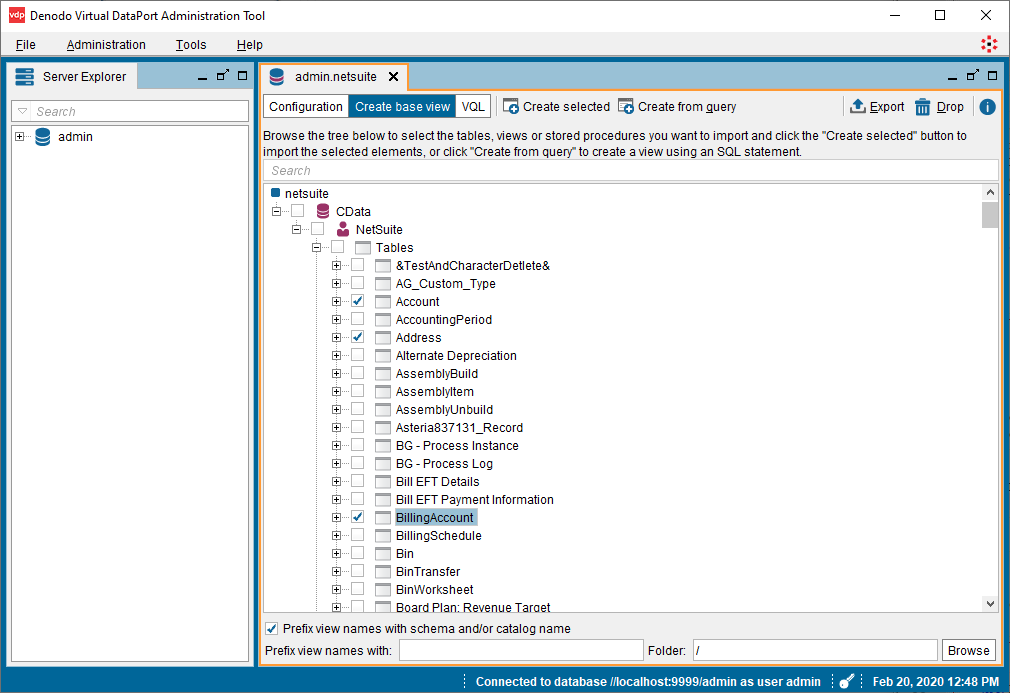

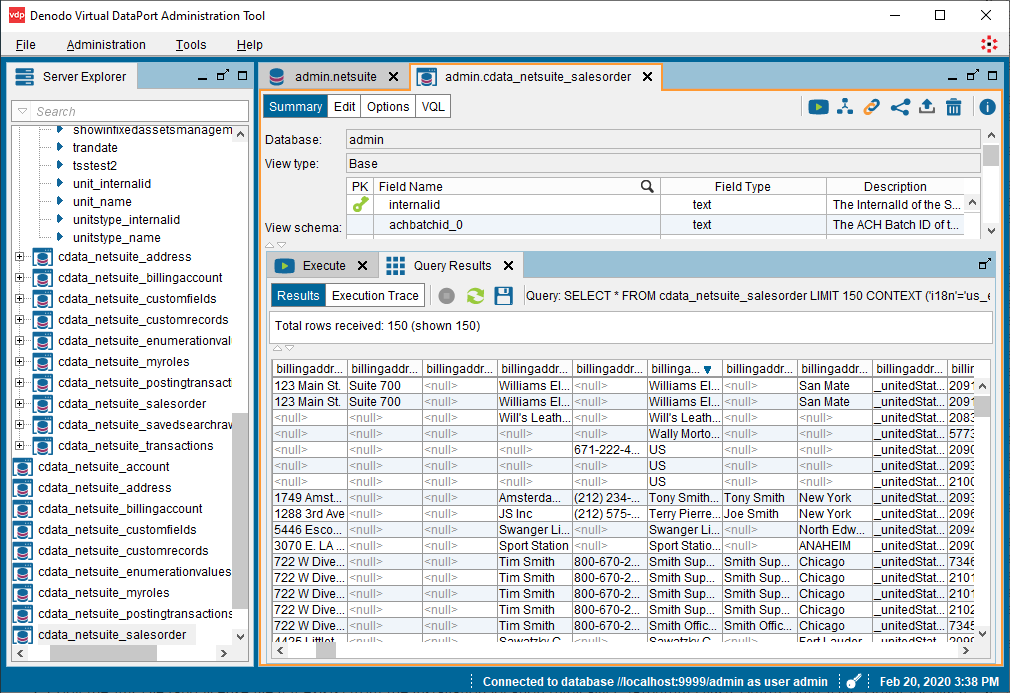

Once the data source is created, you can create base views of Kafka data for use in the Denodo Platform.
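Base views are created from the tables the data source exposes. If you want to check outside Denodo which tables the driver exposes for a given URL, standard JDBC metadata can list them. This is a minimal sketch, assuming the CData Kafka JDBC JAR is on the classpath; it does not claim to reproduce how Denodo itself discovers tables, and the class name `ListKafkaTables` is hypothetical.

```java
import java.sql.Connection;
import java.sql.DatabaseMetaData;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.List;

// Sketch: lists the tables a JDBC driver reports through DatabaseMetaData.
// Assumes the CData Kafka JDBC JAR is on the classpath; without it,
// DriverManager.getConnection throws SQLException ("No suitable driver").
public class ListKafkaTables {

    public static List<String> tableNames(String url) throws SQLException {
        List<String> names = new ArrayList<>();
        try (Connection conn = DriverManager.getConnection(url)) {
            DatabaseMetaData meta = conn.getMetaData();
            // null catalog and schema, "%" name pattern, null types: match every table.
            try (ResultSet rs = meta.getTables(null, null, "%", null)) {
                while (rs.next()) {
                    names.add(rs.getString("TABLE_NAME"));
                }
            }
        }
        return names;
    }

    public static void main(String[] args) throws SQLException {
        String url = "jdbc:apachekafka:User=admin;Password=pass;BootStrapServers=https://localhost:9091;Topic=MyTopic;";
        for (String name : tableNames(url)) {
            System.out.println(name);
        }
    }
}
```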

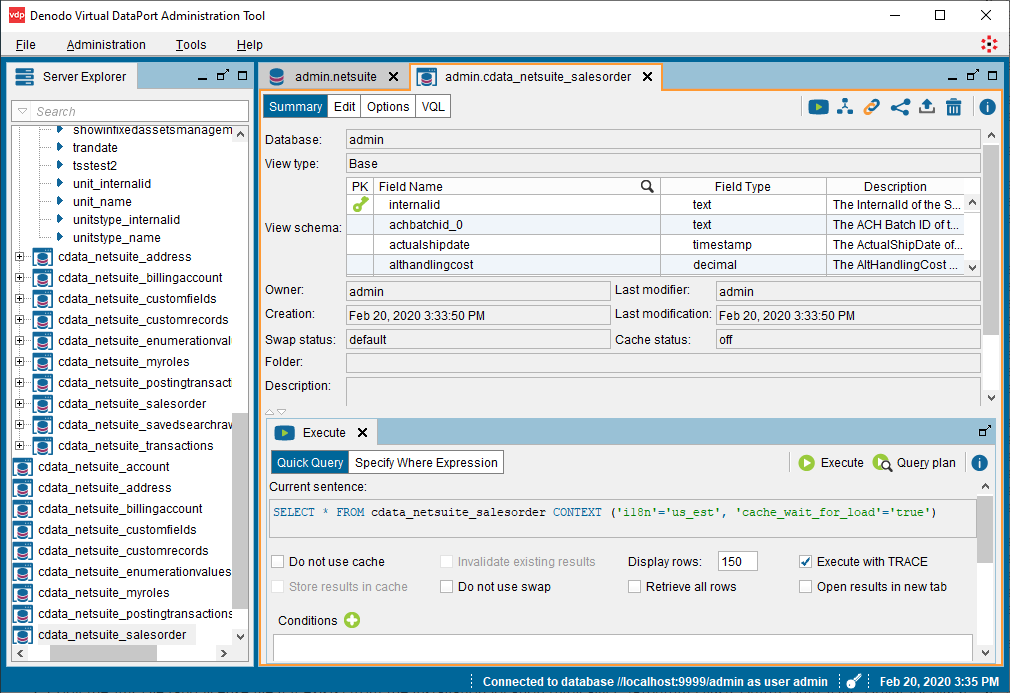

SELECT * FROM cdata_apachekafka_sampletable_1 CONTEXT ('i18n'='us_est', 'cache_wait_for_load'='true')

Once the base view is created, you can work with live Kafka data just like any other data source in the Denodo Platform. For example, you can query Kafka from the Denodo Data Catalog.
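Client applications can also query the base view over Denodo's JDBC interface. The sketch below runs the same `SELECT` against the view. It assumes (none of this comes from the article) that the Denodo JDBC driver JAR is on the classpath, that Virtual DataPort listens on `localhost:9999`, and that the view lives in a database named `admin` accessible with `admin`/`admin`; adjust these hypothetical values for your installation.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.sql.Statement;

// Sketch: queries a Denodo view over JDBC and prints each row as label=value pairs.
// Assumed (hypothetical) setup: Denodo JDBC driver on the classpath, Virtual
// DataPort on localhost:9999, database "admin", credentials admin/admin.
public class QueryDenodoView {

    public static int printRows(String url, String user, String password, String query)
            throws SQLException {
        int count = 0;
        try (Connection conn = DriverManager.getConnection(url, user, password);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery(query)) {
            ResultSetMetaData meta = rs.getMetaData();
            int columns = meta.getColumnCount();
            while (rs.next()) {
                StringBuilder row = new StringBuilder();
                for (int i = 1; i <= columns; i++) {
                    if (i > 1) row.append(" | ");
                    row.append(meta.getColumnLabel(i)).append('=').append(rs.getString(i));
                }
                System.out.println(row);
                count++;
            }
        }
        return count;
    }

    public static void main(String[] args) throws SQLException {
        // Hypothetical connection details; replace with your own.
        String url = "jdbc:vdb://localhost:9999/admin";
        int n = printRows(url, "admin", "admin", "SELECT * FROM cdata_apachekafka_sampletable_1");
        System.out.println(n + " row(s)");
    }
}
```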

Download a free 30-day trial of the CData JDBC Driver for Apache Kafka and start working with live Kafka data in the Denodo Platform! If you have any questions, please contact our support team.