Analyze Azure Data Lake Storage Data with Power Pivot

This article walks you through the process of using the CData ODBC Driver for Azure Data Lake Storage from Power Pivot. You will use the Table Import Wizard to load Azure Data Lake Storage data. You can visually build the import query or use any SQL supported by the driver.

The ODBC protocol is used by a wide variety of Business Intelligence (BI) and reporting tools to get access to different databases. The CData ODBC Driver for Azure Data Lake Storage brings the same power and ease of use to Azure Data Lake Storage data. This article uses the driver to import Azure Data Lake Storage data into Power Pivot.

Connect to Azure Data Lake Storage as an ODBC Data Source

If you have not already, first specify connection properties in an ODBC DSN (data source name). This is the last step of the driver installation. You can use the Microsoft ODBC Data Source Administrator to create and configure ODBC DSNs.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

For this, an Active Directory web application is required. Create one in Azure AD before you configure the DSN.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the TenantId property in the driver's help documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
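
The Gen 1 properties above can also be written as an ODBC connection string, which is useful for trying the same settings outside the DSN dialog. The sketch below assembles one in Python. The driver name in braces and every value are placeholders (assumptions); check the driver name shown in your ODBC Data Source Administrator. Values that contain semicolons would need extra quoting that this sketch does not handle.

```python
# Sketch: assemble an ODBC connection string from the Gen 1 properties.
# The driver name and every value below are placeholders (assumptions).

def build_connection_string(driver, properties):
    """Join a driver name and key/value properties into 'Key=Value;' form."""
    parts = [f"Driver={{{driver}}}"]
    for key, value in properties.items():
        if value is None:
            continue  # skip optional properties that were left unset
        parts.append(f"{key}={value}")
    return ";".join(parts) + ";"


gen1_properties = {
    "Schema": "ADLSGen1",
    "Account": "myAccount",            # hypothetical account name
    "OAuthClientId": "myClientId",     # application Id of the AD app
    "OAuthClientSecret": "mySecret",   # key generated for the AD app
    "TenantId": "myTenantId",
    "Directory": None,                 # unset: the root directory is used
}

connection_string = build_connection_string(
    "CData ODBC Driver for Azure Data Lake Storage",  # assumed driver name
    gen1_properties,
)
print(connection_string)
```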

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the AccessKey property in the driver's help documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
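
Because the two account generations require different properties, a quick check before creating the DSN can catch a missing value. The following sketch encodes the required properties listed in this article. The function name and sample values are hypothetical, and Directory is treated as optional because the root directory is used when it is not specified.

```python
# Sketch: report which required connection properties are missing,
# based on the Gen 1 and Gen 2 property lists in this article.

REQUIRED_PROPERTIES = {
    "ADLSGen1": ["Account", "OAuthClientId", "OAuthClientSecret", "TenantId"],
    "ADLSGen2": ["Account", "FileSystem", "AccessKey"],
}


def missing_properties(properties):
    """Return the required property names that are absent or empty."""
    schema = properties.get("Schema")
    if schema not in REQUIRED_PROPERTIES:
        raise ValueError(f"Schema must be one of {sorted(REQUIRED_PROPERTIES)}")
    return [
        name
        for name in REQUIRED_PROPERTIES[schema]
        if not properties.get(name)
    ]


# Hypothetical Gen 2 settings with the access key left out.
gen2_settings = {
    "Schema": "ADLSGen2",
    "Account": "myAccount",
    "FileSystem": "myFileSystem",
}
print(missing_properties(gen2_settings))  # ['AccessKey']
```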

Connect from Power Pivot

Follow the steps below to connect to the DSN in Power Pivot.

- In Excel, click the Power Pivot Window icon on the Power Pivot tab to open Power Pivot.

- Launch the Table Import Wizard: Click the Get External Data from Other Data Sources button.

- Select the OLEDB/ODBC source option.

- Click Build to open the Data Link Properties dialog.

- In the Provider tab, select the Microsoft OLEDB Provider for ODBC Drivers option.

- In the Connection tab, select the Use Data Source Name option and then select the Azure Data Lake Storage DSN in the menu.

Select and Filter Tables and Views

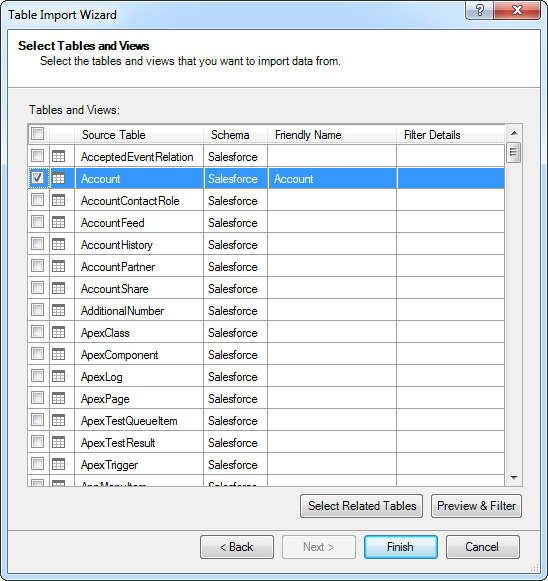

Follow the steps below to use the wizard to import Azure Data Lake Storage tables. As you use the wizard to select, filter, and sort columns of Azure Data Lake Storage tables, Power Pivot generates the query to be executed.

- After selecting the DSN in the Table Import Wizard, choose the option to select from a list of tables.

- Click Preview & Filter to select specific columns, sort data, and visually build filters. To include or exclude columns, select or clear the option next to the column name.

- To filter based on column values, click the down arrow button next to the column name. In the resulting dialog, select or clear the column values you want to filter. Alternatively, click Number Filters or Text Filters and then select a comparison operator. In the resulting dialog, build the filter criteria.

- Return to the Select Tables and Views page of the wizard. You can access filters by clicking the Applied Filters link in the Filter Details column.
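
To make the idea of a generated query concrete, the sketch below turns a column selection, a filter, and a sort order into a SELECT statement. It illustrates the concept only and is not the SQL that Power Pivot actually emits. The Resources table and its FullPath, Permission, and Type columns are assumed names used for illustration, and bracket-quoted identifiers are likewise an assumption about the SQL dialect.

```python
# Sketch: turn wizard-style selections into a SELECT statement.
# Table and column names are hypothetical; every filter is a simple equality
# test against a string literal.

def build_select(table, columns, filters=None, order_by=None):
    """Build a SELECT statement from selected columns, filters, and a sort."""

    def quote(identifier):
        # Bracket-quote identifiers, escaping any closing bracket.
        return "[" + identifier.replace("]", "]]") + "]"

    column_list = ", ".join(quote(c) for c in columns) if columns else "*"
    sql = f"SELECT {column_list} FROM {quote(table)}"
    if filters:
        conditions = []
        for column, value in filters.items():
            literal = "'" + str(value).replace("'", "''") + "'"
            conditions.append(f"{quote(column)} = {literal}")
        sql += " WHERE " + " AND ".join(conditions)
    if order_by:
        sql += f" ORDER BY {quote(order_by)}"
    return sql


query = build_select(
    "Resources",
    ["FullPath", "Permission"],
    filters={"Type": "FILE"},
    order_by="FullPath",
)
print(query)
```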

Import and Filter with SQL

You can also import with an SQL query. The driver supports standard SQL, allowing Excel to communicate with Azure Data Lake Storage APIs.

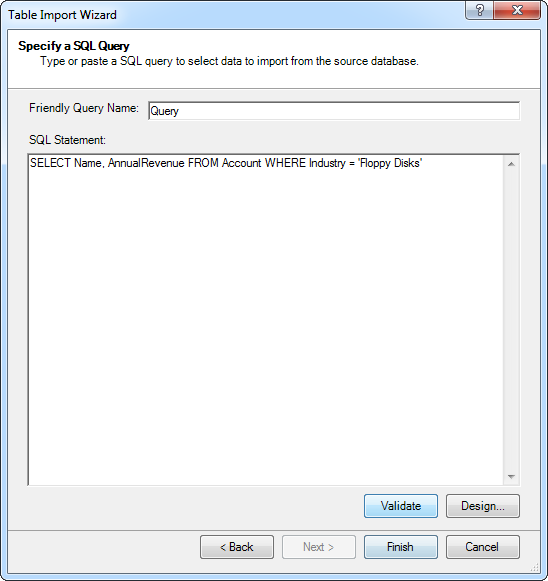

- After selecting the DSN in the Table Import Wizard, select the option to write a query.

- In the SQL Statement box, enter the query. Click Validate to check that the syntax of the query is valid. Click Design to preview the results and adjust the query before import.

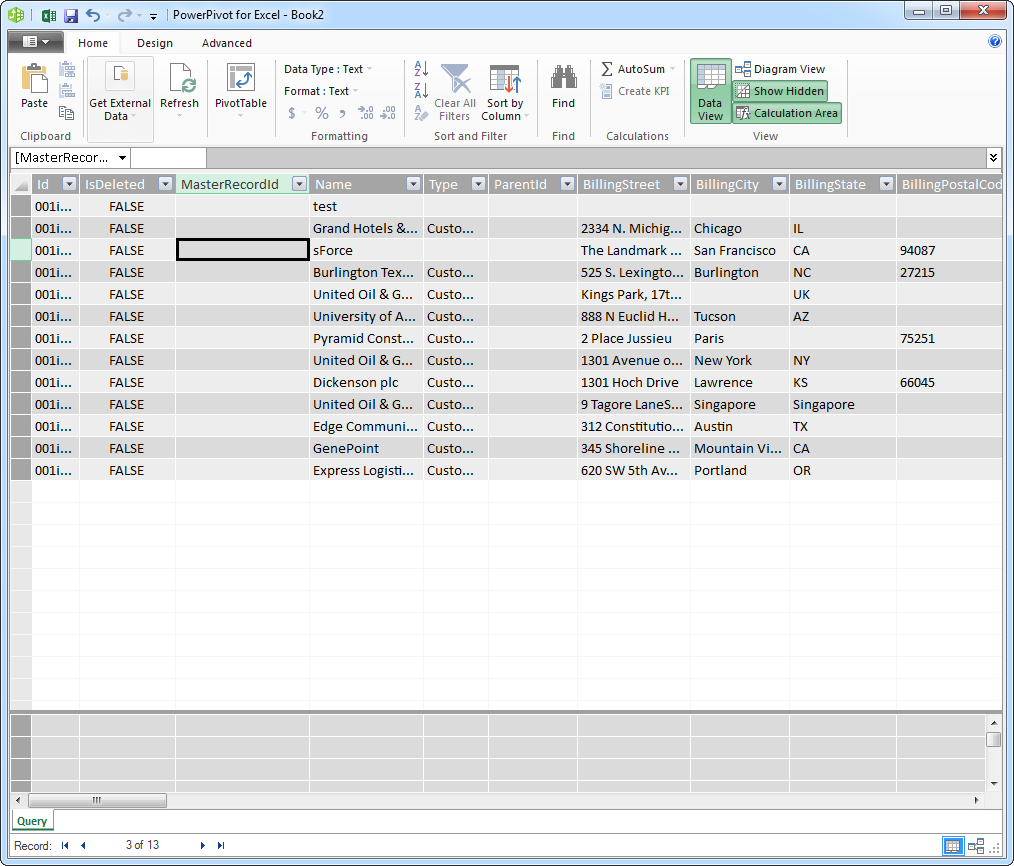

- Finish the wizard to import the data for your chosen query.
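
Before pasting a query into the SQL Statement box, it can help to try it against the same DSN from a script. The sketch below uses the third-party pyodbc package, which must be installed separately. The sample query's table and column names are assumptions for illustration, and the `connect` parameter is a hypothetical hook that lets you substitute a different connection function.

```python
# Sketch: preview a query against an ODBC DSN outside of Excel.
# The table and columns in SAMPLE_QUERY are hypothetical.

SAMPLE_QUERY = "SELECT FullPath, Permission FROM Resources WHERE Type = 'FILE'"


def preview_query(dsn, sql, max_rows=10, connect=None):
    """Run sql against the named DSN; return column names and up to max_rows rows."""
    if connect is None:
        import pyodbc  # imported lazily so this module loads without pyodbc
        connect = pyodbc.connect

    connection = connect(f"DSN={dsn}")
    try:
        cursor = connection.cursor()
        cursor.execute(sql)
        # cursor.description holds one tuple per result column; item 0 is the name.
        columns = [column[0] for column in cursor.description]
        rows = [tuple(row) for row in cursor.fetchmany(max_rows)]
        return columns, rows
    finally:
        connection.close()
```

A call such as `preview_query("CData AzureDataLake Source", SAMPLE_QUERY)` would return the column names and the first few rows, where the DSN name is an assumed placeholder for whatever you named the DSN.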

Refresh On Demand

Connectivity to Azure Data Lake Storage APIs enables real-time analysis. To immediately update your workbook with any changes, click Refresh.