How to Visualize Databricks Data in Python with pandas

Use pandas and other modules to analyze and visualize live Databricks data in Python.

The rich ecosystem of Python modules lets you get to work quickly and integrate your systems more effectively. With the CData Python Connector for Databricks, the pandas & Matplotlib modules, and the SQLAlchemy toolkit, you can build Databricks-connected Python applications and scripts for visualizing Databricks data. This article shows how to use the pandas, SQLAlchemy, and Matplotlib built-in functions to connect to Databricks data, execute queries, and visualize the results.

With built-in optimized data processing, the CData Python Connector offers high performance when interacting with live Databricks data in Python. When you issue complex SQL queries to Databricks, the driver pushes supported SQL operations, such as filters and aggregations, directly to Databricks. It then uses its embedded SQL engine to process unsupported operations (often SQL functions and JOIN operations) client-side.
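To see why pushdown matters, compare the two strategies on a small table. This sketch uses an in-memory SQLite database from Python's standard library as a stand-in for a remote source, with made-up sample rows. It illustrates the general idea only and does not model how the CData driver decides what to push down. Both approaches produce the same per-city counts. However, when the database handles the WHERE and GROUP BY itself, only the aggregated rows come back to Python.

```python
import sqlite3
from collections import Counter

# In-memory SQLite database standing in for a remote data source (illustration only)
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Customers (City TEXT, CompanyName TEXT, Country TEXT)")
conn.executemany(
    "INSERT INTO Customers VALUES (?, ?, ?)",
    [  # made-up sample rows
        ("Seattle", "Acme", "US"),
        ("Seattle", "Globex", "US"),
        ("Boston", "Initech", "US"),
        ("Berlin", "Umbrella", "DE"),
    ],
)

# Client-side: fetch every row, then filter and aggregate in Python
all_rows = conn.execute("SELECT City, CompanyName, Country FROM Customers").fetchall()
client_counts = Counter(city for city, _, country in all_rows if country == "US")

# Pushed down: the database filters and aggregates, returning one row per city
pushed_rows = conn.execute(
    "SELECT City, COUNT(*) FROM Customers WHERE Country = ? GROUP BY City",
    ("US",),
).fetchall()
pushed_counts = dict(pushed_rows)

print(len(all_rows), "rows fetched client-side;", len(pushed_rows), "rows fetched with pushdown")
print(client_counts == pushed_counts)
```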

Connecting to Databricks Data

Connecting to Databricks data looks just like connecting to any relational data source. Create a connection string using the required connection properties. For this article, you will pass the connection string as a parameter to the create_engine function.

To connect to a Databricks cluster, set the properties as described below.

Note: You can find these values in your Databricks instance. Navigate to Clusters, select the desired cluster, and open the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).
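The three properties above can be assembled into the URL that create_engine expects. The helper below is hypothetical, as is the DATABRICKS_TOKEN environment variable it reads the token from. It assumes that the connector accepts Server, HTTPPath, and Token as query parameters of a databricks:/// URL, following the URL format used in this article. urlencode percent-encodes characters such as the slashes in an HTTP path. Reading the token from the environment keeps it out of the script itself.

```python
import os
from urllib.parse import urlencode


def build_databricks_url(server: str, http_path: str, token: str) -> str:
    """Hypothetical helper: build a databricks:/// URL from the connection properties."""
    # urlencode percent-encodes each value, e.g. "/" in the HTTP path becomes %2F
    query = urlencode({"Server": server, "HTTPPath": http_path, "Token": token})
    return "databricks:///?" + query


if __name__ == "__main__":
    # DATABRICKS_TOKEN is a hypothetical variable name; set it to your personal access token
    token = os.environ.get("DATABRICKS_TOKEN", "")
    url = build_databricks_url("my-workspace.example.com", "/my/http/path", token)
    # Pass url to sqlalchemy.create_engine(url); avoid printing it, since it contains the token
```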

Follow the procedure below to install the required modules and start accessing Databricks through Python objects.

Install Required Modules

Use the pip utility to install the pandas & Matplotlib modules and the SQLAlchemy toolkit:

pip install pandas
pip install matplotlib
pip install sqlalchemy
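Before continuing, you can confirm that pip installed the three modules. This small sketch uses importlib.metadata from the standard library (Python 3.8+) to look up each distribution by name. The helper name missing_packages is hypothetical, and the helper checks only the three modules installed above, not the CData connector.

```python
from importlib.metadata import PackageNotFoundError, version


def missing_packages(names):
    """Hypothetical helper: return the distributions in `names` that are not installed."""
    missing = []
    for name in names:
        try:
            version(name)  # raises PackageNotFoundError when the distribution is absent
        except PackageNotFoundError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    absent = missing_packages(["pandas", "matplotlib", "sqlalchemy"])
    print("Missing:", ", ".join(absent) if absent else "none")
```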

Be sure to import the modules with the following:

import pandas
import matplotlib.pyplot as plt
from sqlalchemy import create_engine

Visualize Databricks Data in Python

You can now connect with a connection string. Use the create_engine function to create an Engine for working with Databricks data. Replace the placeholder values in the example below with the values for your own cluster.

engine = create_engine("databricks:///?Server=127.0.0.1&Port=443&TransportMode=HTTP&HTTPPath=MyHTTPPath&UseSSL=True&User=MyUser&Password=MyPassword")
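create_engine does not open a connection right away. SQLAlchemy connects lazily, so a wrong host, HTTP path, or credential typically surfaces only when the first query runs. A quick check can catch that early. The hypothetical can_connect helper below opens a connection and runs SELECT 1. It assumes that the Databricks connector accepts a bare SELECT 1 and that its errors are wrapped as SQLAlchemy exceptions. The example runs against an in-memory SQLite engine, so it works without a cluster.

```python
from sqlalchemy import create_engine, text
from sqlalchemy.exc import SQLAlchemyError


def can_connect(engine) -> bool:
    """Hypothetical helper: return True if the engine can open a connection and run SELECT 1."""
    try:
        with engine.connect() as conn:
            return conn.execute(text("SELECT 1")).scalar() == 1
    except SQLAlchemyError:
        # Connection failures are raised at connect time, not by create_engine
        return False


if __name__ == "__main__":
    # In-memory SQLite stands in for the Databricks engine in this example
    print(can_connect(create_engine("sqlite://")))  # True
```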

Execute SQL Against Databricks

Use the read_sql function from pandas to execute any SQL statement and store the result set in a DataFrame.

df = pandas.read_sql("SELECT City, CompanyName FROM Customers WHERE Country = 'US'", engine)
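The query above embeds the filter value 'US' directly in the SQL string. When the value comes from user input or a variable, passing it through the params argument of pandas.read_sql is safer than string formatting. This sketch uses an in-memory SQLite database with made-up rows in place of Databricks. The ? placeholder is therefore sqlite3's parameter style. The placeholder syntax the Databricks connector expects may differ, so treat it as an assumption when adapting this.

```python
import sqlite3

import pandas

# In-memory SQLite database with made-up rows, standing in for Databricks
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Customers (City TEXT, CompanyName TEXT, Country TEXT)")
conn.executemany(
    "INSERT INTO Customers VALUES (?, ?, ?)",
    [("Seattle", "Acme", "US"), ("Boston", "Initech", "US"), ("Berlin", "Umbrella", "DE")],
)

# Pass the filter value as a query parameter instead of formatting it into the SQL text
df = pandas.read_sql(
    "SELECT City, CompanyName FROM Customers WHERE Country = ?",
    conn,
    params=("US",),
)
print(df)
```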

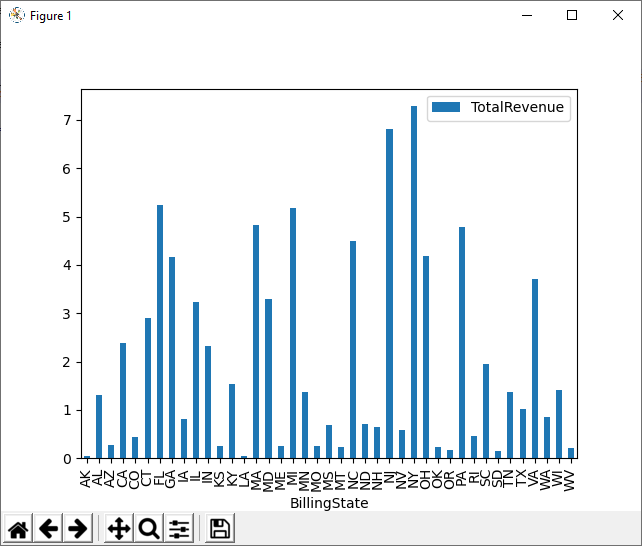

Visualize Databricks Data

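With the query results stored in a DataFrame, use the plot method to build a chart of the Databricks data. Because CompanyName is a text column, pandas cannot use it directly as bar heights, so first count the companies in each city. The show function then displays the chart in a new window.

df.groupby("City")["CompanyName"].count().plot(kind="bar")
plt.show()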
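plt.show needs an interactive display. On a server or in a scheduled job, you can instead render the chart with Matplotlib's non-interactive Agg backend and save it to a file. In this sketch, a small made-up DataFrame stands in for the read_sql result, and the output file name is hypothetical. Because CompanyName holds text, which pandas will not plot as bar heights, the sketch charts the number of companies per city.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend: renders to files, no window required

import matplotlib.pyplot as plt
import pandas

# Made-up query result standing in for the DataFrame returned by read_sql
df = pandas.DataFrame(
    {"City": ["Seattle", "Seattle", "Boston"], "CompanyName": ["Acme", "Globex", "Initech"]}
)

# Plot a numeric summary: the number of companies in each city
counts = df.groupby("City")["CompanyName"].count()
ax = counts.plot(kind="bar", title="Companies per city")
ax.set_ylabel("Companies")

# Save the chart to an image file instead of opening a window, then free the figure
ax.figure.savefig("companies_per_city.png")
plt.close(ax.figure)
```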

Free Trial & More Information

Download a free, 30-day trial of the CData Python Connector for Databricks to start building Python apps and scripts with connectivity to Databricks data. Reach out to our Support Team if you have any questions.

Full Source Code

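import pandas
import matplotlib.pyplot as plt
from sqlalchemy import create_engine

# Create an Engine for Databricks (replace the placeholder connection values)
engine = create_engine("databricks:///?Server=127.0.0.1&Port=443&TransportMode=HTTP&HTTPPath=MyHTTPPath&UseSSL=True&User=MyUser&Password=MyPassword")

# Run the query and store the result set in a DataFrame
df = pandas.read_sql("SELECT City, CompanyName FROM Customers WHERE Country = 'US'", engine)

# CompanyName is text, so chart the number of companies in each city
df.groupby("City")["CompanyName"].count().plot(kind="bar")

# Display the chart in a new window
plt.show()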