Create Informatica Mappings From/To a JDBC Data Source for Kafka

Create Kafka data objects in Informatica using the standard JDBC connection process: Copy the JAR and then connect.

Informatica provides a powerful means of transporting and transforming your data. The CData JDBC Driver for Kafka is built on the JDBC standard, so it plugs into Informatica's data transportation and manipulation features through the same connection process as any other JDBC source. This tutorial shows how to transfer and browse Kafka data in Informatica PowerCenter.

Deploy the Driver

To deploy the driver to the Informatica PowerCenter server, copy the CData JAR and .lic file, located in the lib subfolder of the driver's installation directory, to the following folder: Informatica-installation-directory\services\shared\jars\thirdparty.

To work with Kafka data in the Developer tool, copy the same CData JAR and .lic file into the following folders:

- Informatica-installation-directory\client\externaljdbcjars

- Informatica-installation-directory\externaljdbcjars
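If you deploy to several folders, or redeploy after upgrading the driver, the copy step can be scripted. The following is a minimal Java sketch, not a CData or Informatica tool: the `DeployDriver` class and its two command-line arguments are hypothetical, and it assumes the driver's lib folder holds only the driver's own files, since it copies every file matching `*.{jar,lic}`.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;
import java.util.List;

class DeployDriver {
    // Copies every .jar and .lic file found in libDir into each target folder,
    // creating the folder if needed and overwriting older copies.
    // Returns the number of file copies made.
    static int deploy(Path libDir, List<Path> targets) throws IOException {
        int copied = 0;
        try (DirectoryStream<Path> files = Files.newDirectoryStream(libDir, "*.{jar,lic}")) {
            for (Path file : files) {
                for (Path target : targets) {
                    Files.createDirectories(target);
                    Files.copy(file, target.resolve(file.getFileName()),
                               StandardCopyOption.REPLACE_EXISTING);
                    copied++;
                }
            }
        }
        return copied;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("usage: DeployDriver <driver lib folder> <Informatica installation directory>");
            return;
        }
        Path lib = Paths.get(args[0]);
        Path infa = Paths.get(args[1]);
        // Folders named in this article; drop the ones that do not apply on this machine.
        List<Path> targets = List.of(
                infa.resolve(Paths.get("services", "shared", "jars", "thirdparty")), // PowerCenter server
                infa.resolve(Paths.get("client", "externaljdbcjars")),               // Developer tool
                infa.resolve("externaljdbcjars"));                                   // Developer tool
        System.out.println(deploy(lib, targets) + " file copies made");
    }
}
```

Run it on each machine with only the folders that apply there: the thirdparty folder on the PowerCenter server, and the two externaljdbcjars folders where the Developer tool is installed.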

Create the JDBC Connection

Follow the steps below to connect from Informatica Developer:

- In the Connection Explorer pane, right-click your domain and click Create a Connection.

- In the New Database Connection wizard, enter a name and ID for the connection, and select JDBC in the Type menu.

- In the JDBC Driver Class Name property, enter:

```
cdata.jdbc.apachekafka.ApacheKafkaDriver
```

- In the Connection String property, enter the JDBC URL, using the connection properties for Kafka.

Set the BootstrapServers and Topic properties to the address of your Apache Kafka server and the topic you want to interact with.
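The driver class name and connection string are plain strings, so they are easy to assemble in code, for example when generating connections for several topics. A minimal sketch: `KafkaJdbcConfig` and `buildUrl` are hypothetical names, the values mirror the example connection string shown later in this article, and the builder assumes no value contains a `;` because it does not quote or escape values.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class KafkaJdbcConfig {
    // Value for the JDBC Driver Class Name property.
    static final String DRIVER_CLASS = "cdata.jdbc.apachekafka.ApacheKafkaDriver";
    // Kafka connection strings start with this prefix.
    static final String URL_PREFIX = "jdbc:apachekafka:";

    // Joins properties as Name=Value; pairs in insertion order.
    // Values are not quoted or escaped, so they must not contain ';'.
    static String buildUrl(Map<String, String> props) {
        StringBuilder url = new StringBuilder(URL_PREFIX);
        for (Map.Entry<String, String> e : props.entrySet()) {
            url.append(e.getKey()).append('=').append(e.getValue()).append(';');
        }
        return url.toString();
    }

    public static void main(String[] args) {
        Map<String, String> props = new LinkedHashMap<>(); // keeps the order properties were added
        props.put("BootstrapServers", "https://localhost:9091");
        props.put("Topic", "MyTopic");
        System.out.println(DRIVER_CLASS);
        System.out.println(buildUrl(props));
        // prints jdbc:apachekafka:BootstrapServers=https://localhost:9091;Topic=MyTopic;
    }
}
```

A `LinkedHashMap` is used so the generated string lists properties in the order you add them, which keeps it easy to compare against a string copied from elsewhere.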

Authorization Mechanisms

- SASL Plain: The User and Password properties should be specified. AuthScheme should be set to 'Plain'.

- SASL SSL: The User and Password properties should be specified. AuthScheme should be set to 'Scram'. UseSSL should be set to true.

- SSL: The SSLCert and SSLCertPassword properties should be specified. UseSSL should be set to true.

- Kerberos: The User and Password properties should be specified. AuthScheme should be set to 'Kerberos'.

You may need to trust the server certificate. In that case, specify TrustStorePath and, if the trust store is password-protected, TrustStorePassword.
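The list above amounts to a lookup from mechanism to required properties. The Java sketch below encodes it; the `Mechanism` enum and `authProps` method are hypothetical helpers, the credentials in `main` are placeholders, and only the properties named in the list are set, so add TrustStorePath and TrustStorePassword yourself when you need to trust the server certificate.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class KafkaAuthProps {
    enum Mechanism { SASL_PLAIN, SASL_SSL, SSL, KERBEROS }

    // Returns the connection properties each mechanism requires, per the list above.
    // For SSL, credential/secret are the SSLCert and SSLCertPassword values;
    // for the other mechanisms they are User and Password.
    static Map<String, String> authProps(Mechanism m, String credential, String secret) {
        Map<String, String> p = new LinkedHashMap<>();
        switch (m) {
            case SASL_PLAIN:
                p.put("User", credential);
                p.put("Password", secret);
                p.put("AuthScheme", "Plain");
                break;
            case SASL_SSL:
                p.put("User", credential);
                p.put("Password", secret);
                p.put("AuthScheme", "Scram");
                p.put("UseSSL", "true");
                break;
            case SSL:
                p.put("SSLCert", credential);
                p.put("SSLCertPassword", secret);
                p.put("UseSSL", "true");
                break;
            case KERBEROS:
                p.put("User", credential);
                p.put("Password", secret);
                p.put("AuthScheme", "Kerberos");
                break;
        }
        return p;
    }

    public static void main(String[] args) {
        // Placeholder credentials; substitute your own.
        System.out.println(authProps(Mechanism.SASL_SSL, "admin", "pass"));
        // prints {User=admin, Password=pass, AuthScheme=Scram, UseSSL=true}
    }
}
```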

Built-in Connection String Designer

For help constructing the JDBC URL, use the connection string designer built into the Kafka JDBC Driver. Either double-click the JAR file or run it from the command line:

```
java -jar cdata.jdbc.apachekafka.jar
```

Fill in the connection properties and copy the connection string to the clipboard.

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

A typical connection string is below:

```
jdbc:apachekafka:User=admin;Password=pass;BootStrapServers=https://localhost:9091;Topic=MyTopic;
```
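Before pasting a generated string into Informatica, it can help to confirm that it carries the BootstrapServers and Topic properties described earlier. This Java sketch splits the URL into name/value pairs. It is a naive parser that assumes no value contains a `;`, and it compares property names case-insensitively; that is an assumption on our part, suggested by the example above spelling the property `BootStrapServers`. `ConnectionStringCheck` is a hypothetical class.

```java
import java.util.Map;
import java.util.TreeMap;

class ConnectionStringCheck {
    static final String URL_PREFIX = "jdbc:apachekafka:";

    // Splits "jdbc:apachekafka:Name=Value;Name=Value;" into a map whose keys
    // compare case-insensitively. Naive: assumes values contain no ';'.
    static Map<String, String> parse(String url) {
        if (!url.startsWith(URL_PREFIX)) {
            throw new IllegalArgumentException("expected a URL starting with " + URL_PREFIX);
        }
        Map<String, String> props = new TreeMap<>(String.CASE_INSENSITIVE_ORDER);
        for (String pair : url.substring(URL_PREFIX.length()).split(";")) {
            if (pair.trim().isEmpty()) continue; // tolerate ";;" and a trailing ';'
            int eq = pair.indexOf('='); // split on the first '=' only
            if (eq < 0) throw new IllegalArgumentException("missing '=' in: " + pair);
            props.put(pair.substring(0, eq).trim(), pair.substring(eq + 1).trim());
        }
        return props;
    }

    // True when both properties this article says to set are present.
    static boolean hasServerAndTopic(Map<String, String> props) {
        return props.containsKey("BootstrapServers") && props.containsKey("Topic");
    }

    public static void main(String[] args) {
        Map<String, String> props = parse(
                "jdbc:apachekafka:User=admin;Password=pass;BootStrapServers=https://localhost:9091;Topic=MyTopic;");
        System.out.println(hasServerAndTopic(props)); // prints true
    }
}
```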

Browse Kafka Tables

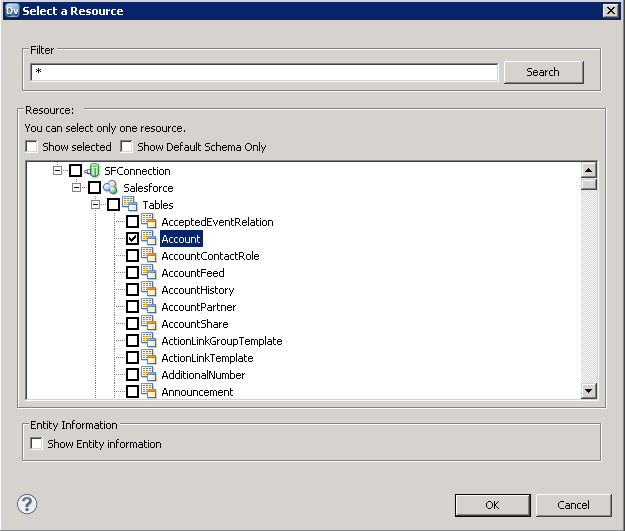

After you have added the driver JAR to the classpath and created a JDBC connection, you can access Kafka entities in Informatica. Follow the steps below to connect to Kafka and browse Kafka tables:

- Connect to your repository.

- In the Connection Explorer, right-click the connection and click Connect.

- Clear the Show Default Schema Only option.

![The driver models Kafka entities as relational tables. (Salesforce is shown.)]()

You can now browse Kafka tables in the Data Viewer: right-click the node for the table and then click Open. In the Data Viewer view, click Run.
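To check the same connection outside Informatica, you can list the tables the driver exposes with the standard JDBC `DatabaseMetaData.getTables` call. A minimal sketch: `KafkaTableBrowser` is a hypothetical class, the driver class and URL are the ones used in this article, and `main` assumes the CData JAR is on the Java classpath.

```java
import java.sql.Connection;
import java.sql.DatabaseMetaData;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.List;

class KafkaTableBrowser {
    // Returns the names of all tables the driver reports for this connection.
    static List<String> listTables(Connection conn) throws SQLException {
        List<String> names = new ArrayList<>();
        DatabaseMetaData meta = conn.getMetaData();
        // null catalog/schema = no filter, "%" = any table name, null types = all table types
        try (ResultSet rs = meta.getTables(null, null, "%", null)) {
            while (rs.next()) {
                names.add(rs.getString("TABLE_NAME"));
            }
        }
        return names;
    }

    public static void main(String[] args) throws ClassNotFoundException, SQLException {
        // Loading the driver class registers it with DriverManager (standard JDBC convention).
        Class.forName("cdata.jdbc.apachekafka.ApacheKafkaDriver");
        String url = "jdbc:apachekafka:User=admin;Password=pass;"
                + "BootStrapServers=https://localhost:9091;Topic=MyTopic;";
        try (Connection conn = DriverManager.getConnection(url)) {
            for (String table : listTables(conn)) {
                System.out.println(table);
            }
        }
    }
}
```

`listTables` takes a `Connection` rather than a URL so the same code works whether the connection comes from `DriverManager` or a pooled data source.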

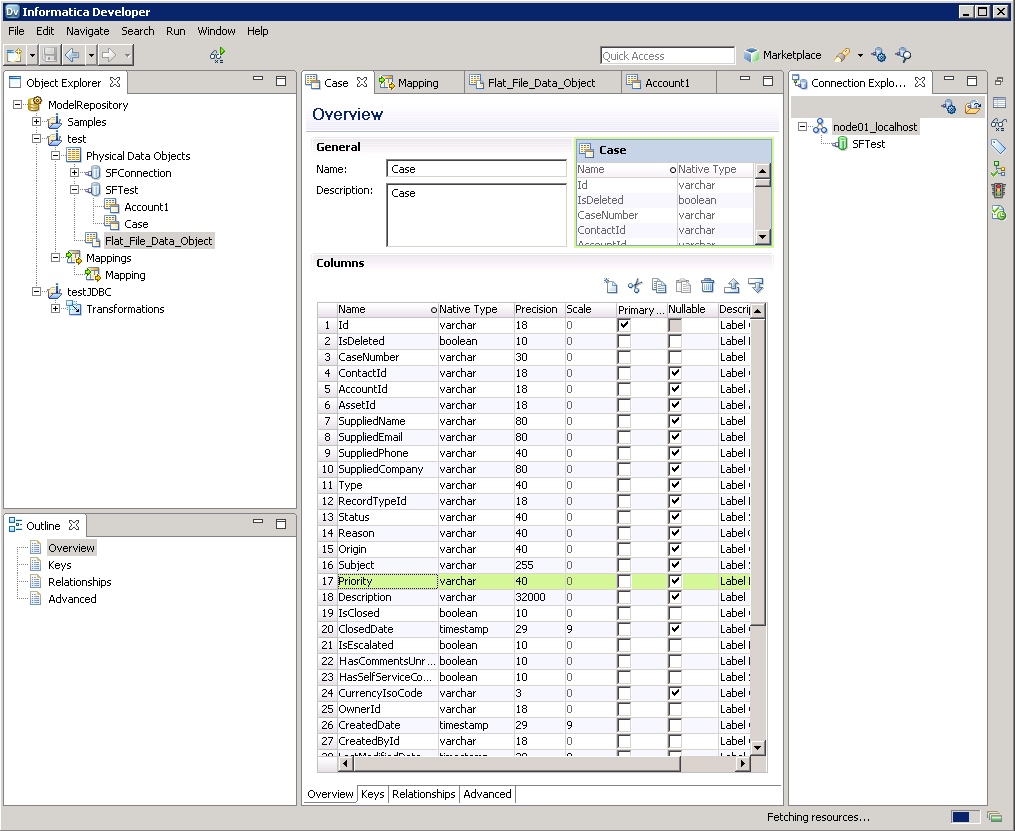

Create Kafka Data Objects

Follow the steps below to add Kafka tables to your project:

- Select the Kafka tables you want, right-click one of them, and click Add to Project.

- In the resulting dialog, select the option to create a data object for each resource.

- In the Select Location dialog, select your project.

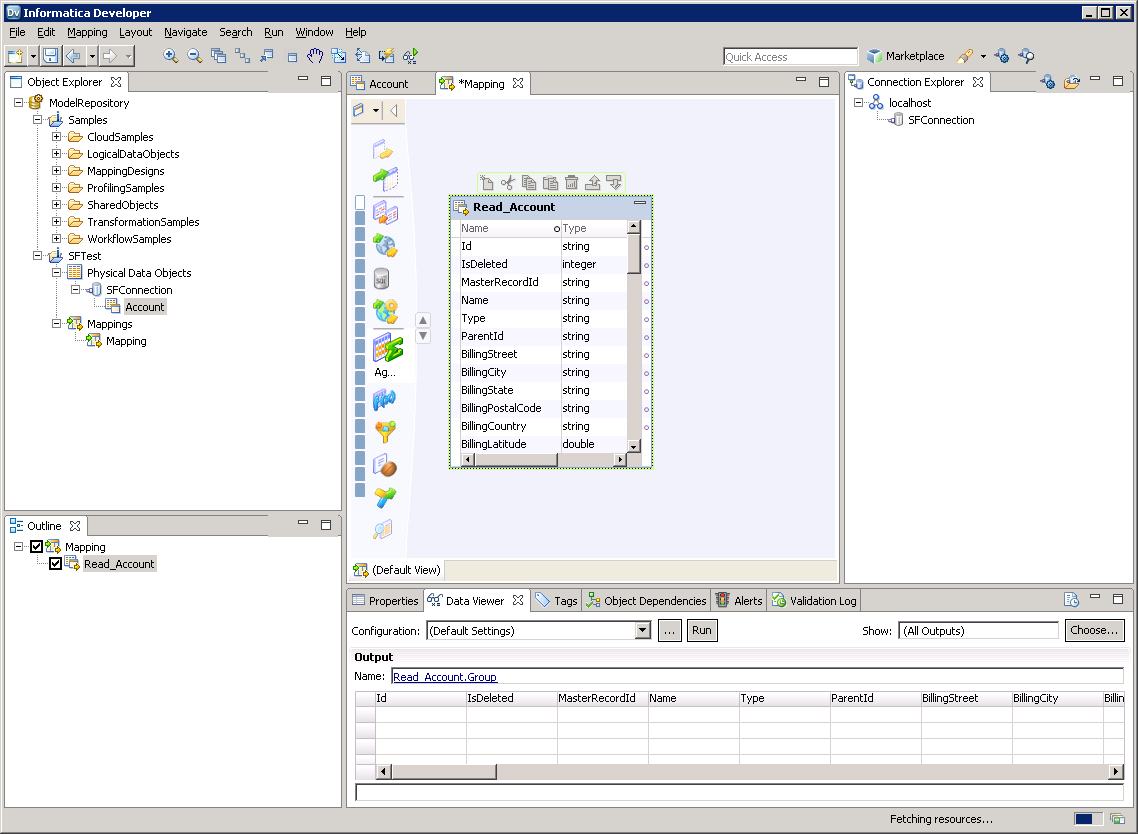

Create a Mapping

Follow the steps below to add the Kafka source to a mapping:

- In the Object Explorer, right-click your project and then click New -> Mapping.

- Expand the node for the Kafka connection and then drag the data object for the table onto the editor.

- In the dialog that appears, select the Read option.

![The source Kafka table in the mapping. (Salesforce is shown.)]()

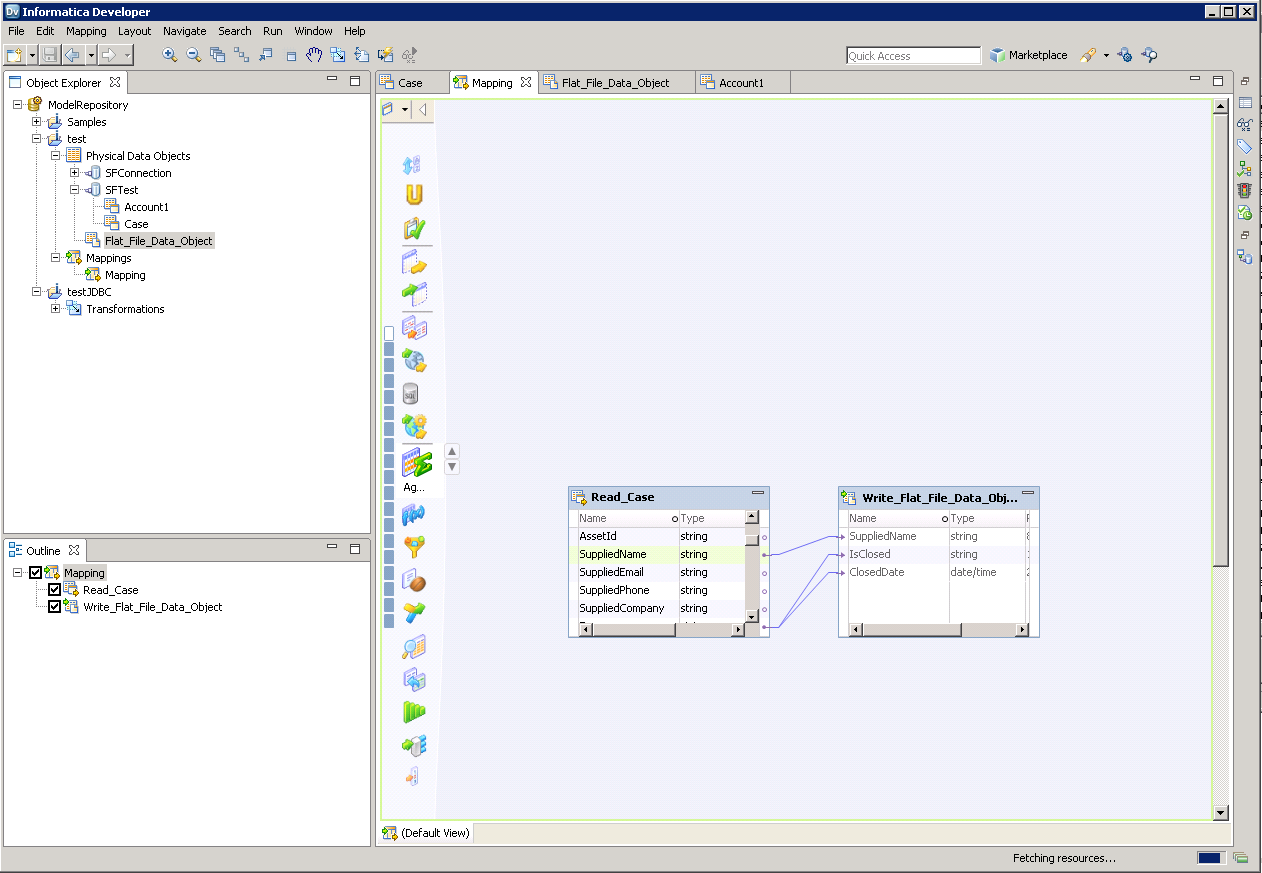

Follow the steps below to map Kafka columns to a flat file:

- In the Object Explorer, right-click your project and then click New -> Data Object.

- Select Flat File Data Object -> Create as Empty -> Fixed Width.

- In the properties for the Kafka data object, select the column rows you want, right-click, and then click Copy. Paste the rows into the flat file data object's properties.

- Drag the flat file data object onto the mapping. In the dialog that appears, select the Write option.

- Click and drag to connect columns.

To transfer Kafka data, right-click in the workspace and then click Run Mapping.
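A fixed-width flat file stores each column in a fixed number of characters. To illustrate what the fixed-width target produces (not how Informatica implements it), this Java sketch left-aligns each value, pads it with spaces, and truncates values longer than their column. The class name, column widths, alignment, and padding character are assumptions; in Informatica they come from the flat file data object's properties.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.List;

class FixedWidthWriter {
    // Builds one fixed-width record: each value is left-aligned, padded with
    // spaces to its column width, and truncated if it is longer than the width.
    static String formatRow(List<String> values, int[] widths) {
        if (values.size() != widths.length) {
            throw new IllegalArgumentException("need exactly one width per column");
        }
        StringBuilder row = new StringBuilder();
        for (int i = 0; i < widths.length; i++) {
            String v = values.get(i) == null ? "" : values.get(i); // nulls become blanks
            if (v.length() > widths[i]) v = v.substring(0, widths[i]);
            row.append(v);
            for (int pad = v.length(); pad < widths[i]; pad++) row.append(' ');
        }
        return row.toString();
    }

    // Writes one record per input row, each terminated by the platform line separator.
    static void write(Path out, List<List<String>> rows, int[] widths) throws IOException {
        List<String> lines = new ArrayList<>();
        for (List<String> r : rows) lines.add(formatRow(r, widths));
        Files.write(out, lines, StandardCharsets.UTF_8);
    }
}
```

For example, `formatRow(List.of("42", "MyTopic"), new int[] {5, 10})` returns `"42   MyTopic   "`: every record has the same length, which is what lets a reader locate columns by position alone.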

![The completed mapping. (Salesforce is shown.)]()