Connect to Spark Data in RapidMiner

Integrate Spark data with standard components and data source configuration wizards in RapidMiner Studio.

This article shows how to integrate the CData JDBC Driver for Spark into your RapidMiner processes and use it to transfer Spark data to a process.

Connect to Spark in RapidMiner as a JDBC Data Source

You can follow the procedure below to establish a JDBC connection to Spark:

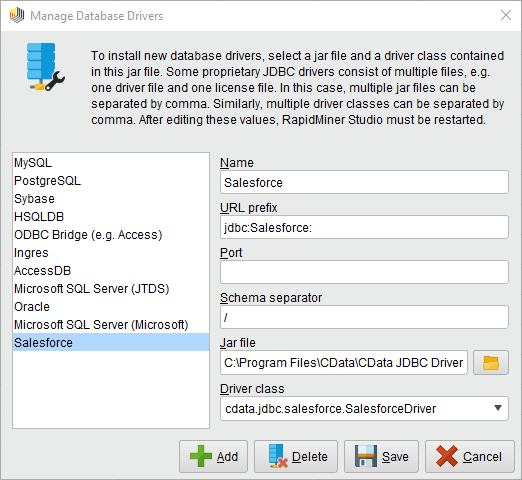

- Add a new database driver for Spark: Click Connections -> Manage Database Drivers.

- In the resulting wizard, click the Add button and enter a name for the connection.

- Enter the prefix for the JDBC URL:

jdbc:sparksql:

- Enter the path to the cdata.jdbc.sparksql.jar file, located in the lib subfolder of the installation directory.

- Enter the driver class:

cdata.jdbc.sparksql.SparkSQLDriver

![The JDBC driver configuration. (Salesforce is shown.)]()

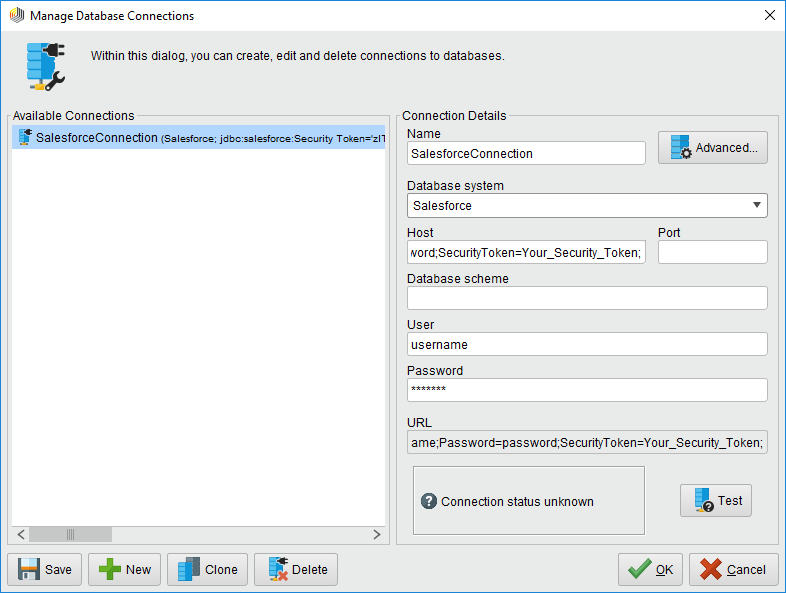

- Create a new Spark connection: Click Connections -> Manage Database Connections.

- Enter a name for your connection.

- For Database System, select the Spark driver you configured previously.

- Enter your connection string in the Host box.

Set the Server, Database, User, and Password connection properties to connect to SparkSQL.

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Spark JDBC Driver. Either double-click the JAR file or run it from the command line:

java -jar cdata.jdbc.sparksql.jar

Fill in the connection properties and copy the connection string to the clipboard.

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

A typical connection string is below:

Server=127.0.0.1;

- Enter your username and password if necessary.

![The connection to the JDBC data source. (Salesforce is shown.)]()

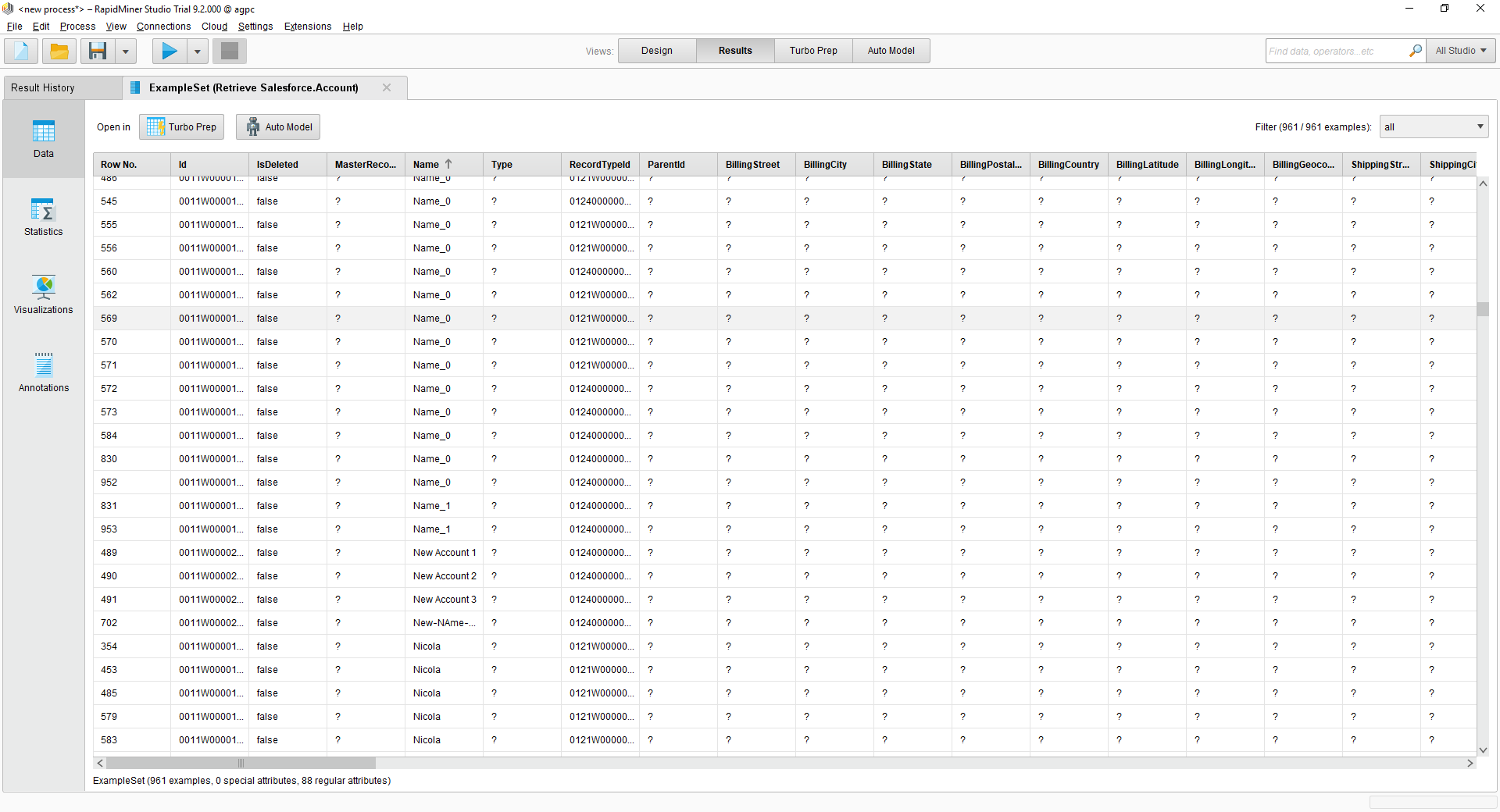

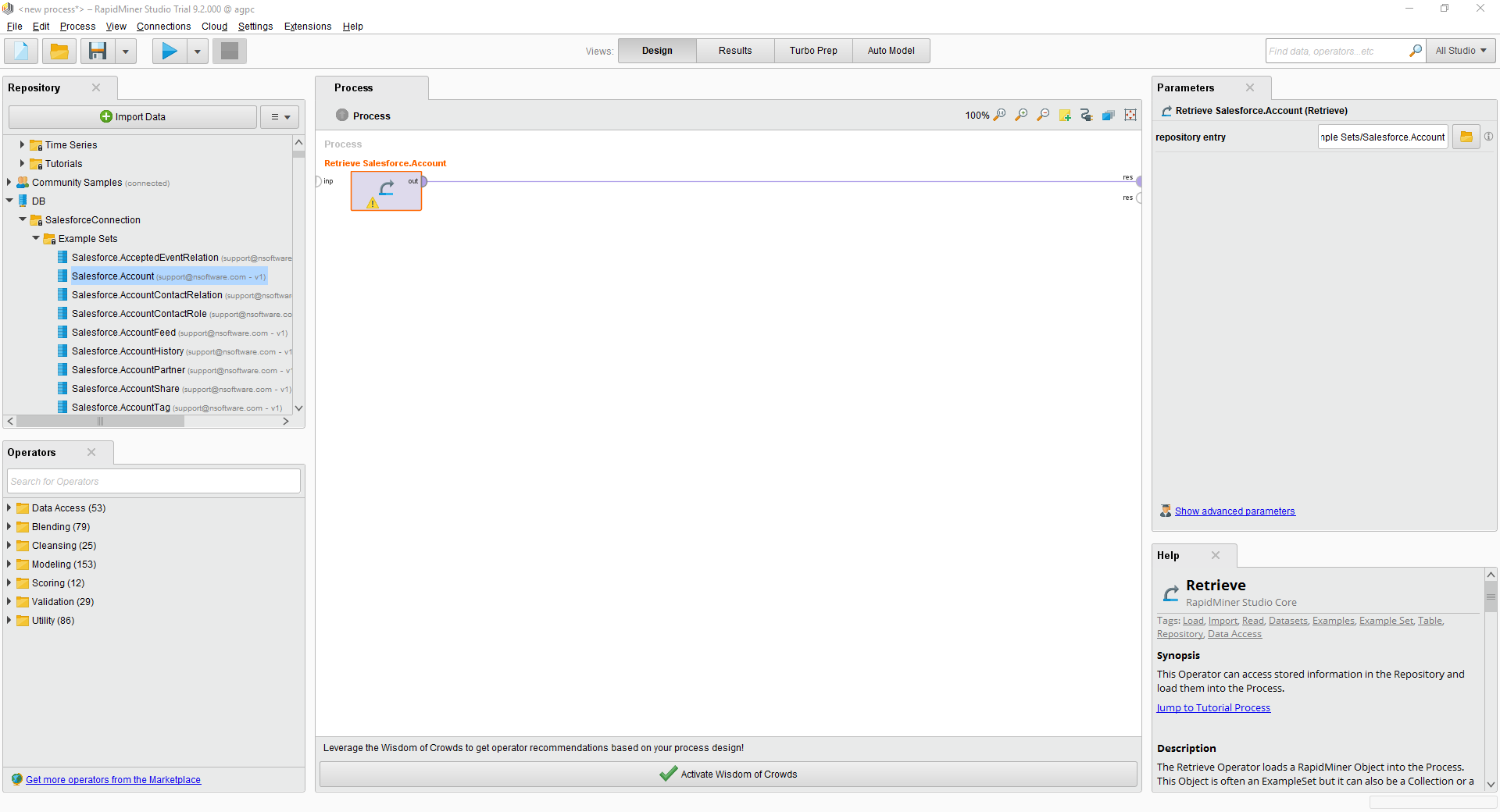
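To sanity-check the values entered in the steps above outside RapidMiner, the following minimal Java sketch may help. It assumes the full JDBC URL is the jdbc:sparksql: prefix followed by the connection string, written as Name=Value; pairs. The class name SparkJdbcUrl and the build helper are hypothetical names chosen for illustration and are not part of the driver. The sketch does no escaping, so it is only suitable for simple values, and it attempts a connection only if cdata.jdbc.sparksql.jar is on the classpath.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper class, not part of the CData driver.
final class SparkJdbcUrl {
    // URL prefix and driver class as entered in the RapidMiner driver dialog.
    static final String PREFIX = "jdbc:sparksql:";
    static final String DRIVER_CLASS = "cdata.jdbc.sparksql.SparkSQLDriver";

    // Appends each property as "Name=Value;" after the prefix, in insertion order.
    // Assumption: values contain no characters that need escaping.
    static String build(Map<String, String> props) {
        StringBuilder url = new StringBuilder(PREFIX);
        for (Map.Entry<String, String> e : props.entrySet()) {
            url.append(e.getKey()).append('=').append(e.getValue()).append(';');
        }
        return url.toString();
    }

    public static void main(String[] args) {
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Server", "127.0.0.1"); // the typical connection string from the article
        String url = build(props);
        System.out.println(url);

        try {
            // Succeeds only when the driver JAR is on the classpath.
            Class.forName(DRIVER_CLASS);
            try (Connection conn = DriverManager.getConnection(url)) {
                System.out.println("Connected: " + !conn.isClosed());
            }
        } catch (ClassNotFoundException e) {
            System.out.println("Driver JAR not on classpath; printed the URL only.");
        } catch (SQLException e) {
            System.out.println("Connection failed: " + e.getMessage());
        }
    }
}
```

Running it with the driver on the classpath (for example, java -cp cdata.jdbc.sparksql.jar SparkJdbcUrl.java on Java 11 or later; use ; instead of : as the classpath separator on Windows if more entries are added) shows whether the same prefix, driver class, and connection string work before they are entered in RapidMiner. Further properties such as Database, User, and Password can be added with additional props.put calls.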

You can now use your Spark connection with the various RapidMiner operators in your process. To retrieve Spark data, drag the Retrieve operator from the Operators view into your process.

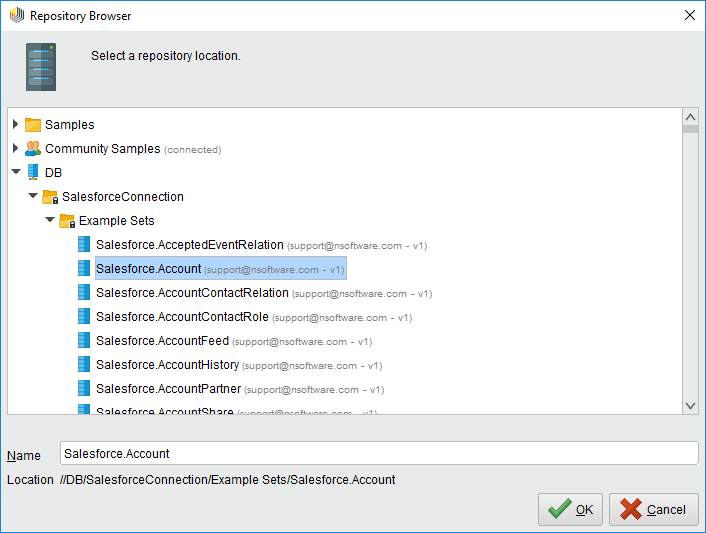

With the Retrieve operator selected, you can then define which table to retrieve in the Parameters view by clicking the folder icon next to the "repository entry." In the resulting Repository Browser, you can expand your connection node to select the desired example set.


Finally, wire the output of the Retrieve operator to a result port, and run the process to see the Spark data.