DBArtisan でJDBC 経由でSpark データ をデータ連携利用

DBArtisan のウィザードを使用して、Spark のJDBC データソースを作成します。

加藤龍彦

デジタルマーケティング

最終更新日:2022-09-23

CData

こんにちは!ウェブ担当の加藤です。マーケ関連のデータ分析や整備もやっています。

CData JDBC Driver for SparkSQL は、データベースとしてSpark データ に連携できるようにすることで、Spark データ をDBArtisan などのデータベース管理ツールにシームレスに連携します。ここでは、DBArtisan でSpark のJDBC ソースを作成する方法を説明します。データを直観的に標準SQL で実行できます。

Spark データ をDBArtisan Projects に連携

以下のステップに従って、Spark をプロジェクトのデータベースインスタンスとして登録します。

- DBArtisan で、[Data Source]->[Register Datasource]とクリックします。

- [Generic JDBC]を選択します。

- [Manage]をクリックします。

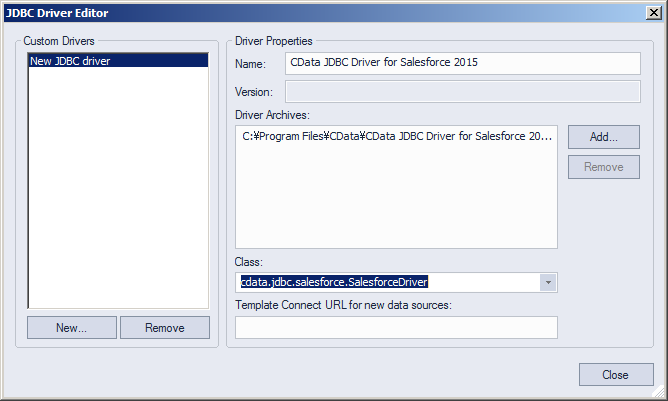

- 表示されるダイアログで、[New]をクリックします。ドライバーの名前を入力し、[Add]をクリックします。次に、ドライバーJAR に移動します。ドライバーJAR は、インストールディレクトリのlib サブフォルダにあります。

![The JDBC driver definition in the Register Datasource wizard.(Salesforce is shown.)]()

-

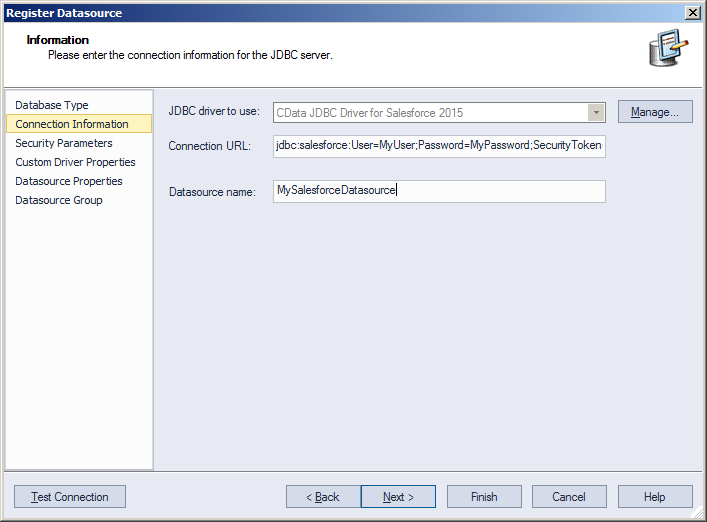

Connection URL ボックスで、JDBC URLに資格情報とその他の必要な接続プロパティを入力します。

SparkSQL への接続

SparkSQL への接続を確立するには以下を指定します。

- Server:SparkSQL をホストするサーバーのホスト名またはIP アドレスに設定。

- Port:SparkSQL インスタンスへの接続用のポートに設定。

- TransportMode:SparkSQL サーバーとの通信に使用するトランスポートモード。有効な入力値は、BINARY およびHTTP です。デフォルトではBINARY が選択されます。

- AuthScheme:使用される認証スキーム。有効な入力値はPLAIN、LDAP、NOSASL、およびKERBEROS です。デフォルトではPLAIN が選択されます。

Databricks への接続

Databricks クラスターに接続するには、以下の説明に従ってプロパティを設定します。Note:必要な値は、「クラスター」に移動して目的のクラスターを選択し、

「Advanced Options」の下にある「JDBC/ODBC」タブを選択することで、Databricks インスタンスで見つけることができます。

- Server:Databricks クラスターのサーバーのホスト名に設定。

- Port:443

- TransportMode:HTTP

- HTTPPath:Databricks クラスターのHTTP パスに設定。

- UseSSL:True

- AuthScheme:PLAIN

- User:'token' に設定。

- Password:パーソナルアクセストークンに設定(値は、Databricks インスタンスの「ユーザー設定」ページに移動して「アクセストークン」タブを選択することで取得できます)。

ビルトイン接続文字列デザイナー

JDBC URL の構成については、Spark JDBC Driver に組み込まれている接続文字列デザイナーを使用してください。JAR ファイルのダブルクリック、またはコマンドラインからJAR ファイルを実行します。

java -jar cdata.jdbc.sparksql.jar

接続プロパティを入力し、接続文字列をクリップボードにコピーします。

![Required JDBC connection properties in the Register Datasource wizard.(Salesforce is shown.)]()

下は一般的な接続文字列です。

jdbc:sparksql:Server=127.0.0.1;

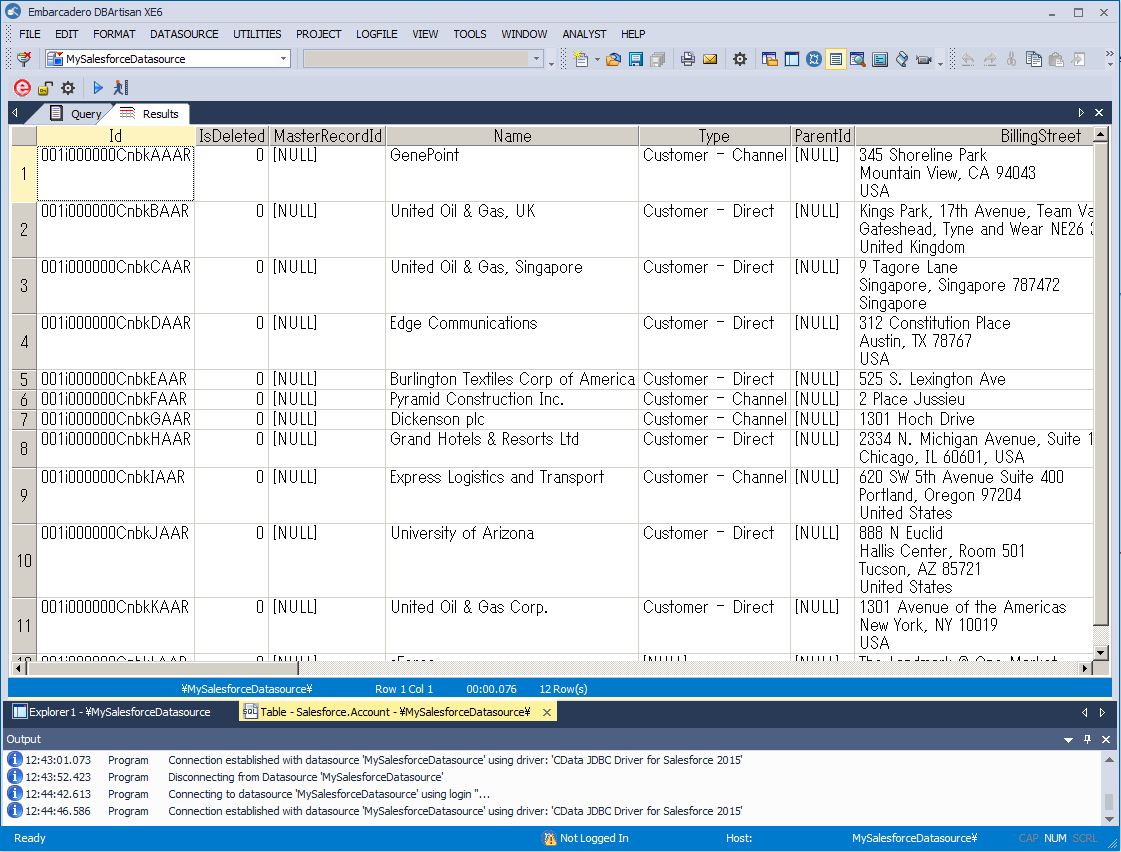

- ウィザードを終了して、Spark に接続します。Spark エンティティは、[Datasource Explorer]に表示されます。

ほかのデータベースを使うのと同じように、Spark を使うことができます。

Spark API にサポートされているクエリについてのより詳しい情報は、ドライバーのヘルプドキュメントを参照してください。

![The results of a query.(Salesfnorce is shown.)]()

関連コンテンツ