Hello! I'm Kato, and I look after the website. I also handle analysis and maintenance of marketing data.

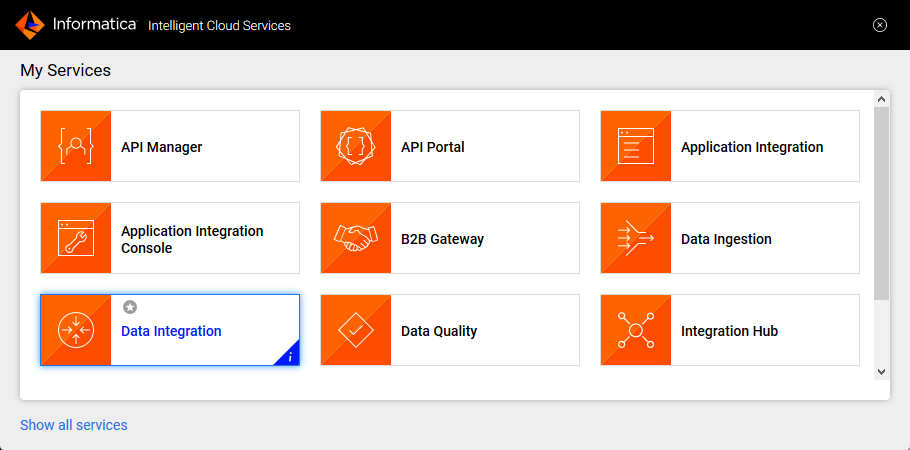

Informatica Cloud lets you run extract, transform, and load (ETL) tasks in the cloud. By combining the Cloud Secure Agent with the CData JDBC Driver for ADLS, you can access live Azure Data Lake Storage data directly from Informatica Cloud. This article covers downloading and registering the Cloud Secure Agent, connecting to Azure Data Lake Storage through the JDBC driver, and generating mappings that can be used in Informatica Cloud processes.

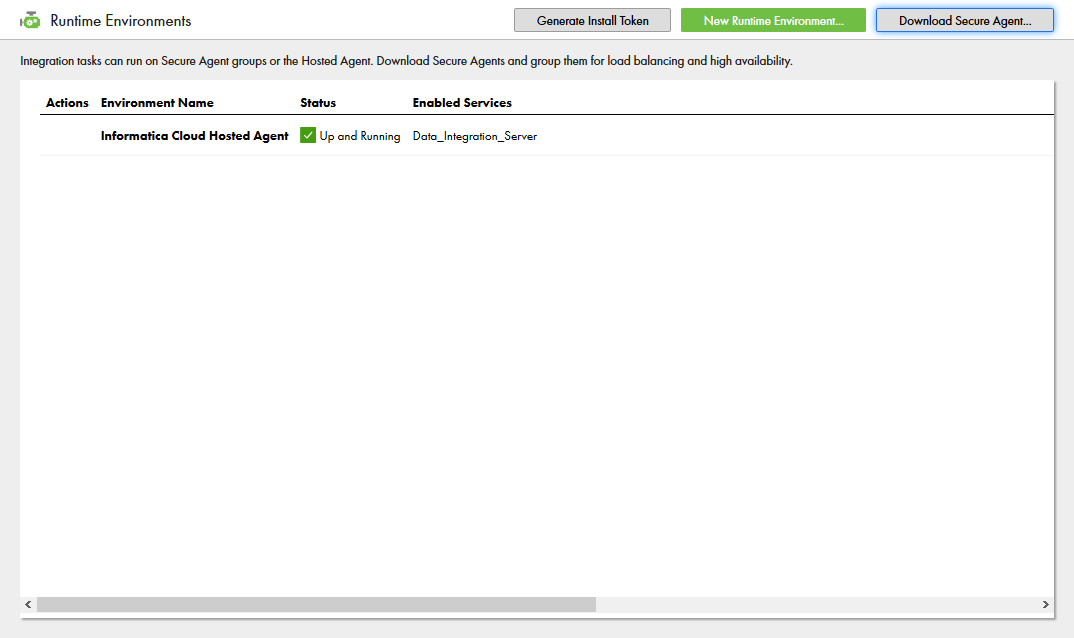

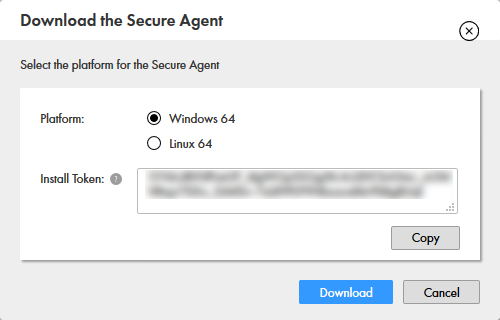

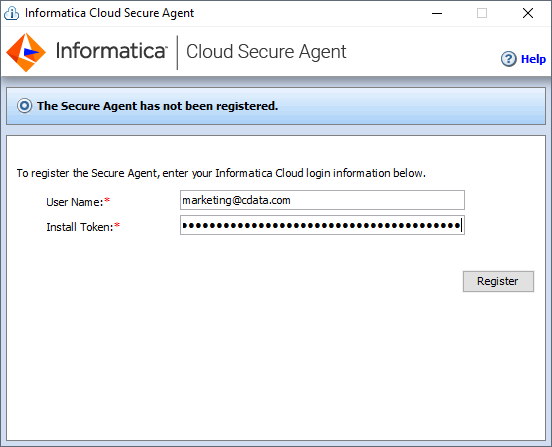

To work with Azure Data Lake Storage data through the JDBC driver, install the Cloud Secure Agent.

NOTE: It may take some time for all Cloud Secure Agent services to start.

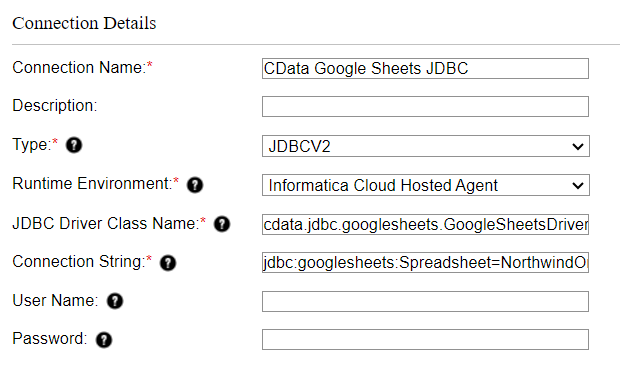

Once the Cloud Secure Agent is installed and running, you can connect to Azure Data Lake Storage using the JDBC driver. First click the "Connections" tab, then click "New Connection". Enter the following properties to connect.

jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;AccessKey=myAccessKey;InitiateOAuth=GETANDREFRESH;
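The JDBC URL above is simply the `jdbc:adls:` prefix followed by `Name=Value;` pairs. As a minimal sketch, the URL can be assembled from a map of properties so that the values are easy to swap per environment. The class name `AdlsJdbcUrl` and the method `build` are hypothetical names used only for illustration, and the sketch does not escape special characters in values.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not part of the CData driver): joins connection
// properties into a JDBC URL of the form jdbc:adls:Name=Value;Name=Value;
class AdlsJdbcUrl {

    static String build(Map<String, String> props) {
        StringBuilder url = new StringBuilder("jdbc:adls:");
        for (Map.Entry<String, String> entry : props.entrySet()) {
            // No escaping is done here; values are appended as given.
            url.append(entry.getKey()).append('=').append(entry.getValue()).append(';');
        }
        return url.toString();
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps insertion order, so the URL matches the sample above.
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Schema", "ADLSGen2");
        props.put("Account", "myAccount");
        props.put("FileSystem", "myFileSystem");
        props.put("AccessKey", "myAccessKey");
        props.put("InitiateOAuth", "GETANDREFRESH");
        System.out.println(build(props));
    }
}
```

The resulting string is what gets pasted into the JDBC URL field of the Informatica Cloud connection.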

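To connect to a Gen 1 DataLakeStorage account, first set the following properties.

Gen 1 supports Azure Active Directory OAuth (AzureAD) and Managed Service Identity (AzureMSI) as authentication methods. For details on authenticating, see the "Authenticating to Azure DataLakeStorage Gen 1" section of the help documentation.

To connect to a Gen 2 DataLakeStorage account, first set the following properties.

Gen 2 supports a range of authentication methods, including access keys, shared access signatures (SAS), Azure Active Directory OAuth (AzureAD), and Managed Service Identity (AzureMSI). For details on authenticating with AzureAD or AzureMSI, see the "Authenticating to Azure DataLakeStorage Gen 2" section of the help documentation.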

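To connect using an access key, set the AccessKey property to the access key value you obtained and set AuthScheme to "AccessKey".

You can obtain the access key for an ADLS Gen2 storage account from the Azure portal.

To connect using a shared access signature, set the SharedAccessSignature property to a valid signature for the resource you are connecting to and set AuthScheme to "SAS". Shared access signatures can be generated with tools such as Azure Storage Explorer.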
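To make the two Gen 2 options concrete, here is a minimal sketch that produces one JDBC URL per authentication scheme. The property names (`Schema`, `Account`, `FileSystem`, `AuthScheme`, `AccessKey`, `SharedAccessSignature`) are the ones mentioned in this article; the class `AdlsGen2Auth`, its methods, and the placeholder values are hypothetical. Values are appended without escaping, so check the driver's help documentation if a signature contains characters that need quoting.

```java
// Hypothetical helper: builds Gen 2 JDBC URLs for the AccessKey and SAS schemes.
class AdlsGen2Auth {

    // Properties shared by both schemes.
    private static String base(String account, String fileSystem) {
        return "jdbc:adls:Schema=ADLSGen2;Account=" + account
                + ";FileSystem=" + fileSystem + ";";
    }

    // AuthScheme=AccessKey with the key copied from the Azure portal.
    static String withAccessKey(String account, String fileSystem, String accessKey) {
        return base(account, fileSystem)
                + "AuthScheme=AccessKey;AccessKey=" + accessKey + ";";
    }

    // AuthScheme=SAS with a signature generated by e.g. Azure Storage Explorer.
    static String withSas(String account, String fileSystem, String signature) {
        return base(account, fileSystem)
                + "AuthScheme=SAS;SharedAccessSignature=" + signature + ";";
    }

    public static void main(String[] args) {
        System.out.println(withAccessKey("myAccount", "myFileSystem", "myAccessKey"));
        System.out.println(withSas("myAccount", "myFileSystem", "mySignature"));
    }
}
```

Only one of the two URLs is needed for a given connection; which one depends on the credential you have for the storage account.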

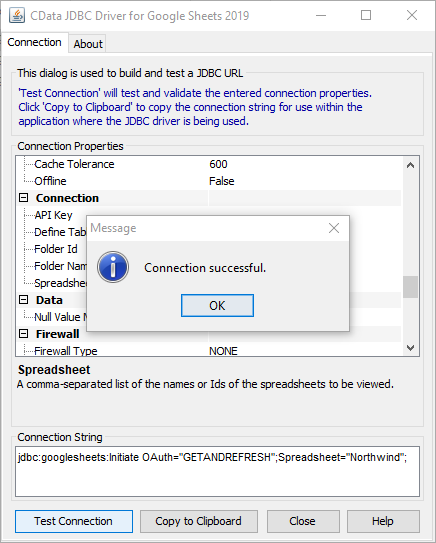

To help build the JDBC URL, you can use the connection string designer built into the Azure Data Lake Storage JDBC Driver. Either double-click the .jar file or run it from the command line.

java -jar cdata.jdbc.adls.jar

Enter the connection properties and copy the connection string to the clipboard.
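Before pasting the copied string into Informatica Cloud, it can be handy to confirm that it works with plain JDBC. The following is a rough sketch using only the standard `java.sql` API. It assumes that `cdata.jdbc.adls.jar` is on the classpath and that the driver registers itself automatically with `DriverManager`; both are assumptions, not something this article confirms. The class `AdlsConnectionCheck` and the sample URL are hypothetical.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical check: opens a connection and lists the tables the driver exposes.
class AdlsConnectionCheck {

    static List<String> listTables(String jdbcUrl) throws SQLException {
        List<String> names = new ArrayList<>();
        // try-with-resources closes the connection and result set automatically.
        try (Connection conn = DriverManager.getConnection(jdbcUrl);
             ResultSet rs = conn.getMetaData()
                     .getTables(null, null, "%", new String[] {"TABLE"})) {
            while (rs.next()) {
                names.add(rs.getString("TABLE_NAME"));
            }
        }
        return names;
    }

    public static void main(String[] args) {
        // Replace with the connection string copied from the designer.
        String url = args.length > 0
                ? args[0]
                : "jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;AccessKey=myAccessKey;";
        try {
            for (String table : listTables(url)) {
                System.out.println(table);
            }
        } catch (SQLException e) {
            // Raised, for example, when the driver jar is missing from the classpath
            // or the credentials are rejected.
            System.out.println("Connection failed: " + e.getMessage());
        }
    }
}
```

If the driver jar is not on the classpath, `DriverManager` reports that no suitable driver was found, which is itself a quick way to spot a classpath problem.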

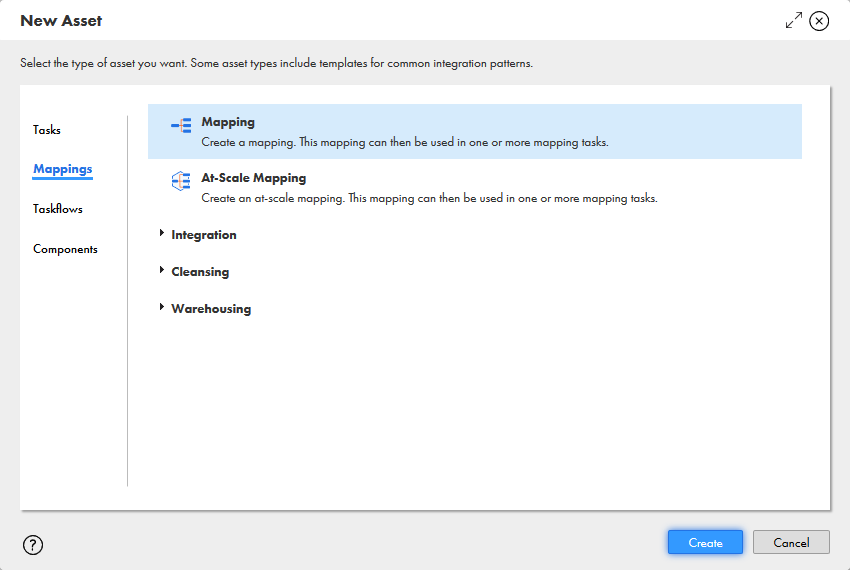

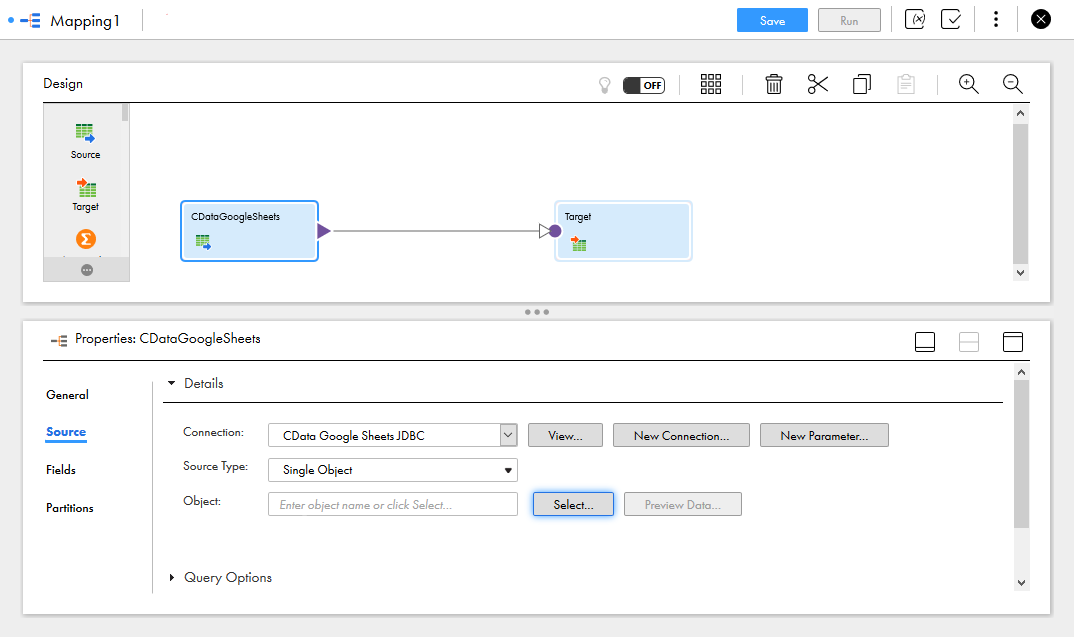

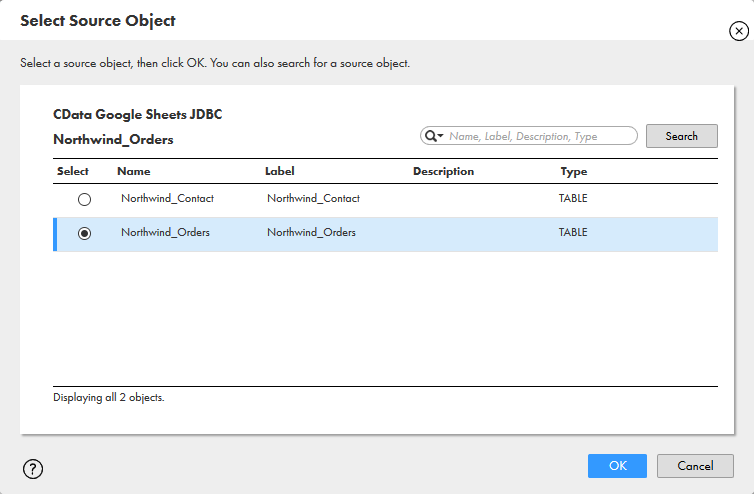

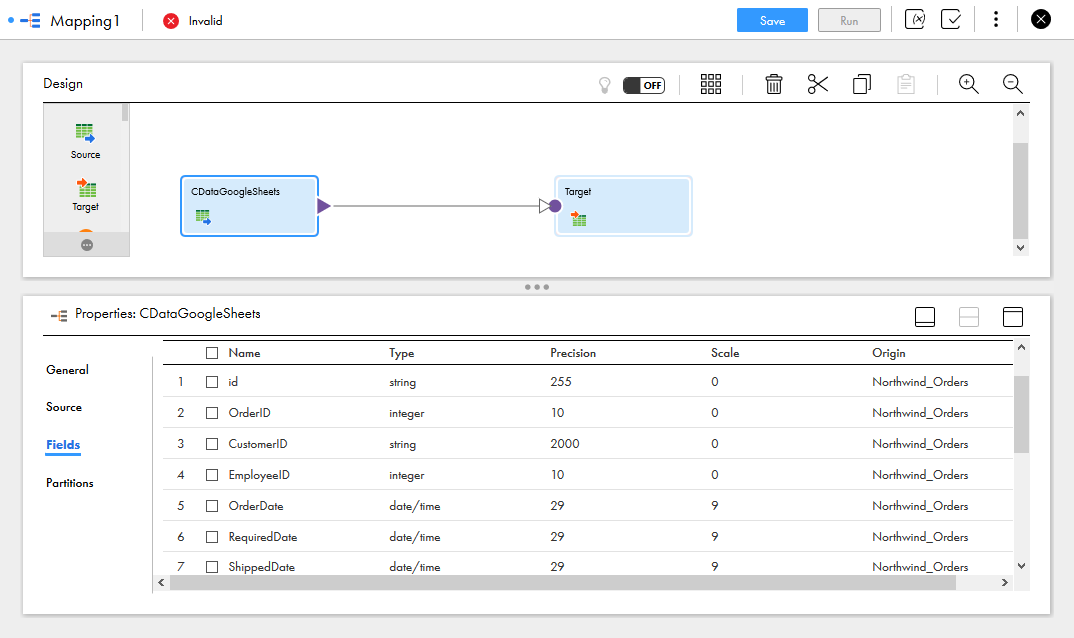

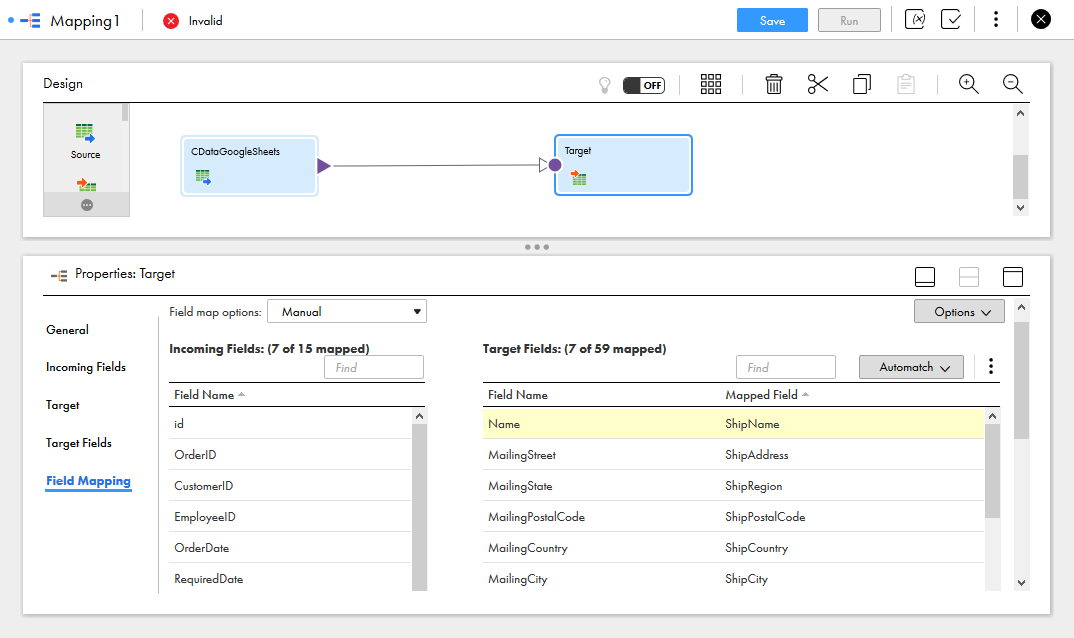

The connection to Azure Data Lake Storage is now configured, and Azure Data Lake Storage data can be accessed from any Informatica process. Follow the steps below to create a mapping from Azure Data Lake Storage to another data target.

With the mapping configured, you are ready to start integrating live Azure Data Lake Storage data with any connection supported by Informatica Cloud. Download a 30-day free trial of the CData JDBC Driver for ADLS and start working with live Azure Data Lake Storage data in Informatica Cloud today!