Visualize Azure Data Lake Storage Data in TIBCO Spotfire through ODBC

The ODBC standard has ubiquitous support and makes self-service business intelligence easy. Use the ODBC Driver to load Azure Data Lake Storage data into TIBCO Spotfire.

This article walks you through using the CData ODBC Driver for Azure Data Lake Storage in TIBCO Spotfire. You will use the data import wizard to connect to a DSN (data source name) for Azure Data Lake Storage and build on the sample visualizations to create a simple dashboard.

Connect to Azure Data Lake Storage as an ODBC Data Source

If you have not already, first specify connection properties in an ODBC DSN (data source name). This is the last step of the driver installation. You can use the Microsoft ODBC Data Source Administrator to create and configure ODBC DSNs.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

This requires an Active Directory web application.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the documentation for this property for more information on how to acquire it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key used to authenticate calls to the API. See the documentation for this property for more information on how to acquire it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

When you configure the DSN, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.
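The properties listed above are normally entered in the DSN dialog. As an illustration of how they fit together, the following minimal sketch assembles them into a DSN-less ODBC connection string. The property names come from the lists above; the driver name in braces, the `MaxRows` key spelling, and all values are assumptions or placeholders that should be checked against your installation.

```python
# Sketch only: the driver name and the "MaxRows" key spelling are assumptions,
# and every value below is a placeholder rather than a real credential.
DRIVER = "CData ODBC Driver for Azure Data Lake Storage"


def build_connection_string(props, driver=DRIVER):
    """Join connection properties into a DSN-less ODBC connection string."""
    parts = ["Driver={" + driver + "}"]
    for key, value in props.items():
        if value is None:
            continue  # optional property left unset, e.g. Directory
        text = str(value)
        # ODBC convention: values containing ';' or braces are wrapped in
        # {...}, with any closing brace inside the value doubled.
        if any(ch in text for ch in ";{}"):
            text = "{" + text.replace("}", "}}") + "}"
        parts.append(f"{key}={text}")
    return ";".join(parts) + ";"


# Gen 1: OAuth properties for the Azure AD application.
gen1 = build_connection_string({
    "Schema": "ADLSGen1",
    "Account": "myaccount",
    "OAuthClientId": "my-client-id",
    "OAuthClientSecret": "my-client-secret",
    "TenantId": "my-tenant-id",
    "Directory": None,  # unset, so the root directory is used
})

# Gen 2: file system plus access key, with a row cap for report design.
gen2 = build_connection_string({
    "Schema": "ADLSGen2",
    "Account": "myaccount",
    "FileSystem": "myfilesystem",
    "AccessKey": "abc;123",  # placeholder containing ';' to show escaping
    "Directory": None,
    "MaxRows": 1000,
})

print(gen1)
print(gen2)
```

A string like this could be handed to a generic ODBC client for a quick connectivity check before opening Spotfire; within Spotfire itself, the steps below use the configured DSN.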

Create Visualizations of Azure Data Lake Storage Tables

Follow the steps below to connect to the DSN and create real-time data visualizations:

- Click Data -> Add Data ...

- Click Other -> Load data with ODBC, OLE DB, or ADO.NET data provider.

- In the Data Source Type menu, select ODBC Data Provider and click Configure.

- Select the DSN.

![The DSN to connect to in the Add Data Tables wizard. (Salesforce is shown.)]()

- Select the tables that you want to add to the dashboard. This example uses Resources. You can also specify an SQL query. The driver supports the standard SQL syntax.

![Tables and columns selected in the tree or specified by an SQL query. (Salesforce is shown.)]()

- If you want to work with the live data, click the Keep Data Table External option. This option enables your dashboards to reflect changes to the data in real time.

If you want to load the data into memory and process the data locally, click the Import Data Table option. This option is better for offline use or if a slow network connection is making your dashboard less interactive.

- After adding tables, the Recommended Visualizations wizard is displayed. When you select a table, Spotfire uses the column data types to detect number, time, and category columns. This example uses Permission in the Numbers section and FullPath in the Categories section.

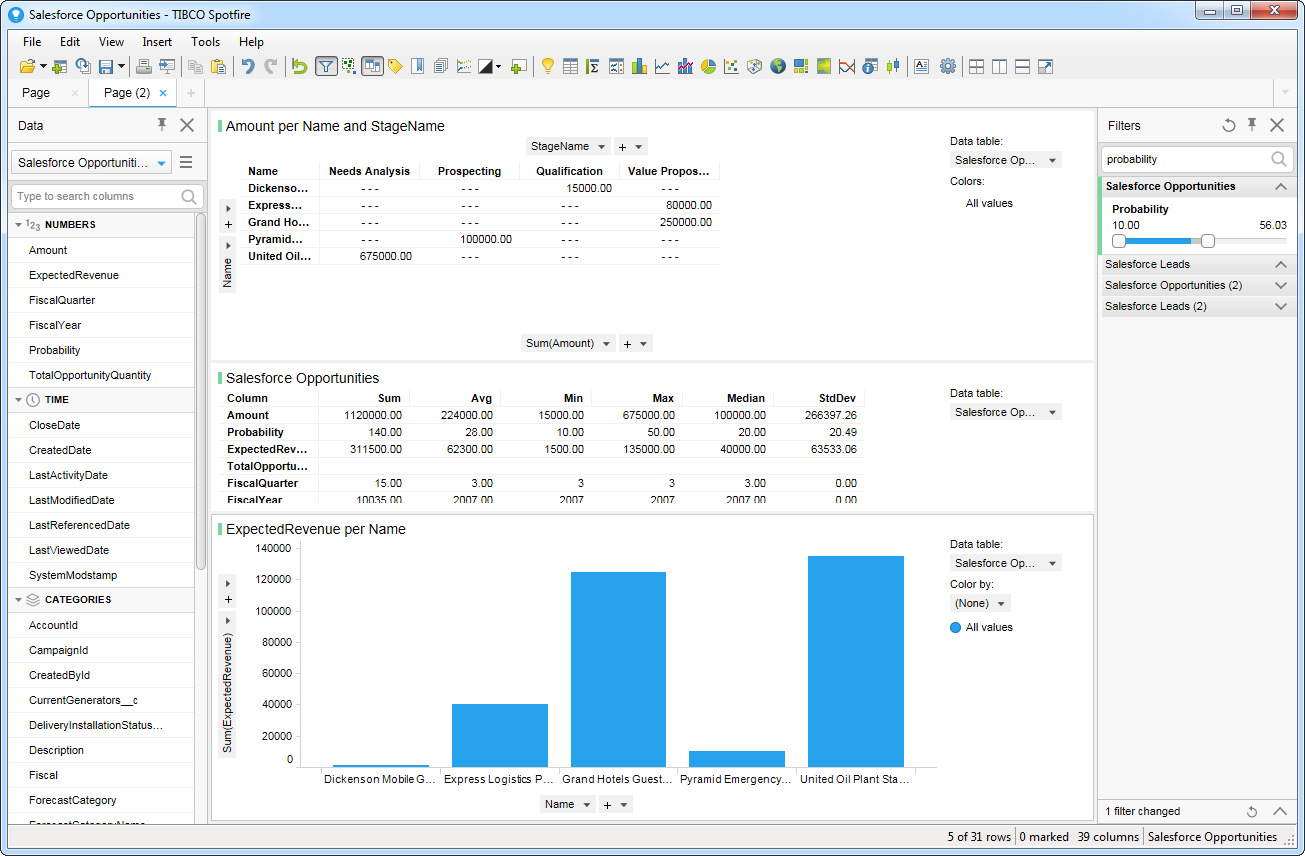

![Recommended visualizations for the imported data table. (Salesforce is shown.)]()

After adding several visualizations in the Recommended Visualizations wizard, you can make other modifications to the dashboard. For example, you can narrow the view to a subset of the data by applying a filter on the page. To add a filter, click the Filter button. The available filters for each query are displayed in the Filters pane.
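The table selection step also accepts an SQL query in place of picking tables from the tree. The sketch below composes one such query against the Resources table, using the FullPath and Permission columns from this example. The helper name, the `/reports/` path prefix, and the assumption that the driver accepts a `LIMIT` clause are illustrative and not taken from the driver documentation.

```python
import re

# Accept only simple identifiers so that table and column names cannot
# smuggle extra SQL into the statement.
_IDENT = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def build_query(table, columns, path_prefix=None, limit=None):
    """Compose a SELECT statement to paste into the SQL query option."""
    for name in [table, *columns]:
        if not _IDENT.match(name):
            raise ValueError(f"unexpected identifier: {name!r}")
    sql = f"SELECT {', '.join(columns)} FROM {table}"
    if path_prefix is not None:
        # Double single quotes so the prefix stays inside the string literal.
        # Note that '%' or '_' in the prefix would act as LIKE wildcards.
        escaped = path_prefix.replace("'", "''")
        sql += f" WHERE FullPath LIKE '{escaped}%'"
    if limit is not None:
        sql += f" LIMIT {int(limit)}"  # assumes the driver supports LIMIT
    return sql


# Hypothetical example: files under /reports/, capped at 100 rows.
query = build_query(
    "Resources", ["FullPath", "Permission"], path_prefix="/reports/", limit=100
)
print(query)
```

Restricting rows in the query itself has a similar effect to the Max Rows connection property, but applies only to the one data table that uses the query.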