Stream Confluence Data into Apache Kafka Topics

Access and stream Confluence data in Apache Kafka using the CData JDBC Driver and the Kafka Connect JDBC connector.

Apache Kafka is an open-source distributed event streaming platform used primarily to build real-time data pipelines and event-driven applications. Paired with the CData JDBC Driver for Confluence, Kafka can work with live Confluence data. This article describes how to connect to Confluence data and stream it into Apache Kafka topics. It also covers starting Confluent Control Center, which you can use to secure, manage, and monitor that data on the Kafka infrastructure of the Confluent Platform.

With built-in optimized data processing, the CData JDBC Driver offers unmatched performance for interacting with live Confluence data. When you issue complex SQL queries to Confluence, the driver pushes supported SQL operations, like filters and aggregations, directly to Confluence and utilizes the embedded SQL engine to process unsupported operations client-side (often SQL functions and JOIN operations). Its built-in dynamic metadata querying allows you to work with and analyze Confluence data using native data types.

Prerequisites

Before using the CData JDBC Driver to stream Confluence data into Apache Kafka topics, install and configure the following on the client Linux-based system:

- Confluent Platform for Apache Kafka

- Confluent Hub CLI

- Self-Managed Kafka JDBC Source Connector for Confluent Platform

Define a New JDBC Connection to Confluence Data

- Download the CData JDBC Driver for Confluence on a Linux-based system.

- Create a new directory and extract all the driver contents into it, as follows.

- Create a new directory named Confluence:

mkdir Confluence

- Move the downloaded driver file (.zip) into this new directory:

mv ConfluenceJDBCDriver.zip Confluence/

- Change into the new directory and unzip the CData ConfluenceJDBCDriver contents there:

cd Confluence
unzip ConfluenceJDBCDriver.zip

- List the contents of the Confluence directory and navigate to the lib folder:

ls
cd lib/

- Copy the contents of the Confluence lib folder into the lib folder of Kafka Connect JDBC. Then list the Kafka Connect JDBC lib folder to confirm that cdata.jdbc.confluence.jar was copied successfully:

cp * ../../confluent-7.5.0/share/confluent-hub-components/confluentinc-kafka-connect-jdbc/lib/
cd ../../confluent-7.5.0/share/confluent-hub-components/confluentinc-kafka-connect-jdbc/lib/
ls

- Install the CData Confluence JDBC driver license with the following command. Enter your name and email address when prompted:

java -jar cdata.jdbc.confluence.jar -l

- Enter the product key or "TRIAL" when prompted. If your license has expired, contact the CData Support team.

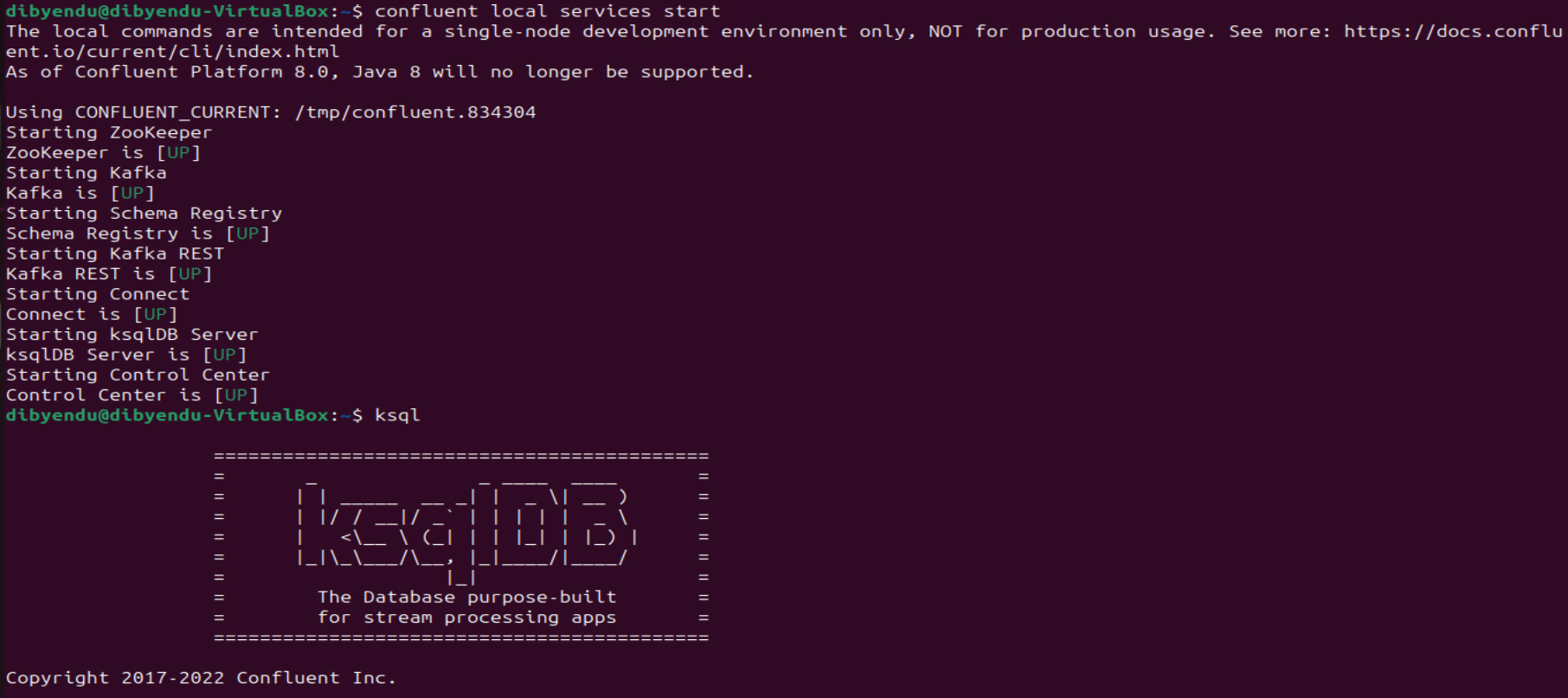
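If you prefer to script the copy check, the short Python sketch below tests whether the driver JAR ended up in the Kafka Connect JDBC lib folder. It assumes the directory layout used in the steps above, with confluent-7.5.0 sitting next to the Confluence folder. The function name driver_jar_installed is hypothetical.

```python
from pathlib import Path

# Assumed location, relative to the folder that contains both the Confluence
# and confluent-7.5.0 directories (matching the cp command in the steps above).
KAFKA_CONNECT_JDBC_LIB = Path(
    "confluent-7.5.0/share/confluent-hub-components/"
    "confluentinc-kafka-connect-jdbc/lib"
)


def driver_jar_installed(lib_dir: Path = KAFKA_CONNECT_JDBC_LIB,
                         jar_name: str = "cdata.jdbc.confluence.jar") -> bool:
    """Return True if jar_name exists as a regular file inside lib_dir."""
    return (Path(lib_dir) / jar_name).is_file()
```

Run it from the parent of the Confluence directory. A False result usually means your Confluent Platform version or install path differs from the one assumed here.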

- Start the Confluent local services:

confluent local services start

This starts all of the Confluent services: ZooKeeper, Kafka, Schema Registry, Kafka REST, Kafka Connect, ksqlDB, and Control Center. You are now ready to use the CData JDBC Driver for Confluence to stream data through Kafka Connect into Kafka topics and to query those topics with ksqlDB.

![Start the Confluent local services]()
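Before moving on, you may want to confirm that the services are actually listening. The Python sketch below attempts a TCP connection to each service. The port numbers are the usual Confluent Platform defaults, so treat them as an assumption if you have changed any service configuration. The helper names are hypothetical.

```python
import socket

# Usual default ports for `confluent local services start` (assumption:
# none of the service properties files have been changed).
DEFAULT_PORTS = {
    "ZooKeeper": 2181,
    "Kafka": 9092,
    "Schema Registry": 8081,
    "Kafka REST": 8082,
    "Kafka Connect": 8083,
    "ksqlDB": 8088,
    "Control Center": 9021,
}


def port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_services(host: str, ports: dict = DEFAULT_PORTS) -> dict:
    """Map each service name to whether its port accepts connections."""
    return {name: port_open(host, port) for name, port in ports.items()}
```

For example, check_services("localhost") returns a dictionary of service names mapped to True or False. A False entry for Kafka Connect means the connector request in the next step will not reach anything.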

- Create the Kafka topics by registering a JDBC source connector. To do this, send an HTTP POST request to the Kafka Connect REST API:

curl --location 'http://server_address:8083/connectors' \
--header 'Content-Type: application/json' \
--data '{
  "name": "jdbc_source_cdata_confluence_01",
  "config": {
    "connector.class": "io.confluent.connect.jdbc.JdbcSourceConnector",
    "connection.url": "jdbc:confluence:User=admin;APIToken=myApiToken;Url=https://yoursitename.atlassian.net;Timezone=America/New_York;",
    "topic.prefix": "confluence-01-",
    "mode": "bulk"
  }
}'

The fields used in the HTTP POST body are:

- connector.class: Specifies the Java class of the Kafka Connect connector to be used.

- connection.url: The JDBC connection URL used to connect to Confluence.

Built-in Connection String Designer

To help construct the JDBC URL, use the connection string designer built into the Confluence JDBC Driver. Either double-click the JAR file or run it from the command line:

java -jar cdata.jdbc.confluence.jar

Fill in the connection properties and copy the connection string to the clipboard.

Obtaining an API Token

An API token is required for account authentication. To generate one, log in to your Atlassian account and navigate to API tokens > Create API token. The generated token is then displayed.
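You can check a new token before putting it in the connection URL. One way is to call the Confluence Cloud REST API with HTTP Basic authentication, which Atlassian documents as your account email plus the API token. This is a minimal sketch. The endpoint path /wiki/rest/api/user/current and the helper names are assumptions to verify against Atlassian's REST documentation.

```python
import base64
import urllib.request


def basic_auth_header(user: str, api_token: str) -> str:
    """Encode user:api_token as an HTTP Basic Authorization header value."""
    raw = f"{user}:{api_token}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def token_check_request(site_url: str, user: str, api_token: str) -> urllib.request.Request:
    """Prepare a GET for the current user; a 200 response means the token works."""
    # Assumed endpoint: Confluence Cloud REST API v1 "current user" resource.
    url = site_url.rstrip("/") + "/wiki/rest/api/user/current"
    return urllib.request.Request(
        url,
        headers={
            "Authorization": basic_auth_header(user, api_token),
            "Accept": "application/json",
        },
    )
```

Pass the request to urllib.request.urlopen(). An HTTP 401 error indicates that the email and token pair was rejected.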

Connect Using a Confluence Cloud Account

To connect to a Cloud account, provide the following. (Note: Password is deprecated for Cloud accounts and is now used only to connect to a Server instance.)

- User: The user account used to authenticate with Confluence.

- APIToken: The API token associated with the currently authenticated user.

- Url: The URL of your Confluence Cloud site. For example, https://yoursitename.atlassian.net.
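The connection string the designer produces is a series of Property=Value; pairs after the jdbc:confluence: prefix, as in the connection.url of the connector request. If you would rather assemble it in a script, a sketch like the following reproduces that format. It rejects values containing ';' rather than guessing at the driver's quoting rules, so the designer remains the safer option for unusual values. build_jdbc_url is a hypothetical helper.

```python
def build_jdbc_url(props: dict) -> str:
    """Join connection properties into "jdbc:confluence:Key=Value;..." form.

    Properties are emitted in dict insertion order. A ';' inside a value would
    split the string, and this sketch does not guess the driver's quoting
    rules, so such values are rejected instead.
    """
    for key, value in props.items():
        if ";" in str(value):
            raise ValueError(f"value for {key!r} contains ';'; use the designer")
    return "jdbc:confluence:" + "".join(f"{k}={v};" for k, v in props.items())
```

For example, passing User, APIToken, Url, and Timezone in that order yields the same connection.url string used in the connector request.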

Connect Using a Confluence Server Instance

To connect to a Server instance, provide the following:

- User: The user account used to authenticate with the Confluence instance.

- Password: The password for that user.

- Url: The base URL of your Confluence Server instance.
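The Cloud and Server property lists differ only in the credential: Cloud uses APIToken and Server uses Password. A small check like the sketch below can catch a missing property before you submit the connector. The property names come from the lists in this section. The function and its exact-case matching are assumptions of this example.

```python
# Required properties per deployment type, taken from the Cloud and Server lists.
REQUIRED_PROPERTIES = {
    "cloud": ("User", "APIToken", "Url"),
    "server": ("User", "Password", "Url"),
}


def missing_properties(props: dict, deployment: str) -> list:
    """Return required property names that are absent or empty.

    Matching is exact-case here (an assumption; the driver itself may be
    more lenient about property-name case).
    """
    required = REQUIRED_PROPERTIES[deployment.lower()]
    return [name for name in required if not props.get(name)]
```

An empty list means every required property for that deployment type has a value.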

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

- topic.prefix: A prefix added to the name of every Kafka topic the connector writes to. Here it is "confluence-01-", so each topic is named confluence-01- followed by the table name.

- mode: The mode in which the connector queries the source. Here it is "bulk": the connector copies the entire contents of each table every time it polls.

With this configuration, the connector copies every Confluence table exposed by the driver into its own Kafka topic.
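Because bulk mode re-reads every table on each poll, the number of records written grows with the polling rate, not just with the table size. The Python sketch below estimates this. It assumes the JDBC source connector's default poll.interval.ms of 5000 and that each poll finishes well within the interval. records_per_hour is a hypothetical helper.

```python
def records_per_hour(rows_per_table: int, poll_interval_ms: int = 5000) -> int:
    """Estimate records a bulk-mode connector writes per hour for one table.

    Every poll re-copies the whole table, so the estimate is
    rows_per_table * (polls per hour). Query time is ignored.
    """
    if poll_interval_ms <= 0:
        raise ValueError("poll_interval_ms must be positive")
    polls_per_hour = 3_600_000 // poll_interval_ms
    return rows_per_table * polls_per_hour
```

At the assumed default interval, a 1,000-row table works out to 720 polls and 720,000 records per hour. Setting a larger poll.interval.ms in the connector config is one way to reduce this if periodic snapshots are enough.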

Note: Replace server_address in the request with the network IP address of the Linux machine running Kafka Connect.
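The same request can be sent with Python's standard library instead of curl. This sketch mirrors the JSON body of the curl command. The port 8083 is carried over from that command, and the helper name connector_request is hypothetical. Adjust the values to your environment.

```python
import json
import urllib.request


def connector_request(server: str, name: str, jdbc_url: str,
                      prefix: str = "confluence-01-",
                      mode: str = "bulk") -> urllib.request.Request:
    """Build the POST /connectors request that registers a JDBC source connector."""
    payload = {
        "name": name,
        "config": {
            "connector.class": "io.confluent.connect.jdbc.JdbcSourceConnector",
            "connection.url": jdbc_url,
            "topic.prefix": prefix,
            "mode": mode,
        },
    }
    return urllib.request.Request(
        f"http://{server}:8083/connectors",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Pass the result to urllib.request.urlopen(). Kafka Connect answers 201 Created when it accepts a new connector.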

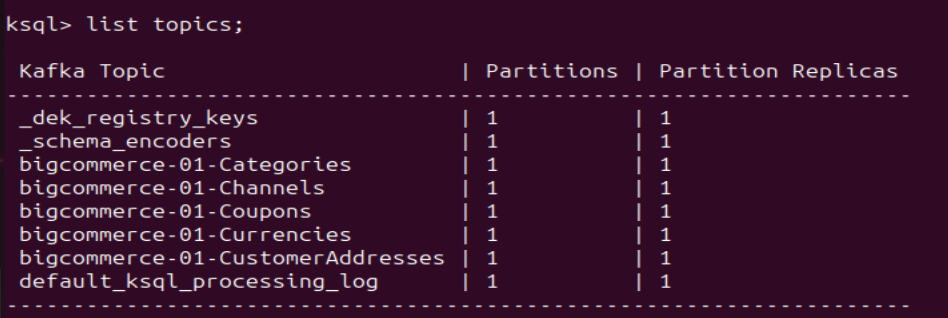
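Once the connector is registered, Kafka Connect reports connector and task state at GET /connectors/<name>/status. The sketch below fetches that document. It treats the connector as healthy only when the connector and every task report RUNNING. The helper names and the http://<server>:8083 base URL are assumptions.

```python
import json
import urllib.parse
import urllib.request


def fetch_status(server: str, name: str) -> dict:
    """Read /connectors/<name>/status from the Kafka Connect REST API."""
    url = f"http://{server}:8083/connectors/{urllib.parse.quote(name)}/status"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return json.load(resp)


def is_healthy(status: dict) -> bool:
    """True when the connector and all of its tasks report RUNNING."""
    tasks = status.get("tasks", [])
    connector_state = status.get("connector", {}).get("state")
    return (connector_state == "RUNNING"
            and len(tasks) > 0
            and all(task.get("state") == "RUNNING" for task in tasks))
```

For example, call is_healthy(fetch_status("192.0.2.10", "jdbc_source_cdata_confluence_01")). If a task is FAILED, its entry in the status document typically carries a trace field with the Java stack trace.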

- Start the ksqlDB CLI and list the topics:

ksql
LIST TOPICS;

![List the Kafka Topics (BigCommerce is shown)]()
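Topics can also be listed programmatically through the ksqlDB server's REST API, which listens on port 8088 by default. The sketch below posts LIST TOPICS; to the /ksql endpoint and keeps only names with the connector's prefix. The response shape it parses reflects our understanding of the ksqlDB REST API: a list of entities, each with a topics array of objects that carry a name. Check it against your version. The helper names are hypothetical.

```python
import json
import urllib.request

KSQL_MEDIA_TYPE = "application/vnd.ksql.v1+json"


def list_topics_request(server: str) -> urllib.request.Request:
    """Build a POST /ksql request that runs LIST TOPICS; on ksqlDB."""
    body = {"ksql": "LIST TOPICS;", "streamsProperties": {}}
    return urllib.request.Request(
        f"http://{server}:8088/ksql",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": KSQL_MEDIA_TYPE, "Accept": KSQL_MEDIA_TYPE},
        method="POST",
    )


def topic_names(response: list, prefix: str = "") -> list:
    """Collect topic names starting with prefix from a LIST TOPICS response."""
    names = []
    for entity in response:
        for topic in entity.get("topics", []):
            if topic.get("name", "").startswith(prefix):
                names.append(topic["name"])
    return sorted(names)
```

A typical call opens list_topics_request(server) with urllib.request.urlopen(), loads the body with json.load(), and passes it to topic_names() with the prefix "confluence-01-".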

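- To view the data in a topic, run the following statement. Replace topic with one of the topic names listed above, and quote the name if it contains hyphens, as names with the confluence-01- prefix do:

PRINT topic FROM BEGINNING;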

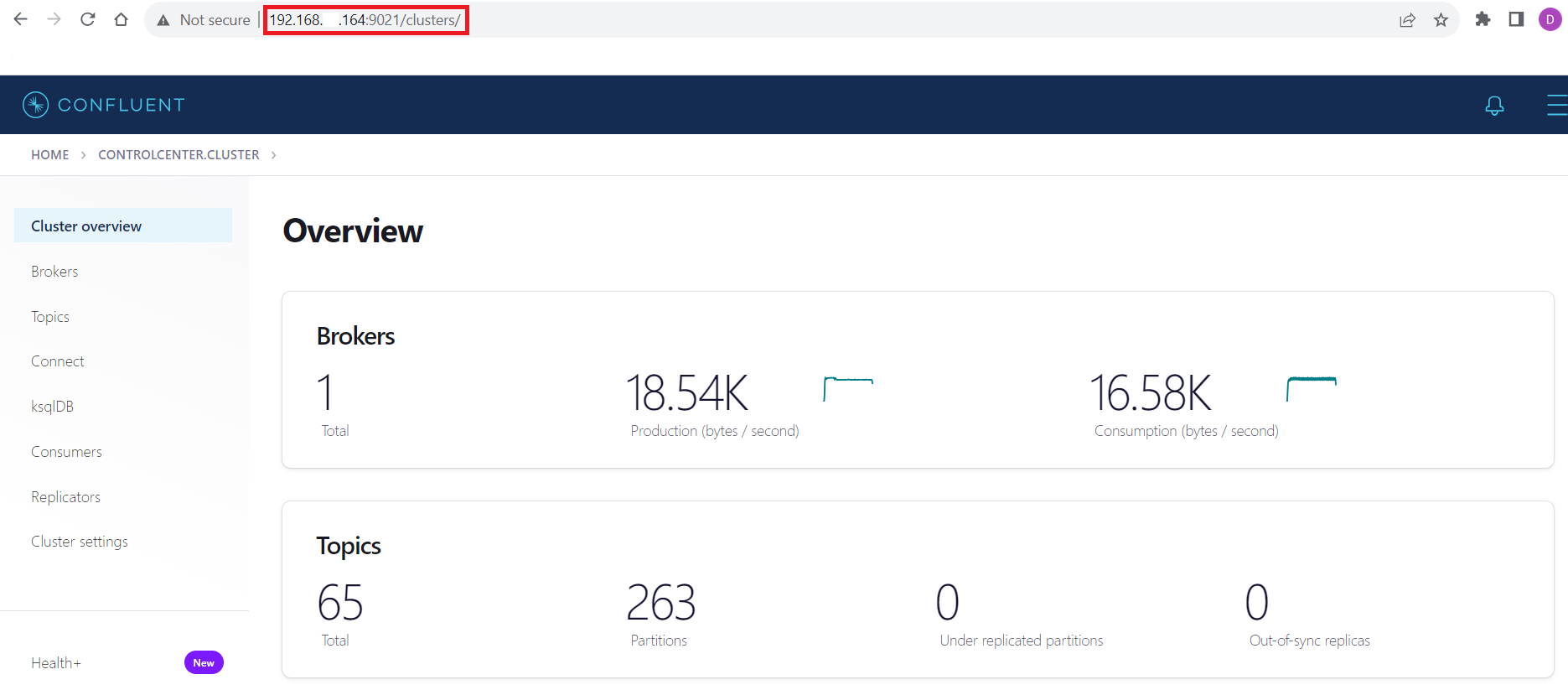
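When scripting topic inspection, it helps to build the PRINT statement so that hyphenated names are always quoted. This sketch validates the name against Kafka's legal topic characters, single-quotes it, and optionally appends a LIMIT clause. It assumes your ksqlDB version accepts single-quoted topic names in PRINT. The example name confluence-01-Pages is hypothetical, because the actual table names depend on the driver.

```python
import re
from typing import Optional

# Kafka topic names may only contain ASCII letters, digits, '.', '_' and '-'.
_TOPIC_NAME = re.compile(r"[A-Za-z0-9._-]+")


def print_statement(topic: str, limit: Optional[int] = None) -> str:
    """Return a ksqlDB PRINT statement that reads topic from the beginning."""
    if not _TOPIC_NAME.fullmatch(topic):
        raise ValueError(f"not a valid Kafka topic name: {topic!r}")
    statement = f"PRINT '{topic}' FROM BEGINNING"
    if limit is not None:
        statement += f" LIMIT {int(limit)}"
    return statement + ";"
```

For example, print_statement("confluence-01-Pages", limit=5) produces a statement you can paste into the ksql CLI to view only the first five records.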

Connecting with the Confluent Control Center

To access the Confluent Control Center user interface, make sure the Confluent local services are running as described above. Then open http://<server address>:9021/clusters/ in your browser.

Get Started Today

Download a free, 30-day trial of the CData JDBC Driver for Confluence and start streaming Confluence data into Apache Kafka. Reach out to our Support Team if you have any questions.