How to integrate HCL Domino with Apache Airflow

Access and process HCL Domino data in Apache Airflow using the CData JDBC Driver.

Apache Airflow supports the creation, scheduling, and monitoring of data engineering workflows. When paired with the CData JDBC Driver for HCL Domino, Airflow can work with live HCL Domino data. This article describes how to connect to and query HCL Domino data from an Apache Airflow instance and store the results in a CSV file.

With built-in optimized data processing, the CData JDBC Driver offers unmatched performance for interacting with live HCL Domino data. When you issue complex SQL queries to HCL Domino, the driver pushes supported SQL operations, like filters and aggregations, directly to HCL Domino and utilizes the embedded SQL engine to process unsupported operations client-side (often SQL functions and JOIN operations). Its built-in dynamic metadata querying allows you to work with and analyze HCL Domino data using native data types.

Configuring the Connection to HCL Domino

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the HCL Domino JDBC Driver. Either double-click the JAR file or execute the JAR file from the command line.

java -jar cdata.jdbc.domino.jar

Fill in the connection properties and copy the connection string to the clipboard.

Connecting to Domino

To connect to Domino data, set the following properties:

- URL: The host name or IP address of the server hosting the Domino database, including the port. For example: http://sampleserver:1234/

- DatabaseScope: The name of a scope in the Domino Web UI. The driver exposes forms and views for the schema governed by the specified scope. In the Domino Admin UI, select the Scopes menu in the sidebar. Set this property to the name of an existing scope.

Authenticating with Domino

Domino supports authenticating via login credentials or an Azure Active Directory OAuth application:

Login Credentials

To authenticate with login credentials, set the following properties:

- AuthScheme: Set this to "OAuthPassword"

- User: The username of the authenticating Domino user

- Password: The password associated with the authenticating Domino user

The driver uses the login credentials to automatically perform an OAuth token exchange.
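As a minimal sketch, the properties above can be assembled into a connection string programmatically. It assumes the `jdbc:domino:` prefix and the `Name=Value;` layout used by the connection strings in this article; the helper name `build_domino_jdbc_url` and the scope name `my_scope` are hypothetical, and the credentials are placeholders.

```python
def build_domino_jdbc_url(properties: dict[str, str]) -> str:
    """Join connection properties into a 'jdbc:domino:Name=Value;...' string."""
    parts = []
    for name, value in properties.items():
        # How the driver escapes ';' inside a value is not covered here,
        # so this sketch rejects such values instead of guessing.
        if ";" in value:
            raise ValueError(f"Property {name!r} contains ';'")
        parts.append(f"{name}={value};")
    return "jdbc:domino:" + "".join(parts)


url = build_domino_jdbc_url(
    {
        "URL": "http://sampleserver:1234/",
        "DatabaseScope": "my_scope",  # hypothetical scope name
        "AuthScheme": "OAuthPassword",
        "User": "my_domino_user",
        "Password": "my_domino_password",
    }
)
print(url)
```

The connection string designer described earlier remains the safer way to produce the final string, since it knows the full set of properties the driver accepts.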

AzureAD

This authentication method uses Azure Active Directory as an IdP to obtain a JWT token. You need to create a custom OAuth application in Azure Active Directory and configure it as an IdP. To do so, follow the instructions in the Help documentation. Then set the following properties:

- AuthScheme: Set this to "AzureAD"

- InitiateOAuth: Set this to GETANDREFRESH. You can use InitiateOAuth to avoid repeating the OAuth exchange and manually setting the OAuthAccessToken.

- OAuthClientId: The Client ID obtained when setting up the custom OAuth application.

- OAuthClientSecret: The Client secret obtained when setting up the custom OAuth application.

- CallbackURL: The redirect URI defined when you registered your app. For example: https://localhost:33333

- AzureTenant: The Microsoft Online tenant being used to access data. Supply either a value in the form companyname.onmicrosoft.com or the tenant ID.

The tenant ID is the same as the directory ID shown in the Azure Portal's Azure Active Directory > Properties page.
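Because the two authentication schemes require different properties, it can help to check a property set before building the connection string. The sketch below encodes only the property lists given in this article; the function name `missing_properties` and the sample values (`my_scope`, `my_client_id`) are hypothetical.

```python
# Properties needed regardless of the authentication scheme.
COMMON_PROPERTIES = ["URL", "DatabaseScope", "AuthScheme"]

# Additional properties per AuthScheme, as listed in this article.
SCHEME_PROPERTIES = {
    "OAuthPassword": ["User", "Password"],
    "AzureAD": [
        "InitiateOAuth",
        "OAuthClientId",
        "OAuthClientSecret",
        "CallbackURL",
        "AzureTenant",
    ],
}


def missing_properties(props: dict[str, str]) -> list[str]:
    """Return the required property names that are absent or empty."""
    scheme = props.get("AuthScheme", "")
    if scheme not in SCHEME_PROPERTIES:
        raise ValueError(f"Unsupported AuthScheme: {scheme!r}")
    required = COMMON_PROPERTIES + SCHEME_PROPERTIES[scheme]
    return [name for name in required if not props.get(name)]


azure_props = {
    "URL": "http://sampleserver:1234/",
    "DatabaseScope": "my_scope",  # hypothetical scope name
    "AuthScheme": "AzureAD",
    "InitiateOAuth": "GETANDREFRESH",
    "OAuthClientId": "my_client_id",  # placeholder
    "CallbackURL": "https://localhost:33333",
}
print(missing_properties(azure_props))  # ['OAuthClientSecret', 'AzureTenant']
```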

To host the JDBC driver in clustered environments or in the cloud, you will need a license (full or trial) and a Runtime Key (RTK). For more information on obtaining this license (or a trial), contact our sales team.

The following are essential properties needed for our JDBC connection.

| Property | Value |
|---|---|
| Database Connection URL | jdbc:domino:RTK=5246...;Server=https://domino.corp.com;AuthScheme=OAuthPassword;User=my_domino_user;Password=my_domino_password; |
| Database Driver Class Name | cdata.jdbc.domino.DominoDriver |

Establishing a JDBC Connection within Airflow

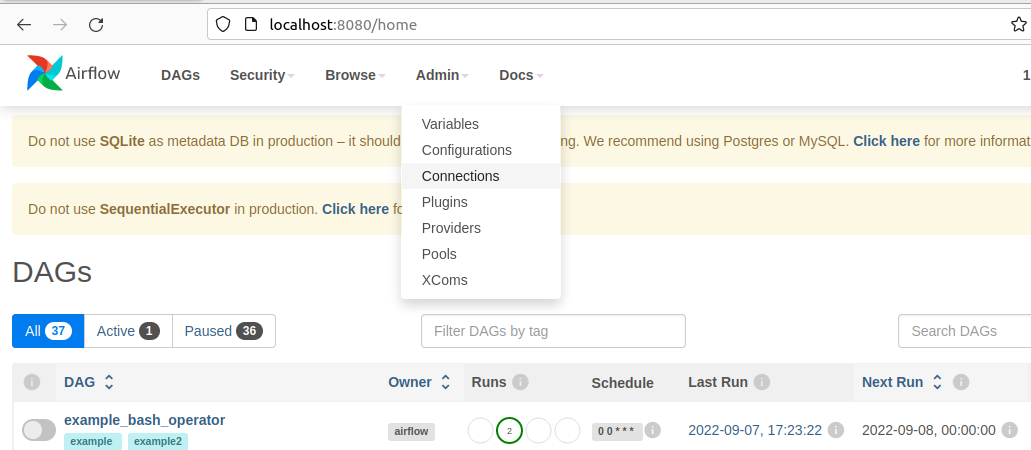

- Log into your Apache Airflow instance.

- On the navbar of your Airflow instance, hover over Admin and then click Connections.

![Clicking connections]()

- Next, click the + sign on the following screen to create a new connection.

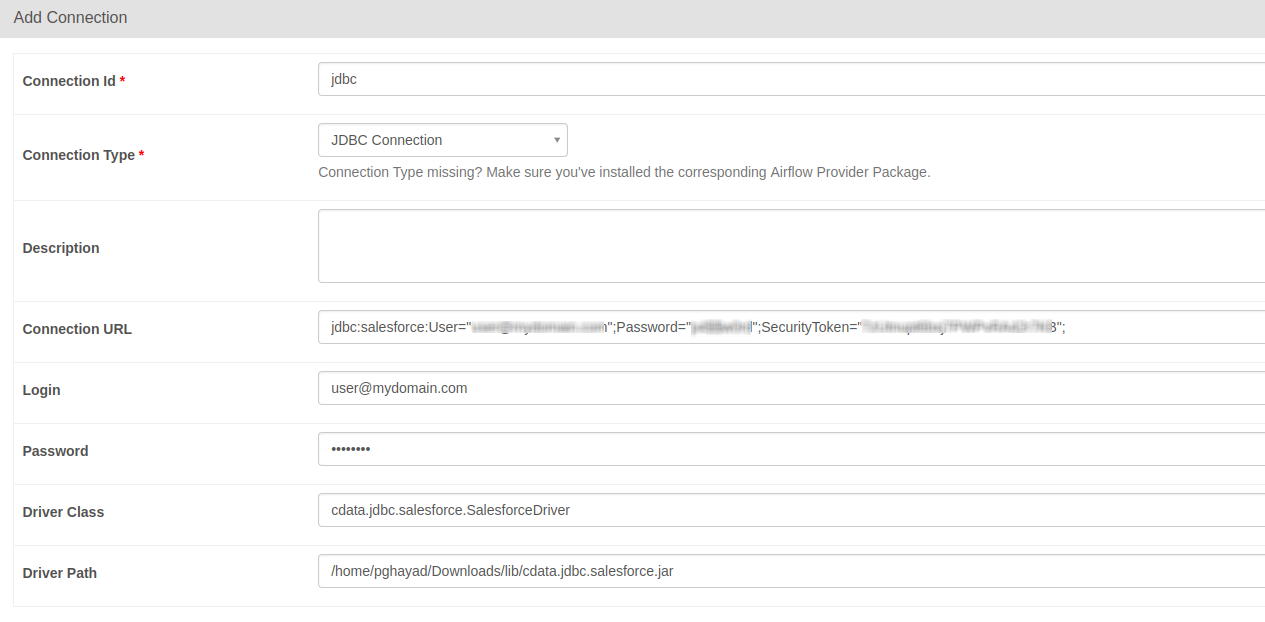

- In the Add Connection form, fill out the required connection properties:

  - Connection Id: Name the connection, e.g.: domino_jdbc

  - Connection Type: JDBC Connection

  - Connection URL: The JDBC connection URL from above, e.g.: jdbc:domino:RTK=5246...;Server=https://domino.corp.com;AuthScheme=OAuthPassword;User=my_domino_user;Password=my_domino_password;

  - Driver Class: cdata.jdbc.domino.DominoDriver

  - Driver Path: PATH/TO/cdata.jdbc.domino.jar

![Add JDBC connection form]()

- Test your new connection by clicking the Test button at the bottom of the form.

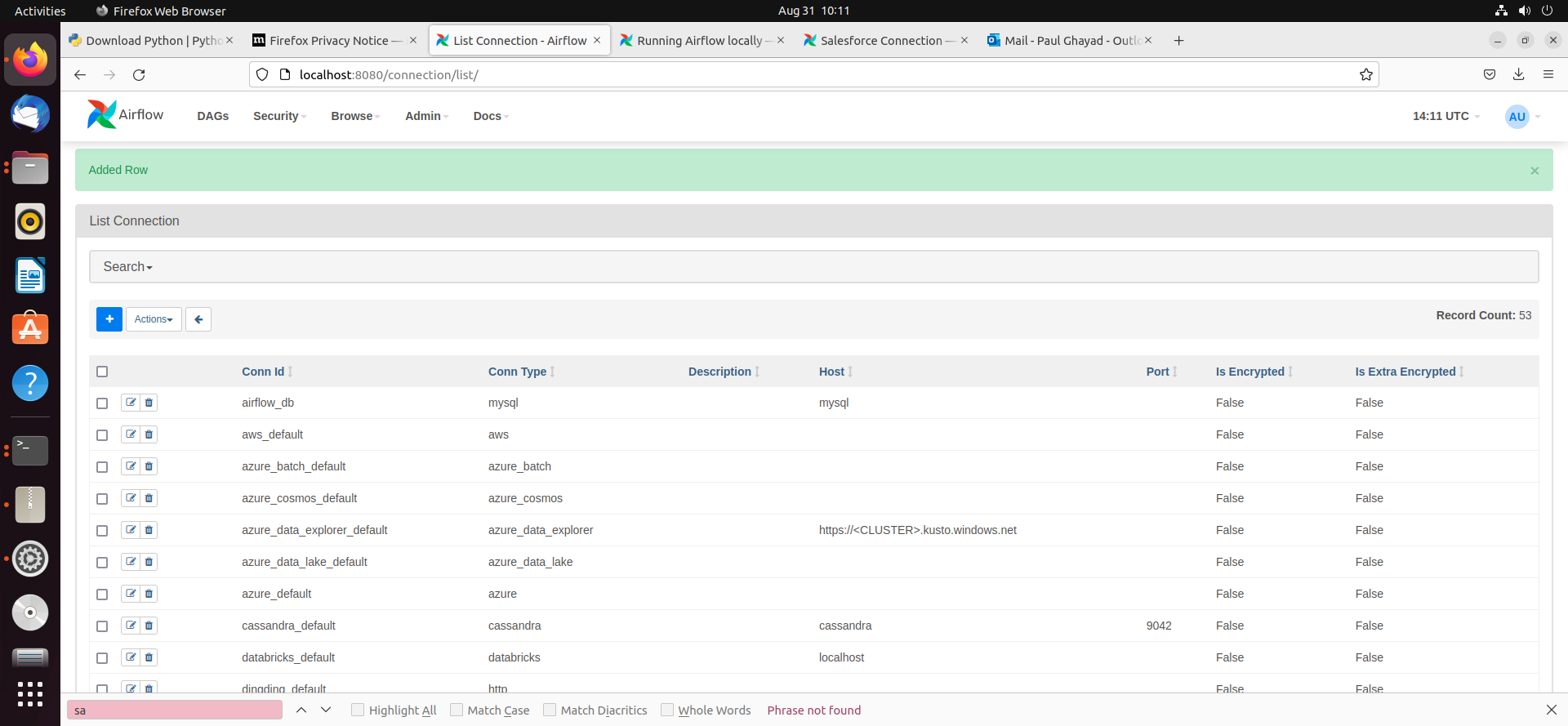

- After saving the new connection, on a new screen, you should see a green banner saying that a new row was added to the list of connections:

![New connection added]()

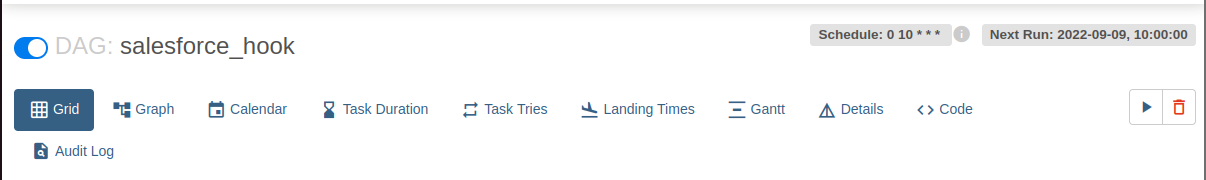
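The same connection can, in principle, be supplied without the UI through an `AIRFLOW_CONN_<CONN_ID>` environment variable holding a JSON document, a format supported by recent Airflow 2 releases. The sketch below only builds that variable's name and value. The `extra` keys `driver_class` and `driver_path` are assumptions based on recent versions of the JDBC provider, which may also need configuration options enabled before it honors them, so check the documentation for your installed version. The helper name `jdbc_connection_env` is hypothetical.

```python
import json


def jdbc_connection_env(conn_id: str, jdbc_url: str, driver_class: str, driver_path: str) -> tuple[str, str]:
    """Return the (name, value) pair of an Airflow connection environment variable."""
    name = "AIRFLOW_CONN_" + conn_id.upper()
    value = json.dumps(
        {
            "conn_type": "jdbc",
            # For JDBC connections, the host field carries the JDBC URL.
            "host": jdbc_url,
            # Assumed key names; verify against your JDBC provider version.
            "extra": {"driver_class": driver_class, "driver_path": driver_path},
        }
    )
    return name, value


env_name, env_value = jdbc_connection_env(
    "domino_jdbc",
    "jdbc:domino:RTK=5246...;Server=https://domino.corp.com;AuthScheme=OAuthPassword;User=my_domino_user;Password=my_domino_password;",
    "cdata.jdbc.domino.DominoDriver",
    "PATH/TO/cdata.jdbc.domino.jar",
)
print(env_name)
print(env_value)
```

Connections defined this way are not stored in the metadata database, so they typically do not appear in the Connections list of the UI.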

Creating a DAG

A DAG (directed acyclic graph) in Airflow defines the tasks that make up a workflow and can be triggered to run that workflow. Our workflow simply runs a SQL query against HCL Domino data and stores the results in a CSV file.

- To get started, locate the "airflow" folder in your Home directory. Within it, create a new directory titled "dags". This directory stores the Python files that Airflow converts into the DAGs shown in the UI.

- Next, create a new Python file and title it hcl_domino_hook.py. Insert the following code inside of this new file:

    from datetime import datetime

    from airflow.decorators import dag, task
    from airflow.providers.jdbc.hooks.jdbc import JdbcHook
    import pandas as pd  # pandas must be installed for hook.get_pandas_df


    # Declare the DAG: scheduled daily at 10:00, without backfilling past runs
    @dag(
        dag_id="hcl_domino_hook",
        schedule_interval="0 10 * * *",
        start_date=datetime(2022, 2, 15),
        catchup=False,
        tags=["load_csv"],
    )
    def extract_and_load():
        # Define the single task of this DAG
        @task()
        def jdbc_extract():
            try:
                # Use the Connection Id created in the Airflow UI
                hook = JdbcHook(jdbc_conn_id="domino_jdbc")
                sql = """ select * from Account """
                df = hook.get_pandas_df(sql)
                # quoting=1 is csv.QUOTE_ALL; the header row is not written
                df.to_csv("/{some_file_path}/{name_of_csv}.csv", header=False, index=False, quoting=1)
                print(df)
                tbl_dict = df.to_dict("dict")
                return tbl_dict
            except Exception as e:
                print("Data extract error: " + str(e))
                raise  # re-raise so that Airflow marks the task as failed

        jdbc_extract()


    domino_extract_and_load = extract_and_load()

- Save this file and refresh your Airflow instance. Within the list of DAGs, you should see a new DAG titled "hcl_domino_hook".

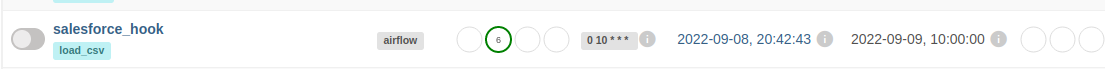

![New DAG added]()

- Click on this DAG and, on the new screen, click the unpause switch so that it turns blue, and then click the trigger (i.e. play) button to run the DAG. This executes the SQL query in our DAG file and exports the results as a CSV to the file path we designated in our code.

![Run the DAG]()

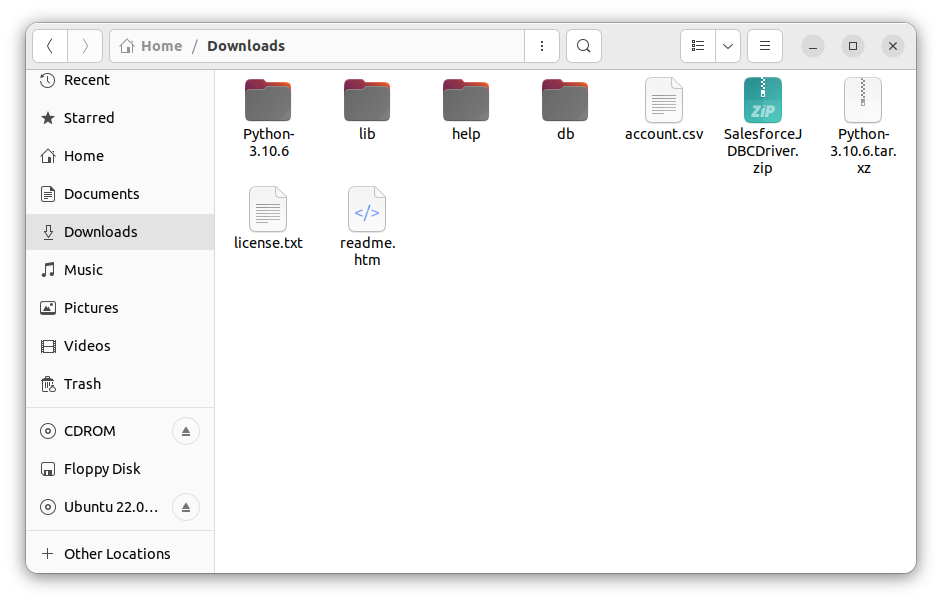

- After triggering our new DAG, check the Downloads folder (or whichever folder you chose in your Python script) to see that the CSV file has been created; in this case, account.csv.

![CSV created]()

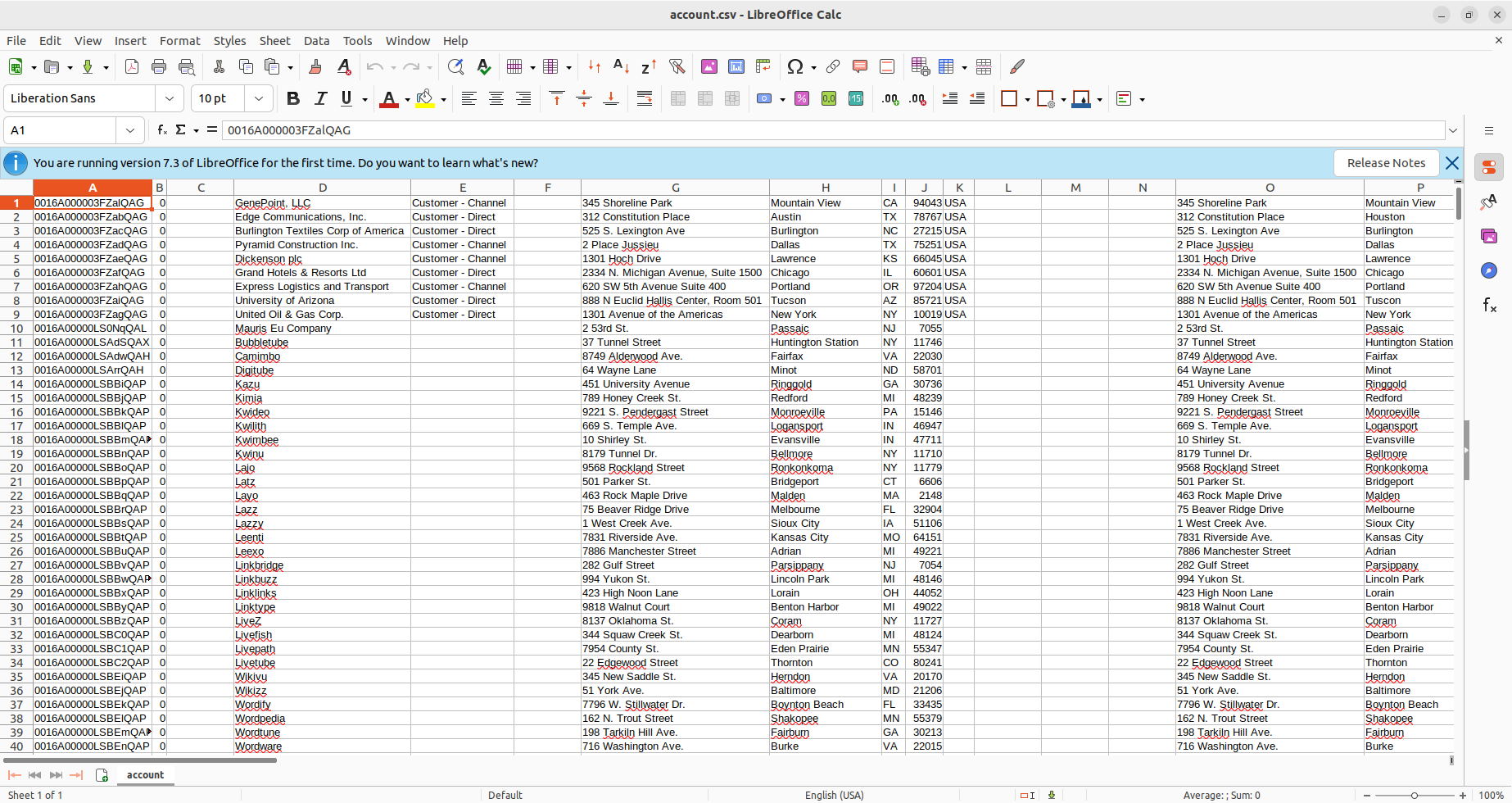

- Open the CSV file to see that your HCL Domino data is now available for use in CSV format thanks to Apache Airflow.

![CSV file with HCL Domino data.]()
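To inspect the export without opening it by hand, a short script can read the first few rows. This is a sketch that assumes the file was written as in the DAG code, with every field quoted and no header row. The helper name `preview_csv` is hypothetical, and the two sample rows are invented stand-ins written to a temporary file so that the snippet runs on its own; point it at your real CSV path instead.

```python
import csv
import tempfile
from itertools import islice
from pathlib import Path


def preview_csv(path, limit=5):
    """Return up to `limit` rows from a header-less CSV file as lists of strings."""
    with open(path, newline="", encoding="utf-8") as handle:
        return list(islice(csv.reader(handle), limit))


# Stand-in for the exported file: fully quoted fields, no header row.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "account.csv"
    sample.write_text('"1","Acme"\n"2","Globex"\n', encoding="utf-8")
    rows = preview_csv(sample)

print(rows)  # [['1', 'Acme'], ['2', 'Globex']]
```

Because the header row is not written, columns are identified by position only, and all values come back as strings.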