Build Azure Data Lake Storage-Powered Applications in GitHub Copilot with CData Code Assist MCP

GitHub Copilot is an AI-powered coding assistant that integrates directly into Visual Studio Code and other IDEs. With support for MCP, GitHub Copilot can connect to local tools and enterprise data sources, enabling natural language interaction with live systems during development.

Model Context Protocol (MCP) is an open standard for connecting LLM clients to external services through structured tool interfaces. MCP servers expose capabilities such as schema discovery and live querying, allowing AI agents to retrieve and reason over real-time data safely and consistently.

In this article, we guide you through installing the CData Code Assist MCP for Azure Data Lake Storage, configuring the connection to Azure Data Lake Storage, connecting the Code Assist MCP add-on to GitHub Copilot, and querying live Azure Data Lake Storage data from within Visual Studio Code.

Prerequisites

- Visual Studio Code is installed on your machine

- GitHub Copilot Chat extension is enabled in Visual Studio Code

- The CData Code Assist MCP for Azure Data Lake Storage installer (see Step 1)

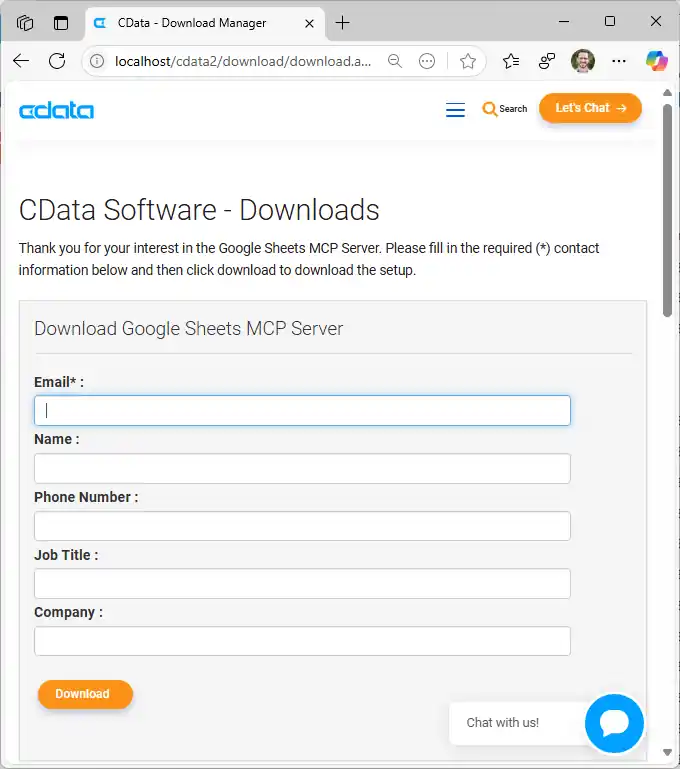

Step 1: Download and install the CData Code Assist MCP for Azure Data Lake Storage

- To begin, download the CData Code Assist MCP for Azure Data Lake Storage

- Find and double-click the installer to begin the installation

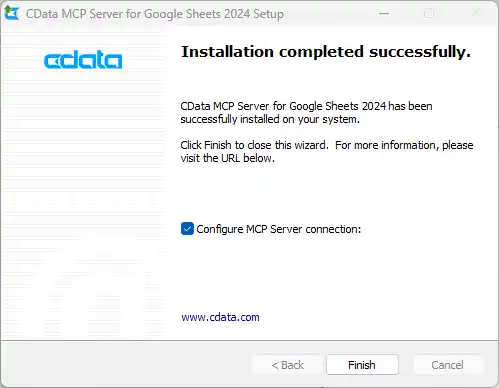

- Run the installer and follow the prompts to complete the installation

When the installation is complete, you are ready to configure your Code Assist MCP add-on by connecting to Azure Data Lake Storage.

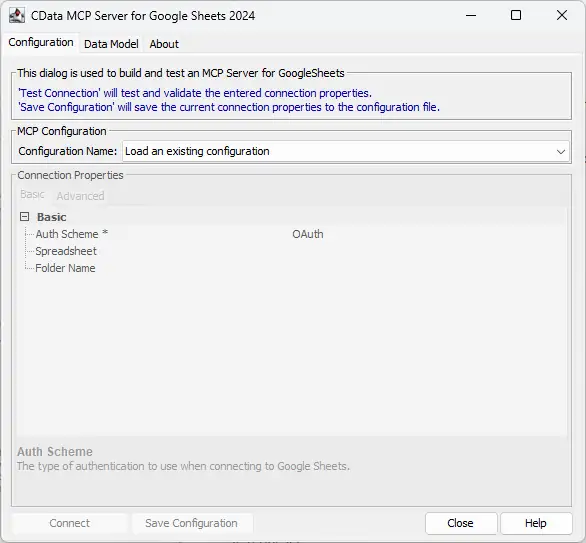

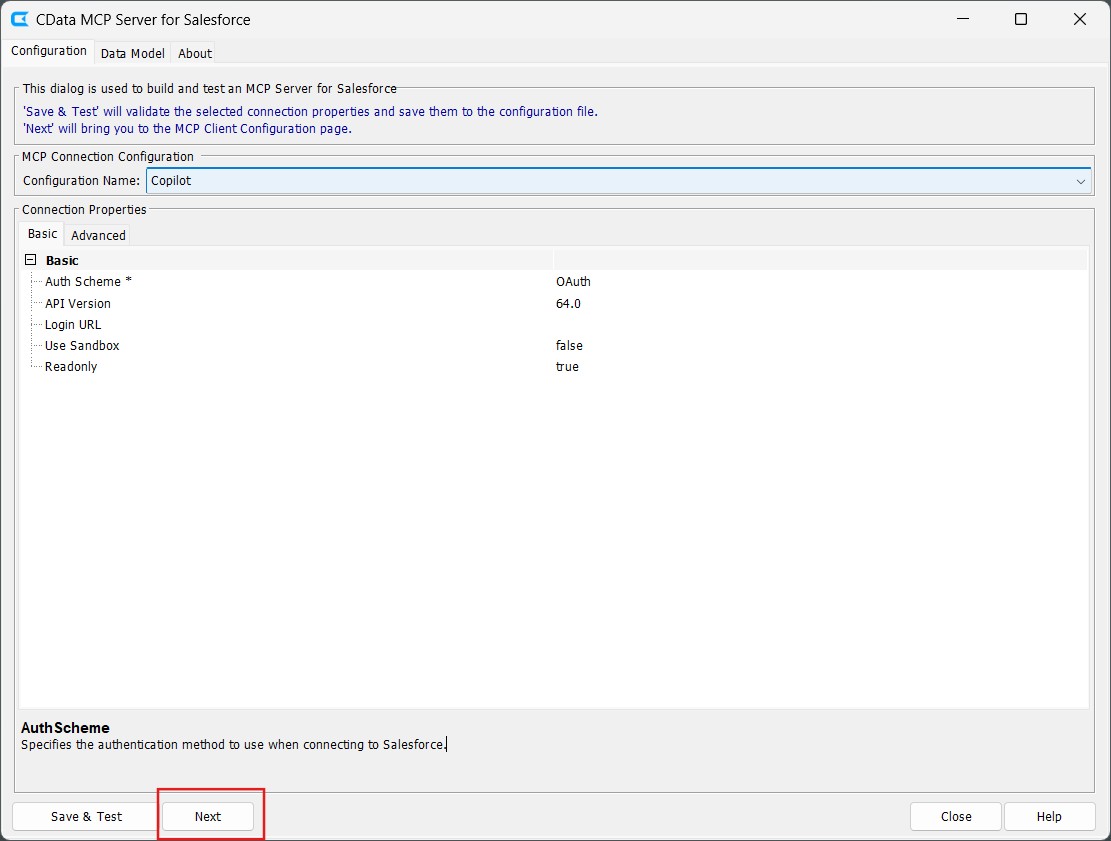

Step 2: Configure the connection to Azure Data Lake Storage

- After installation, open the CData Code Assist MCP for Azure Data Lake Storage configuration wizard

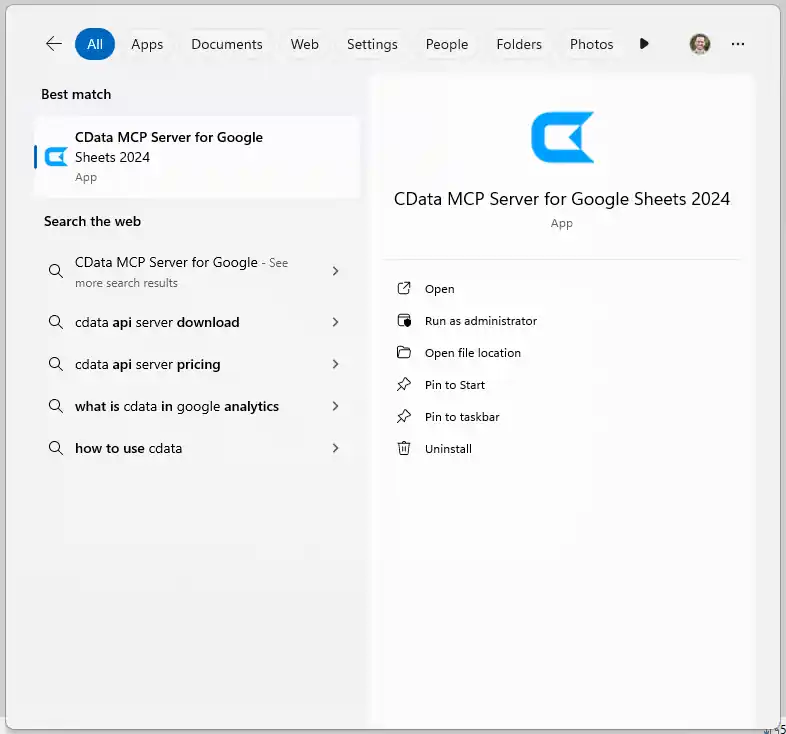

NOTE: If the wizard does not open automatically, search for "CData Code Assist MCP for Azure Data Lake Storage" in the Windows search bar and open the application.

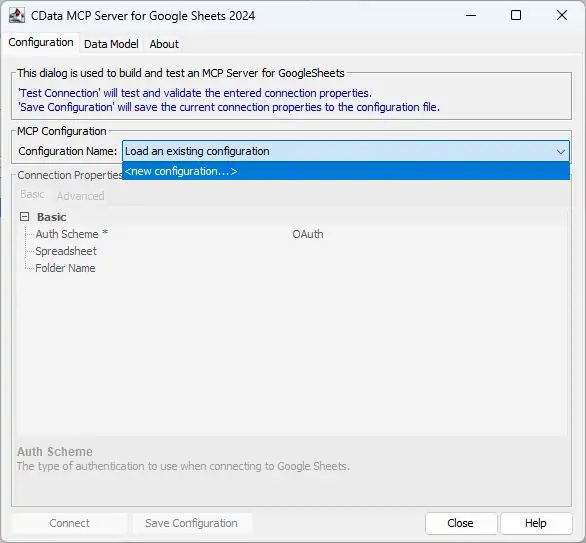

- In MCP Configuration > Configuration Name, either select an existing configuration or choose the option to create a new one

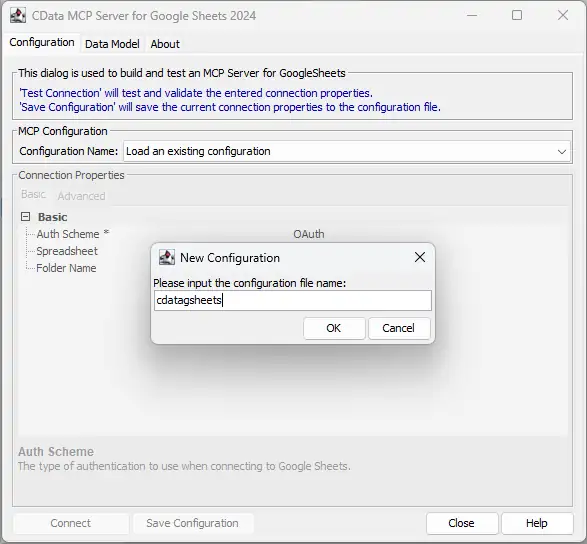

- Name the configuration (e.g. "cdata_adls") and click OK

-

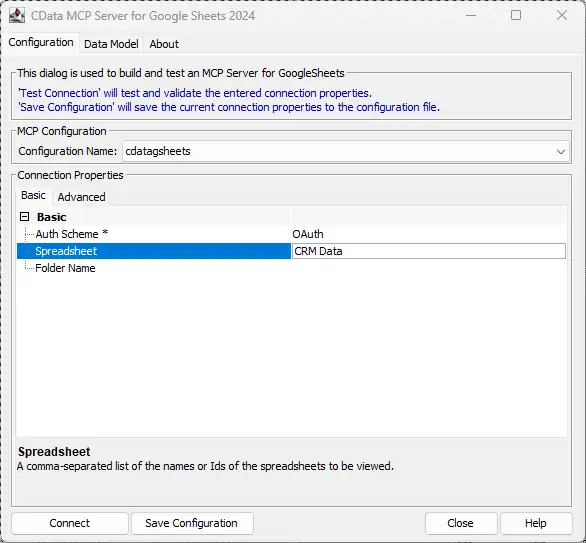

Enter the appropriate connection properties in the configuration wizard

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Entra ID (formerly Azure AD) for authentication. This requires an Active Directory web application registered in your Entra ID tenant, so create one before you continue.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application (client) ID of the app you created.

- OAuthClientSecret: Set this to the client secret generated for the app you created.

- TenantId: Set this to your Entra ID tenant ID. See the TenantId property in the connector documentation for how to find this value.

- Directory (optional): Set this to the path which will be used to store the replicated file. If not specified, the root directory is used.
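The Gen 1 properties fit together in an OAuth 2.0 client-credentials token request. The minimal Python sketch below builds that request from TenantId, OAuthClientId, and OAuthClientSecret but does not send it. It assumes the Entra ID v1 token endpoint and the Data Lake Storage Gen1 resource URI https://datalake.azure.net/. The connector acquires tokens itself, so this is only an illustration, and build_token_request is a hypothetical helper name.

```python
# Illustrative sketch only: the CData connector acquires tokens itself.
# Assumptions: Entra ID v1 token endpoint and the ADLS Gen1 resource URI.
from urllib.parse import urlencode
from urllib.request import Request

TOKEN_URL = "https://login.microsoftonline.com/{tenant}/oauth2/token"
ADLS_GEN1_RESOURCE = "https://datalake.azure.net/"


def build_token_request(tenant_id: str, client_id: str, client_secret: str) -> Request:
    """Build (but do not send) a client-credentials token request.

    tenant_id     maps to TenantId
    client_id     maps to OAuthClientId
    client_secret maps to OAuthClientSecret
    """
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "resource": ADLS_GEN1_RESOURCE,
    }).encode("ascii")
    return Request(
        TOKEN_URL.format(tenant=tenant_id),
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder values; substitute your own app registration details.
    req = build_token_request("<tenant-id>", "<client-id>", "<client-secret>")
    print(req.get_method(), req.full_url)
```

If the app registration is valid, sending this request with urllib.request.urlopen should return a JSON body containing an access_token. Keep the client secret out of source control.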

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key used to authenticate calls to the API. See the AccessKey property in the connector documentation for how to acquire it.

- Directory (optional): Set this to the path which will be used to store the replicated file. If not specified, the root directory is used.
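Before clicking Connect, you can confirm that every required property for the chosen Schema has a value. The Python sketch below encodes the two property lists above. The helper names and the Name=Value; output format are assumptions for illustration: the format resembles CData-style connection strings, but the wizard stores these values for you.

```python
# Hypothetical pre-flight check; the configuration wizard does its own validation.
from typing import Dict, List

# Required properties per Schema value, as listed in this article.
# Directory is optional for both schemas and is therefore not listed.
REQUIRED_PROPERTIES: Dict[str, List[str]] = {
    "ADLSGen1": ["Account", "OAuthClientId", "OAuthClientSecret", "TenantId"],
    "ADLSGen2": ["Account", "FileSystem", "AccessKey"],
}


def missing_properties(props: Dict[str, str]) -> List[str]:
    """Return required properties that are absent or empty for props["Schema"]."""
    schema = props.get("Schema", "")
    if schema not in REQUIRED_PROPERTIES:
        raise ValueError(f"Unknown Schema {schema!r}; expected ADLSGen1 or ADLSGen2")
    return [name for name in REQUIRED_PROPERTIES[schema] if not props.get(name)]


def to_connection_string(props: Dict[str, str]) -> str:
    """Join properties as Name=Value; pairs, refusing incomplete input.

    Values containing ';' would need quoting, which this sketch does not handle.
    """
    missing = missing_properties(props)
    if missing:
        raise ValueError("Missing required properties: " + ", ".join(missing))
    return "".join(f"{name}={value};" for name, value in props.items())


if __name__ == "__main__":
    gen2 = {
        "Schema": "ADLSGen2",
        "Account": "myaccount",        # placeholder values
        "FileSystem": "myfilesystem",
        "AccessKey": "<access-key>",
    }
    # prints Schema=ADLSGen2;Account=myaccount;FileSystem=myfilesystem;AccessKey=<access-key>;
    print(to_connection_string(gen2))
```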

- Click Connect to authenticate with Azure Data Lake Storage

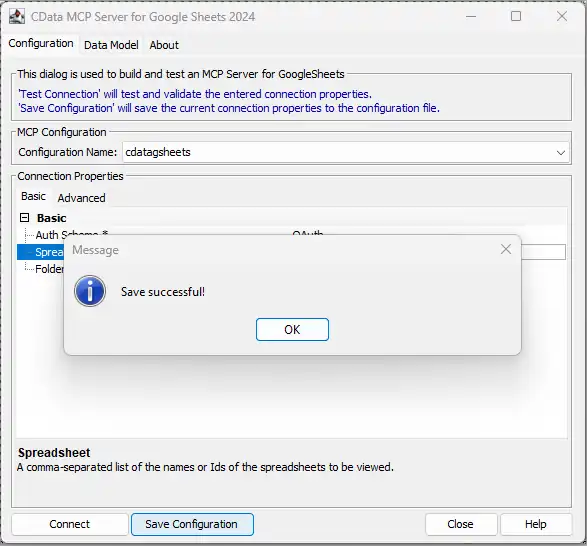

- Click Save & Test to finalize the connection

This process creates a .mcp configuration file that GitHub Copilot will reference when launching the Code Assist MCP add-on. Now with your Code Assist MCP add-on configured, you are ready to connect it to GitHub Copilot.

Step 3: Connect the Code Assist MCP add-on to GitHub Copilot

- Download and install Visual Studio Code and enable the GitHub Copilot Chat extension

- Open or create the mcp.json file:

- For global configuration: %APPDATA%\Code\User\mcp.json

- For project-specific configuration: .vscode/mcp.json in your workspace folder

- Add the JSON code shown below and save the file

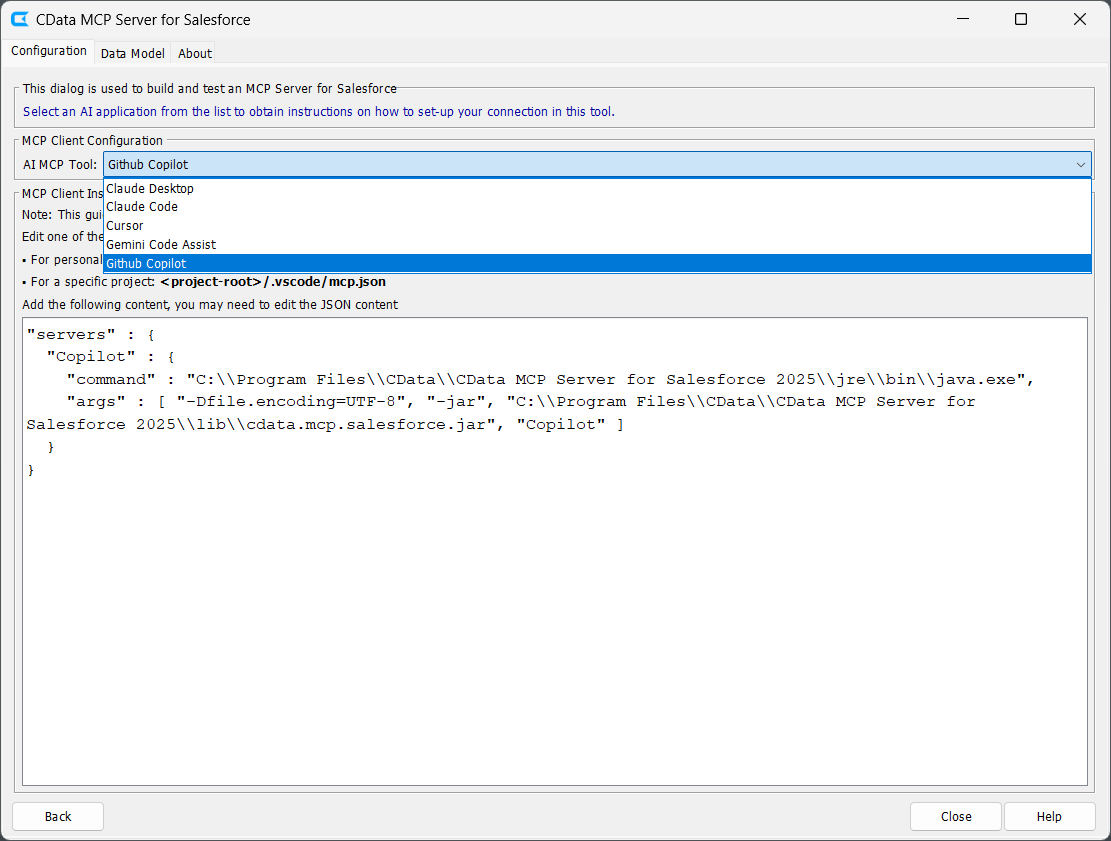

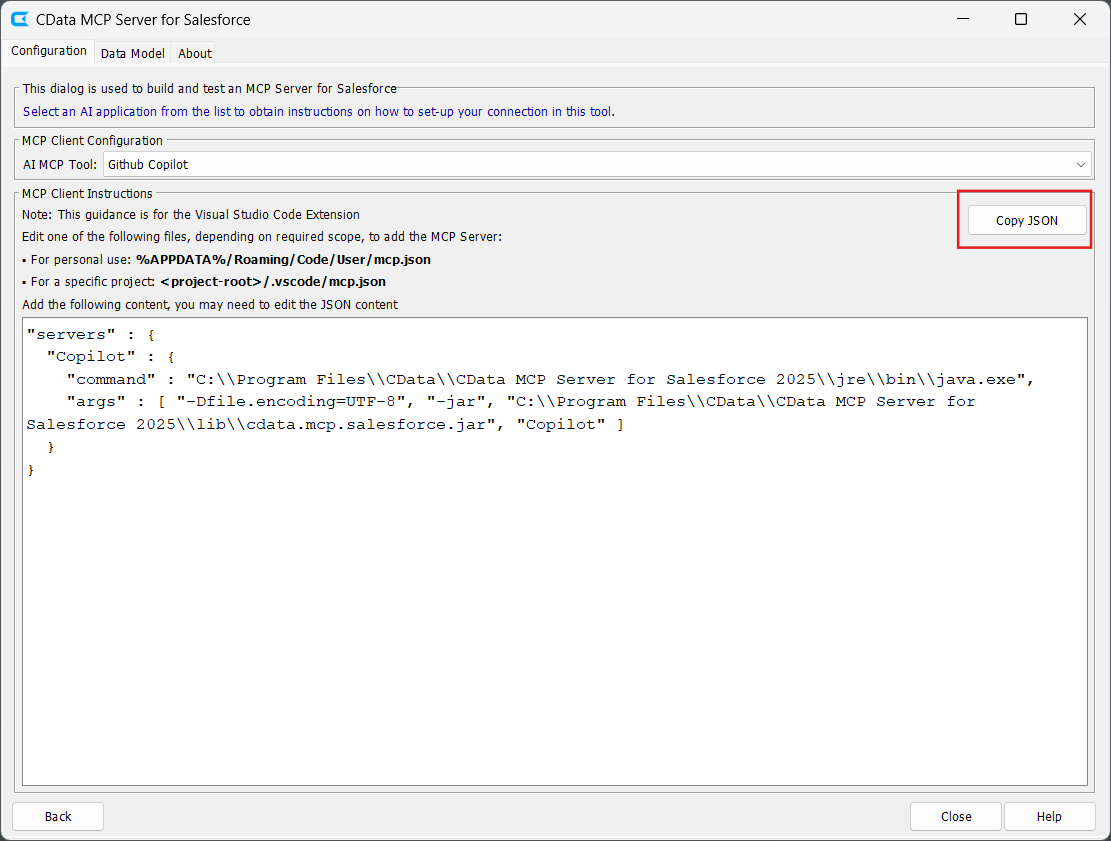

- After saving and testing your connection in the configuration wizard, click Next

- Select GitHub Copilot from the AI MCP Tool dropdown

- Follow the MCP Client Instructions to create the required configuration file

- Copy the displayed JSON code and paste it into your configuration file
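If you prefer to script the mcp.json step, the Python sketch below resolves the global or project-specific file location listed above. It then merges in the server entry shown in Option 1 below. The sketch assumes the default Windows install directory and the configuration name cdata_adls. mcp_json_path, server_entry, and add_server are hypothetical helper names. Letting json.dumps write the file also takes care of escaping the backslashes in Windows paths.

```python
# Sketch: merge a cdata_adls server entry into a VS Code mcp.json file.
# Assumptions: default Windows install path and configuration name "cdata_adls".
import json
import os
from pathlib import Path
from typing import Optional

INSTALL_DIR = r"C:\Program Files\CData\CData Code Assist MCP for Azure Data Lake Storage"


def mcp_json_path(workspace: Optional[Path] = None) -> Path:
    """Project-level file if a workspace is given, else the global (Windows) file."""
    if workspace is not None:
        return workspace / ".vscode" / "mcp.json"
    # %APPDATA% already points at ...\AppData\Roaming on Windows.
    return Path(os.environ["APPDATA"]) / "Code" / "User" / "mcp.json"


def server_entry(config_name: str, install_dir: str = INSTALL_DIR) -> dict:
    """Build the entry from Option 1; the last argument is the configuration name."""
    return {
        "command": install_dir + r"\jre\bin\java.exe",
        "args": [
            "-Dfile.encoding=UTF-8",
            "-jar",
            install_dir + r"\lib\cdata.mcp.adls.jar",
            config_name,
        ],
    }


def add_server(path: Path, config_name: str) -> dict:
    """Merge the entry into path (creating the file if needed), keeping other servers."""
    config = json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
    config.setdefault("servers", {})[config_name] = server_entry(config_name)
    path.parent.mkdir(parents=True, exist_ok=True)
    # json.dumps writes each backslash as \\, so the file stays valid JSON.
    path.write_text(json.dumps(config, indent=2), encoding="utf-8")
    return config


if __name__ == "__main__":
    # Print the entry instead of writing it; call add_server(...) to update a file.
    print(json.dumps({"servers": {"cdata_adls": server_entry("cdata_adls")}}, indent=2))
```

For example, add_server(mcp_json_path(Path.cwd()), "cdata_adls") would update ./.vscode/mcp.json for the current project.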

Option 1: Manually add the MCP configuration

```json
{
  "servers": {
    "cdata_adls": {
      "command": "C:\\Program Files\\CData\\CData Code Assist MCP for Azure Data Lake Storage\\jre\\bin\\java.exe",
      "args": [
        "-Dfile.encoding=UTF-8",
        "-jar",
        "C:\\Program Files\\CData\\CData Code Assist MCP for Azure Data Lake Storage\\lib\\cdata.mcp.adls.jar",
        "cdata_adls"
      ]
    }
  }
}
```

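NOTE: The command value should point to a Java 17+ java.exe executable (the example uses the JRE bundled with the add-on), and the JAR path should point to the installed CData Code Assist MCP add-on .jar file. Because mcp.json is JSON, every backslash in a Windows path must be escaped as a double backslash (\\). The final argument must match the MCP configuration name you saved in the CData configuration wizard (e.g. "cdata_adls").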
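The rules in this note can be checked mechanically. The Python sketch below is a hypothetical checker, not part of CData's tooling. It parses mcp.json text and reports an invalid-JSON error, such as one caused by unescaped backslashes. It also reports a command that does not look like a java executable, a missing or misplaced .jar path, and a final argument that differs from the configuration name. It cannot verify that the JVM is actually Java 17 or later.

```python
# Hypothetical checker for the rules in the note above; not part of CData's tooling.
import json
from typing import List


def check_server(mcp_json_text: str, config_name: str) -> List[str]:
    """Return problems found in the named server entry (empty list if none)."""
    try:
        config = json.loads(mcp_json_text)
    except json.JSONDecodeError as exc:
        # A common cause is a Windows path written with single backslashes.
        return [f"invalid JSON: {exc.msg}"]

    server = config.get("servers", {}).get(config_name)
    if server is None:
        return [f"no server named {config_name!r} under 'servers'"]

    problems: List[str] = []
    # Only the file name can be checked here, not that the JVM is Java 17+.
    command = server.get("command", "")
    if not command.lower().endswith(("java.exe", "java")):
        problems.append("command should point to a java executable (Java 17+)")

    args = server.get("args", [])
    if "-jar" not in args:
        problems.append("args should contain -jar")
    else:
        jar_index = args.index("-jar") + 1
        if jar_index >= len(args) or not args[jar_index].lower().endswith(".jar"):
            problems.append("-jar should be followed by the add-on .jar path")

    if not args or args[-1] != config_name:
        problems.append(f"last argument should be the configuration name {config_name!r}")
    return problems


if __name__ == "__main__":
    # Unescaped backslashes, as in a naive copy of a Windows path
    broken = r'{"servers": {"cdata_adls": {"command": "C:\Program Files\java.exe"}}}'
    print(check_server(broken, "cdata_adls"))
```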

Option 2: Copy the MCP configuration from the CData Code Assist MCP for Azure Data Lake Storage UI

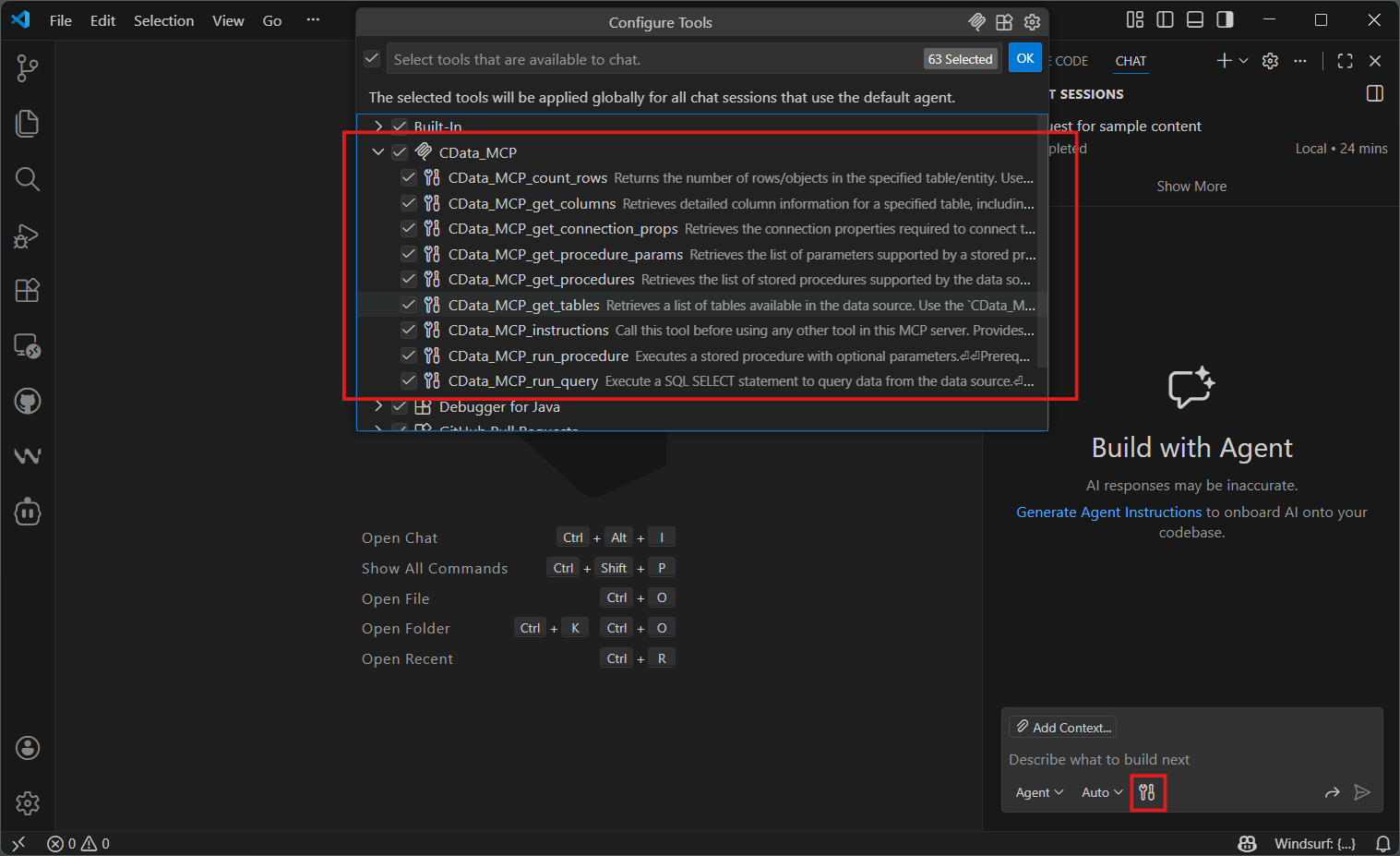

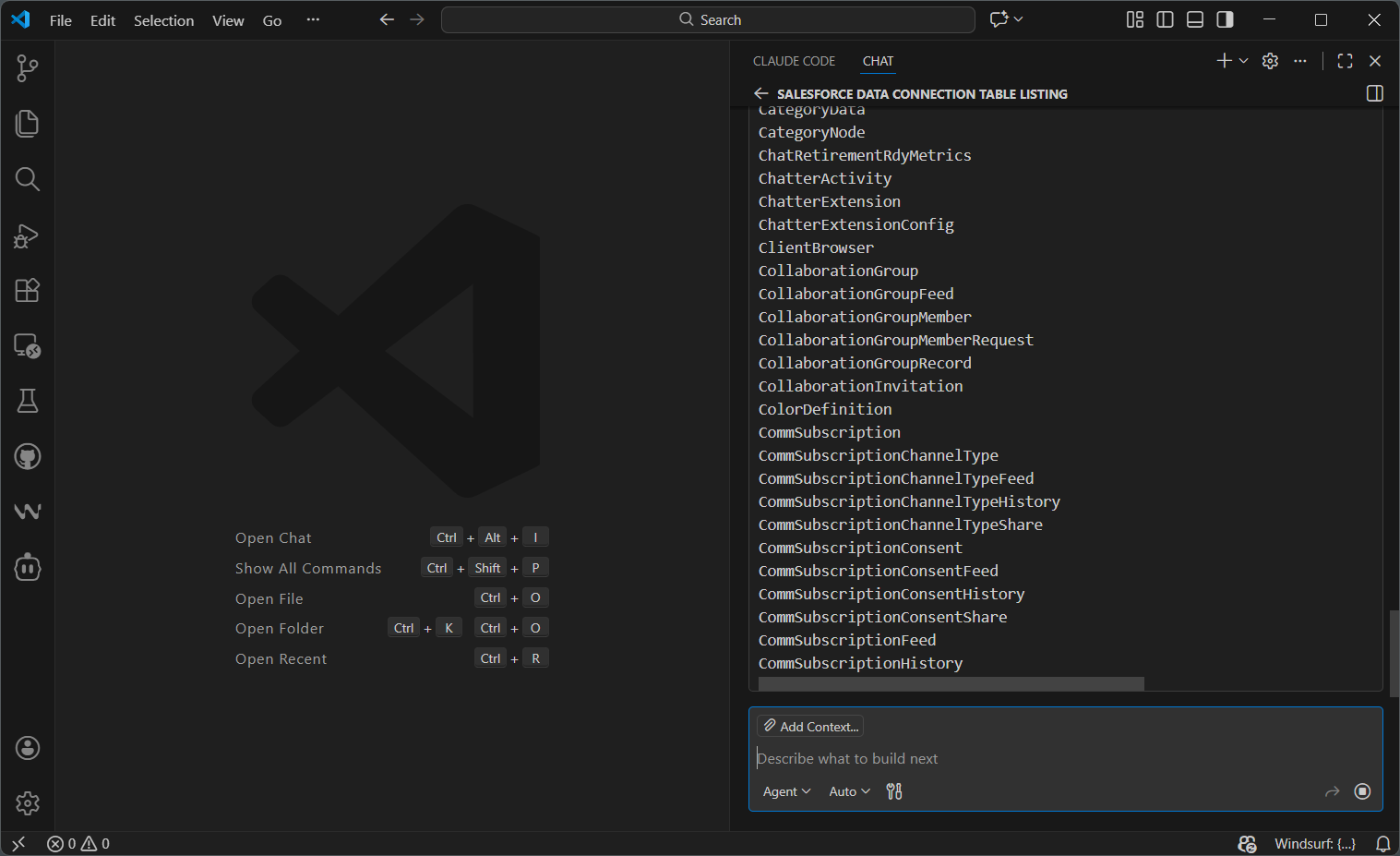

Step 4: Query live Azure Data Lake Storage data in GitHub Copilot

- Launch Visual Studio Code and open the GitHub Copilot Chat interface. Select the tool icon to enable the configured Code Assist MCP add-on

- Ask questions about your Azure Data Lake Storage data using natural language. For example:

"List all tables available in my Azure Data Lake Storage data connection."

- Start building with natural language prompts:

For my project, data from the Resources table is very important. Pull data from the most important columns, such as FullPath and Permission.

GitHub Copilot is now fully integrated with CData Code Assist MCP for Azure Data Lake Storage and can use the MCP tools to explore schemas and execute live queries against Azure Data Lake Storage.
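GitHub Copilot talks to the add-on using the Model Context Protocol over stdio. The client sends newline-delimited JSON-RPC 2.0 messages to the java process started from mcp.json. It first initializes the session, then discovers tools with tools/list and invokes them with tools/call. The Python sketch below only builds those messages; it does not launch the server. The protocol version string is one published MCP revision. The tool name run_query and its sql argument are hypothetical placeholders, because the real tool names come from the add-on's tools/list response.

```python
# Sketch of MCP stdio messages; tool name and arguments are hypothetical placeholders.
import json
from typing import Any, Dict, List, Optional


def request(msg_id: int, method: str, params: Optional[Dict[str, Any]] = None) -> str:
    """Encode a JSON-RPC 2.0 request as one newline-terminated line (stdio framing)."""
    msg: Dict[str, Any] = {"jsonrpc": "2.0", "id": msg_id, "method": method}
    if params is not None:
        msg["params"] = params
    # json.dumps without indent emits a single line; newlines inside strings are escaped.
    return json.dumps(msg) + "\n"


def notification(method: str) -> str:
    """Encode a JSON-RPC 2.0 notification (no id, so no response is expected)."""
    return json.dumps({"jsonrpc": "2.0", "method": method}) + "\n"


def session_messages(sql: str) -> List[str]:
    """Messages a client might send: handshake, tool discovery, then one tool call."""
    return [
        request(1, "initialize", {
            "protocolVersion": "2024-11-05",
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1"},
        }),
        notification("notifications/initialized"),
        request(2, "tools/list"),
        # "run_query" and "sql" stand in for whatever query tool the add-on advertises.
        request(3, "tools/call", {"name": "run_query", "arguments": {"sql": sql}}),
    ]


if __name__ == "__main__":
    for line in session_messages("SELECT FullPath, Permission FROM Resources"):
        print(line, end="")
```

Writing each line to the server's stdin and reading one response line per request id is how a client would drive this exchange. In practice, GitHub Copilot handles all of it once mcp.json is in place.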

Build with Code Assist MCP. Deploy with CData Drivers.

Download Code Assist MCP for free and give your AI tools schema-aware access to live Azure Data Lake Storage data during development. When you're ready to move to production, CData Azure Data Lake Storage Drivers deliver the same SQL-based access with enterprise-grade performance, security, and reliability.

Visit the CData Community to share insights, ask questions, and explore what's possible with MCP-powered AI workflows.