Use Agno to Talk to Your Databricks Data via CData Connect AI

Agno is a developer-first Python framework for building AI agents that reason, plan, and take actions using tools. Agno emphasizes a clean, code-driven architecture where the agent runtime remains fully under developer control.

CData Connect AI provides a secure cloud-to-cloud interface for integrating 300+ enterprise data sources with AI systems. Using Connect AI, live Databricks data can be exposed through a remote MCP endpoint without replication.

In this guide, we build a production-ready Agno agent using the Agno Python SDK. The agent connects to CData Connect AI via MCP using streamable HTTP, dynamically discovers available tools, and invokes them to query live Databricks data.

Prerequisites

- Python 3.9+.

- A CData Connect AI account – Sign up or log in here.

- An active Databricks account with valid credentials.

- An LLM API key (for example, OpenAI).

Overview

Here is a high-level overview of the process:

- Connect: Configure a Databricks connection in CData Connect AI.

- Discover: Use MCP to dynamically retrieve tools exposed by CData Connect AI.

- Query: Wrap MCP tools as Agno functions and query live Databricks data.

About Databricks Data Integration

Accessing and integrating live data from Databricks has never been easier with CData. Customers rely on CData connectivity to:

- Access all versions of Databricks from Runtime Versions 9.1 - 13.X to both the Pro and Classic Databricks SQL versions.

- Leave Databricks in their preferred environment thanks to compatibility with any hosting solution.

- Securely authenticate in a variety of ways, including personal access token, Azure Service Principal, and Azure AD.

- Upload data to Databricks using Databricks File System, Azure Blob Storage, and AWS S3 Storage.

While many customers use CData's solutions to migrate data from different systems into their Databricks data lakehouse, several use our live connectivity solutions to federate connectivity between their databases and Databricks. These customers use SQL Server Linked Servers or PolyBase to get live access to Databricks from within their existing RDBMS.

Read more about common Databricks use-cases and how CData's solutions help solve data problems in our blog: What is Databricks Used For? 6 Use Cases.

Getting Started

Step 1: Configure Databricks in CData Connect AI

To enable Agno to query live Databricks data, first create a Databricks connection in CData Connect AI. This connection is exposed through the CData Remote MCP Server.

-

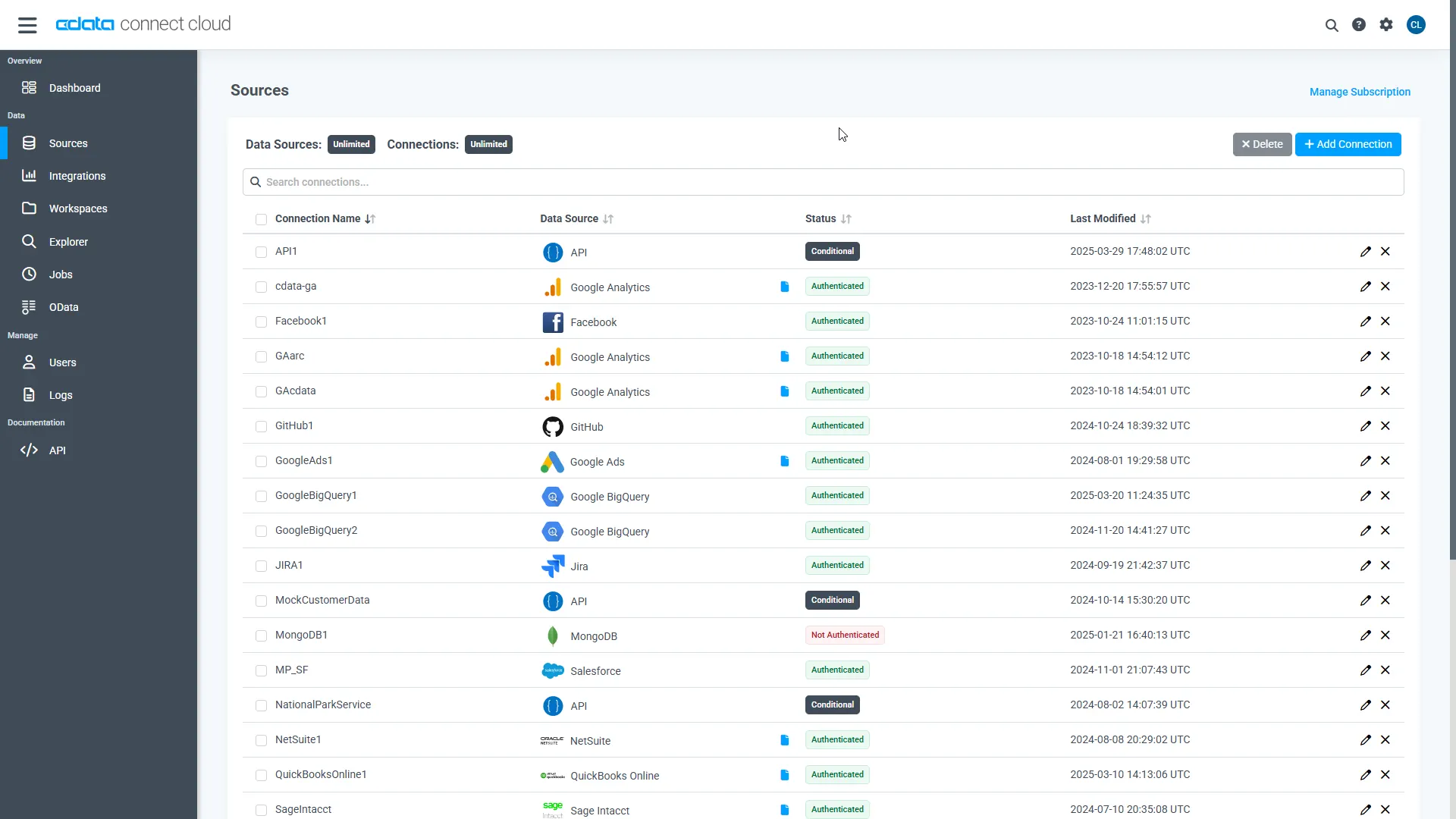

Log into Connect AI, click Sources, and then click

Add Connection.

-

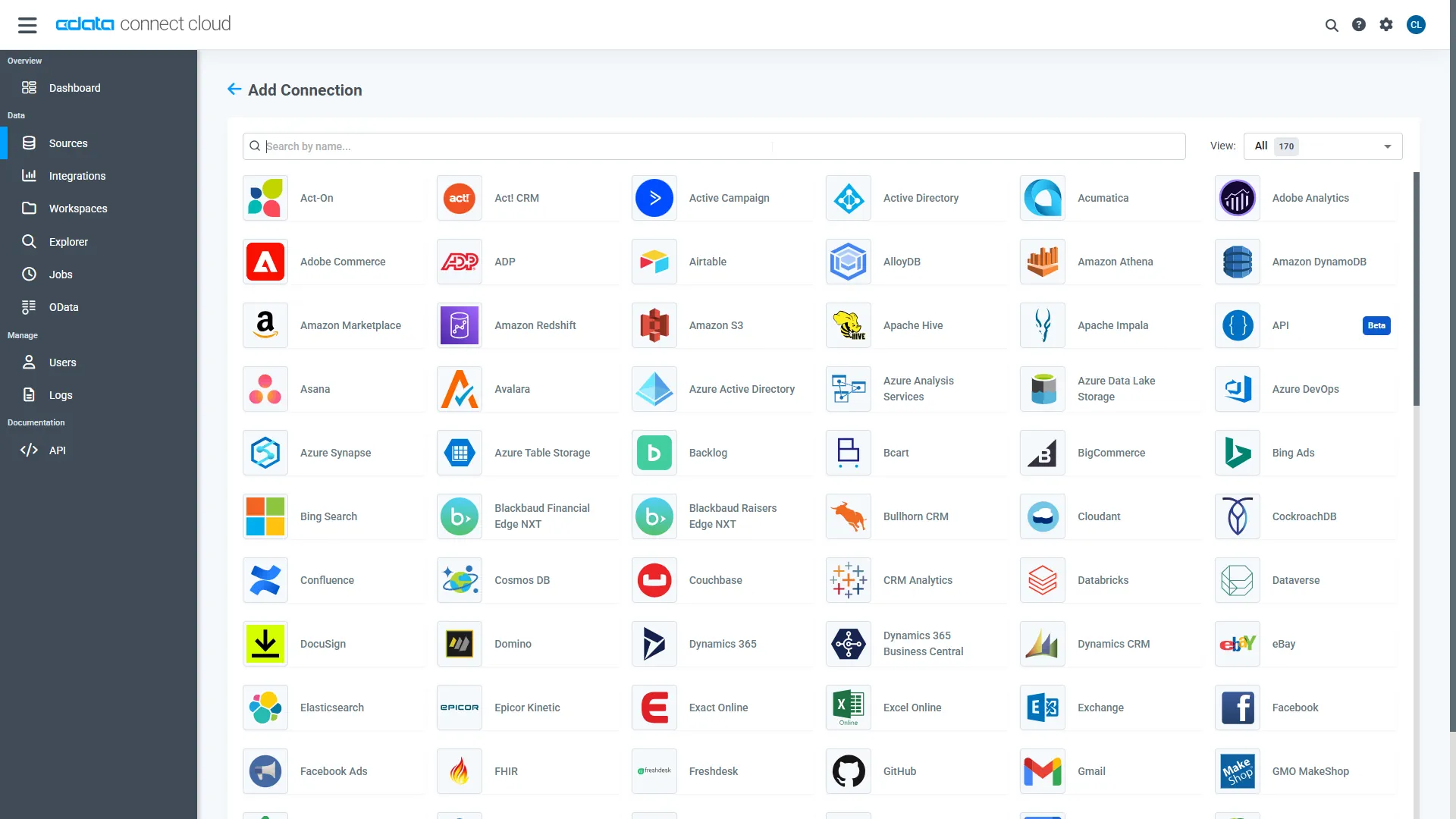

Select "Databricks" from the Add Connection panel.

-

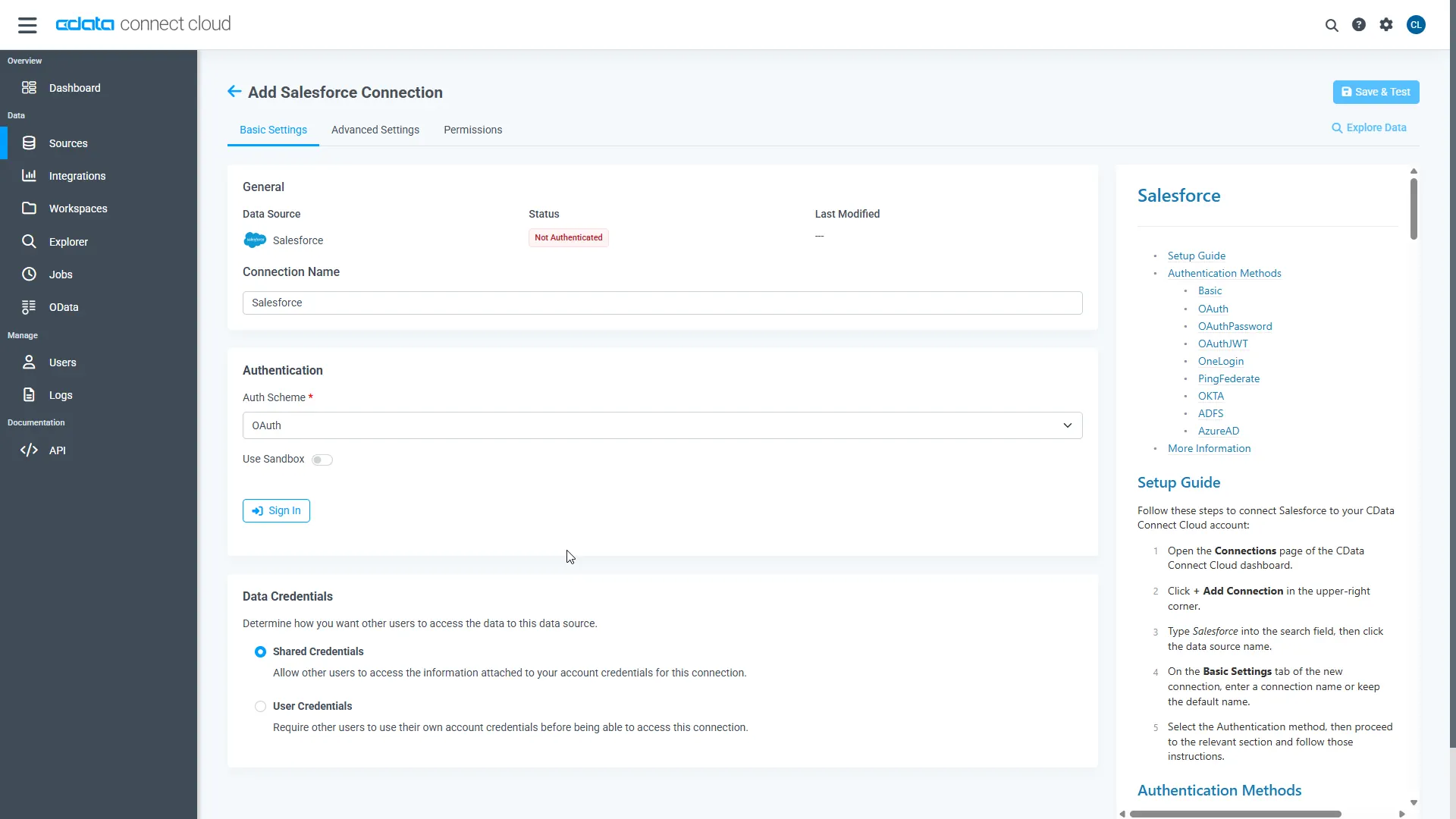

Enter the required authentication properties.

To connect to a Databricks cluster, set the properties as described below.

Note: To find the required values in your Databricks instance, navigate to Clusters, select the desired cluster, and open the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).

Click Create & Test.

-

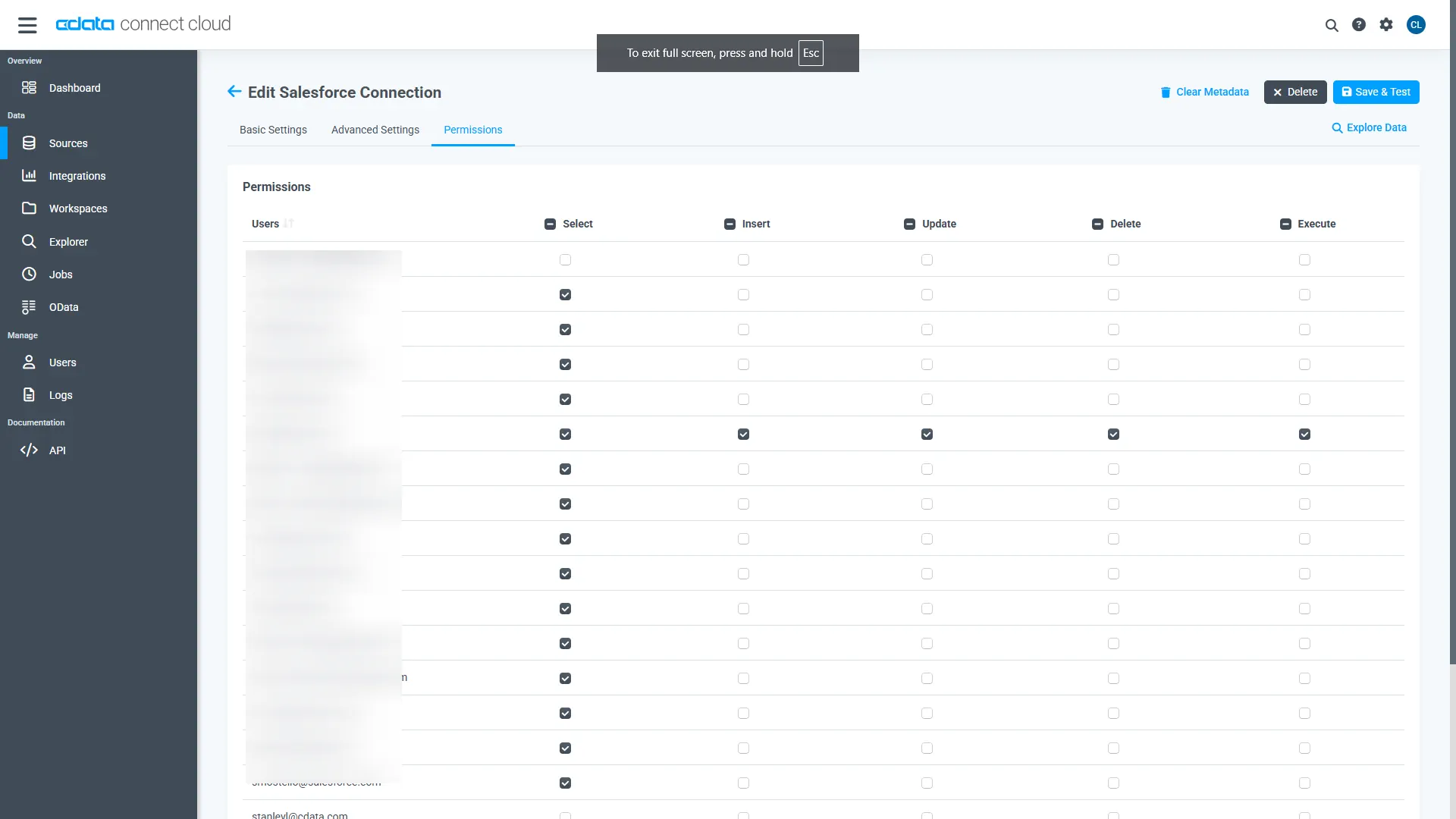

Open the Permissions tab and configure user access.

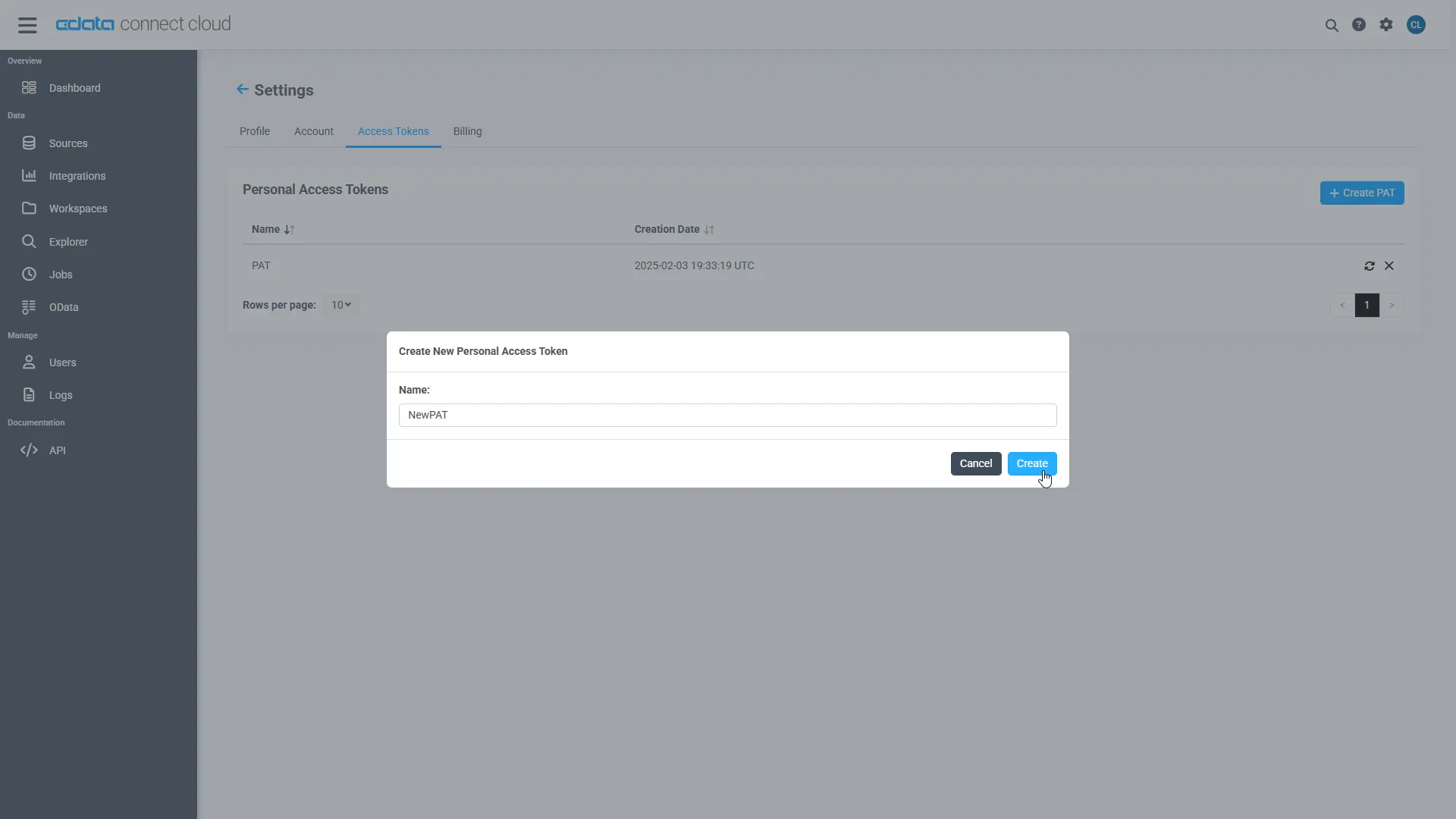

Add a Personal Access Token

A Personal Access Token (PAT) authenticates MCP requests from Agno to CData Connect AI.

- Open Settings and navigate to Access Tokens.

- Click Create PAT.

- Save the generated token securely.

Step 2: Install dependencies and configure environment variables

Install Agno and the MCP adapter dependencies. LangChain is included strictly for MCP tool compatibility.

pip install agno agno-mcp langchain-mcp-adapters

Configure environment variables:

    export CDATA_MCP_URL="https://mcp.cloud.cdata.com/mcp"
    export CDATA_MCP_AUTH="Base64EncodedCredentials"
    export OPENAI_API_KEY="your-openai-key"

Here, "Base64EncodedCredentials" is your Connect AI user email and your Personal Access Token joined by a colon (":") and then Base64-encoded: Base64(MY_EMAIL:MY_CONNECT_AI_PAT)
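The encoding rule above can be sketched in a few lines of Python using only the standard library. The function name `encode_basic_credentials` and the sample email and token are placeholders chosen for illustration; they are not part of Connect AI or Agno.

```python
import base64


def encode_basic_credentials(email: str, pat: str) -> str:
    """Return Base64("email:pat") as an ASCII string.

    The result is the value expected in CDATA_MCP_AUTH, i.e. the part
    that follows "Basic " in the Authorization header.
    """
    raw = f"{email}:{pat}".encode("utf-8")
    return base64.b64encode(raw).decode("ascii")


if __name__ == "__main__":
    # Placeholder values for illustration only; substitute your own.
    print(encode_basic_credentials("user@example.com", "MY_CONNECT_AI_PAT"))
```

The printed string can then be exported as CDATA_MCP_AUTH. Avoid committing the encoded value to source control, since Base64 is an encoding and not encryption.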

Step 3: Connect to CData Connect AI via MCP

Create an MCP client using streamable HTTP. This establishes a secure connection to CData Connect AI.

    import os

    from langchain_mcp_adapters.client import MultiServerMCPClient

    # One named connection ("default") that points at the Connect AI MCP endpoint.
    mcp_client = MultiServerMCPClient(
        connections={
            "default": {
                "transport": "streamable_http",
                "url": os.environ["CDATA_MCP_URL"],
                "headers": {
                    "Authorization": f"Basic {os.environ['CDATA_MCP_AUTH']}"
                }
            }
        }
    )
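Because the client reads its settings straight from the environment, a missing variable surfaces as a bare `KeyError`. One possible way to fail with a clearer message is to build the connection dictionary in a small helper first. This is a sketch only: `build_connection_config` and `REQUIRED_VARS` are hypothetical names introduced here, and the returned dictionary simply mirrors the structure shown above.

```python
import os

# Environment variables the MCP connection depends on (from Step 2).
REQUIRED_VARS = ("CDATA_MCP_URL", "CDATA_MCP_AUTH")


def build_connection_config(env=None) -> dict:
    """Build the connections mapping, raising a readable error if a variable is unset."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {
        "default": {
            "transport": "streamable_http",
            "url": env["CDATA_MCP_URL"],
            "headers": {"Authorization": f"Basic {env['CDATA_MCP_AUTH']}"},
        }
    }


if __name__ == "__main__":
    # Explicit mapping used here so the example runs without real credentials.
    sample_env = {
        "CDATA_MCP_URL": "https://mcp.cloud.cdata.com/mcp",
        "CDATA_MCP_AUTH": "Base64EncodedCredentials",
    }
    print(build_connection_config(sample_env)["default"]["url"])
```

The returned dictionary could then be passed as the `connections` argument in place of the inline literal.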

Step 4: Discover MCP tools

CData Connect AI exposes operations as MCP tools. These are retrieved dynamically at runtime.

    # Run this inside an async function (or a notebook that supports top-level await).
    langchain_tools = await mcp_client.get_tools()
    for tool in langchain_tools:
        print(tool.name)
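For context, MCP tool discovery is a JSON-RPC 2.0 exchange: the client sends a `tools/list` request and the server answers with a list of tool descriptors. The sketch below only builds the request body and parses a response; it does not contact Connect AI. The helper names and the `SAMPLE_RESPONSE` payload (including the tool name `example_tool`) are made up for illustration and do not reflect the tools Connect AI actually returns.

```python
import json


def tools_list_request(request_id: int = 1) -> str:
    """Serialize a JSON-RPC 2.0 request for the MCP tools/list method."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": "tools/list"})


def tool_names(response_text: str) -> list:
    """Extract tool names from a tools/list JSON-RPC response body."""
    payload = json.loads(response_text)
    return [tool["name"] for tool in payload["result"]["tools"]]


# Illustrative response shape only; the tool listed here is hypothetical.
SAMPLE_RESPONSE = json.dumps(
    {
        "jsonrpc": "2.0",
        "id": 1,
        "result": {
            "tools": [
                {
                    "name": "example_tool",
                    "description": "Hypothetical tool used for illustration.",
                    "inputSchema": {"type": "object", "properties": {}},
                }
            ]
        },
    }
)

if __name__ == "__main__":
    print(tools_list_request())
    print(tool_names(SAMPLE_RESPONSE))
```

In practice the MCP client handles this exchange, including session initialization, so the guide's code never needs to build these messages by hand.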

Step 5: Convert MCP tools to Agno functions

Each MCP tool is wrapped as an Agno function so it can be used by the agent.

NOTE: Agno performs all reasoning, planning, and tool selection. LangChain is used only as a lightweight MCP compatibility layer to consume tools exposed by CData Connect AI.

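    from agno.tools import Function

    def make_tool_caller(lc_tool):
        # Return an async callable bound to one specific LangChain tool.
        async def call_tool(**kwargs):
            # Forward the keyword arguments to the tool as a single dict.
            return await lc_tool.ainvoke(kwargs)
        return call_tool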
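The factory function matters because Python closures capture variables, not values: defining `call_tool` directly inside a loop would leave every wrapper pointing at the last tool. The following self-contained sketch shows the pattern with a stand-in tool class. `FakeTool`, `build_callers`, and the tool names `tool_a` and `tool_b` are hypothetical and exist only so the example runs without Agno or LangChain installed.

```python
import asyncio


class FakeTool:
    """Hypothetical stand-in for a LangChain MCP tool (only name and ainvoke are modeled)."""

    def __init__(self, name: str):
        self.name = name

    async def ainvoke(self, arguments: dict):
        # Echo the call so the binding between wrapper and tool is visible.
        return {"tool": self.name, "arguments": arguments}


def make_tool_caller(lc_tool):
    # Each call creates a new scope, so call_tool keeps its own lc_tool.
    async def call_tool(**kwargs):
        return await lc_tool.ainvoke(kwargs)
    return call_tool


def build_callers(tools):
    """Map each tool name to a wrapper bound to that tool."""
    return {tool.name: make_tool_caller(tool) for tool in tools}


async def demo():
    callers = build_callers([FakeTool("tool_a"), FakeTool("tool_b")])
    return await callers["tool_b"](query="SELECT 1")


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

In the guide's flow, each wrapper would presumably be handed to the imported `Function` class together with the tool's name, description, and input schema. The exact constructor arguments are not shown in this guide, so confirm them against the Agno documentation.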

Step 6: Create an Agno agent and query live Databricks data

Agno performs all reasoning, planning, and tool invocation. LangChain plays no role beyond MCP compatibility.

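    import asyncio
    import os

    from agno.agent import Agent
    from agno.models.openai import OpenAIChat

    async def main():
        # Tool discovery and wrapping (Steps 4 and 5) use await, so in a script
        # they belong inside main() before this point; agno_tools is the
        # resulting list of wrapped tools.
        agent = Agent(
            model=OpenAIChat(
                id="gpt-4o",
                temperature=0.2,
                api_key=os.environ["OPENAI_API_KEY"]
            ),
            tools=agno_tools,
            markdown=True
        )

        # Ask a natural-language question; the agent selects and calls the MCP tools.
        await agent.aprint_response(
            "Show me the top 5 records from the available data source"
        )

    if __name__ == "__main__":
        asyncio.run(main())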

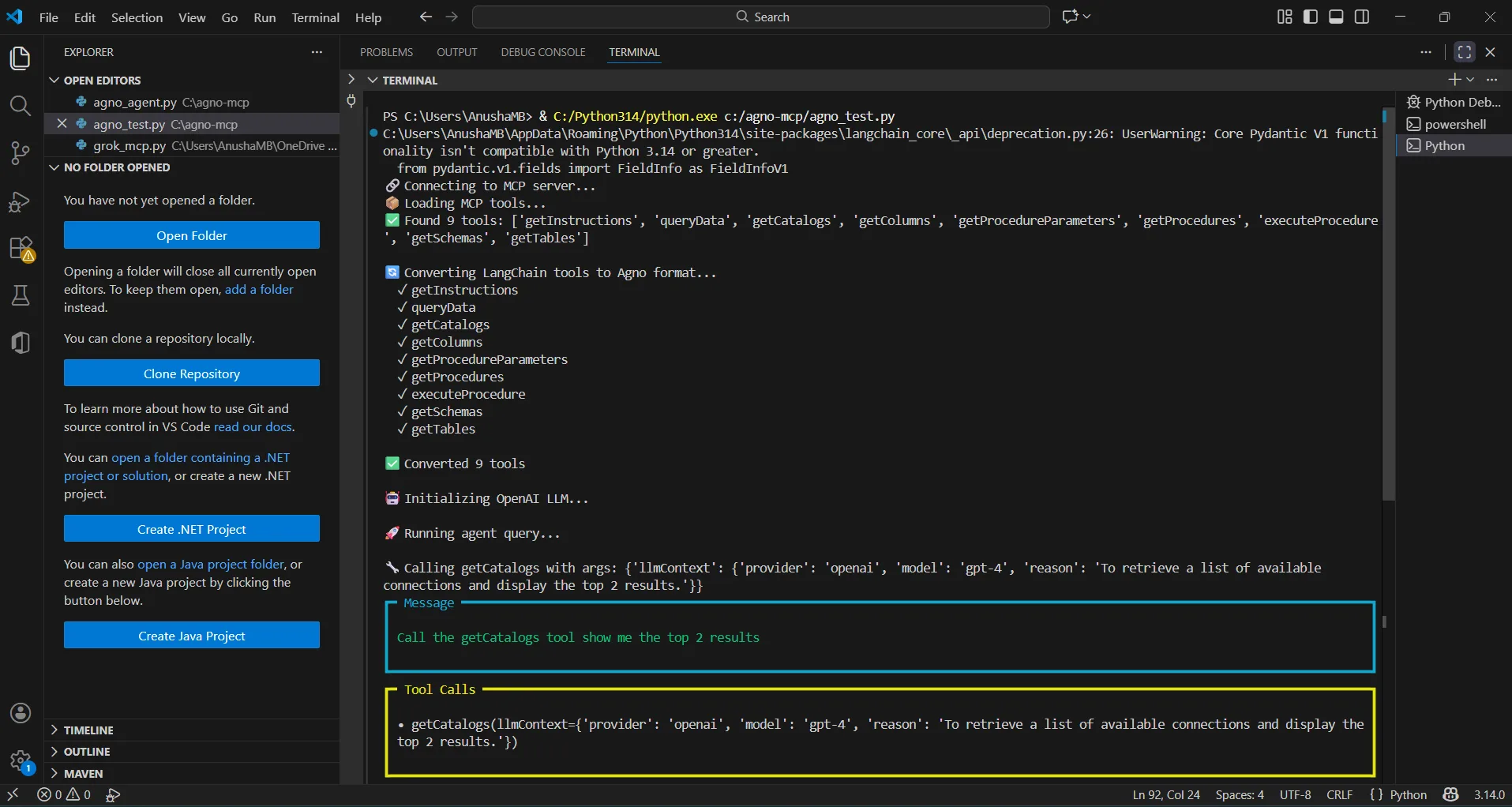

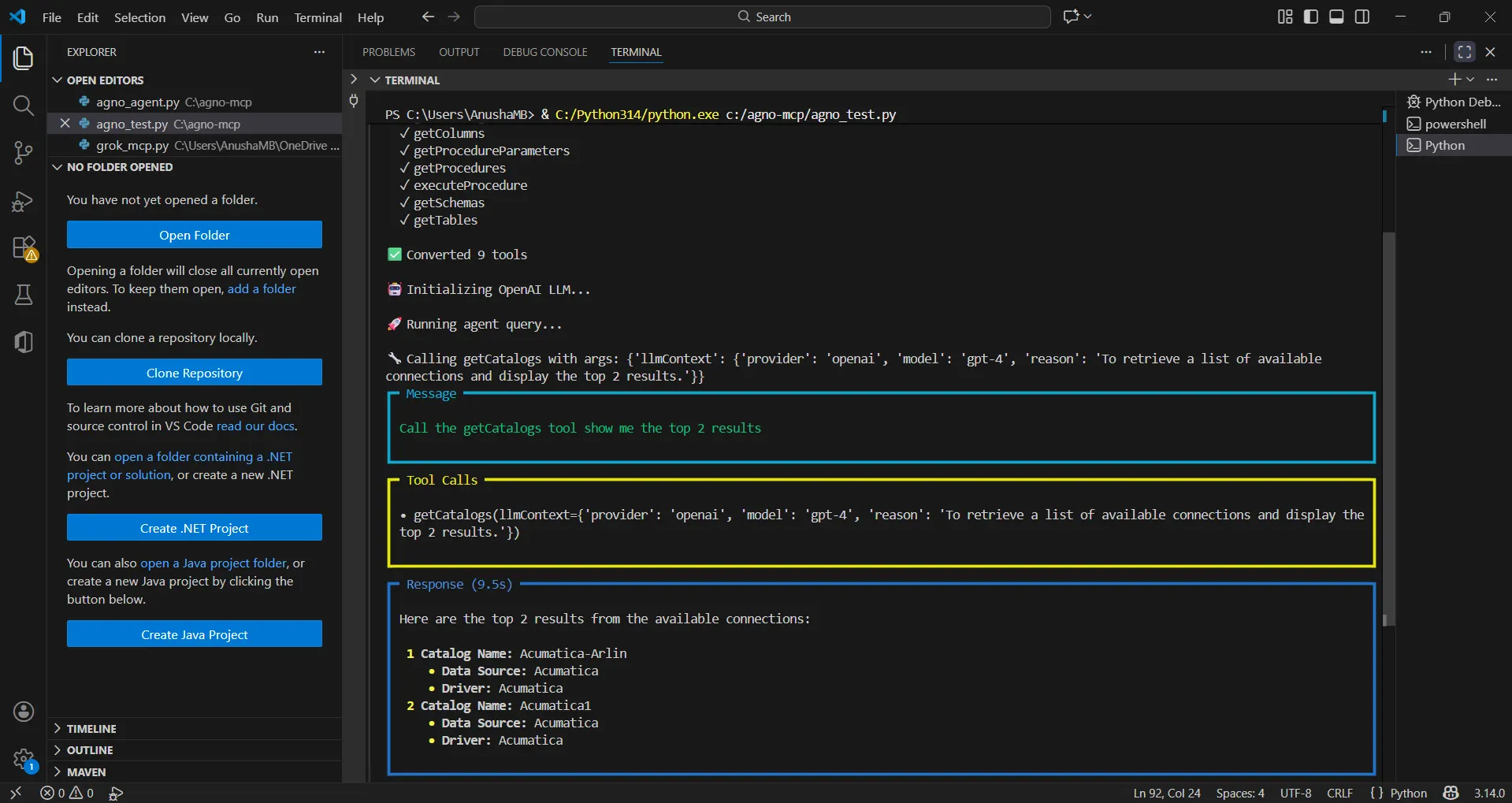

The results below show an Agno agent invoking MCP tools through CData Connect AI and returning live Databricks data data.

You can now query live Databricks data using natural language through your Agno agent.

Get CData Connect AI

To get live data access to 300+ SaaS, Big Data, and NoSQL sources directly from your cloud applications, try CData Connect AI today!