Connect to Live Lakebase Data in PostgreSQL Interface through CData Connect AI

PostgreSQL is a popular interface for data access, and a wide range of PostgreSQL clients is available. Pairing PostgreSQL with CData Connect AI gives you database-like access to live Lakebase data from PostgreSQL. This article walks through connecting to Lakebase data in Connect AI and then connecting PostgreSQL to Connect AI with a TDS foreign data wrapper (FDW). TDS (Tabular Data Stream) is the wire protocol used by SQL Server, so a TDS-based FDW can communicate with the SQL Server interface that Connect AI exposes.

CData Connect AI provides a pure SQL Server interface for Lakebase, allowing you to query data from Lakebase without replicating the data to a natively supported database. Using optimized data processing out of the box, CData Connect AI pushes all supported SQL operations (filters, JOINs, etc.) directly to Lakebase, leveraging server-side processing to return the requested Lakebase data quickly.

Connect to Lakebase in Connect AI

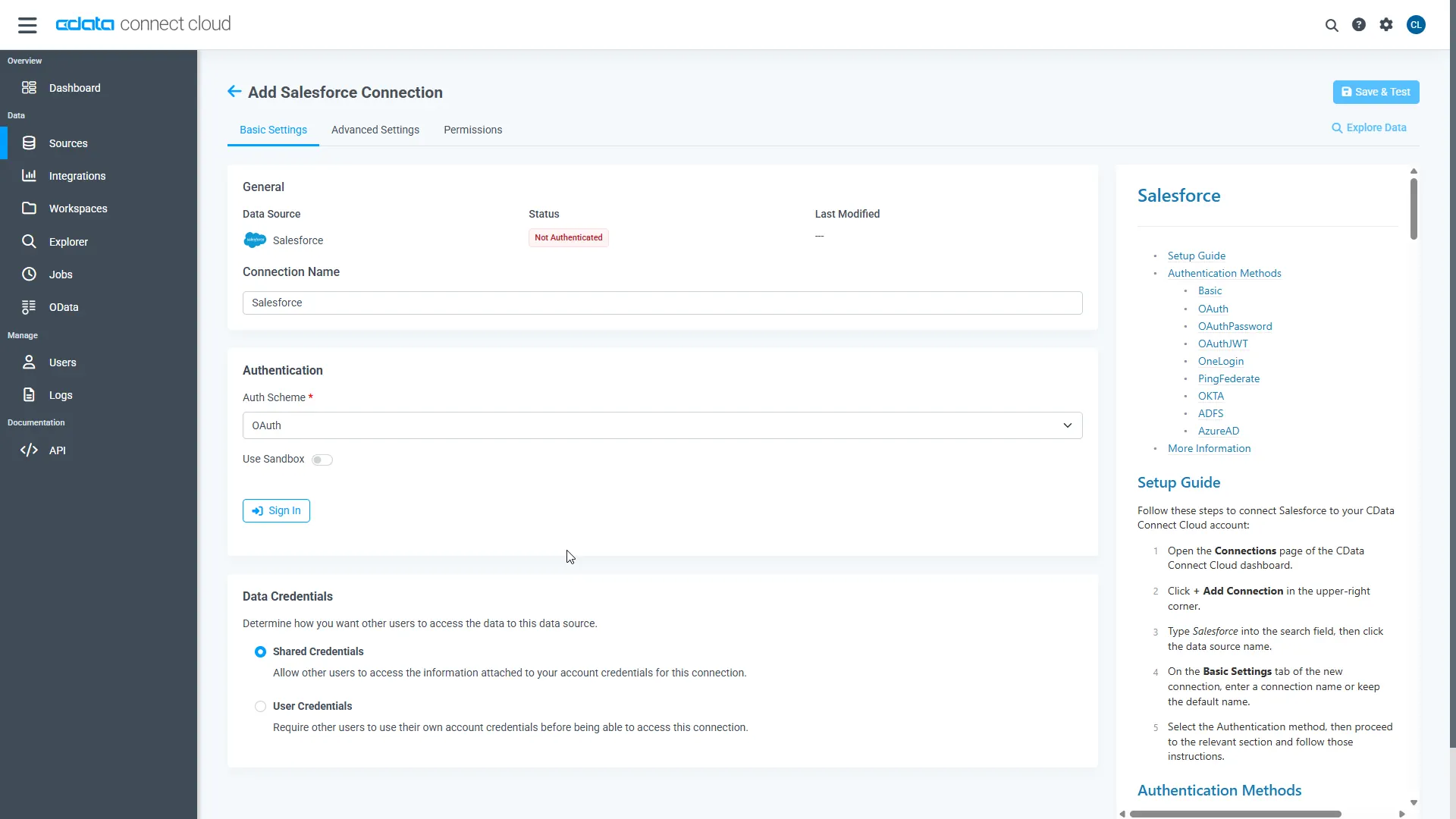

CData Connect AI uses a straightforward, point-and-click interface to connect to data sources.

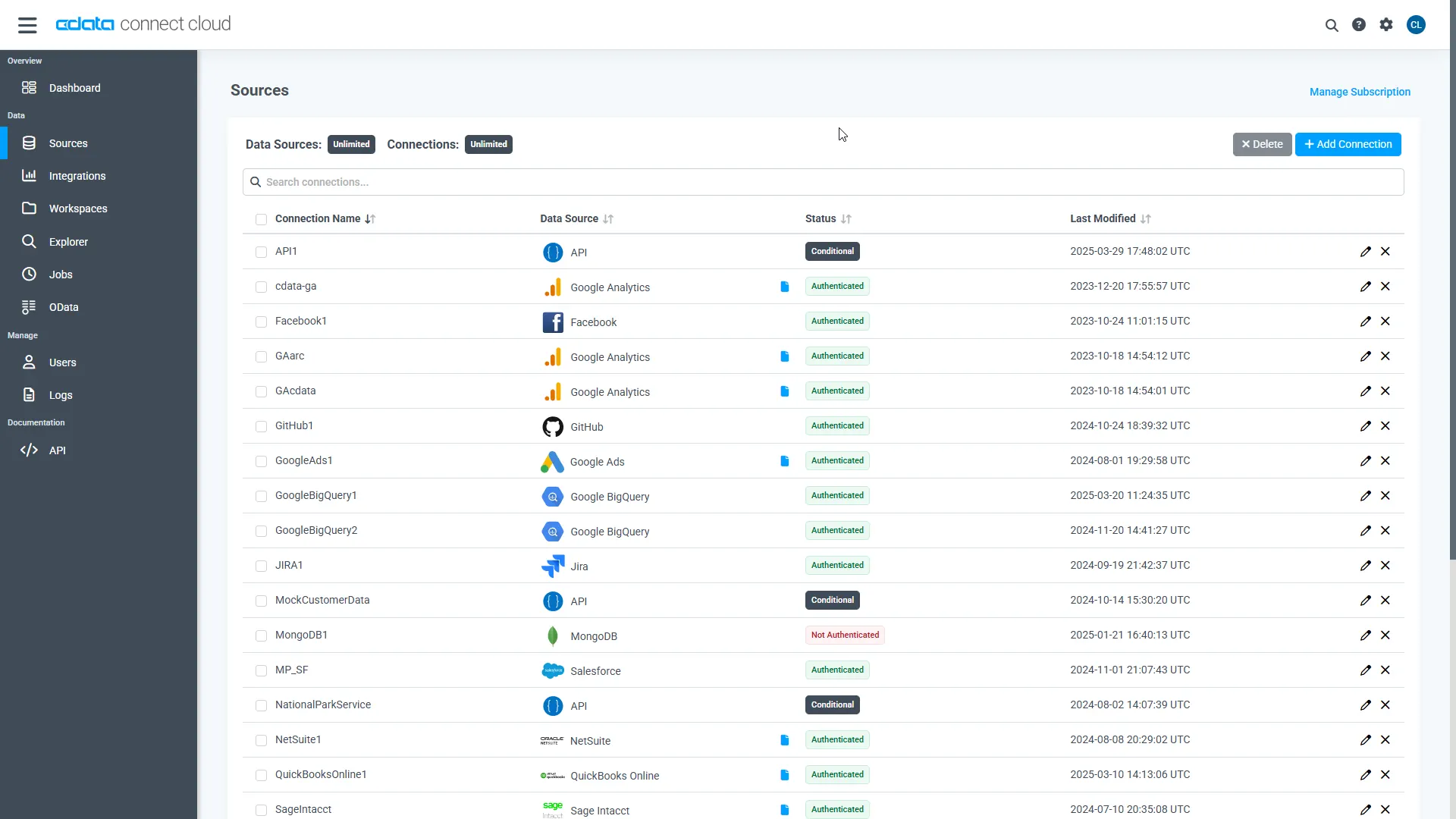

- Log in to Connect AI, click Sources, and then click Add Connection

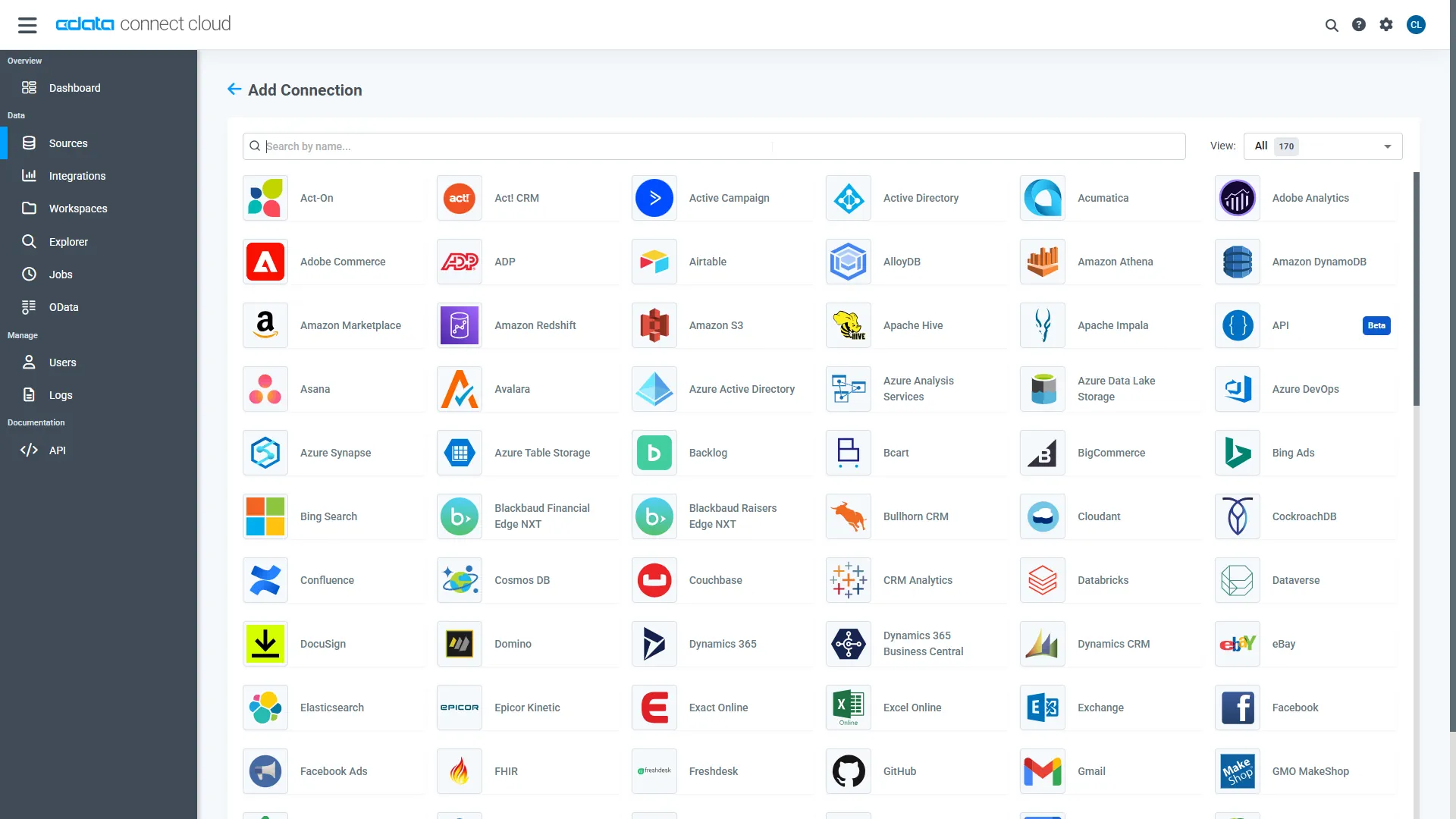

- Select "Lakebase" from the Add Connection panel

- Enter the necessary authentication properties to connect to Lakebase.

To connect to Databricks Lakebase, start by setting the following properties:

- DatabricksInstance: The Databricks instance or server hostname, provided in the format instance-abcdef12-3456-7890-abcd-abcdef123456.database.cloud.databricks.com.

- Server: The host name or IP address of the server hosting the Lakebase database.

- Port (optional): The port of the server hosting the Lakebase database, set to 5432 by default.

- Database (optional): The database to connect to after authenticating to the Lakebase server. If this is not set, the authenticating user's default database is used.

OAuth Client Authentication

To authenticate using OAuth client credentials, you need to configure an OAuth client in your service principal. In short, you need to do the following:

- Create and configure a new service principal

- Assign permissions to the service principal

- Create an OAuth secret for the service principal

For more information, refer to the Setting Up OAuthClient Authentication section in the Help documentation.

OAuth PKCE Authentication

To authenticate using the OAuth code type with PKCE (Proof Key for Code Exchange), set the following properties:

- AuthScheme: OAuthPKCE.

- User: The authenticating user's user ID.

For more information, refer to the Help documentation.

- Click Save & Test

-

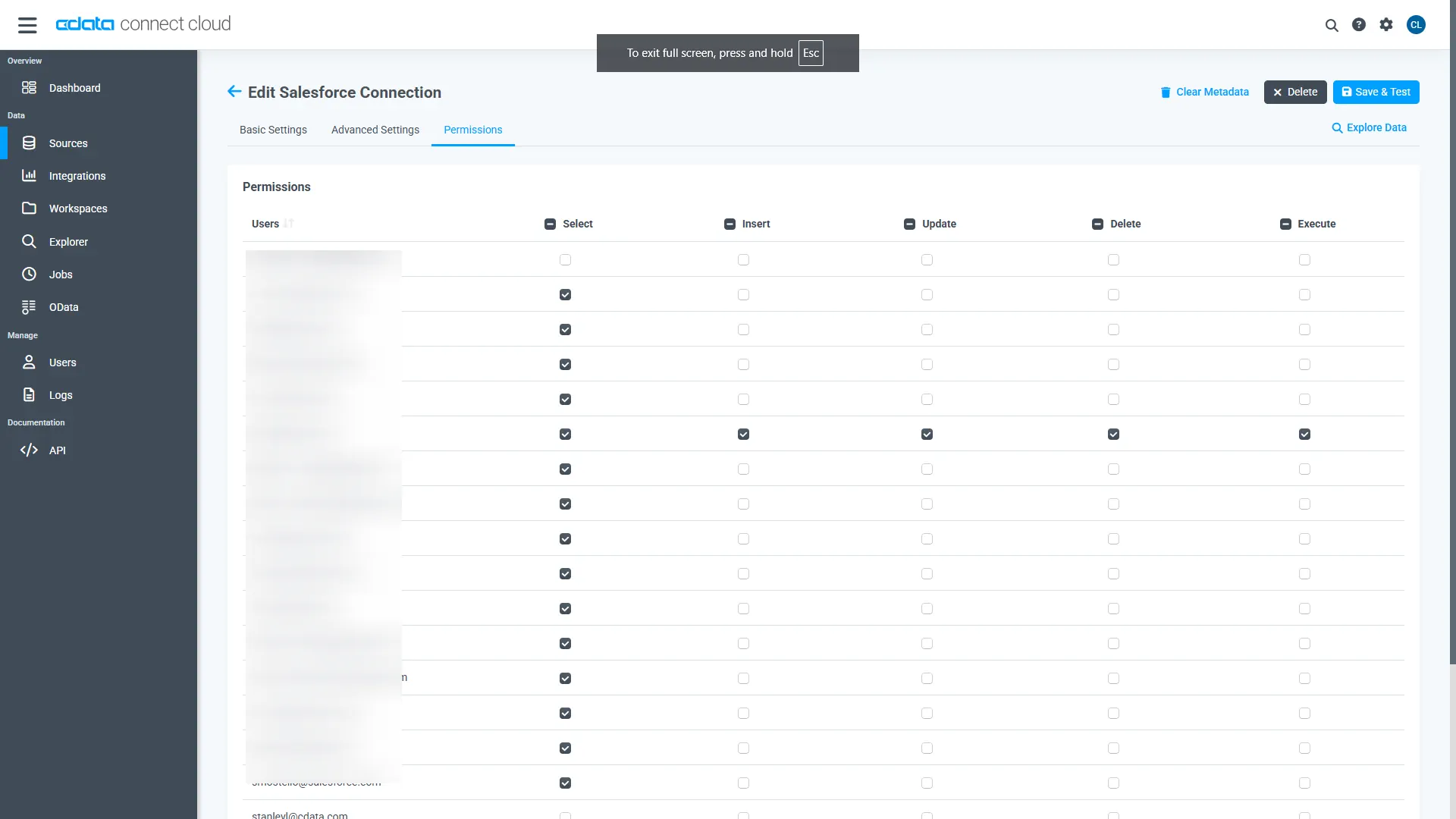

Navigate to the Permissions tab in the Add Lakebase Connection page and update the User-based permissions.

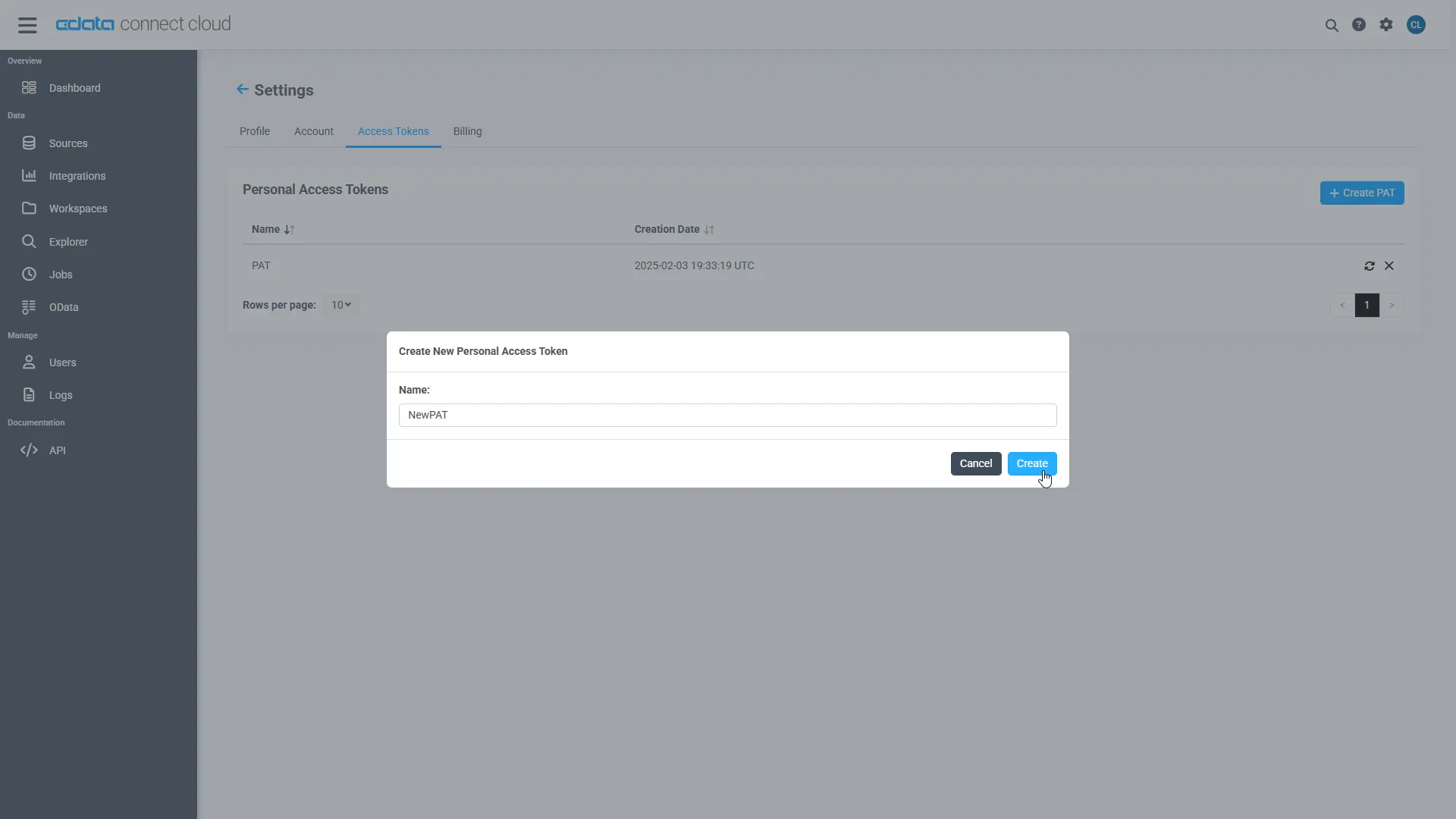

Add a Personal Access Token

When connecting to Connect AI through the REST API, the OData API, or the Virtual SQL Server, a Personal Access Token (PAT) is used to authenticate the connection to Connect AI. It is best practice to create a separate PAT for each service to maintain granularity of access.

- Click the gear icon at the top right of the Connect AI app to open the Settings page.

- On the Settings page, go to the Access Tokens section and click Create PAT.

- Give the PAT a name and click Create.

- The personal access token is only visible at creation, so be sure to copy it and store it securely for future use.

With the connection configured and a PAT generated, you are ready to connect to Lakebase data from PostgreSQL.

Build the TDS Foreign Data Wrapper

A foreign data wrapper can be installed as a PostgreSQL extension without recompiling PostgreSQL. This article uses the open-source tds_fdw extension as an example (https://github.com/tds-fdw/tds_fdw).

- Clone and build the git repository with commands like the following:

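```shell
# Install git plus the FreeTDS and PostgreSQL server headers that tds_fdw builds against
# (Debian/Ubuntu package names; adjust them for your distribution)
sudo apt-get install git gcc make freetds-dev postgresql-server-dev-all
git clone https://github.com/tds-fdw/tds_fdw.git
cd tds_fdw
make USE_PGXS=1
sudo make USE_PGXS=1 install
```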

Note: If you have several PostgreSQL versions and do not want to build for the default one, first locate the pg_config binary for the version you want and take note of its full path. Then append PG_CONFIG= followed by that full path after USE_PGXS=1 in both make commands.

- After the installation finishes, start the server:

```shell
sudo service postgresql start
```

- Then connect to the postgres database with psql:

```shell
psql -h localhost -U postgres -d postgres
```

Note: If PostgreSQL runs on another machine, replace localhost with that server's host name or IP address.
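Before loading the extension, you can optionally confirm that PostgreSQL sees the newly installed tds_fdw files. The minimal check below assumes you are connected with psql as described above. It queries the standard pg_available_extensions catalog view. A row for tds_fdw suggests the build and install steps succeeded. The installed_version column stays empty until CREATE EXTENSION is run in the current database.

```sql
-- Confirm that the tds_fdw extension files are installed on this PostgreSQL server.
-- installed_version is NULL until CREATE EXTENSION tds_fdw is run in this database.
SELECT name, default_version, installed_version
FROM pg_available_extensions
WHERE name = 'tds_fdw';
```

If the query returns no rows, the extension was most likely built against a different PostgreSQL installation. In that case, revisit the PG_CONFIG note above.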

Connect to Lakebase data as a PostgreSQL Database and query the data!

After you have installed the extension, follow the steps below to start executing queries to Lakebase data:

- Log into your database.

- Load the extension for the database:

```sql
CREATE EXTENSION tds_fdw;
```

- Create a server object for Lakebase data:

```sql
CREATE SERVER "Lakebase1" FOREIGN DATA WRAPPER tds_fdw
    OPTIONS (servername 'tds.cdata.com', port '14333', database 'Lakebase1');
```

- Configure user mapping with your email and Personal Access Token from your Connect AI account:

```sql
-- Replace the placeholders with your Connect AI email and Personal Access Token
CREATE USER MAPPING FOR postgres SERVER "Lakebase1"
    OPTIONS (username 'your_connect_ai_email', password 'your_personal_access_token');
```
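The PAT is stored as a user mapping option, so rotating it later does not require recreating the server or the foreign tables. The sketch below uses the same "Lakebase1" server and postgres role as above. It first inspects the stored definitions through the standard pg_foreign_server and pg_user_mappings catalogs, then swaps in a new token with ALTER USER MAPPING. The value 'your_new_personal_access_token' is a placeholder.

```sql
-- Inspect the foreign server definition.
SELECT srvname, srvoptions
FROM pg_foreign_server
WHERE srvname = 'Lakebase1';

-- List user mappings for the server. umoptions is deliberately not selected,
-- because for a superuser it would display the stored PAT in clear text.
SELECT srvname, usename
FROM pg_user_mappings
WHERE srvname = 'Lakebase1';

-- Replace the stored PAT after creating a new one in Connect AI (placeholder value).
ALTER USER MAPPING FOR postgres SERVER "Lakebase1"
    OPTIONS (SET password 'your_new_personal_access_token');
```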

- Create the local schema:

```sql
CREATE SCHEMA "Lakebase1";
```

- Create a foreign table in your local database:

```sql
-- Choose ONE of the following alternatives: each creates "Lakebase1".Orders,
-- so running a second one fails because the table already exists.

-- Using a table_name definition:
CREATE FOREIGN TABLE "Lakebase1".Orders (
    id varchar,
    ShipCity varchar)
    SERVER "Lakebase1"
    OPTIONS (table_name 'Lakebase.Orders', row_estimate_method 'showplan_all');

-- Or using a schema_name and table_name definition:
CREATE FOREIGN TABLE "Lakebase1".Orders (
    id varchar,
    ShipCity varchar)
    SERVER "Lakebase1"
    OPTIONS (schema_name 'Lakebase', table_name 'Orders', row_estimate_method 'showplan_all');

-- Or using a query definition:
CREATE FOREIGN TABLE "Lakebase1".Orders (
    id varchar,
    ShipCity varchar)
    SERVER "Lakebase1"
    OPTIONS (query 'SELECT * FROM Lakebase.Orders', row_estimate_method 'showplan_all');

-- Or setting a remote column name (local col2 maps to remote ShipCity):
CREATE FOREIGN TABLE "Lakebase1".Orders (
    id varchar,
    col2 varchar OPTIONS (column_name 'ShipCity'))
    SERVER "Lakebase1"
    OPTIONS (schema_name 'Lakebase', table_name 'Orders', row_estimate_method 'showplan_all');
```
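Whichever variant you choose, you can confirm what PostgreSQL registered by querying the standard information_schema views. This sketch assumes the table was created as "Lakebase1".Orders. The schema name keeps its case because it is quoted. The unquoted identifiers Orders and ShipCity are folded to lowercase (orders, shipcity) in the local catalog.

```sql
-- List foreign tables defined on the "Lakebase1" server.
SELECT foreign_table_schema, foreign_table_name
FROM information_schema.foreign_tables
WHERE foreign_server_name = 'Lakebase1';

-- Show the local column definitions (unquoted names are stored in lowercase).
SELECT column_name, data_type
FROM information_schema.columns
WHERE table_schema = 'Lakebase1' AND table_name = 'orders'
ORDER BY ordinal_position;
```

In psql, the \det+ meta-command shows similar information about foreign tables.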

- You can now query Lakebase data through the foreign table:

```sql
SELECT id, ShipCity FROM "Lakebase1".Orders;
```
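Standard PostgreSQL clauses work on the foreign table as they would on a local one. The sketch below filters and aggregates the example Orders table and assumes one of the first three table definitions above, since the fourth renames ShipCity to col2. The value 'Raleigh' is hypothetical. Prefixing a query with EXPLAIN (VERBOSE) shows the Foreign Scan node that PostgreSQL plans for the table. How much filtering tds_fdw pushes to the remote side depends on its version and options, so check the plan rather than assuming pushdown.

```sql
-- Filter on a column of the foreign table ('Raleigh' is a hypothetical value).
SELECT id, ShipCity
FROM "Lakebase1".Orders
WHERE ShipCity = 'Raleigh';

-- Aggregate across the foreign table.
SELECT ShipCity, count(*) AS order_count
FROM "Lakebase1".Orders
GROUP BY ShipCity
ORDER BY order_count DESC;

-- Inspect the plan PostgreSQL builds for the foreign scan.
EXPLAIN (VERBOSE)
SELECT id, ShipCity
FROM "Lakebase1".Orders
WHERE ShipCity = 'Raleigh';
```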

More Information & Free Trial

You have now queried live Lakebase data from PostgreSQL. For more information on connecting to Lakebase (and more than 200 other data sources), visit the Connect AI page. Sign up for a free trial and start working with live Lakebase data in PostgreSQL.