Integrating LangChain with Zuora Data via CData Connect AI

LangChain is a framework that developers, data engineers, and AI practitioners use to build AI-powered applications and workflows by combining reasoning models (LLMs), tools, APIs, and data connectors. By integrating LangChain with CData Connect AI through its built-in MCP Server, workflows can access and interact with live Zuora data in real time.

CData Connect AI offers a secure, low-code environment to connect Zuora and other data sources, removing the need for complex ETL and enabling seamless automation across business applications with live data.

This article outlines how to configure Zuora connectivity in CData Connect AI, register the MCP server with LangChain, and build a workflow that queries Zuora data in real time.

Prerequisites

- An account in CData Connect AI

- Python 3.10 or higher, required to install the LangChain and LangGraph packages

- An OpenAI API key (generate one at https://platform.openai.com/ and save it)

- Visual Studio Code installed on your system

Step 1: Configure Zuora Connectivity for LangChain

Before LangChain can access Zuora, a Zuora connection must be created in CData Connect AI. This connection is then exposed to LangChain through the remote MCP server.

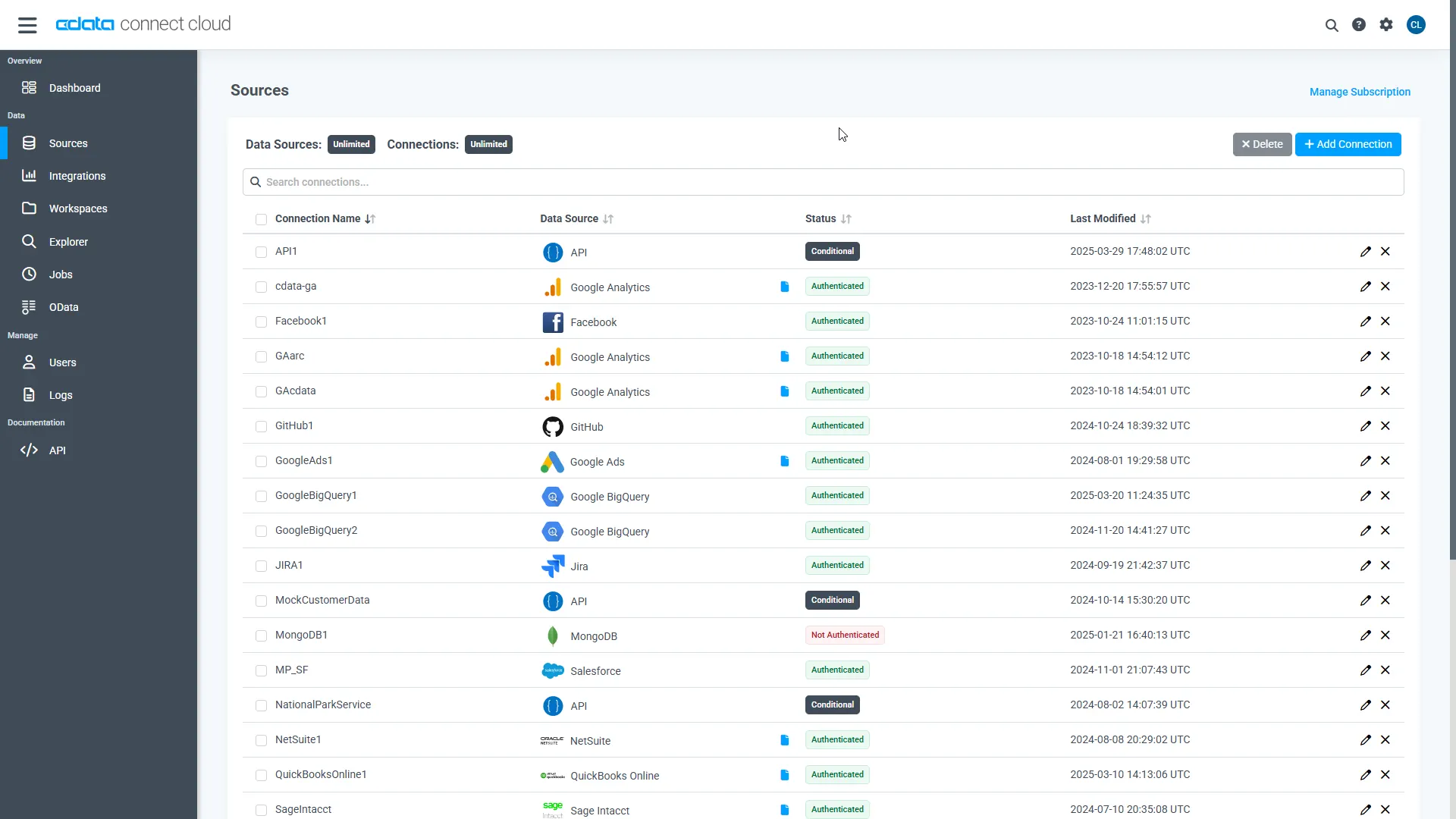

- Log in to Connect AI, click Sources, and then click + Add Connection

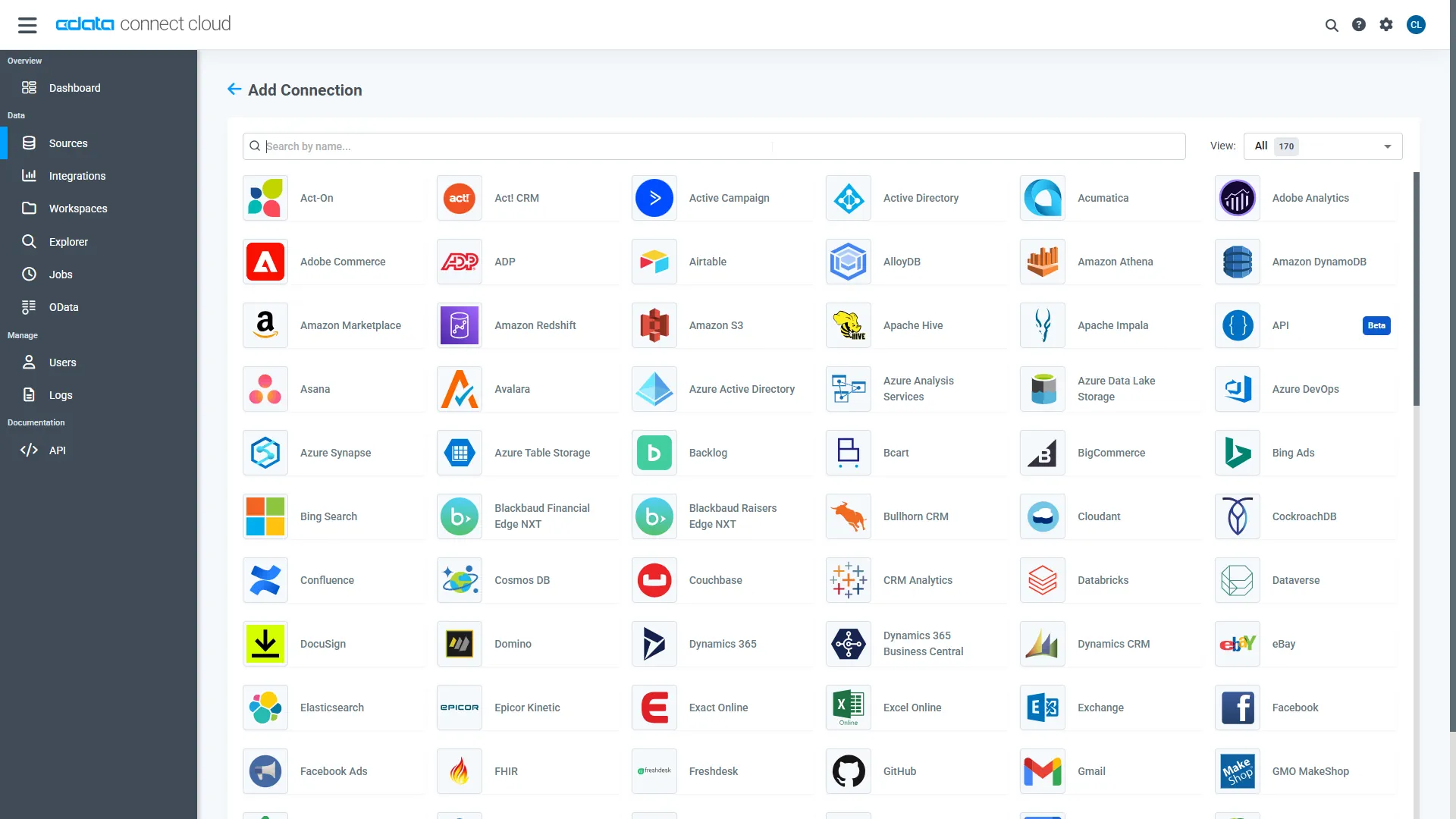

- From the available data sources, choose Zuora

-

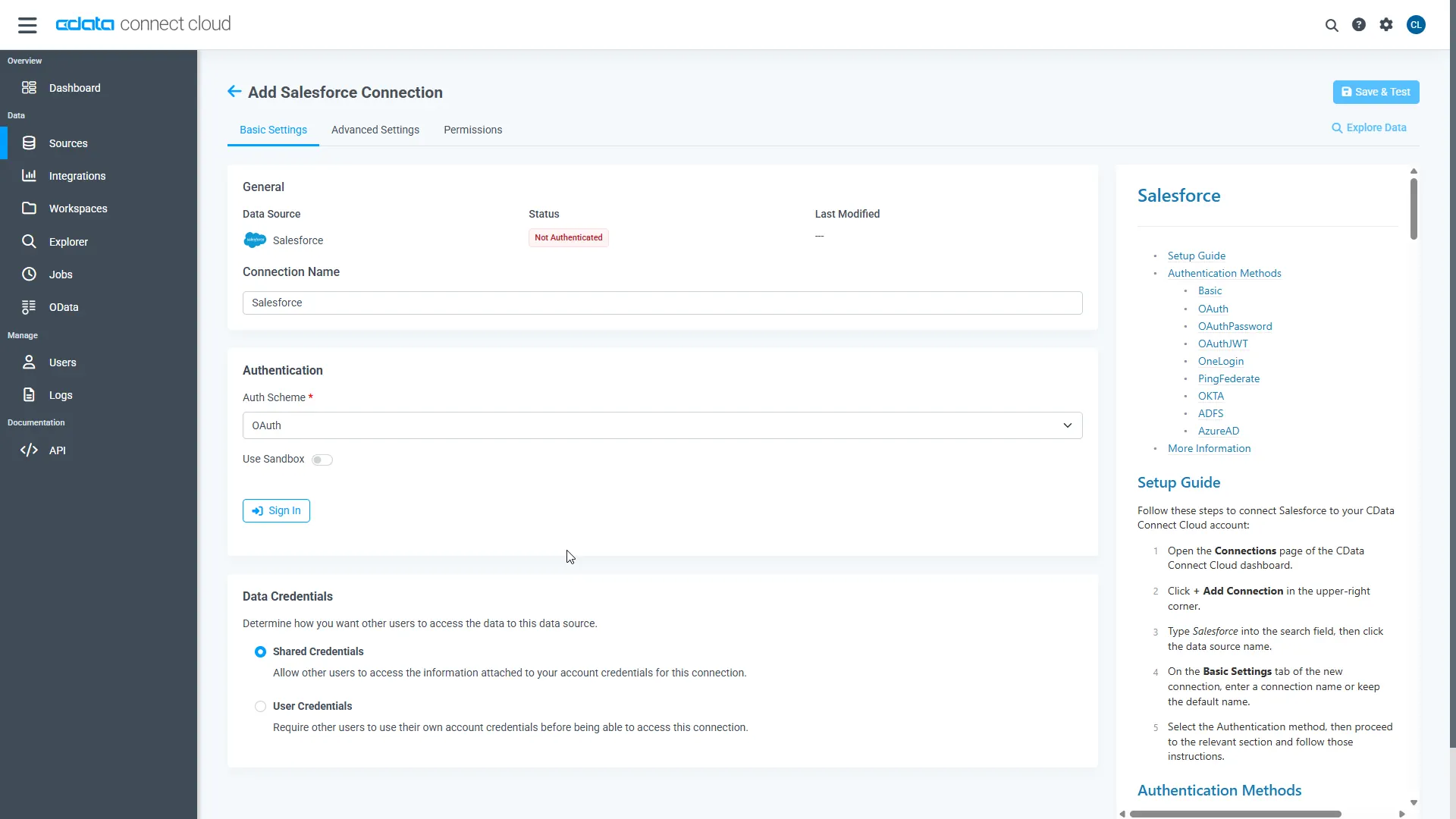

Enter the necessary authentication properties to connect to Zuora

Zuora uses the OAuth standard to authenticate users. See the online Help documentation for a full OAuth authentication guide.

Configuring the Tenant Property

To create a valid connection, set the Tenant property to the value that matches your Zuora account configuration (the default is USProduction). The available options are:

- USProduction: Requests sent to https://rest.zuora.com

- USAPISandbox: Requests sent to https://rest.apisandbox.zuora.com

- USPerformanceTest: Requests sent to https://rest.pt1.zuora.com

- EUProduction: Requests sent to https://rest.eu.zuora.com

- EUSandbox: Requests sent to https://rest.sandbox.eu.zuora.com
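If you know which REST endpoint your Zuora account uses, the list above can be expressed as a lookup table for finding the matching Tenant value. This is a minimal sketch: the dictionary mirrors the list above, and the helper name `tenant_for_url` is hypothetical, not part of Connect AI.

```python
# Tenant values and REST base URLs, as listed above.
ZUORA_TENANT_URLS = {
    "USProduction": "https://rest.zuora.com",
    "USAPISandbox": "https://rest.apisandbox.zuora.com",
    "USPerformanceTest": "https://rest.pt1.zuora.com",
    "EUProduction": "https://rest.eu.zuora.com",
    "EUSandbox": "https://rest.sandbox.eu.zuora.com",
}

DEFAULT_TENANT = "USProduction"


def tenant_for_url(base_url: str) -> str:
    """Hypothetical helper: return the Tenant value whose REST endpoint matches base_url."""
    normalized = base_url.rstrip("/")  # tolerate a trailing slash
    for tenant, url in ZUORA_TENANT_URLS.items():
        if url == normalized:
            return tenant
    raise ValueError(f"No Tenant value matches {base_url!r}")


if __name__ == "__main__":
    print(tenant_for_url("https://rest.apisandbox.zuora.com"))
```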

Selecting a Zuora Service

Two Zuora services are available through the ZuoraService property: DataQuery (Data Query) and AQuADataExport (AQuA API). By default, ZuoraService is set to AQuADataExport.

DataQuery

The Data Query feature enables you to export data from your Zuora tenant by performing asynchronous, read-only SQL queries. We recommend using this service for quick, lightweight SQL queries.

Limitations:

- Maximum number of input records per table, after filters are applied: 1,000,000

- Maximum number of output records: 100,000

- Maximum number of simultaneous queries submitted for execution per tenant: 5

- Maximum number of queued queries after the per-tenant limit on simultaneous queries is reached: 10

- Maximum processing time per query: 1 hour

- Maximum memory allocated to each query: 2 GB

- Maximum number of indices when using Index Join (that is, the maximum number of records returned by the left table based on the unique value used in the WHERE clause): 20,000
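The two record limits above are the usual deciding factor between the services. The sketch below turns them into a rough rule of thumb: use DataQuery when estimated input and output sizes fit its limits, otherwise AQuADataExport. The function `suggest_zuora_service` is hypothetical, and it deliberately ignores the concurrency, time, memory, and Index Join limits, which you would still need to check separately.

```python
# DataQuery record limits, taken from the list above.
DATA_QUERY_MAX_INPUT_RECORDS = 1_000_000  # per table, after filters are applied
DATA_QUERY_MAX_OUTPUT_RECORDS = 100_000


def suggest_zuora_service(input_records_per_table: int, output_records: int) -> str:
    """Hypothetical rule of thumb for choosing a ZuoraService value.

    Returns "DataQuery" when the estimated sizes fit the DataQuery record
    limits, and "AQuADataExport" otherwise. Only record counts are checked.
    """
    fits_data_query = (
        input_records_per_table <= DATA_QUERY_MAX_INPUT_RECORDS
        and output_records <= DATA_QUERY_MAX_OUTPUT_RECORDS
    )
    return "DataQuery" if fits_data_query else "AQuADataExport"


if __name__ == "__main__":
    print(suggest_zuora_service(input_records_per_table=50_000, output_records=250_000))
```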

AQuADataExport

AQuA API export is designed to export all records for all objects (tables). AQuA query jobs have the following limitations:

- If a query in an AQuA job runs longer than 8 hours, the job is killed automatically.

- A killed AQuA job can be retried three times before it is returned as failed.

- Click Save & Test

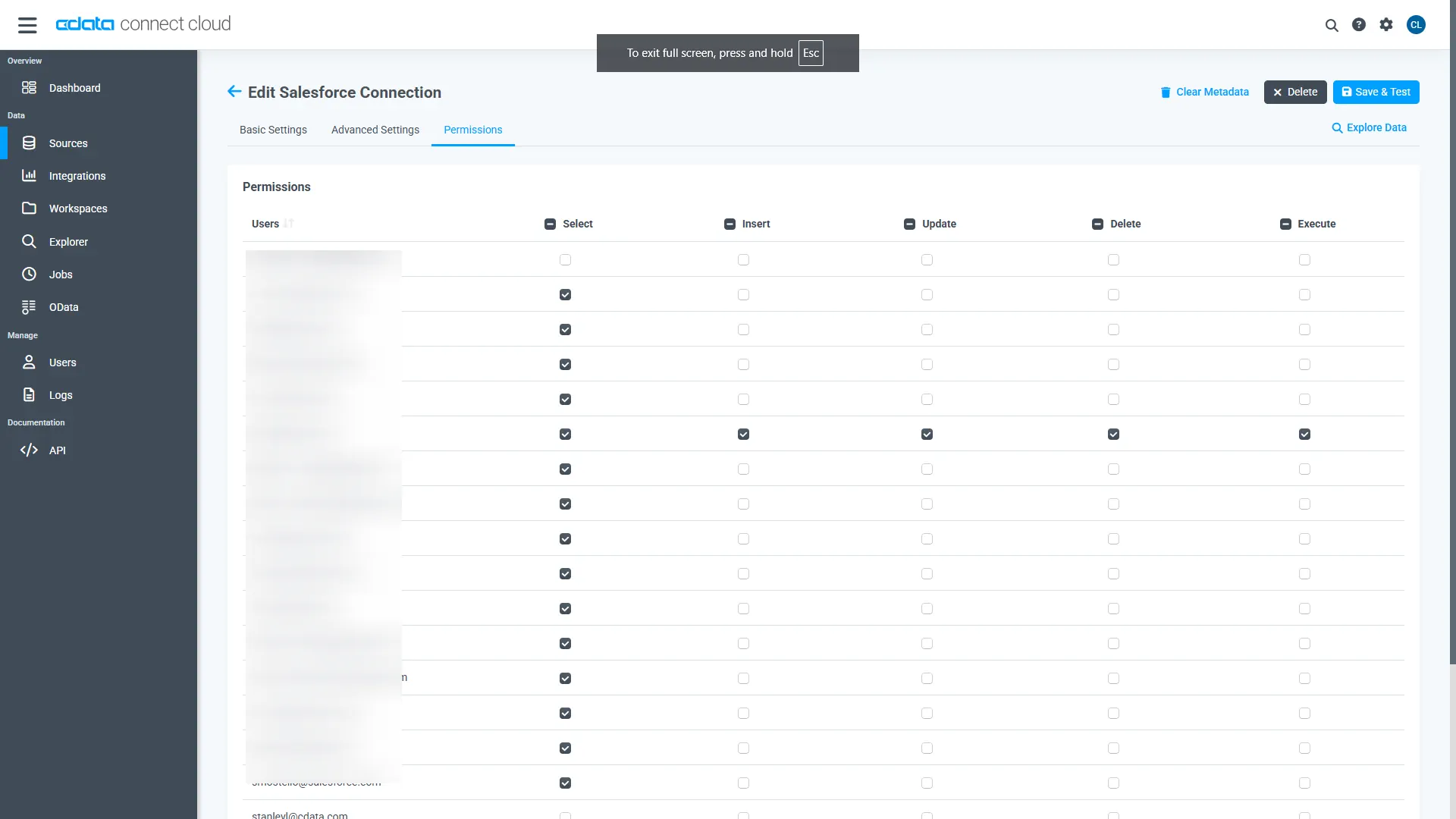

- Once authenticated, open the Permissions tab in the Zuora connection and configure user-based permissions as required

Generate a Personal Access Token (PAT)

LangChain authenticates to Connect AI using an account email and a Personal Access Token (PAT). Creating separate PATs for each integration is recommended to maintain access control granularity.

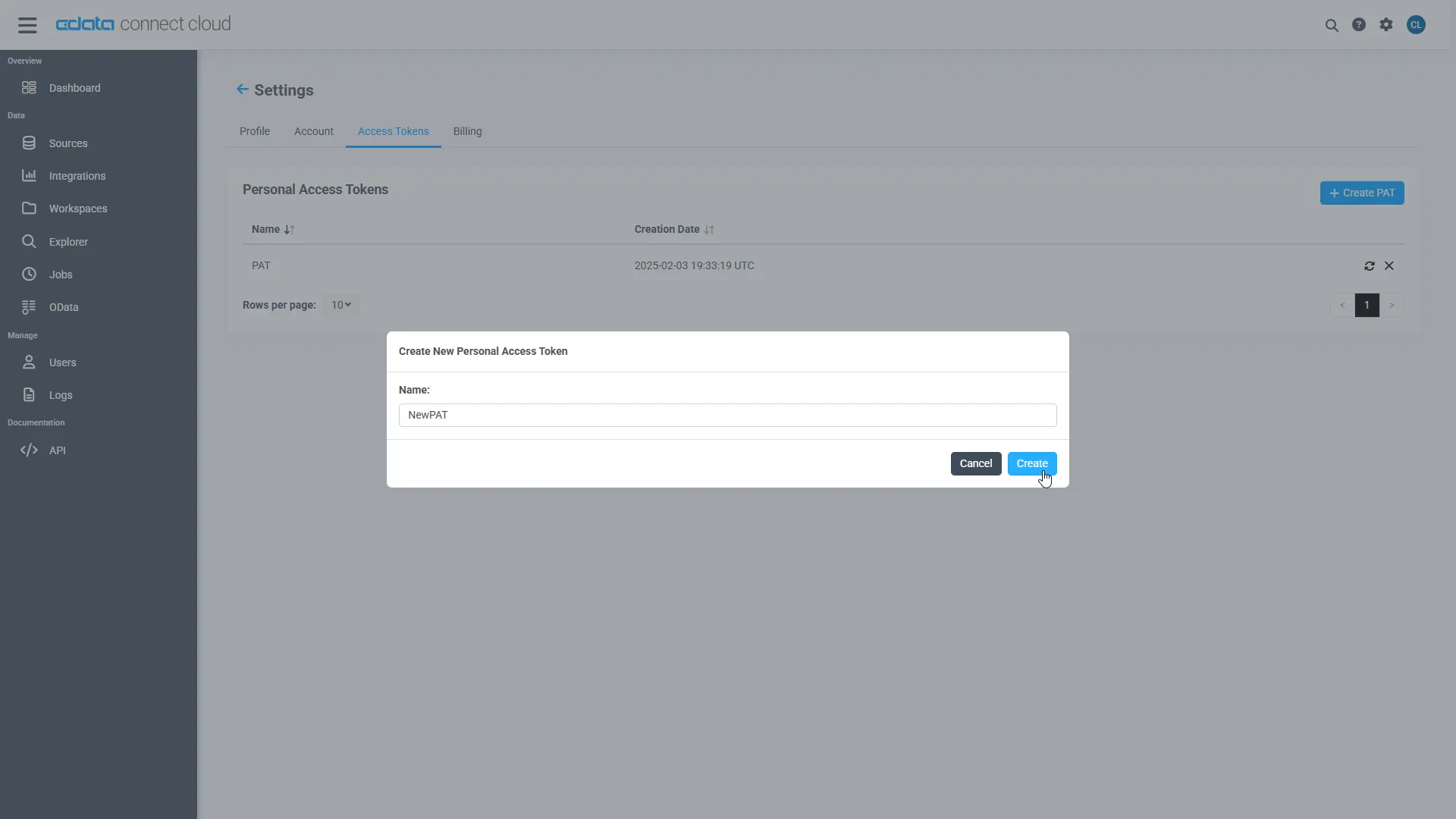

- In Connect AI, select the Gear icon in the top-right to open Settings

- Under Access Tokens, select Create PAT

- Provide a descriptive name for the token and select Create

- Copy the token and store it securely. The PAT will only be visible during creation

With the Zuora connection configured and a PAT generated, LangChain is prepared to connect to Zuora data through the CData MCP server.

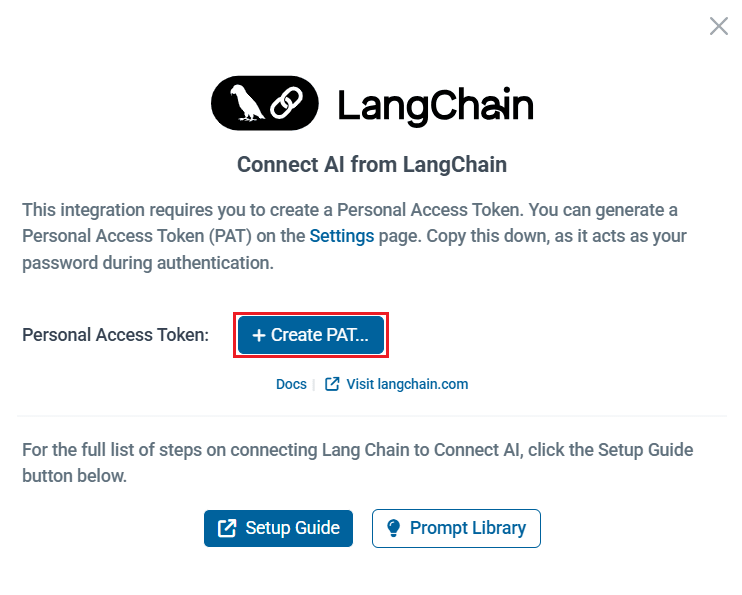

Note: You can also generate a PAT from the LangChain entry in the Integrations section of Connect AI. Click Connect, then Create PAT.
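LangChain sends the Connect AI email and PAT to the MCP server as HTTP Basic authentication, which means the two values are joined as `EMAIL:PAT` and Base64-encoded (this is the `MCP_AUTH` value used in the next step). Instead of an online encoding tool, Python's standard `base64` module can produce it. A minimal sketch; `build_mcp_auth` is a hypothetical helper name and the arguments are placeholders.

```python
import base64


def build_mcp_auth(email: str, pat: str) -> str:
    """Return the Base64 encoding of "EMAIL:PAT" for use as MCP_AUTH."""
    credentials = f"{email}:{pat}".encode("utf-8")
    return base64.b64encode(credentials).decode("ascii")


if __name__ == "__main__":
    # Placeholder values: substitute your Connect AI email and PAT.
    print(build_mcp_auth("user@example.com", "YOUR_PAT"))
```

Avoid committing the printed value to source control; it carries the same access as the PAT itself.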

Step 2: Connect to the MCP server in LangChain

To connect LangChain to the CData Connect AI Remote MCP Server and use an OpenAI model for reasoning, configure your MCP server endpoint and authentication values in a config.py file. LangChain uses these values to call the MCP server tools, while the OpenAI model handles the natural language reasoning.

- Create a folder for LangChain MCP

- Create two Python files within the folder: config.py and langchain.py

- In config.py, create a Config class that defines your MCP server URL and authentication. MCP_AUTH is the Base64-encoded string EMAIL:PAT, built from your CData Connect AI account email and the PAT generated in Step 1:

```python
class Config:
    MCP_BASE_URL = "https://mcp.cloud.cdata.com/mcp"  # MCP Server URL
    MCP_AUTH = "base64encoded(EMAIL:PAT)"  # Base64-encoded Connect AI Email:PAT
```

Note: You can create the Base64-encoded version of MCP_AUTH using any Base64 encoding tool.

- In langchain.py, set up an MCP client that connects to the Connect AI MCP server, loads its tools, and passes them to a ReAct agent:

""" Integrates a LangChain ReAct agent with CData Connect AI MCP server. The script demonstrates fetching, filtering, and using tools with an LLM for agent-based reasoning. """ import asyncio from langchain_mcp_adapters.client import MultiServerMCPClient from langchain_openai import ChatOpenAI from langgraph.prebuilt import create_react_agent from config import Config async def main(): # Initialize MCP client with one or more server URLs mcp_client = MultiServerMCPClient( connections={ "default": { # you can name this anything "transport": "streamable_http", "url": Config.MCP_BASE_URL, "headers": {"Authorization": f"Basic {Config.MCP_AUTH}"}, } } ) # Load remote MCP tools exposed by the server all_mcp_tools = await mcp_client.get_tools() print("Discovered MCP tools:", [tool.name for tool in all_mcp_tools]) # Create and run the ReAct style agent llm = ChatOpenAI( model="gpt-4o", temperature=0.2, api_key="YOUR_OPEN_API_KEY" #Use your OpenAI API Key here, this can be found here: https://platform.openai.com/ ) agent = create_react_agent(llm, all_mcp_tools) user_prompt = "How many tables are available in Zuora1?" #Change prompts as per need print(f" User prompt: {user_prompt}") # Send a prompt asking the agent to use the MCP tools response = await agent.ainvoke( { "messages": [{ "role": "user", "content": (user_prompt),}]} ) # Print out the agent's final response final_msg = response["messages"][-1].content print("Agent final response:", final_msg) if __name__ == "__main__": asyncio.run(main())

Step 3: Install the LangChain and LangGraph packages

Since this workflow uses LangChain together with CData Connect AI MCP and integrates OpenAI for reasoning, you need to install the required Python packages.

Run the following command in your project terminal:

```shell
pip install langchain-mcp-adapters langchain-openai langgraph
```
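Before running the script, you can confirm that the Python version meets the 3.10 prerequisite and that the three packages are importable. Note that pip package names use hyphens while the corresponding import names use underscores. A minimal sketch; `check_environment` is a hypothetical helper built on the standard `sys` and `importlib.util` modules.

```python
import importlib.util
import sys

# Import names of the packages installed by the pip command above.
REQUIRED_PACKAGES = ["langchain_mcp_adapters", "langchain_openai", "langgraph"]


def check_environment() -> dict:
    """Report whether Python is 3.10+ and whether each required package can be imported."""
    report = {"python_ok": sys.version_info >= (3, 10)}
    for module_name in REQUIRED_PACKAGES:
        # find_spec returns None for a top-level module that is not installed.
        report[module_name] = importlib.util.find_spec(module_name) is not None
    return report


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'OK' if ok else 'NOT OK'}")
```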

Step 4: Prompt Zuora using LangChain (via the MCP server)

- When the installation finishes, run python langchain.py to execute the script

- The script connects to the MCP server and discovers the CData Connect AI MCP tools available for querying your connected data

- To ask a different question, change the user_prompt value in langchain.py (e.g., "How many tables are available in Zuora?")

- The agent calls the MCP tools it needs and prints its final response
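Editing the script for every question gets tedious. One option is to read the prompt from the command line and fall back to the hard-coded default when none is given. A minimal sketch using the standard `argparse` module; `parse_prompt` and `DEFAULT_PROMPT` are hypothetical names, not part of the original script.

```python
import argparse

DEFAULT_PROMPT = "How many tables are available in Zuora1?"


def parse_prompt(argv=None) -> str:
    """Return the prompt given on the command line, or DEFAULT_PROMPT if none was given."""
    parser = argparse.ArgumentParser(description="Ask the Connect AI MCP agent a question.")
    parser.add_argument("prompt", nargs="*", help="question to send to the agent")
    args = parser.parse_args(argv)  # argv=None means "read sys.argv"
    return " ".join(args.prompt) if args.prompt else DEFAULT_PROMPT
```

In `main()`, replace the hard-coded assignment with `user_prompt = parse_prompt()`, then run, for example, `python langchain.py "How many tables are available in Zuora1?"`.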

Get CData Connect AI

To get live data access to 300+ SaaS, Big Data, and NoSQL sources directly from your cloud applications, try CData Connect AI today!