Organizations standing up AI agents in 2026 are facing an architecture decision early in the design phase: do they support LLM-driven workflows or code execution? Which framework lets agents interact with external services in the best way? The two approaches are typically presented as an either/or choice, and some teams commit before they fully understand what their workflows actually need. That framing creates problems down the road.

Organizations standing up AI agents in 2026 are facing an architecture decision early in the design phase: do they support LLM-driven workflows or code execution? Which framework lets agents interact with external services in the best way? The two approaches are typically presented as an either/or choice, and some teams commit before they fully understand what their workflows actually need. That framing creates problems down the road.

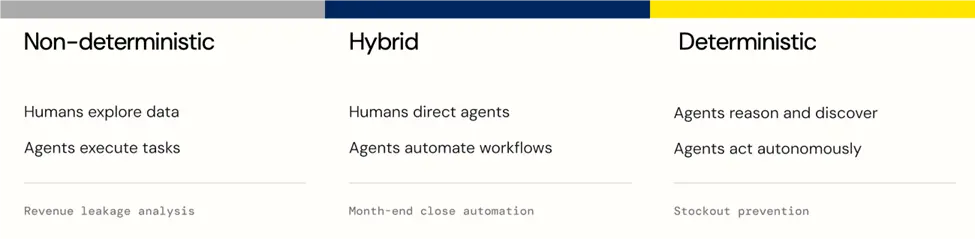

The real question organizations need to ask is "where does the steps this agent takes sit between fully exploratory and fully deterministic, and will that change over time?" The observation that AI workflows exist on a spectrum isn't new. Salesforce, Camunda, deepset, and others have written about the tension between deterministic and non-deterministic execution. But there's still room in the discourse to connect how your data infrastructure is either your ceiling or your runway as those workflows scale.

In this article, we'll give a name to the spectrum and draw out the infrastructure requirements to support your workflows, no matter where they fall on that spectrum.

Naming the spectrum

Several thought leaders are converging on the same observation: AI agent workflows aren't binary. Salesforce describes a choice between rule-based precision and adaptive reasoning. Camunda frames it as blending deterministic orchestration with dynamic AI bursts. deepset calls it a spectrum, not a binary. These are all pointing at the same reality, but without a consistent name or a shared framework for acting on it. We're calling it the autonomy spectrum, and the two poles are worth defining clearly.

At the non-deterministic, exploratory end, the LLM drives the process — it decides what questions to ask, how to interpret results, and what to do next. Output varies from run to run, which is often the point. This is the right posture when the schema is unknown, the specific step requires reasoning or judgment, the output isn't fixed. Often a human and/or LLM provides qualitative analysis before delivering results, prescribing actions, or drawing insights. Schema discovery, ad-hoc analysis, quality assessment, and even prompt prototyping all live here.

At the deterministic end, the logic is fixed and codified and autonomous reasoning can happen. The LLM still chooses from a limited set of parameters, but has more rigid, well-defined actions that it can take. The same input produces the same output every time. The step is auditable, schedulable, and measurable. This is the right posture when the pattern is established, correctness is required, the operation runs frequently, or compliance demands an audit trail. These steps are taken with minimal input: a single phrase, a scheduled event, an upstream signal. But the execution of the tasks occurs outside of the LLM. Creating tasks from a content calendar, inserting records from a validated source, and running scheduled reports all live here.

The part of the spectrum we think most processes will land is the middle, a kind of hybrid zone where the structure is fixed, but the reasoning within it isn't. The LLM makes decisions like choosing from a wide range of parameters, evaluating conditions, or selecting from a bounded set of actions. But the action space is well-defined, and the output is predictable within a range. Evaluating data quality before a pipeline runs, selecting the right API parameters based on what a schema lookup returns, and triaging inbound requests against a set of business rules all live here.

Hybrid workflows in practice

Think of a research-analysis-report agentic flow. The research agent likely has a very defined set of parameters for the data it retrieves. The analyst, though, will need to generate insights, recommendations, and more based on the data presented, so will need much more autonomy. The reporter probably sits between the two, confined by the output of the analyst, but free to make its own decisions in how that output is presented.

Or imagine a content team that uses an agent to asses a batch of content briefs for completeness and quality (here the LLM reasons about whether the brief aligns with brand guidelines, contains enough detail, flags gaps, and suggests improvements). They then use another agent to creates project tasks in Asana with titles, assignees, and business-day-calculated due dates based on the publication schedule (deterministic — same input, same task structure, every time). The quality judgment leans towards the non-deterministic end because it genuinely requires LLM reasoning. The task creation moves to the deterministic, using the LLM for data calculation, but otherwise using code outside of the LLM because the pattern is fixed and runs on a regular schedule.

In both of these workflows, the instinct is to push every step toward the deterministic end of the spectrum. But it's worth noting that "deterministic" isn't some ideal end state for every step of a workflow. Some steps will always need LLM reasoning, and trying to force them into deterministic execution degrades quality without gaining reliability. The goal is to position each step correctly, not to push everything toward one end.

Why your architecture determines which steps you can support

Most AI-assisted workflows will contain steps that fall on various parts of the spectrum. But contrary to the prevailing implication that AI maturity means all workflows become fully autonomous, business is likely to stay human-led, but agent executed. Understanding this reality helps when choosing the right architecture to support any agentic workflow, no matter where its steps fall on the spectrum. If the connectivity architecture is tied to one end (either pure MCP, LLM-driven or pure code-driven), it creates a ceiling; one made more apparent as organizations adopt more complex agentic workflows.

Based on our research, the right data architecture needs to cover three gaps to fully enable agents, no matter what level of autonomy they require. First, agents need connectivity, both in terms of breadth (Can the agents connect to the systems they need to pull data and act?) and style (Do LLMs have direct access via MCP? Does code execution have access via other standard protocols, like REST or pure SQL?). Second, agents need context. Do agents have full fidelity access to not just the data, but the metadata. Is business logic built into the architecture? Can it be discovered by the LLM? And last, agents need control (or more likely, the organizations do). Are system-specific permissions honored? Are agent actions auditable? Can permissions be down-scoped as needed?

A platform that provides connectivity, context, and control, supports agentic workflows no matter where they fall on the spectrum. Agents explore, access, and act on exactly the systems they need, at runtime, with built-in or discoverable semantic intelligence, while organizations get full visibility into every connection, question, and action.

A practical framework for placing your steps

The spectrum becomes actionable when your team can assess where existing and planned steps in agentic workflows belong. Here are the indicators we've found most useful.

A non-deterministic workflow step can be identified when input is unstructured or variable in ways that can't be fully anticipated, when the cost of being wrong is low or a human is in the review loop, or when the task genuinely benefits from variation.

The deterministic steps reliably produce consistent outputs based on the same inputs across multiple runs, let a human reviewer approve quickly, let the step run frequently enough that reliability and cost matter, build an audit trail, or let the step be written as code without meaningfully degrading quality.

A hybrid step might involve classifying a support ticket by urgency and topic before routing it, enriching a lead record by reasoning about which fields to populate from multiple sources, or deciding which of several templated responses best fits an inbound request.

The best architectures connectivity, context, and control to support the full spectrum of autonomy, giving humans and agentic workflows freedom to operate on their own terms, with the same services, the same governance, and the same credentials at every point along the way.

Build your AI agent strategy on Connect AI

Your workflows will vary — your data architecture should be ready for that. Start a free trial of CData Connect AI and give your agents governed access for broad exploration, rigid action execution, and everything in between, across 350+ enterprise data sources.

Your enterprise data, finally AI-ready.

Connect AI gives your AI assistants and agents live, governed access to 350+ enterprise systems — so they can reason over your actual business data, not just what they were trained on.