Discover how a bimodal integration strategy can address the major data management challenges facing your organization today.

Get the Report →A Comparison of JDBC Drivers for BigQuery

The metrics in this article were found using the most up-to-date drivers available as of October 2019.

BigQuery is "Google's fully managed, petabyte-scale, low-cost enterprise data warehouse for analytics" and provides a robust, widely-used way to store and access your data. For many companies, Google BigQuery is the first choice for a cloud-based analytics platform. Because BigQuery is cloud-based, there is no need for a database administrator and there is no infrastructure to maintain.

This article compares the read and write performance of the Google-supported JDBC Driver for Google BigQuery1, a Competitor JDBC Driver for Google BigQuery, and the CData Software JDBC Driver for Google BigQuery2 when working with larger datasets. We show how the CData Driver is able to read large data sets 1.5x - 13x faster than other drivers and rapidly perform million-row inserts, a task that other drivers are unable to complete.

The Data

In order to provide a reproducible comparison for reading data, we queried the tlc_yellow_trips_2018 table from the new_york_taxi_trips dataset in the public bigquery-public-data project3. The details for the tlc_yellow_trips_2018 table are below:

| Table Size | Table Number of Rows | Number of Columns | ||

|---|---|---|---|---|

| 18.1 GB | 112,234,626 | 17 |

JDBC Driver Read Performance

The main goal of this investigation was to compare the related performance of the drivers. We did this by running the same set of queries with each JDBC driver. We used LIMIT clauses to change the size of the dataset returned in each query, but we requested the same columns for each query.

Base Query

SELECT vendor_id, passenger_count, trip_distance, rate_code, store_and_fwd_flag, payment_type, fare_amount, extra, mta_tax, tip_amount, tolls_amount, imp_surcharge, total_amount, pickup_location_id, dropoff_location_id FROM `bigquery-public-data.new_york_taxi_trips.tlc_yellow_trips_2018`

Limit by Query

- LIMIT 100000

- LIMIT 1000000

- LIMIT 10000000

To test the drivers, we connected to BigQuery using a basic Java application and executed the above queries repeatedly. The results were read and stored in a new variable (based on the datatype) for each column in each row. The charts below compare using two APIs: the V2 REST API and the pre-release Storage API (NOTE: the Competitor driver does not support the Storage API). The times you see in the chart below are the average times for each query, in seconds.

Querying Data Using the REST API

| Query Times by Driver (in seconds) | |||

|---|---|---|---|

| Query | CData JDBC | Google JDBC | Competitor JDBC |

| 1 (100,000 rows) | 9.96 (+20%) | 11.89 | 12.37 |

| 2 (1,000,000 rows) | 99.56 (+11%) | 110.40 | 111.04 |

| 3 (10,000,000 rows) | 971.22 (+15%) | 1,114.76 | 1,074.95 |

As can be seen in the results, the CData drivers regularly outperformed the other drivers when using the REST API, retrieving and processing results up to 24% faster.

Querying Data Using the Storage API

When testing with the Storage API, we added an additional query for all of the data in the sample dataset.

| Query Times by Driver (in seconds) | ||

|---|---|---|

| Query | CData JDBC | Google JDBC |

| 1 (100,000 rows) | 3.64 (+126%) | 8.21 |

| 2 (1,000,000 rows) | 10.06 (+90%) | 19.16 |

| 3 (10,000,000 rows) | 82.62 (+54%) | 127.51 |

| 4 (112,234,626 rows) | 911.39 (+49%) | 1,355.37 |

As can be seen in the results, the CData Driver is able to leverage the high-throughput capabilities of the Storage API to retrieve and process even the largest data sets 1.5x faster than the Google Driver.

JDBC Driver Resource Usage

While testing the read performance of the JDBC drivers, we also measured client-side resource usage, looking specifically at memory and CPU usage. The charts below were found by running a sample Java program and using Java VisualVM to capture the CPU and memory usage. We used Java version 12 update 211 with a maximum heap size of 4.27 Gigabytes.

For this comparison, we ran the query for 10 million rows against the V2 REST API.

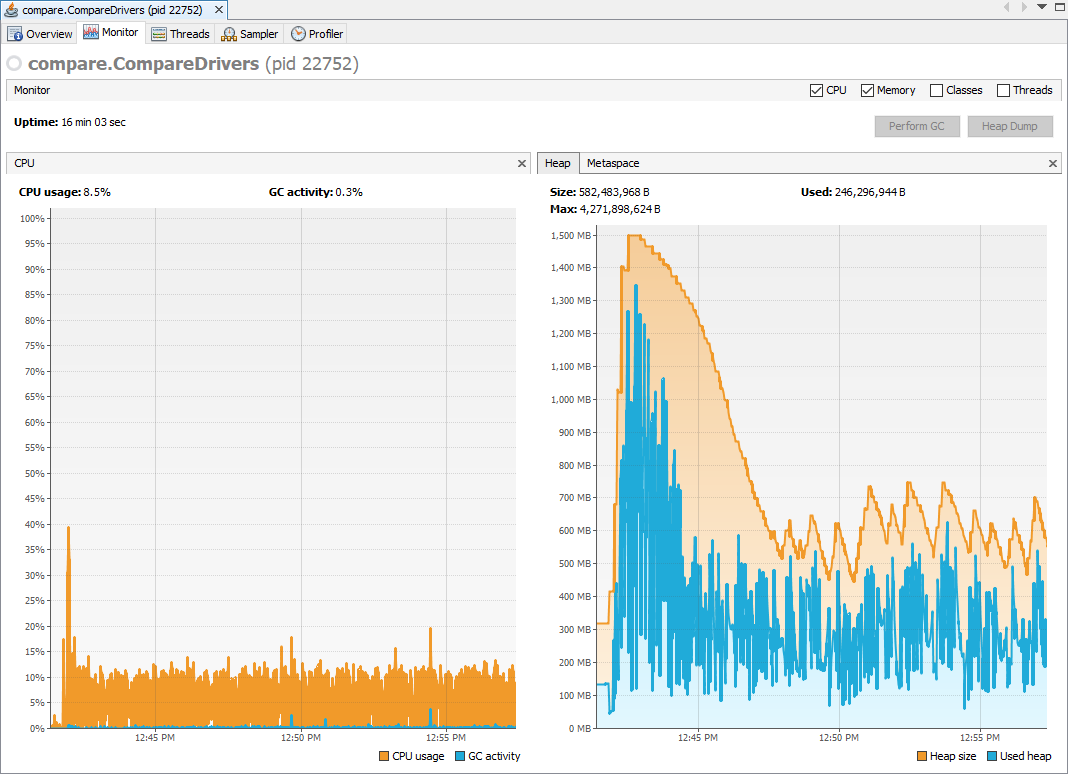

CData Driver

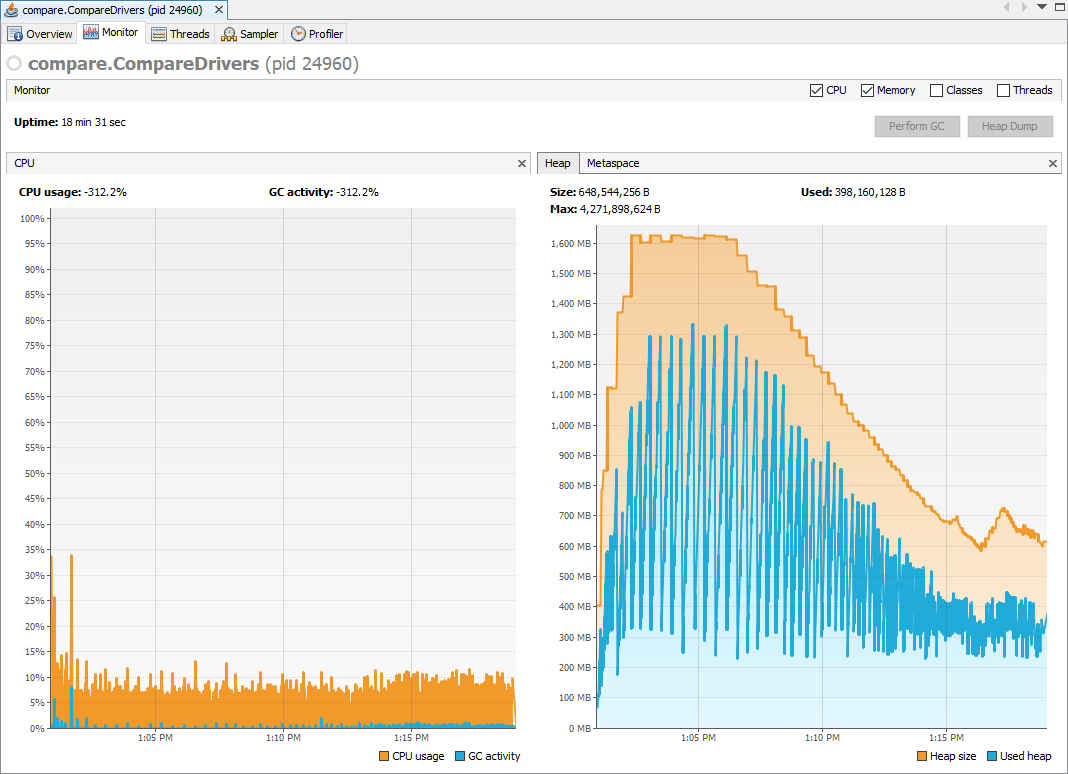

Google Driver*

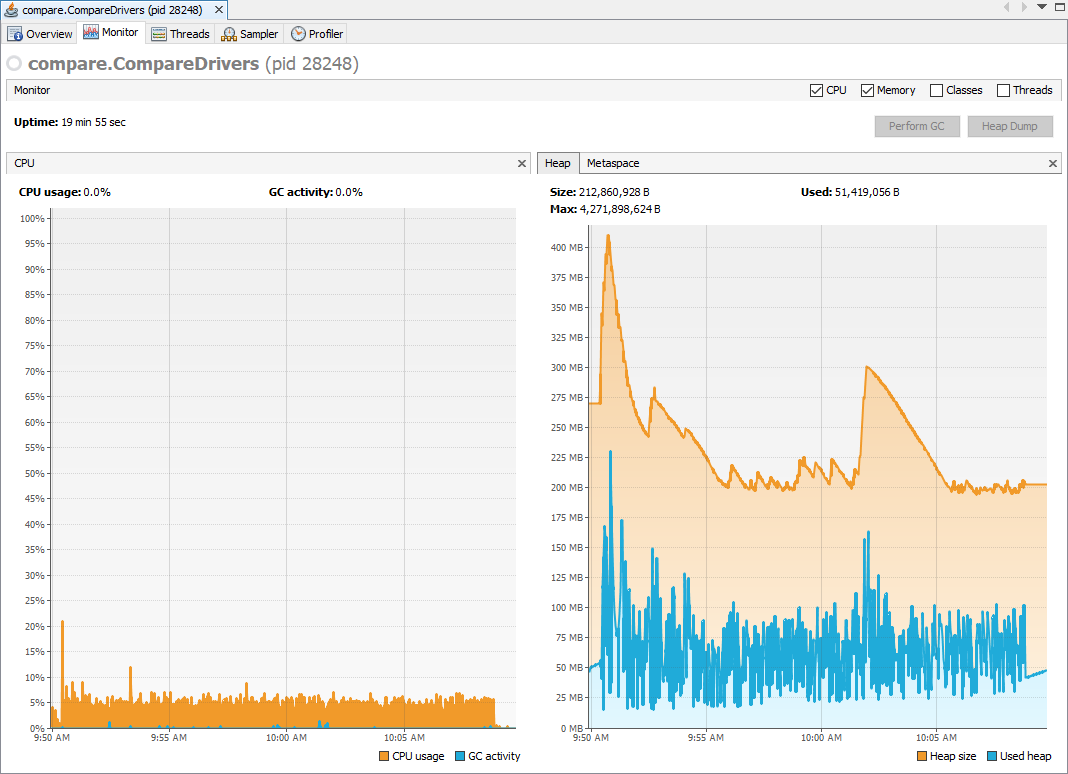

Competitor Driver*

* Note the change in scale for the Heap graph.

The CData Driver averages near 700 MB of heap usage for the first few minutes and then uses around 250 MB for the remainder of the time. Based on the CPU usage graph, we can see that the CData Driver is consistently using around 6% or 7% of the CPU available, consistently processing the data as fast as it is returned.

The native driver averages near 700 MB of heap usage and, unlike the CData driver, maintains a high level of heap usage for most of the execution time, dropping to using around 350 MB for the last fourth of the execution. The Google Driver uses around 5% of the CPU available for the length of the execution time.

The competitor driver averages around 70 MB of heap usage but is relatively inconsistent in its heap usage (compared to the CData and Google Drivers). The competitor driver uses less than 5% of the CPU available for the length of the execution time.

Million Row Challenge Revisited: 3.5x Faster

In October of 2017, we put the CData JDBC Driver to the task of inserting one million rows (a task the native driver was not even able to perform). Our updates to the Driver since then have also improved the write speed, now taking (on average) 5 minutes 47 seconds to insert the data (with 19 columns in each row). This makes the new CData JDBC Driver over 3.5x faster at inserts than previously.

In comparison, neither the Google Driver nor the Competitor driver are able to execute high-performance INSERT statements. The drivers do not implement large-scale ingestion mechanisms. The drivers are able to execute a small number of INSERT statements, but any attempts to load large data sets cause you to hit the rate limits for the BigQuery Data Manipulation Language.1

We used the same simple Java program as before to add the rows to a copy of the trips table from the nyc_tlc.yellow public dataset3. For our testing, we inserted the data in 100 batches of 10,000 rows.

Sample Code

//one batch

Connection connection = DriverManager.getConnection("jdbc:googlebigquery:InitiateOAuth=GETANDREFRESH;QueryPassthrough=false;ProjectId=" + projectId + ";DatasetId=" + datasetId + ";Timeout=240;UseLegacySQL=false;");

String cmd = "INSERT INTO TestDataset.nyc_yellow_trips_copy (vendor_id, pickup_datetime, dropoff_datetime, pickup_longitude, pickup_latitude, dropoff_longitude, dropoff_latitude, rate_code, passenger_count, trip_distance, payment_type, fare_amount, extra, mta_tax, imp_surcharge, tip_amount, tolls_amount, total_amount, store_and_fwd_flag) VALUES (?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?,?)";

PreparedStatement pstmt = connection.prepareStatement(cmd);

for (int row = 0; row < rows.length; row++){

String[] line = rows[row].split(",");

for (int i = 0 ; i < line.length; i++) {

//add parameters based on datatype

if ( (i < 3) || (i ==7) || (i==10) || (i==18) ) {

pstmt.setString(i + 1, line[i]);

} else if (i == 8) {

pstmt.setInt(i+1, Integer.parseInt(line[i]));

} else {

pstmt.setDouble(i+1, Double.parseDouble(line[i]));

}

}

pstmt.addBatch();

}

int[] affected = pstmt.executeBatch();Results

| # of Rows | # of Columns | Time (in Seconds) |

|---|---|---|

| 1,000,000 | 19 (mixed data types) | 346.64 |

Conclusion

The CData Driver's performance has improved since our last comparison and continues to offer better querying of large datasets over the Google-supported driver and the competitor driver, processing the largest dataset 54% faster by making better use of the available client resources read and process data as quickly as possible.

When it comes to inserting data, the CData Driver stands alone in its ability to rapidly insert large sets of data. In our previous article, we were able to insert 1 million rows in around 20 minutes. Now the driver performs the same task in less than 6 minutes.

Our developers have labored to optimize the performance in processing the results returned by Google to the point that the drivers seem to only be hindered by web traffic and server processing times. The same effort has been put into making the most of the BigQuery Write functionality, making the CData Drivers the best drivers for both reading from and writing to Google BigQuery.