Integrate with Azure Data Lake Storage Data using Apache Camel

Create a simple Java app that uses Apache Camel routing and the CData JDBC Driver to copy Azure Data Lake Storage data to a JSON file on disk.

Apache Camel is an open source integration framework for connecting systems that consume or produce data. When paired with the CData JDBC Driver for Azure Data Lake Storage, you can write Java apps whose Camel routes integrate with live Azure Data Lake Storage data. This article walks through creating an app in NetBeans that connects to, queries, and routes Azure Data Lake Storage data to a JSON file.

With built-in optimized data processing, the CData JDBC Driver offers unmatched performance for interacting with live Azure Data Lake Storage data. When you issue complex SQL queries to Azure Data Lake Storage, the driver pushes supported SQL operations, like filters and aggregations, directly to Azure Data Lake Storage and utilizes the embedded SQL engine to process unsupported operations client-side (often SQL functions and JOIN operations). Its built-in dynamic metadata querying allows you to work with and analyze Azure Data Lake Storage data using native data types.

Creating a New Maven/Java Project

Follow the steps below to create a new Java project and add the appropriate dependencies:

- Open NetBeans and create a new project.

- Select Maven from the categories list and Java Application from the projects list, then click Next.

- Name the project (and adjust any other properties) and click Finish.

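- In the source package, create a new Java class (we used App.java for this article) and add a main method to the class. Declare it with throws Exception, since calls used later, such as context.addRoutes(...) and Thread.sleep(...), throw checked exceptions.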

Adding Project Dependencies

With the project created, we can start adding the dependencies needed to work with live Azure Data Lake Storage data from our App. If you have not already done so, install Maven in your environment, as it is required to add the JAR file for the CData JDBC Driver to your project.

Installing the CData JDBC Driver for Azure Data Lake Storage with Maven

- Download the CData JDBC Driver for Azure Data Lake Storage installer, unzip the package, and run the JAR file to install the driver.

- Use Maven to install the JDBC Driver as a connector.

```
mvn install:install-file -Dfile="C:\Program Files\CData[product_name] 2019\lib\cdata.jdbc.adls.jar" -DgroupId="org.cdata.connectors" -DartifactId="cdata-adls-connector" -Dversion="19" -Dpackaging=jar
```

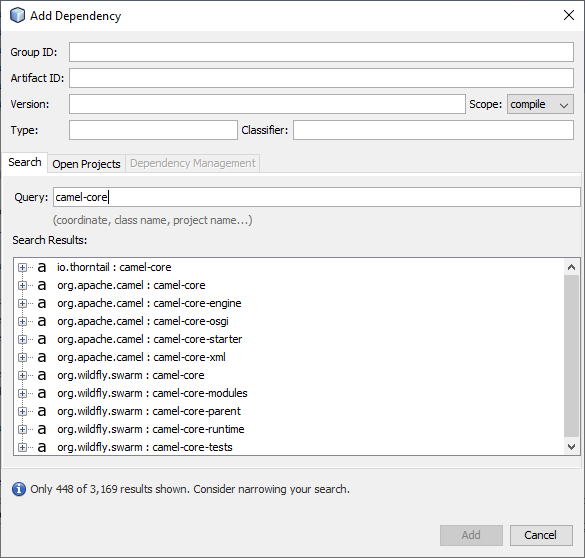

Once the JDBC Driver is installed, we can add dependencies to our project. To add a dependency, either edit the pom.xml file or right-click the Dependencies folder and click Add Dependency. The coordinates for each dependency are listed below; in the Add Dependency wizard, you can also find a library by typing its name in the Query box.

Required Dependencies

| Dependency | Group ID | Artifact ID | Version |
|---|---|---|---|
| camel-core | org.apache.camel | camel-core | 3.0.0 |
| camel-jackson | org.apache.camel | camel-jackson | 3.0.0 |
| camel-jdbc | org.apache.camel | camel-jdbc | 3.0.0 |
| camel-jsonpath | org.apache.camel | camel-jsonpath | 3.0.0 |
| cdata-adls-connector | org.cdata.connectors | cdata-adls-connector | 19 |
| commons-dbcp2 | org.apache.commons | commons-dbcp2 | 2.7.0 |
| slf4j-log4j12 | org.slf4j | slf4j-log4j12 | 1.7.30 |
| log4j | log4j | log4j | 1.2.17 |
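If you prefer to edit pom.xml directly, each row of the table becomes one `<dependency>` element. The short program below is only a convenience sketch that prints that block; `PomDependencies` is a hypothetical helper. It assumes the CData JAR was installed with the coordinates passed to the `mvn install:install-file` command above (`org.cdata.connectors:cdata-adls-connector:19`), and it uses `log4j:log4j:1.2.17`, the Log4j 1.x artifact that provides the `org.apache.log4j.BasicConfigurator` class the sample imports.

```java
import java.util.Arrays;
import java.util.List;

// Convenience sketch: prints pom.xml <dependency> entries for the coordinates in the table above.
public class PomDependencies {

    // One Maven coordinate (groupId:artifactId:version).
    static final class Dep {
        final String groupId;
        final String artifactId;
        final String version;

        Dep(String groupId, String artifactId, String version) {
            this.groupId = groupId;
            this.artifactId = artifactId;
            this.version = version;
        }

        // Formats the coordinate as a <dependency> element, indented for a <dependencies> block.
        String toXml() {
            return "    <dependency>\n"
                    + "      <groupId>" + groupId + "</groupId>\n"
                    + "      <artifactId>" + artifactId + "</artifactId>\n"
                    + "      <version>" + version + "</version>\n"
                    + "    </dependency>\n";
        }
    }

    // Assumes the CData JAR was installed as org.cdata.connectors:cdata-adls-connector:19,
    // and uses the Log4j 1.x artifact that contains org.apache.log4j.BasicConfigurator.
    static final List<Dep> DEPENDENCIES = Arrays.asList(
            new Dep("org.apache.camel", "camel-core", "3.0.0"),
            new Dep("org.apache.camel", "camel-jackson", "3.0.0"),
            new Dep("org.apache.camel", "camel-jdbc", "3.0.0"),
            new Dep("org.apache.camel", "camel-jsonpath", "3.0.0"),
            new Dep("org.cdata.connectors", "cdata-adls-connector", "19"),
            new Dep("org.apache.commons", "commons-dbcp2", "2.7.0"),
            new Dep("org.slf4j", "slf4j-log4j12", "1.7.30"),
            new Dep("log4j", "log4j", "1.2.17"));

    // Wraps the entries in a <dependencies> element ready to paste into pom.xml.
    static String toDependenciesBlock(List<Dep> deps) {
        StringBuilder sb = new StringBuilder("  <dependencies>\n");
        for (Dep d : deps) {
            sb.append(d.toXml());
        }
        return sb.append("  </dependencies>\n").toString();
    }

    public static void main(String[] args) {
        System.out.print(toDependenciesBlock(DEPENDENCIES));
    }
}
```

Paste the printed block inside the `<project>` element of pom.xml (or merge its entries into an existing `<dependencies>` element).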

Accessing Azure Data Lake Storage Data in Java Apps with Camel

After adding the required dependencies, we can use the Java DSL (Domain Specific Language) to create routes with access to live Azure Data Lake Storage data. Code snippets follow. Download the sample project (zip file) to follow along (make note of the TODO comments).

Start by importing the necessary classes into our main class.

```java
import org.apache.camel.CamelContext;
import org.apache.camel.builder.RouteBuilder;
import org.apache.camel.impl.DefaultCamelContext;
import org.apache.camel.support.SimpleRegistry;
import org.apache.commons.dbcp2.BasicDataSource;
import org.apache.log4j.BasicConfigurator;
```

Then in the main method, we configure logging, create a new BasicDataSource and add it to the registry, create a new CamelContext, and finally add a route to the context. In this sample, we route Azure Data Lake Storage data to a JSON file.

Configure Logging

```java
BasicConfigurator.configure();
```

Create a BasicDataSource

Create a BasicDataSource and set the driver class name (cdata.jdbc.adls.ADLSDriver) and URL (using the required connection properties).

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

For this, an Active Directory web application is required; several of the properties below refer to the app you create.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the TenantId property in the driver documentation for how to obtain this value.

- Directory (optional): Set this to the path which will be used to store the replicated file. If not specified, the root directory is used.
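Each property above becomes a `Key=Value` pair in the JDBC URL, in the same `jdbc:adls:Key=Value;...` shape as the sample URL in this article. The sketch below assembles a Gen 1 URL and fails fast when a required value is missing, before the URL reaches `BasicDataSource`. `Gen1Url` and its methods are hypothetical helpers, not part of the CData driver. The sketch sets only the properties listed above (other settings, such as the InitiateOAuth value in the sample URL, are not added) and rejects values containing `;` instead of guessing at the driver's quoting rules.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical helper, not part of the CData driver: assembles a jdbc:adls: URL for a
// Gen 1 account from the listed properties and fails fast if a required one is missing.
public class Gen1Url {

    static String build(String account, String clientId, String clientSecret,
                        String tenantId, String directory) {
        Map<String, String> props = new LinkedHashMap<>();   // keeps insertion order in the URL
        props.put("Schema", "ADLSGen1");
        props.put("Account", required("Account", account));
        props.put("OAuthClientId", required("OAuthClientId", clientId));
        props.put("OAuthClientSecret", required("OAuthClientSecret", clientSecret));
        props.put("TenantId", required("TenantId", tenantId));
        if (directory != null && !directory.isEmpty()) {
            props.put("Directory", checked("Directory", directory)); // optional; root is used when omitted
        }
        StringJoiner url = new StringJoiner(";", "jdbc:adls:", "");
        for (Map.Entry<String, String> e : props.entrySet()) {
            url.add(e.getKey() + "=" + e.getValue());
        }
        return url.toString();
    }

    // Rejects null or empty values for required properties.
    static String required(String name, String value) {
        if (value == null || value.isEmpty()) {
            throw new IllegalArgumentException(name + " is required for ADLSGen1");
        }
        return checked(name, value);
    }

    // A ';' would break the simple Key=Value;... format; quoting is deliberately not handled here.
    static String checked(String name, String value) {
        if (value.indexOf(';') >= 0) {
            throw new IllegalArgumentException(name + " contains ';', which this sketch does not quote");
        }
        return value;
    }

    public static void main(String[] args) {
        // Placeholder values; substitute your own account, app, and tenant details.
        System.out.println(build("myAccount", "myClientId", "myClientSecret", "myTenantId", null));
    }
}
```

With the placeholder values in `main`, it prints `jdbc:adls:Schema=ADLSGen1;Account=myAccount;OAuthClientId=myClientId;OAuthClientSecret=myClientSecret;TenantId=myTenantId`, a string that could then be passed to `basic.setUrl(...)`.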

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the AccessKey property in the driver documentation for how to obtain it.

- Directory (optional): Set this to the path which will be used to store the replicated file. If not specified, the root directory is used.
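Instead of building a URL, it can help to check an existing one, for example a string copied from the connection string designer, for the Gen 2 properties listed above. The sketch below parses a `jdbc:adls:Key=Value;...` URL and reports what is missing. `Gen2UrlCheck` is hypothetical, not part of the CData driver; it only checks presence and the Schema value, cannot tell whether a key is actually valid, and matches property names exactly as written, which may be stricter than the driver itself.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical pre-flight check, not part of the CData driver: parses a jdbc:adls: URL and
// reports which of the listed Gen 2 properties are missing before the URL is used.
public class Gen2UrlCheck {

    static final String PREFIX = "jdbc:adls:";
    static final List<String> REQUIRED = Arrays.asList("Account", "FileSystem", "AccessKey");

    // Splits "Key=Value;Key=Value" into an ordered map. Each pair is split on the FIRST '='
    // only, because Base64 access keys commonly end in '='. Assumes values contain no ';'.
    static Map<String, String> parse(String url) {
        if (!url.startsWith(PREFIX)) {
            throw new IllegalArgumentException("not a jdbc:adls: URL: " + url);
        }
        Map<String, String> props = new LinkedHashMap<>();
        for (String pair : url.substring(PREFIX.length()).split(";")) {
            if (pair.trim().isEmpty()) continue;   // tolerate empty segments such as ";;"
            int eq = pair.indexOf('=');
            if (eq < 0) throw new IllegalArgumentException("malformed property: " + pair);
            props.put(pair.substring(0, eq).trim(), pair.substring(eq + 1).trim());
        }
        return props;
    }

    // Returns one message per problem; an empty list means the Gen 2 basics are present.
    static List<String> problemsForGen2(String url) {
        Map<String, String> props = parse(url);
        List<String> problems = new ArrayList<>();
        if (!"ADLSGen2".equals(props.get("Schema"))) {
            problems.add("Schema must be ADLSGen2");
        }
        for (String key : REQUIRED) {
            String value = props.get(key);
            if (value == null || value.isEmpty()) problems.add(key + " is missing");
        }
        return problems;
    }

    public static void main(String[] args) {
        String url = "jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;"
                + "AccessKey=myAccessKey;InitiateOAuth=GETANDREFRESH";
        List<String> problems = problemsForGen2(url);
        System.out.println(problems.isEmpty() ? "looks complete" : "problems: " + problems);
    }
}
```

Extra properties such as InitiateOAuth are simply carried along in the parsed map; the check only looks for the Gen 2 properties listed above.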

```java
BasicDataSource basic = new BasicDataSource();
basic.setDriverClassName("cdata.jdbc.adls.ADLSDriver");
basic.setUrl("jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;AccessKey=myAccessKey;InitiateOAuth=GETANDREFRESH");
```

The CData JDBC Driver includes a built-in connection string designer to help you configure the connection URL.

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Azure Data Lake Storage JDBC Driver. Either double-click the JAR file or run it from the command line:

```
java -jar cdata.jdbc.adls.jar
```

Fill in the connection properties and copy the connection string to the clipboard.

Add the BasicDataSource to the Registry and Create a CamelContext

```java
SimpleRegistry reg = new SimpleRegistry();
reg.bind("myDataSource", basic);
CamelContext context = new DefaultCamelContext(reg);
```

Add Routing to the CamelContext

The routing below uses a timer component to run one time and passes a SQL query to the JDBC Driver. The results are marshaled as JSON (and formatted for pretty print) and passed to a file component to write to disk as a JSON file.

```java
context.addRoutes(new RouteBuilder() {
    @Override
    public void configure() {
        from("timer://foo?repeatCount=1")
            // Resources is used here as an example ADLS table; query any table or view your connection exposes.
            .setBody(constant("SELECT * FROM Resources LIMIT 10"))
            .to("jdbc:myDataSource")
            .marshal().json(true)
            .to("file:C:\\Users\\USER\\Documents?fileName=resources.json");
    }
});
```
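To make the route's data flow concrete, the sketch below reproduces it in plain Java. By default, the camel-jdbc endpoint replaces the message body with the query result as a list of rows, each a map from column name to value; the JSON data format then turns that list into text, and the file component writes it to disk. `RowsToJson` is an illustration only: it uses neither Camel nor Jackson, its whitespace differs from Jackson's pretty printer, and the column names and file name in `main` are hypothetical.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Plain-Java illustration of the route's data flow (no Camel, no Jackson):
// query rows as List<Map<String, Object>> -> pretty-printed JSON text -> file on disk.
public class RowsToJson {

    // Serializes rows as a JSON array of objects, one property per line.
    static String toJson(List<Map<String, Object>> rows) {
        StringBuilder sb = new StringBuilder("[");
        for (int i = 0; i < rows.size(); i++) {
            sb.append(i == 0 ? "\n  {" : ",\n  {");
            boolean first = true;
            for (Map.Entry<String, Object> e : rows.get(i).entrySet()) {
                sb.append(first ? "\n    " : ",\n    ");
                first = false;
                sb.append(quote(e.getKey())).append(": ").append(value(e.getValue()));
            }
            sb.append(first ? "}" : "\n  }");   // empty row -> {}
        }
        return sb.append(rows.isEmpty() ? "]" : "\n]").toString();
    }

    // Numbers and booleans are written bare; null, NaN, and infinities become JSON null.
    static String value(Object v) {
        if (v == null) return "null";
        if (v instanceof Double || v instanceof Float) {
            double d = ((Number) v).doubleValue();
            return Double.isFinite(d) ? v.toString() : "null";
        }
        if (v instanceof Number || v instanceof Boolean) return v.toString();
        return quote(v.toString());   // strings, dates, etc. fall back to their text form
    }

    // Escapes quotes, backslashes, and control characters as JSON requires.
    static String quote(String s) {
        StringBuilder sb = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"':  sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) sb.append(String.format("\\u%04x", (int) c));
                    else sb.append(c);
            }
        }
        return sb.append('"').toString();
    }

    // Writes the JSON text as UTF-8, creating or overwriting the file.
    static Path writeJson(List<Map<String, Object>> rows, Path file) throws IOException {
        return Files.write(file, toJson(rows).getBytes(StandardCharsets.UTF_8));
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical row standing in for a query result.
        List<Map<String, Object>> rows = new ArrayList<>();
        Map<String, Object> row = new LinkedHashMap<>();
        row.put("Name", "example.csv");
        row.put("Size", 42);
        rows.add(row);
        Path out = writeJson(rows, Paths.get("rows.json"));
        System.out.println("wrote " + out.toAbsolutePath());
    }
}
```

In the real route, Camel and Jackson take care of all of this; the sketch is only meant to show what shape the data has at each step.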

Managing the CamelContext Lifecycle

With the route defined, start the CamelContext to begin the lifecycle. In this example, we wait 10 seconds and then shut down the context.

```java
context.start();
Thread.sleep(10000);
context.stop();
```
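The fixed `Thread.sleep(10000)` keeps `main` alive long enough for the one-shot route to run, but it always waits the full 10 seconds and stops the context even if the export has not finished. A common alternative is to wait for a completion signal, bounded by a timeout. The sketch below shows that pattern with plain `java.util.concurrent` classes standing in for the Camel pieces; `RunOnceLifecycle` is hypothetical and is not Camel's API. Within Camel itself, `NotifyBuilder` is one facility aimed at this kind of wait; check its documentation for your Camel version.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

// Plain java.util.concurrent sketch of a "fire once, wait, then shut down" lifecycle.
// Unlike a fixed Thread.sleep, it returns as soon as the job signals completion.
public class RunOnceLifecycle {

    // Schedules the job once after delayMs, then waits up to timeoutMs for it to finish.
    // Returns true if the job ended (normally or by throwing) in time, false otherwise.
    static boolean runOnce(Runnable job, long delayMs, long timeoutMs) {
        ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();
        CountDownLatch done = new CountDownLatch(1);
        try {
            // Single firing, comparable in spirit to timer://foo?repeatCount=1.
            scheduler.schedule(() -> {
                try {
                    job.run();
                } finally {
                    done.countDown();   // signal completion even if the job throws
                }
            }, delayMs, TimeUnit.MILLISECONDS);
            return done.await(timeoutMs, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();   // preserve the interrupt status for the caller
            return false;
        } finally {
            scheduler.shutdownNow();   // comparable in spirit to context.stop()
        }
    }

    public static void main(String[] args) {
        boolean finished = runOnce(() -> System.out.println("exporting rows..."), 100, 10_000);
        System.out.println(finished ? "finished; shutting down" : "timed out; shutting down");
    }
}
```

The timeout still acts as a safety net, so a hung job cannot keep the process alive indefinitely.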

Free Trial, Sample Project & Technical Support

You now have a working Java application that uses Camel to route data from Azure Data Lake Storage to a JSON file. Download a free, 30-day trial of the CData JDBC Driver for Azure Data Lake Storage and the sample project (make note of the TODO comments) and start working with your live Azure Data Lake Storage data in Apache Camel. Reach out to our Support Team if you have any questions.