Build Visualizations of Azure Data Lake Storage Data in Birst

Use CData drivers and the Birst Cloud Agent to build real-time visualizations of Azure Data Lake Storage data in Birst.

Birst is a cloud business intelligence (BI) tool and analytics platform that helps organizations quickly understand and optimize complex processes. When paired with the CData JDBC Driver for Azure Data Lake Storage, you can connect to live Azure Data Lake Storage data through the Birst Cloud Agent and build real-time visualizations. In this article, we walk you through, step-by-step, how to connect to Azure Data Lake Storage using the Cloud Agent and create dynamic reports in Birst.

With powerful data processing capabilities, the CData JDBC Driver offers unmatched performance for live Azure Data Lake Storage data operations in Birst. When you issue complex SQL queries from Birst to Azure Data Lake Storage, the driver pushes supported SQL operations, like filters and aggregations, directly to Azure Data Lake Storage and utilizes the embedded SQL Engine to process unsupported operations client-side (often SQL functions and JOIN operations). With built-in dynamic metadata querying, the JDBC driver enables you to visualize and analyze Azure Data Lake Storage data using native Birst data types.

Configure a JDBC Connection to Azure Data Lake Storage Data in Birst

Before creating the Birst project, install the Birst Cloud Agent, which Birst needs in order to work with the installed JDBC Driver. Then copy the JAR file for the JDBC Driver (and the LIC file, if it exists) to the /drivers/ directory in the installation location for the Cloud Agent.

With the driver and Cloud Agent installed, you are ready to begin.

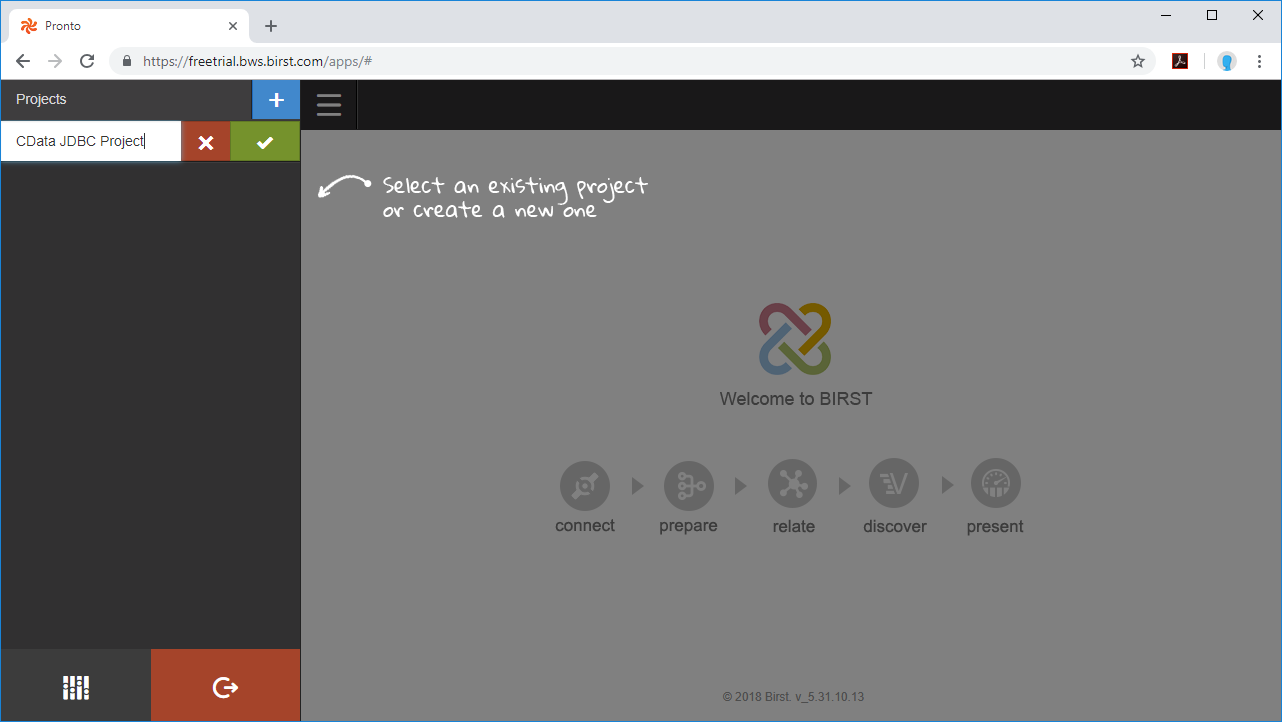

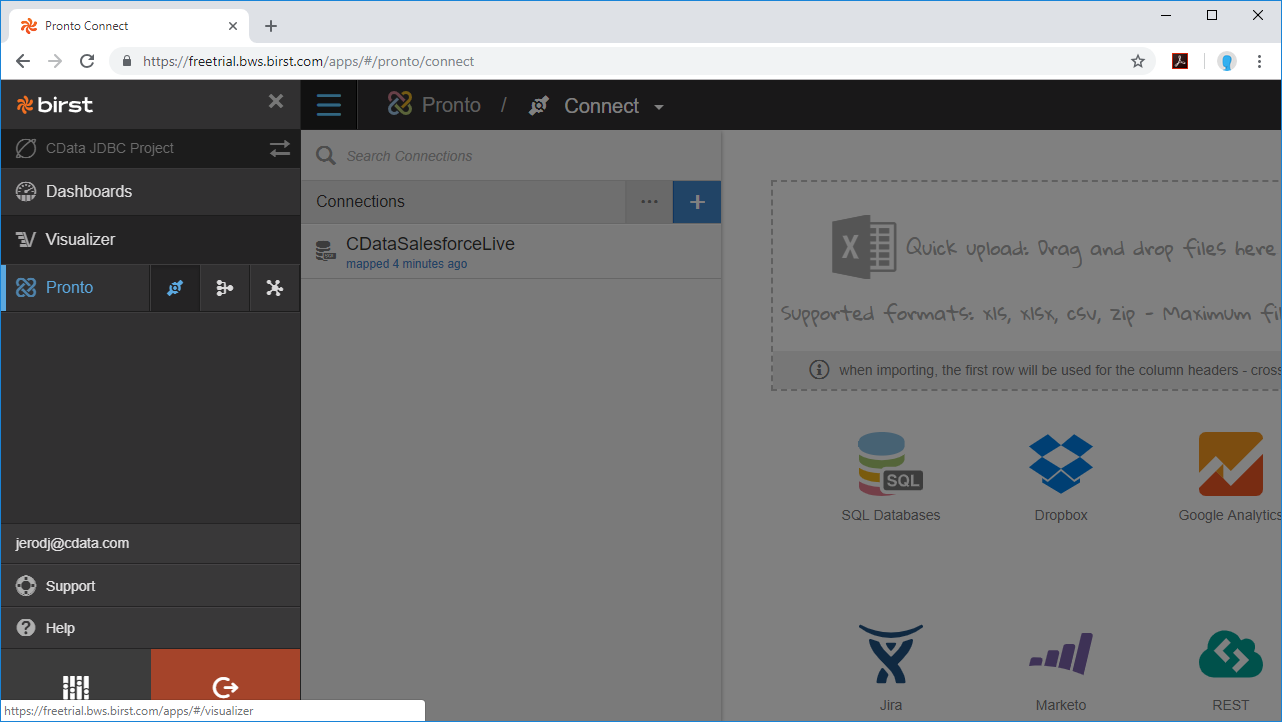

- Create a new project in Birst.

![Create a new Project in Birst]()

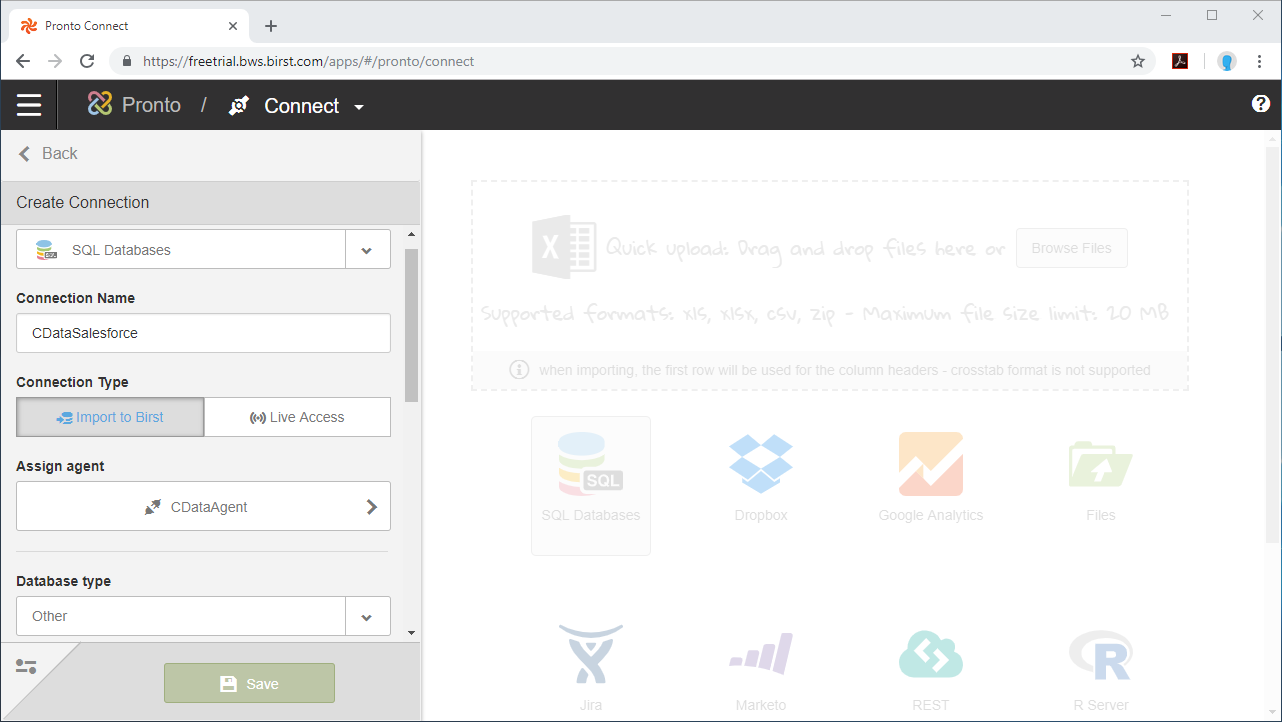

- Name the connection (e.g. CDataADLS).

- Choose Live Access.

- Select an agent.

- Set Database Type to Other.

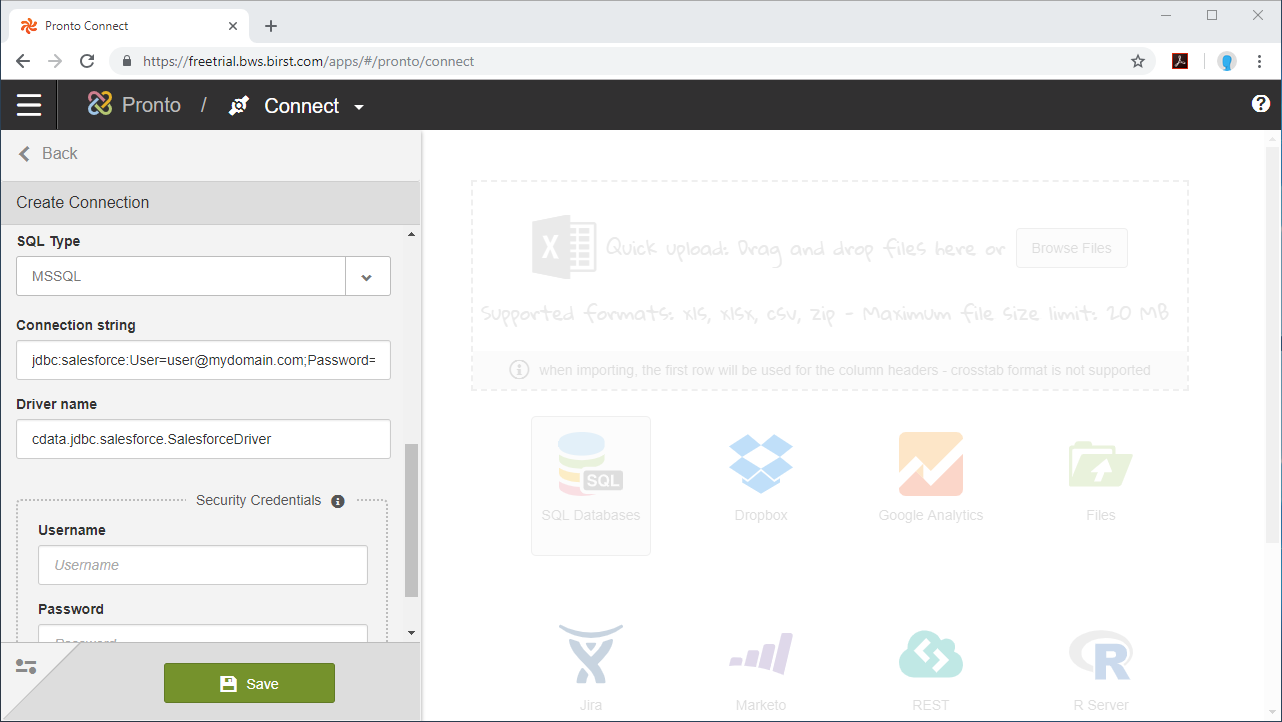

- Set SQL Type to MSSQL.

- Set the Connection string.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

This requires an Active Directory web application, which you must create before connecting.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the driver documentation for this property for more information on how to acquire it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the driver documentation for this property for more information on how to acquire it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
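The two property lists above map directly onto a JDBC URL of the form jdbc:adls:Name=Value;Name=Value. As a rough illustration only, the sketch below assembles such a URL from the listed properties. The class and method names (AdlsJdbcUrl, gen1, gen2) are hypothetical helpers, not part of the driver, and the sketch assumes that values contain no semicolons and that an unset Directory can simply be omitted.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical helper for illustration; not part of the CData driver.
class AdlsJdbcUrl {
    static final String PREFIX = "jdbc:adls:";

    /** Joins properties as Name=Value pairs separated by semicolons. */
    static String build(Map<String, String> props) {
        StringJoiner joined = new StringJoiner(";");
        for (Map.Entry<String, String> e : props.entrySet()) {
            String value = e.getValue();
            if (value == null || value.isEmpty()) {
                continue; // skip optional properties that were left unset
            }
            joined.add(e.getKey() + "=" + value);
        }
        return PREFIX + joined;
    }

    /** Gen 1: OAuth 2.0 through an Azure AD web application. */
    static String gen1(String account, String clientId, String clientSecret,
                       String tenantId, String directory) {
        Map<String, String> p = new LinkedHashMap<>(); // keeps insertion order
        p.put("Schema", "ADLSGen1");
        p.put("Account", account);
        p.put("OAuthClientId", clientId);
        p.put("OAuthClientSecret", clientSecret);
        p.put("TenantId", tenantId);
        p.put("Directory", directory); // optional; root is used when omitted
        return build(p);
    }

    /** Gen 2: authentication with an account access key. */
    static String gen2(String account, String fileSystem, String accessKey,
                       String directory) {
        Map<String, String> p = new LinkedHashMap<>();
        p.put("Schema", "ADLSGen2");
        p.put("Account", account);
        p.put("FileSystem", fileSystem);
        p.put("AccessKey", accessKey);
        p.put("Directory", directory); // optional; root is used when omitted
        return build(p);
    }

    public static void main(String[] args) {
        // Placeholder values; substitute your own account details.
        System.out.println(gen2("myAccount", "myFileSystem", "myAccessKey", null));
    }
}
```

The resulting string is what goes into the Connection string field in Birst. Avoid committing real keys or secrets to source control if you script this step.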

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Azure Data Lake Storage JDBC Driver. Either double-click the JAR file or execute the JAR file from the command line.

java -jar cdata.jdbc.adls.jar

Fill in the connection properties and copy the connection string to the clipboard.

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

When you configure the JDBC URL, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.

Below is a typical JDBC connection string for Azure Data Lake Storage:

jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;AccessKey=myAccessKey;InitiateOAuth=GETANDREFRESH

- Set the Driver Name to cdata.jdbc.adls.ADLSDriver and click Save.

NOTE: Since authentication to Azure Data Lake Storage is managed from the connection string, you can leave Security Credentials blank.
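If the connection fails in Birst, it can help to try the same JDBC URL outside Birst first. The following is a minimal sketch using only the standard java.sql API. The class name AdlsConnectionCheck and the isOnClasspath helper are made up for this example, and it assumes the driver JAR is on the Java classpath when you run it.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;

// Hypothetical stand-alone check; not part of Birst or the CData driver.
class AdlsConnectionCheck {
    /** Returns true if the named class can be loaded from the classpath. */
    static boolean isOnClasspath(String className) {
        try {
            Class.forName(className);
            return true;
        } catch (ClassNotFoundException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        if (args.length != 1) {
            System.err.println("usage: java AdlsConnectionCheck <jdbc-url>");
            return;
        }
        // Driver class name as entered in the Driver Name field in Birst.
        String driver = "cdata.jdbc.adls.ADLSDriver";
        if (!isOnClasspath(driver)) {
            System.err.println("Driver JAR not on classpath: " + driver);
            return;
        }
        // Open a connection with the same URL you pasted into Birst.
        try (Connection conn = DriverManager.getConnection(args[0])) {
            // isValid performs a lightweight liveness check (10 s timeout).
            System.out.println("Connected: " + conn.isValid(10));
        } catch (SQLException e) {
            System.err.println("Connection failed: " + e.getMessage());
        }
    }
}
```

Run it with the driver JAR on the classpath and the JDBC URL as the single argument. An error here points to the URL or credentials rather than to the Birst or Cloud Agent configuration.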

Configure Azure Data Lake Storage Data Objects

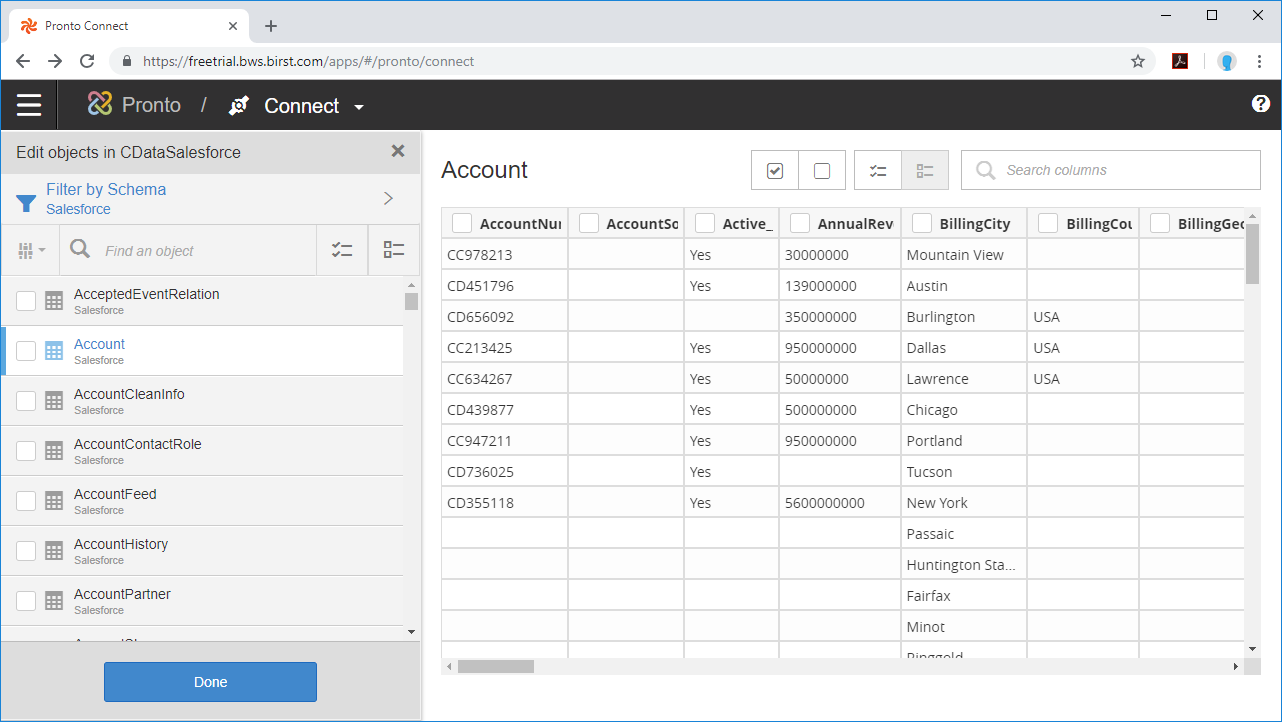

Now that the connection is configured, we are ready to configure the schema for the dataset, choosing the tables, views, and columns we wish to visualize.

- Select the Schema (e.g. ADLS).

- Click on Tables and/or Views to connect to those entities and click Apply.

- Select the Tables and Columns you want to access and click Done.
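To preview which tables and columns the driver exposes before selecting them in Birst, you can query the JDBC metadata directly. This sketch relies on the standard DatabaseMetaData.getColumns call; the class name ListAdlsColumns and the formatColumn helper are illustrative only, and the actual table and column names depend on your Azure Data Lake Storage account.

```java
import java.sql.Connection;
import java.sql.DatabaseMetaData;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;

// Hypothetical utility for illustration; not part of Birst or the driver.
class ListAdlsColumns {
    /** Formats one metadata row as "Table.Column (TYPE)". */
    static String formatColumn(String table, String column, String type) {
        return table + "." + column + " (" + type + ")";
    }

    public static void main(String[] args) throws SQLException {
        if (args.length != 1) {
            System.err.println("usage: java ListAdlsColumns <jdbc-url>");
            return;
        }
        try (Connection conn = DriverManager.getConnection(args[0])) {
            DatabaseMetaData meta = conn.getMetaData();
            // "%" matches every table and every column in all schemas.
            try (ResultSet cols = meta.getColumns(null, null, "%", "%")) {
                while (cols.next()) {
                    System.out.println(formatColumn(
                            cols.getString("TABLE_NAME"),
                            cols.getString("COLUMN_NAME"),
                            cols.getString("TYPE_NAME")));
                }
            }
        }
    }
}
```

The output should correspond to the tables, views, and columns offered in the Birst dialog, though Birst may present the data types under its own names.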

With the objects configured, you can perform any data preparation and discover any relationships in your data using the Pronto Prepare and Relate tools.

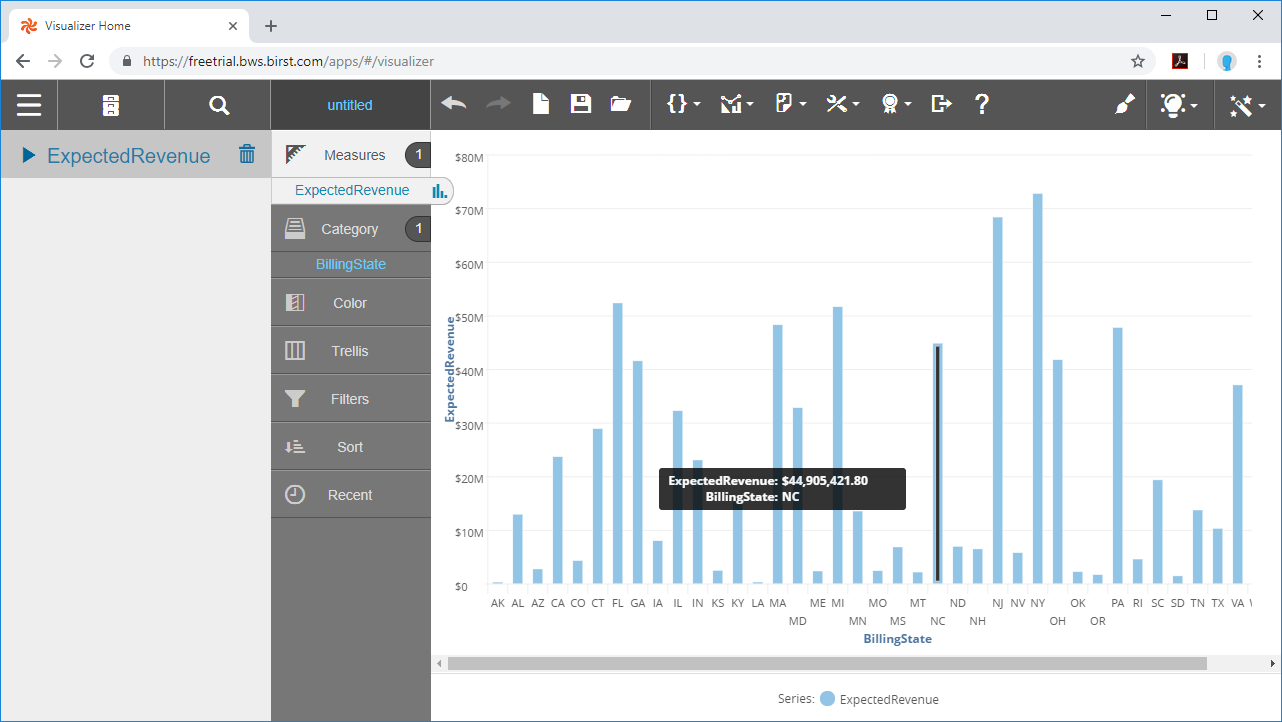

Build a Visualization

After you prepare your data and define relationships between the connected objects, you are ready to build your visualization.

- Select the Visualizer tool from the menu.

- Select Measures & Categories from your objects.

- Select and configure the appropriate visualization for the Measure(s) you selected.

Using the CData JDBC Driver for Azure Data Lake Storage with the Cloud Agent and Birst, you can easily create robust visualizations and reports on Azure Data Lake Storage data. Download a free, 30-day trial and start building Birst visualizations today.