Integrating Azure Data Lake Storage Data in Talend Cloud Data Management Platform

Connect Azure Data Lake Storage Data with Talend Cloud Data Management Platform using the CData JDBC Driver for Azure Data Lake Storage.

Qlik's Talend Cloud Data Management Platform supports various data environments, enabling analytics for smarter decisions, operational data sharing, data and application modernization, and establishing data excellence for risk reduction. Paired with the CData JDBC Driver for Azure Data Lake Storage, it lets you improve data integration, quality, and governance for your Azure Data Lake Storage data. This article shows how to connect to Azure Data Lake Storage using the CData JDBC Driver in Talend Cloud Data Management Platform, and then view the data for use in your workflow.

Prerequisites

Before connecting the CData JDBC Driver to view and work with your data in Talend Cloud Data Management Platform, download and install the latest version of Talend Studio on your system. You also need the following:

- A Talend Cloud Data Management account with appropriate permissions.

- The CData JDBC Driver for Azure Data Lake Storage, which can be downloaded from the CData website.

Connect to Azure Data Lake Storage in Talend as a JDBC data source

Access Talend Cloud Data Management Platform

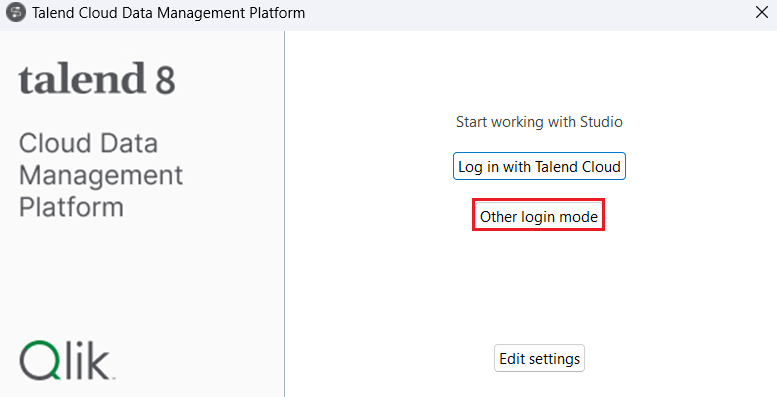

- Run the Talend Cloud Data Management Platform installed on your local system and click on Other Login Mode.

![Log into Talend Cloud Data Management Platform locally]()

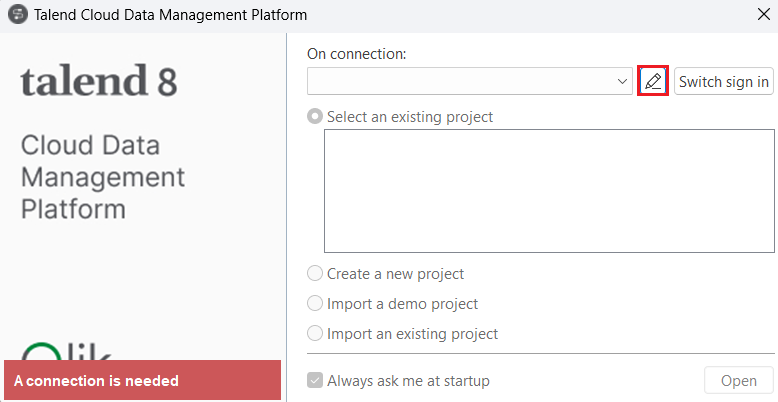

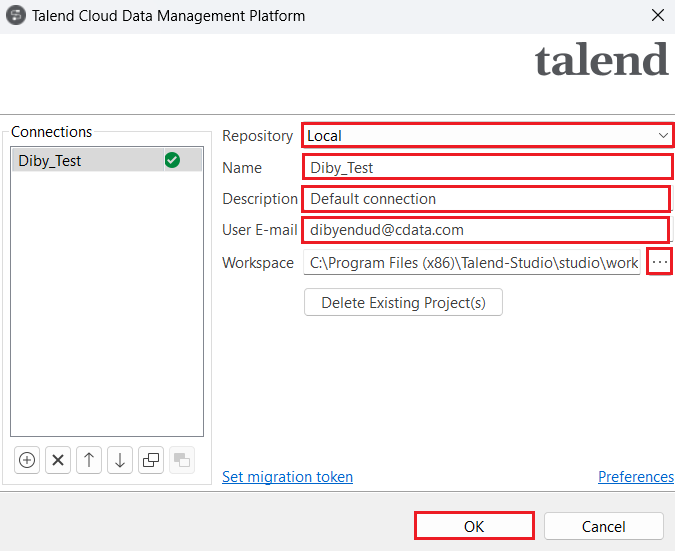

- Click on Manage Connections. Set Repository to "Local" and enter the Name, Description and User E-mail in the respective spaces. Set the Workspace path and click on OK.

![Manage a connection.]()

![Create a connection in Talend Data Management Cloud]()

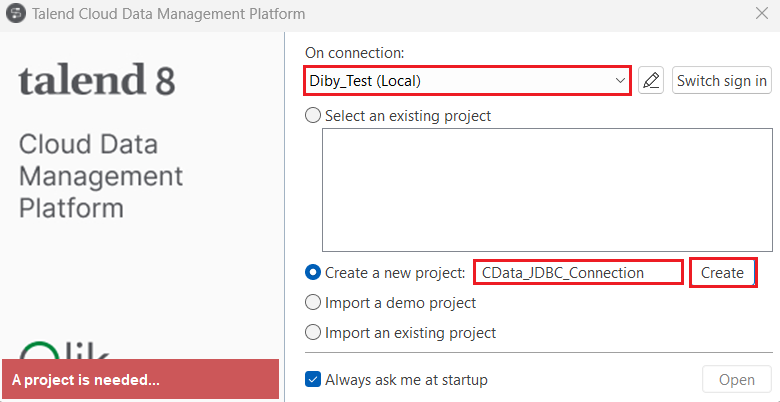

- Now, select the Create a new project radio button to add a new project name and click on Create.

![Create a new project.]()

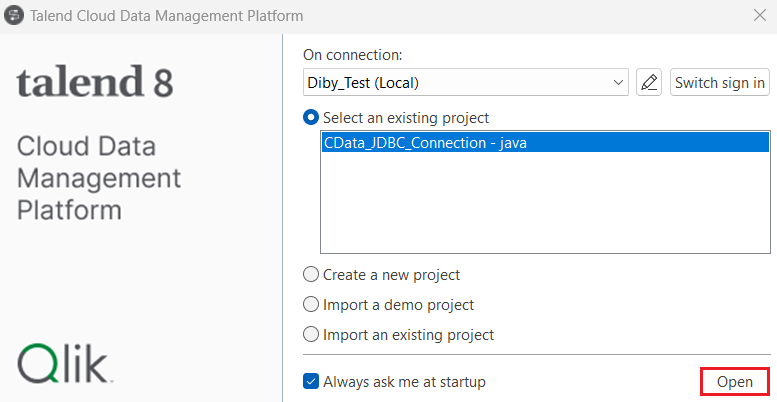

- The new project should appear under the Select an existing project section. Click on Open. The Talend Cloud Data Management Platform workspace opens up.

![Open the Talend Cloud Data Management Platform workspace.]()

Create a new connection

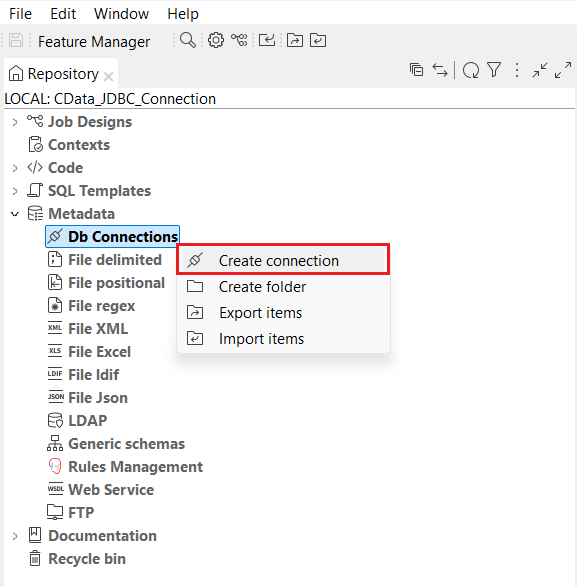

- In the navigation pane, locate and expand the Metadata dropdown. Right-click on Db Connections and select Create Connection.

![Create a new connection in the Talend platform under Db connections.]()

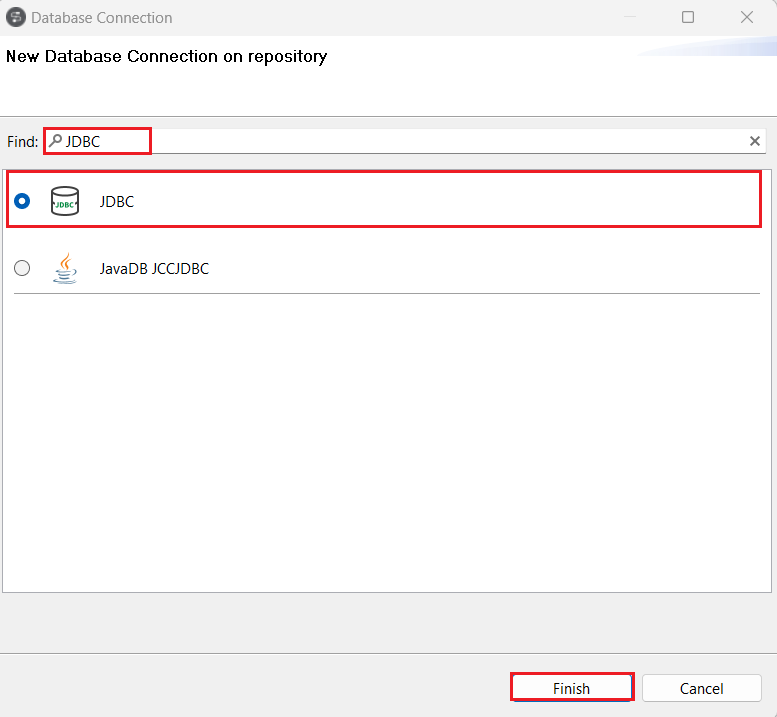

- Search for and select 'JDBC' in the Find section of the Database Connection window. Then, click on Finish.

![Search and select the JDBC connector.]()

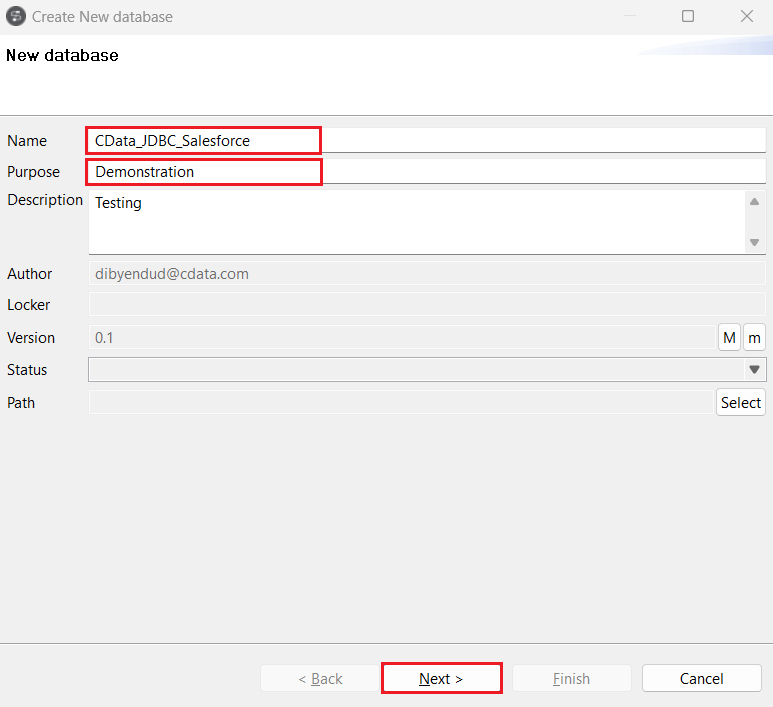

- Enter the Name, Purpose and Description of the new database in Talend where you need to load the Azure Data Lake Storage data. Click on Next.

![Enter details of the database to load the source data.]()

- Generate a JDBC URL for connecting to Azure Data Lake Storage, beginning with jdbc:adls: followed by a series of semicolon-separated connection string properties.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication, which requires an Active Directory web application.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the documentation for this property for more information on how to acquire it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the documentation for this property for more information on how to acquire it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
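The property lists for Gen 1 and Gen 2 accounts can be checked in code before a URL is pasted into Talend. The following is a minimal sketch and not part of the CData driver: the class `AdlsUrlBuilder` and its `build` method are hypothetical helpers, the required-property sets simply mirror the lists above (Directory is treated as optional), and property values are assumed to contain no semicolons.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.StringJoiner;

/** Hypothetical helper: assembles a jdbc:adls: URL from connection properties. */
public class AdlsUrlBuilder {
    // Required properties per schema, taken from the lists in this article.
    private static final Map<String, List<String>> REQUIRED = Map.of(
        "ADLSGen1", List.of("Account", "OAuthClientId", "OAuthClientSecret", "TenantId"),
        "ADLSGen2", List.of("Account", "FileSystem", "AccessKey"));

    public static String build(String schema, Map<String, String> props) {
        List<String> required = REQUIRED.get(schema);
        if (required == null) {
            throw new IllegalArgumentException("Unknown schema: " + schema);
        }
        // Fail early if a required property is absent or empty.
        for (String name : required) {
            String value = props.get(name);
            if (value == null || value.isEmpty()) {
                throw new IllegalArgumentException("Missing required property: " + name);
            }
        }
        // Join Schema plus all supplied properties as semicolon-separated pairs.
        StringJoiner url = new StringJoiner(";", "jdbc:adls:", "");
        url.add("Schema=" + schema);
        props.forEach((key, value) -> url.add(key + "=" + value));
        return url.toString();
    }

    public static void main(String[] args) {
        // Placeholder values; a LinkedHashMap keeps the properties in insertion order.
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Account", "myAccount");
        props.put("FileSystem", "myFileSystem");
        props.put("AccessKey", "myAccessKey");
        System.out.println(build("ADLSGen2", props));
    }
}
```

Calling `build("ADLSGen1", props)` with the same Gen 2 properties throws an `IllegalArgumentException`, because the OAuth properties required for Gen 1 are missing.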

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Azure Data Lake Storage JDBC Driver. Either double-click the JAR file or execute the jar file from the command-line.

java -jar cdata.jdbc.adls.jar

Fill in the connection properties and copy the connection string to the clipboard.

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

A typical JDBC URL is below:

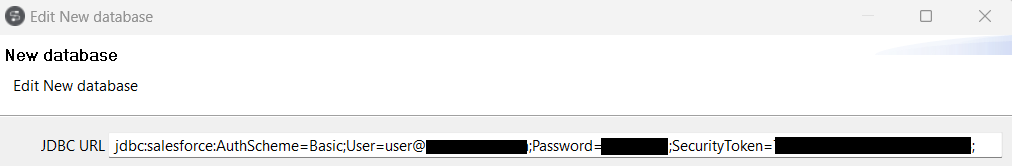

jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;AccessKey=myAccessKey;InitiateOAuth=GETANDREFRESH

- Enter the JDBC URL copied from the CData JDBC Driver for Azure Data Lake Storage in Edit new database.

![Enter the JDBC URL (Salesforce is shown).]()

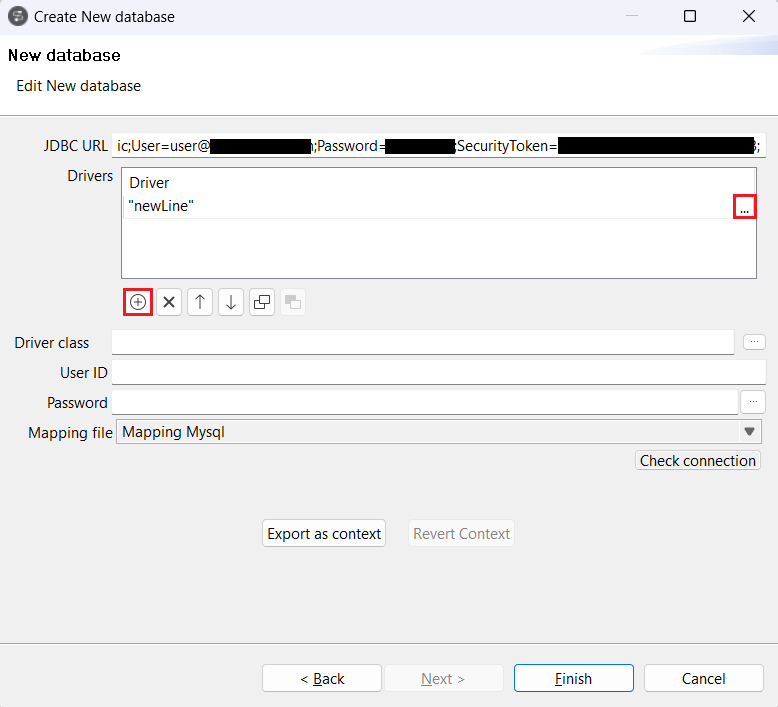

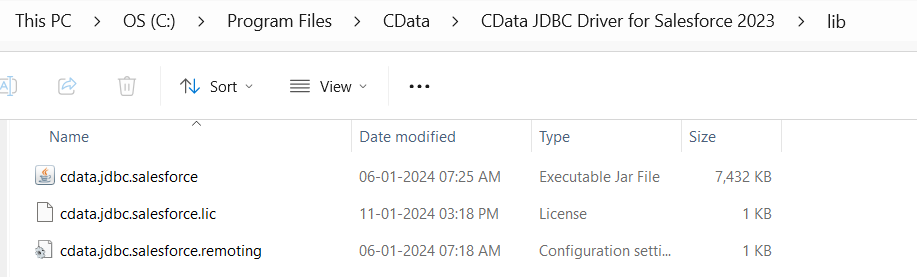

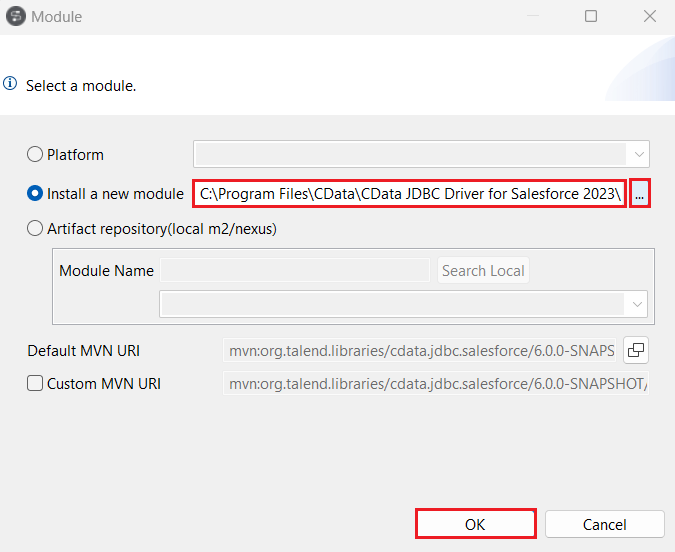

- Click on the "(+) Add" button under Drivers. A 'newLine' will appear in the Drivers board. Click on (...) at the end of the new line, select the Install a new module radio button, and click on (...) to add the path to the JAR file, located in the 'lib' subfolder of the installation directory. Click on OK.

![Add a new line in Drivers board to add the path to the Azure Data Lake Storage JAR file (Salesforce is shown).]()

![The lib folder which contains the Azure Data Lake Storage JAR file (Salesforce is shown).]()

![Add the JAR installation path in 'Install a new module' (Salesforce is shown).]()

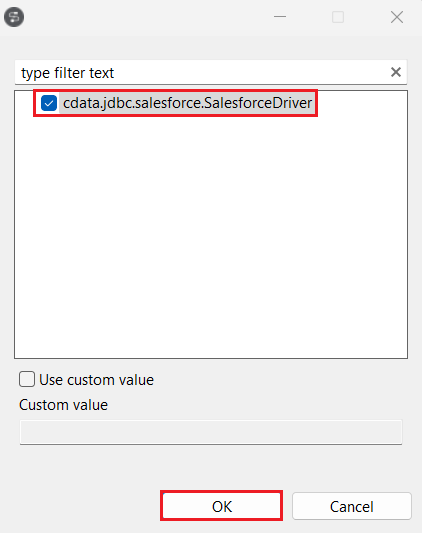

- Select the Driver Class from the cdata.jdbc.adls package in the JAR file you added in the previous step (CData driver classes typically follow the pattern cdata.jdbc.adls.ADLSDriver).

![Add the Driver class.]()
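If Talend does not list a driver class, one way to confirm outside Talend that the JAR and the class name match is to try loading the class. This is a sketch under assumptions: `DriverClassCheck` is a hypothetical helper, and the default class name `cdata.jdbc.adls.ADLSDriver` follows CData's usual naming pattern and should be verified against the driver documentation.

```java
/** Hypothetical helper: reports whether a JDBC driver class is on the classpath. */
public class DriverClassCheck {
    /** Returns true if the named class can be loaded from the current classpath. */
    public static boolean isLoadable(String className) {
        try {
            Class.forName(className);
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            // Class is missing, or it was found but could not be linked or initialized.
            return false;
        }
    }

    public static void main(String[] args) {
        // Assumed class name; pass a different one as the first argument if needed.
        String driverClass = args.length > 0 ? args[0] : "cdata.jdbc.adls.ADLSDriver";
        System.out.println(driverClass + " loadable: " + isLoadable(driverClass));
    }
}
```

Run it with the driver JAR from the 'lib' subfolder on the classpath, for example `java -cp cdata.jdbc.adls.jar:. DriverClassCheck` (use `;` instead of `:` as the separator on Windows).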

Test the new connection

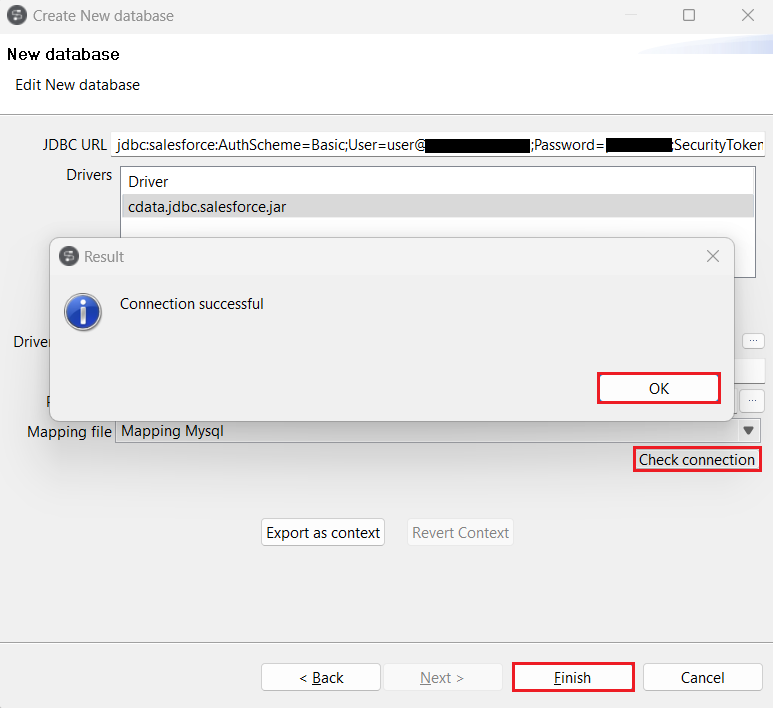

- Click on Check Connection. If the entered details are correct, a "Connection successful" confirmation prompt will appear. Click on "OK" and "Finish".

![Check the connection.]()
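The same check can be approximated outside Talend with plain JDBC, which helps separate URL problems from Talend configuration problems. This is a minimal sketch, assuming the driver JAR is on the classpath and registers itself with `DriverManager`; `ConnectionCheck` is a hypothetical helper, and the URL in `main` uses placeholder values.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;

/** Hypothetical helper: tests whether a JDBC URL yields a usable connection. */
public class ConnectionCheck {
    /** Returns true if a connection opens and passes a validity check within 5 seconds. */
    public static boolean canConnect(String jdbcUrl) {
        try (Connection conn = DriverManager.getConnection(jdbcUrl)) {
            return conn.isValid(5);
        } catch (SQLException e) {
            // Covers a missing driver, bad credentials, and network failures alike.
            System.err.println("Connection failed: " + e.getMessage());
            return false;
        }
    }

    public static void main(String[] args) {
        // Placeholder values; replace with your own connection properties.
        String url = "jdbc:adls:Schema=ADLSGen2;Account=myAccount;"
                   + "FileSystem=myFileSystem;AccessKey=myAccessKey";
        System.out.println(canConnect(url) ? "Connection successful" : "Connection failed");
    }
}
```

If no registered driver accepts the URL, `DriverManager.getConnection` throws an `SQLException`, so the method returns false instead of crashing.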

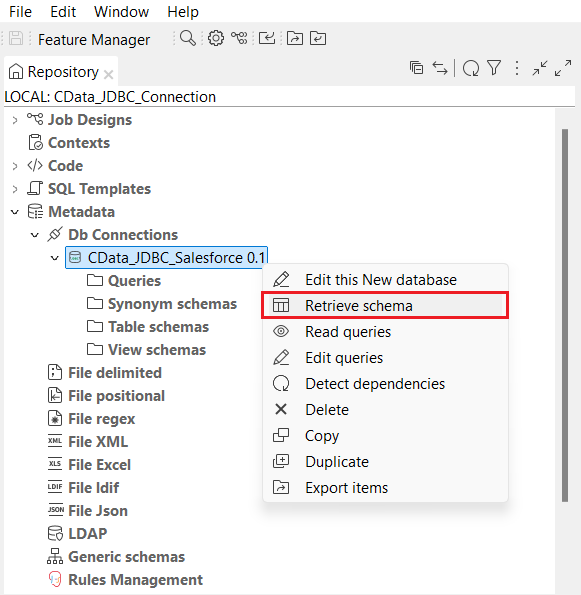

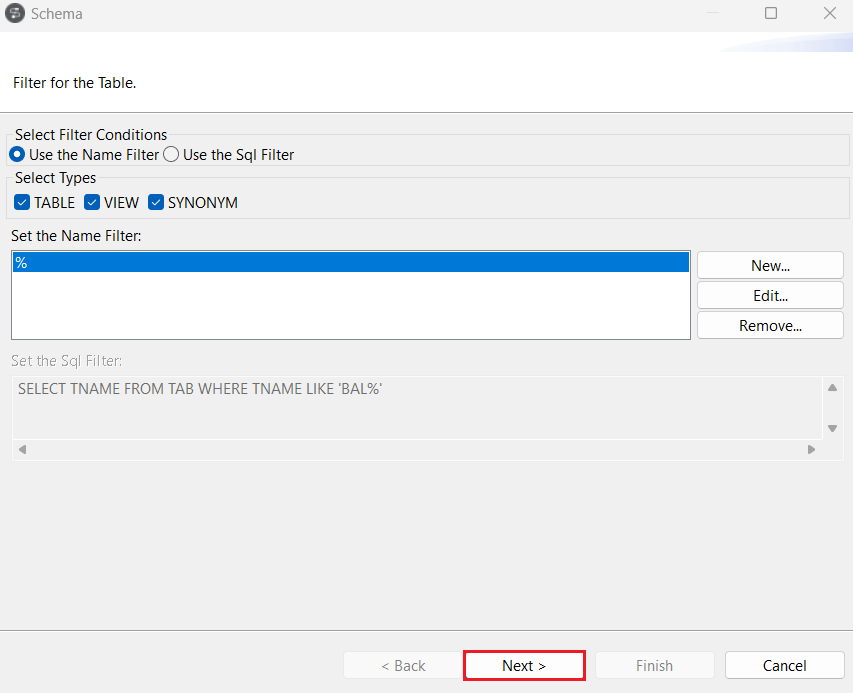

- Once the connection is established, right-click on the newly created connection and select Retrieve Schema. You can also apply filters to limit which schema objects are retrieved. Click on Next.

![Retrieve schema from the datasource.]()

![Add the necessary filters.]()

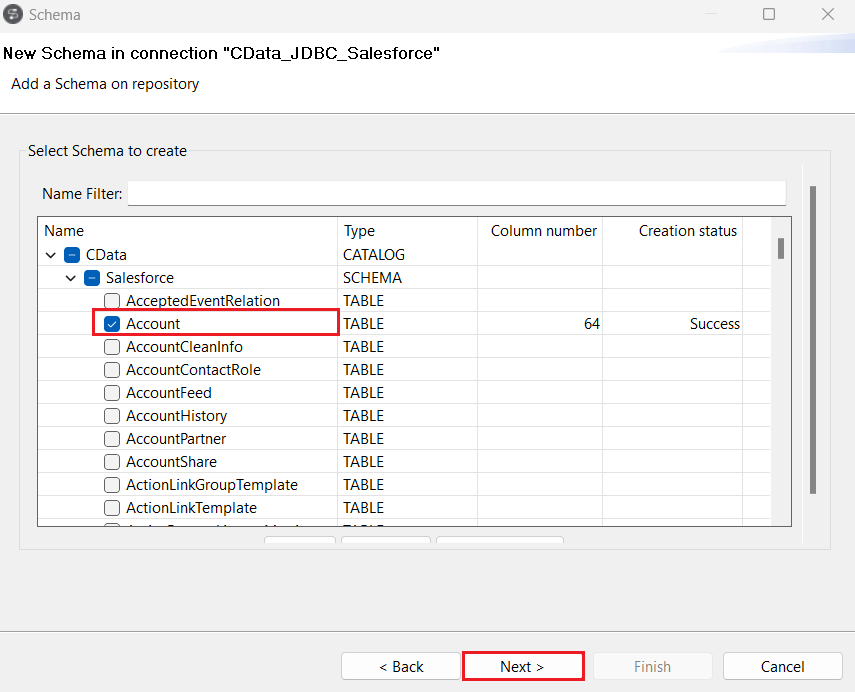

- Expand the "CData" catalog in the Schema window and select the tables you want to import from the Azure Data Lake Storage schema. Click on Next.

![Select a table from the Azure Data Lake Storage schema.]()

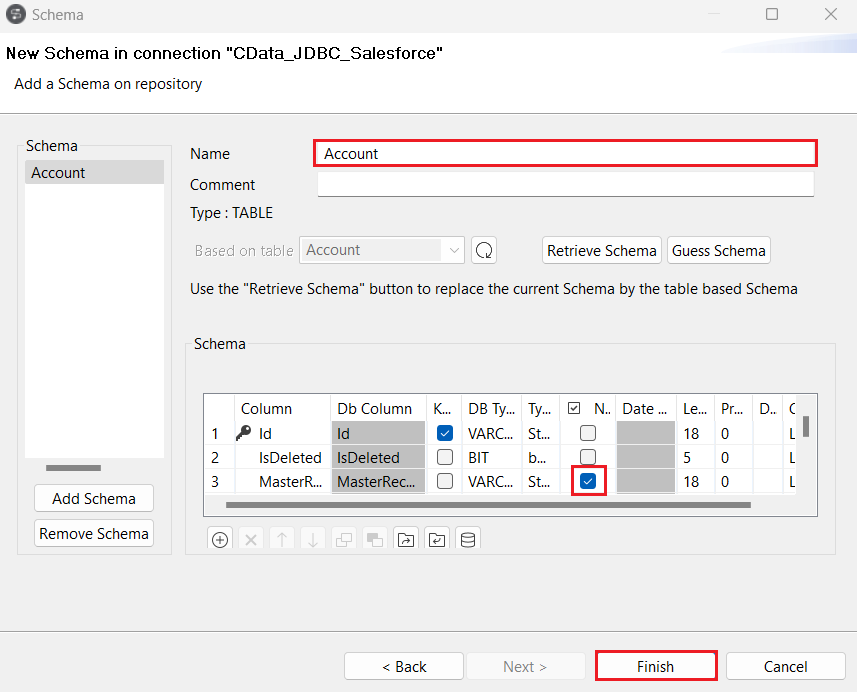

- In the next step, select the columns you want to view from the table and click on Finish.

![Select the necessary columns from the selected table.]()

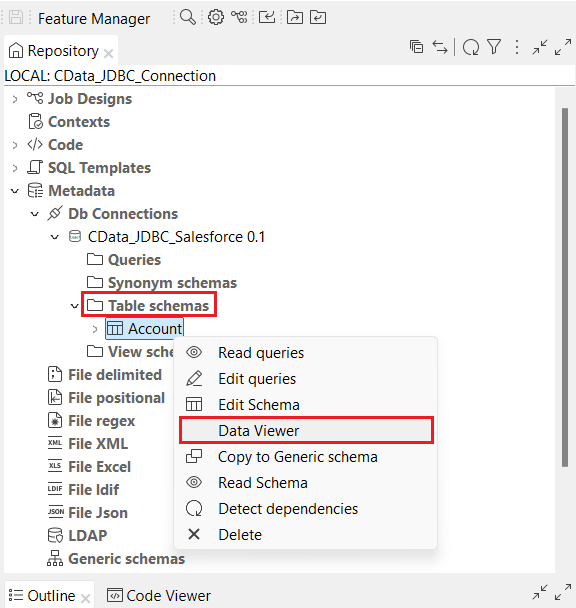

- All the selected tables from the Azure Data Lake Storage schema are now populated under the Table Schemas section of the JDBC connection.

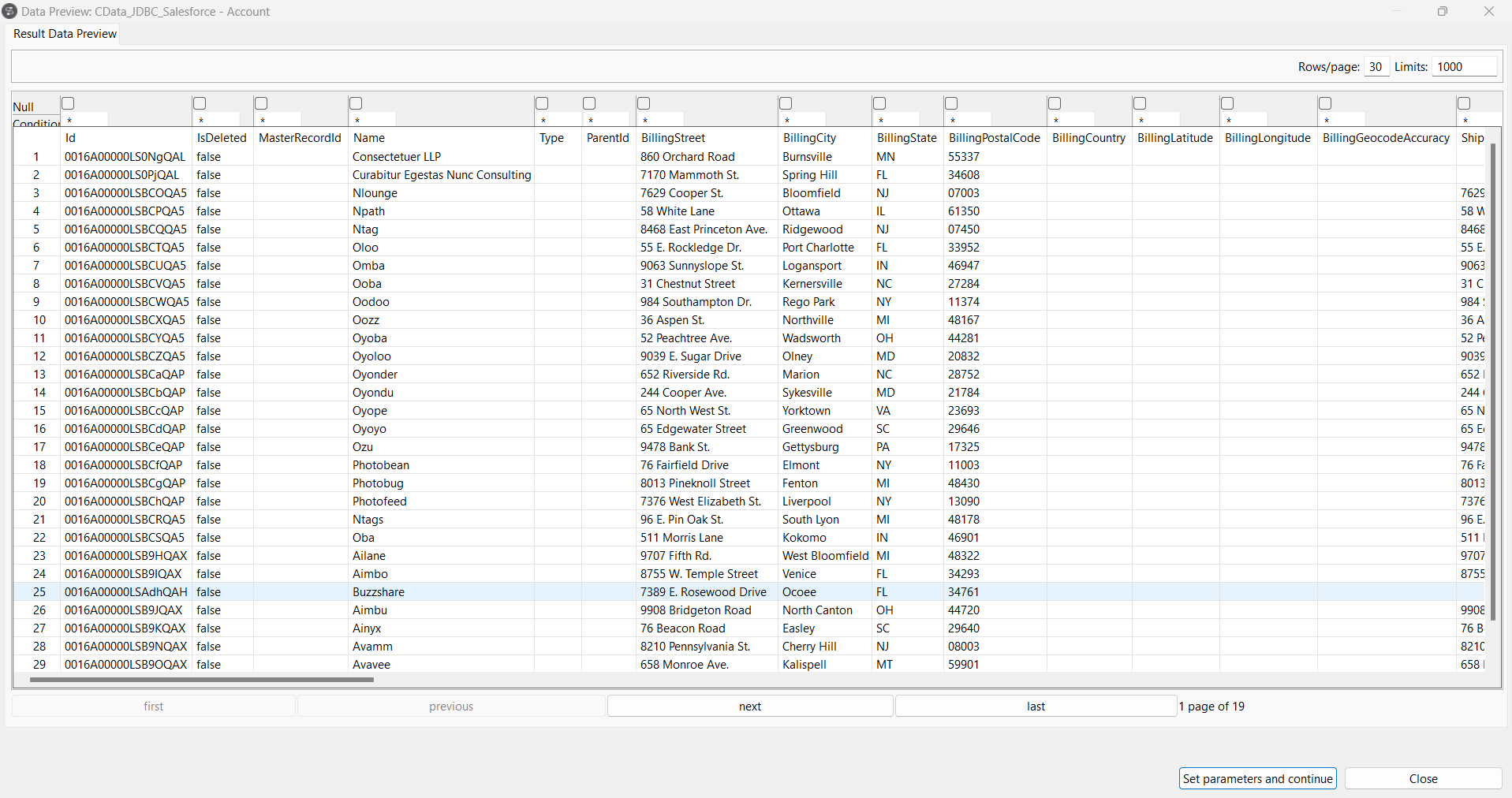

- Right-click on any of the selected tables and click on Data Viewer to preview the data from the data source.

![Click on Data Viewer to view the source data.]()

![Display the source table view.]()

Get Started Today

Download a free, 30-day trial of the CData JDBC Driver for Azure Data Lake Storage and integrate Azure Data Lake Storage data into Talend Cloud Data Management Platform. Reach out to our Support Team if you have any questions.