Prepare, Blend, and Analyze Azure Data Lake Storage Data in Alteryx Designer

Build workflows to access live Azure Data Lake Storage data for self-service data analytics.

The CData ODBC Driver for Azure Data Lake Storage enables access to live data from Azure Data Lake Storage under the ODBC standard, allowing you to work with Azure Data Lake Storage data in a wide variety of BI, reporting, and ETL tools, or directly with familiar SQL queries. This article shows how to connect to Azure Data Lake Storage data using an ODBC connection in Alteryx Designer to perform self-service BI, data preparation, data blending, and advanced analytics.

The CData ODBC drivers are built for high performance when interacting with live Azure Data Lake Storage data in Alteryx Designer, thanks to optimized data processing built into the driver. When you issue complex SQL queries from Alteryx Designer to Azure Data Lake Storage, the driver pushes supported SQL operations, such as filters and aggregations, directly to Azure Data Lake Storage and uses the embedded SQL engine to process unsupported operations (often SQL functions and JOIN operations) client-side. With built-in dynamic metadata querying, you can visualize and analyze Azure Data Lake Storage data using native Alteryx data field types.

Connect to Azure Data Lake Storage Data

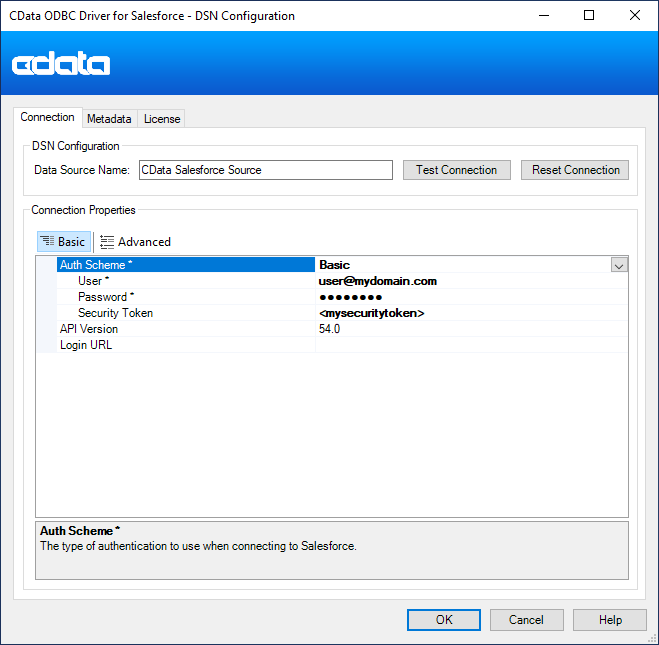

- If you have not already done so, provide values for the required connection properties in the data source name (DSN). You can configure the DSN using the built-in Microsoft ODBC Data Source Administrator. This is also the last step of the driver installation. See the "Getting Started" chapter in the Help documentation for a guide to using the Microsoft ODBC Data Source Administrator to create and configure a DSN.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

This requires an Active Directory web application, which you must create before configuring the connection.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the TenantId property in the Help documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
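As a rough illustration, the Gen 1 properties above could also be collected into an ODBC connection string, for example when testing the DSN from a script. The sketch below only assembles the string. The helper name `build_connection_string` and the account, application, tenant, and directory values are placeholders, and whether the driver accepts these properties as overrides alongside `DSN=` is an assumption to verify in the Help documentation.

```python
def build_connection_string(properties: dict) -> str:
    """Join property names and values into an ODBC-style 'Key=Value;' string.

    Properties with empty values are skipped so that optional settings
    (such as Directory) fall back to the driver's defaults.
    """
    parts = [f"{name}={value}" for name, value in properties.items() if value]
    return ";".join(parts) + ";"


# Placeholder values only; replace them with the details of your own
# account and Active Directory application.
gen1_properties = {
    "DSN": "CData Azure Data Lake Storage Source",  # DSN name used in this article
    "Schema": "ADLSGen1",
    "Account": "myaccount",
    "OAuthClientId": "my-app-id",
    "OAuthClientSecret": "my-app-key",
    "TenantId": "my-tenant-id",
    "Directory": "",  # left empty: the root directory is used
}

gen1_connection_string = build_connection_string(gen1_properties)
print(gen1_connection_string)
```

Keeping the properties in a dictionary makes it easy to review which values are set before they are handed to an ODBC client.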

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the AccessKey property in the Help documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
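Because the two account generations need different properties, it can help to check a set of values before entering them in the DSN. The following is a minimal sketch of such a check. The names `REQUIRED_BY_SCHEMA` and `missing_properties` are hypothetical, and Directory is treated as optional because the root directory is used when it is not specified.

```python
# Required properties per schema, as listed in this article.
REQUIRED_BY_SCHEMA = {
    "ADLSGen1": ("Account", "OAuthClientId", "OAuthClientSecret", "TenantId"),
    "ADLSGen2": ("Account", "FileSystem", "AccessKey"),
}


def missing_properties(properties: dict) -> list:
    """Return the required property names that are absent or empty."""
    schema = properties.get("Schema")
    if schema not in REQUIRED_BY_SCHEMA:
        raise ValueError(f"Schema must be one of {sorted(REQUIRED_BY_SCHEMA)}")
    return [name for name in REQUIRED_BY_SCHEMA[schema] if not properties.get(name)]


# Placeholder Gen 2 values with the access key deliberately left out.
gen2_properties = {
    "Schema": "ADLSGen2",
    "Account": "myaccount",
    "FileSystem": "myfilesystem",
}

gen2_missing = missing_properties(gen2_properties)
print(gen2_missing)
```

A check like this only confirms that values are present; it does not validate them against Azure.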

When you configure the DSN, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.

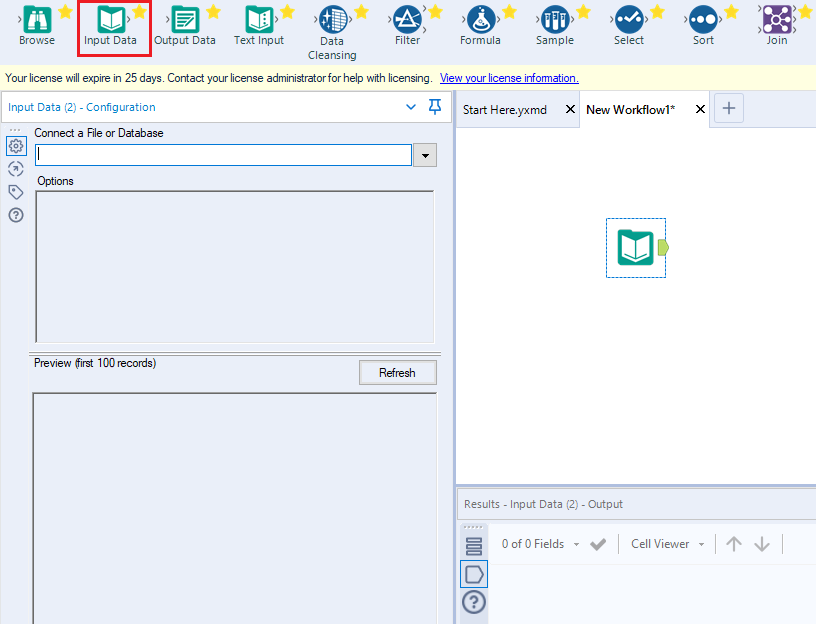

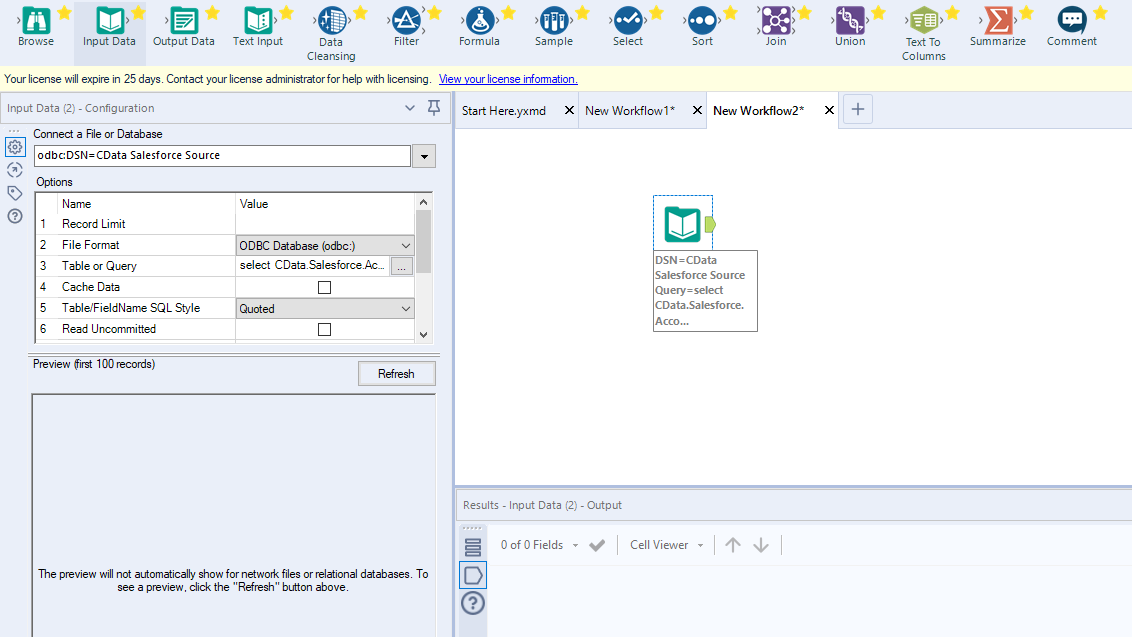

- Open Alteryx Designer and create a new workflow.

- Drag and drop a new input data tool onto the workflow.

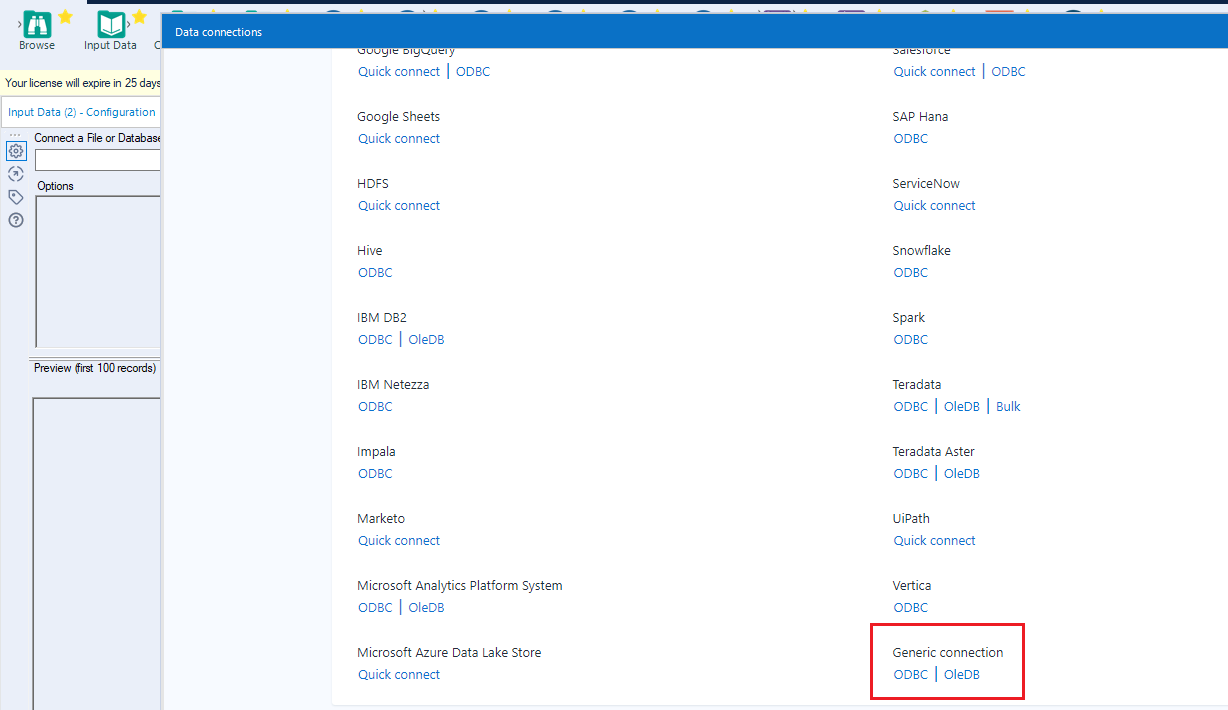

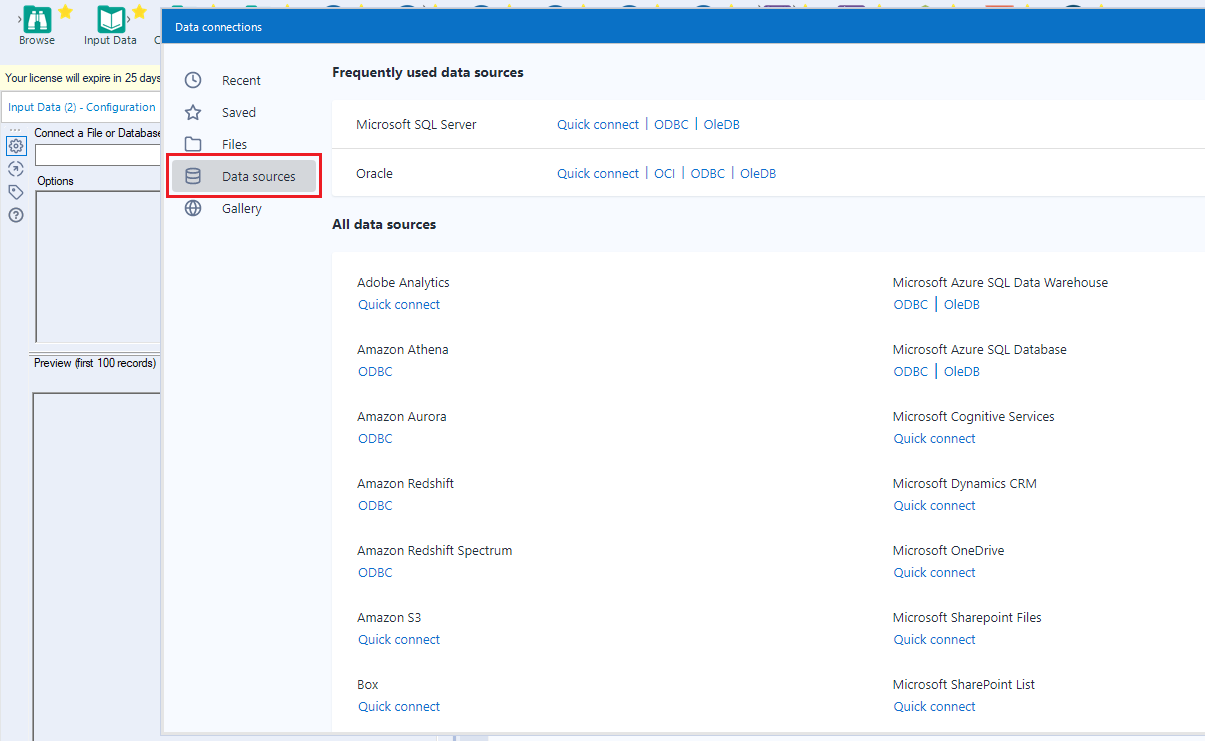

- Click the drop-down under Connect a File or Database and select the Data sources tab.

- Navigate to the end of the page and click "ODBC" under "Generic connection".

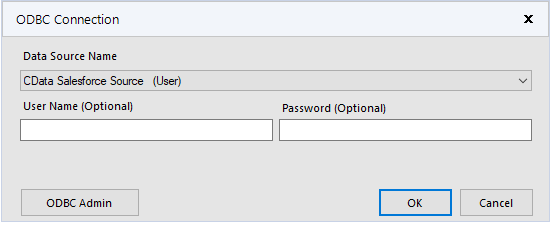

- Select the DSN (CData Azure Data Lake Storage Source) that you configured for use in Alteryx.

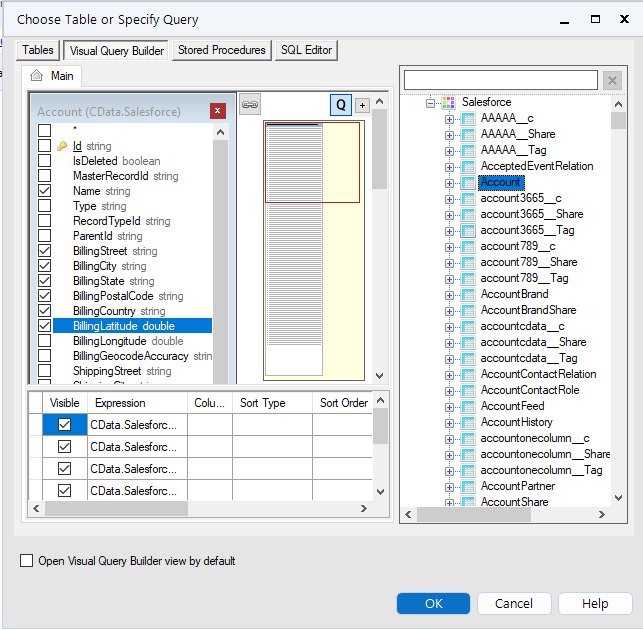

- In the wizard that opens, drag and drop the table to be queried into the "Query Builder" box. Select the fields you wish to include in your query by checking their boxes. Where possible, the complex queries generated by the filters and aggregations will be pushed down to Azure Data Lake Storage, while any unsupported operations (which can include SQL functions and JOIN operations) will be managed client-side by the CData SQL engine embedded in the connector.

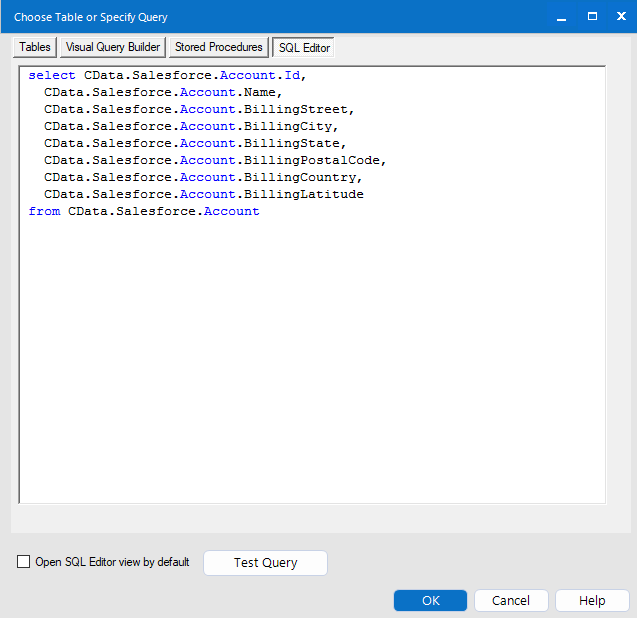

If you wish to further customize your dataset, you can open the SQL Editor and modify the query manually, adding clauses, aggregations, and other operations to ensure that you are retrieving exactly the Azure Data Lake Storage data you want.
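To make the idea of a hand-edited query concrete, here is a sketch of a query with a filter and an aggregation, exercised from Python. The table name `Resources` and the columns `Type` and `Length` are hypothetical; use the tables and fields the driver actually exposes for your account. An in-memory SQLite database stands in for the ODBC connection so the sketch runs anywhere. With an ODBC client such as pyodbc, you would pass a connection opened against the DSN instead.

```python
import sqlite3

# Hypothetical table and column names, for illustration only.
QUERY = """
SELECT Type, COUNT(*) AS ItemCount, SUM(Length) AS TotalBytes
FROM Resources
WHERE Length > ?
GROUP BY Type
ORDER BY Type
"""


def run_query(connection, sql, params=()):
    """Run a parameterized query on a DB-API connection and return all rows."""
    cursor = connection.cursor()
    try:
        cursor.execute(sql, params)
        return cursor.fetchall()
    finally:
        cursor.close()


# Stand-in data source: a small in-memory table with made-up rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Resources (Name TEXT, Type TEXT, Length INTEGER)")
conn.executemany(
    "INSERT INTO Resources VALUES (?, ?, ?)",
    [
        ("a.csv", "FILE", 120),
        ("b.csv", "FILE", 30),
        ("logs", "DIRECTORY", 0),
        ("c.json", "FILE", 500),
    ],
)

rows = run_query(conn, QUERY, (50,))
conn.close()
print(rows)
```

The SELECT statement is the part that would go into the SQL Editor. The surrounding Python is only a harness for trying the query shape.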

With the query defined, you are ready to work with Azure Data Lake Storage data in Alteryx Designer.

Perform Self-Service Analytics on Azure Data Lake Storage Data

You are now ready to create a workflow to prepare, blend, and analyze Azure Data Lake Storage data. The CData ODBC Driver performs dynamic metadata discovery, presenting data using Alteryx data field types and allowing you to leverage the Designer's tools to manipulate data as needed and build meaningful datasets. In the example below, you will cleanse and browse data.

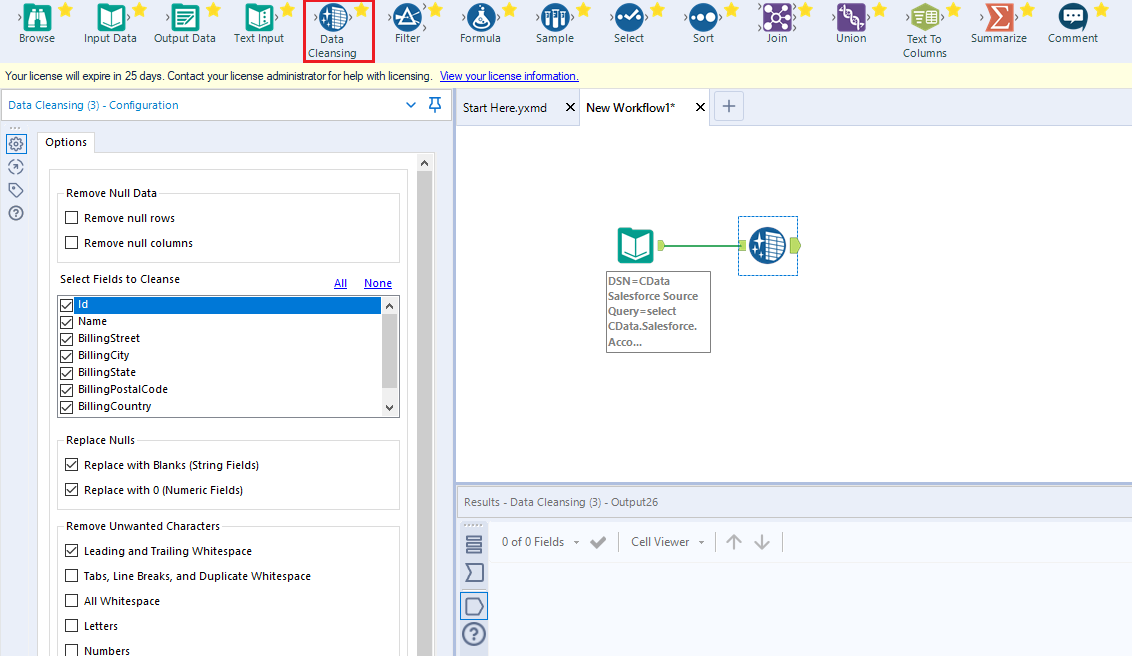

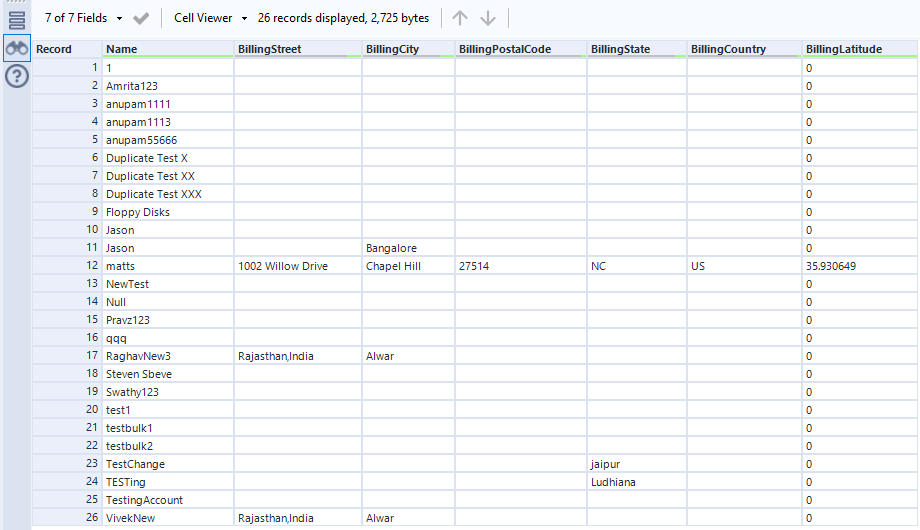

- Add a data cleansing tool to the workflow and check the boxes in Replace Nulls to replace null text fields with blanks and replace null numeric fields with 0. You can also check the box in Remove Unwanted Characters to remove leading and trailing whitespace.

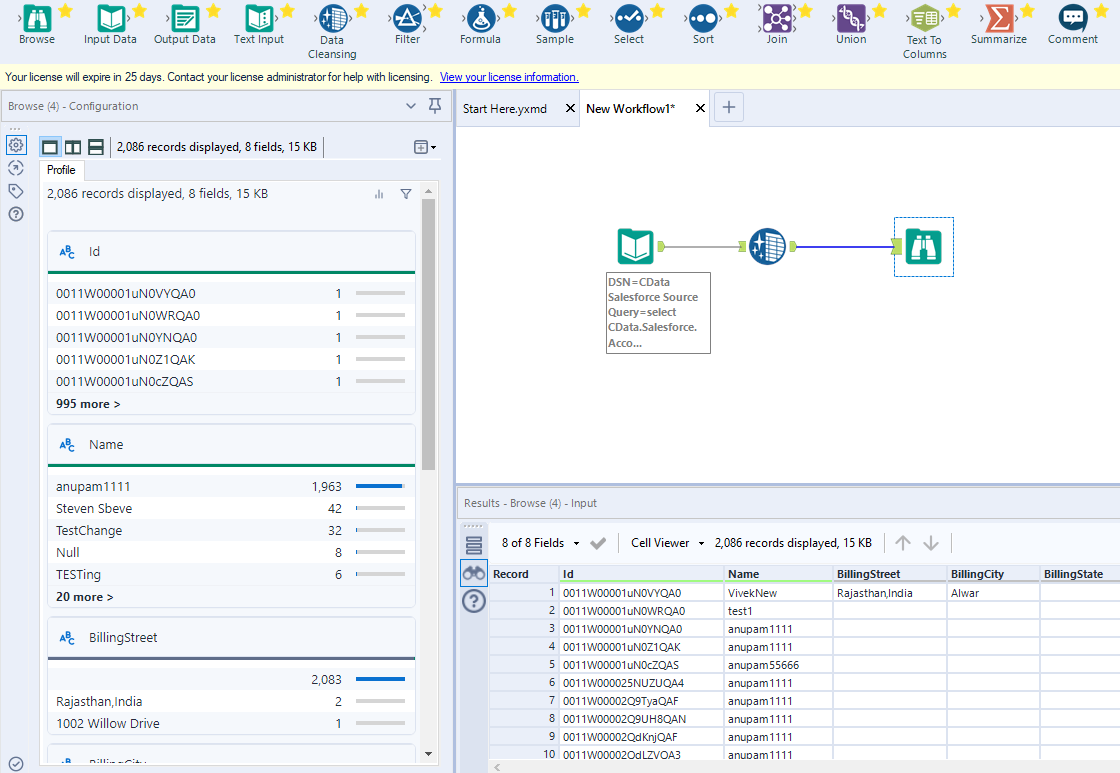

- Add a browse data tool to the workflow.

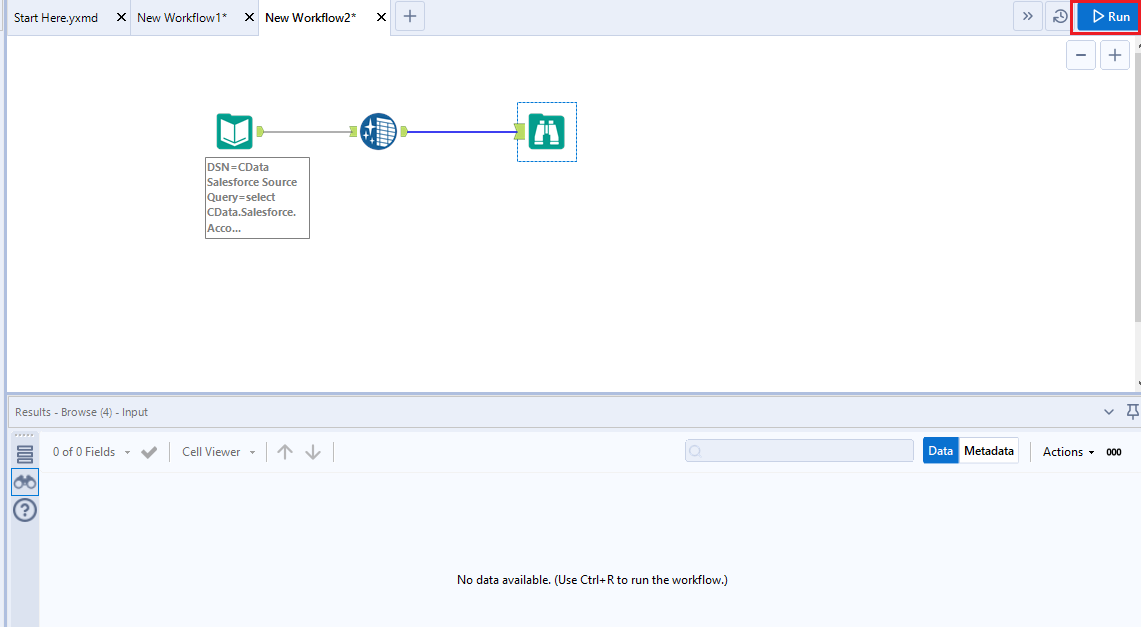

- Run the workflow (Ctrl+R).

- Browse your cleansed Azure Data Lake Storage data in the results view.
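The cleansing options selected in the steps above can be sketched in plain Python to show their effect on a single record. This is an illustration of the described behavior (null text to blank, null numbers to 0, leading and trailing whitespace removed), not the tool's actual implementation. The function name `cleanse` and the sample field names are hypothetical.

```python
def cleanse(record: dict, numeric_fields: set) -> dict:
    """Apply the three cleansing rules described above to one record."""
    cleaned = {}
    for field, value in record.items():
        if value is None:
            # Null numeric fields become 0; null text fields become blank.
            cleaned[field] = 0 if field in numeric_fields else ""
        elif isinstance(value, str):
            # Remove leading and trailing whitespace from text values.
            cleaned[field] = value.strip()
        else:
            cleaned[field] = value
    return cleaned


# Made-up record with a padded name and two null fields.
sample = {"Name": "  report.csv ", "Length": None, "Owner": None}
cleansed_sample = cleanse(sample, numeric_fields={"Length"})
print(cleansed_sample)
```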

Thanks to built-in, high-performance data processing, you can quickly cleanse, transform, and analyze your Azure Data Lake Storage data with Alteryx.