Analyze Azure Data Lake Storage Data in R

Create data visualizations and use high-performance statistical functions to analyze Azure Data Lake Storage data in Microsoft R Open.

Access Azure Data Lake Storage data with plain R scripts and standard SQL. You can use the CData ODBC Driver for Azure Data Lake Storage and the RODBC package to work with remote Azure Data Lake Storage data in R. Because the CData driver is built on the ODBC standard, you can reach your data from the popular open-source R language. This article shows how to use the driver to run SQL queries against Azure Data Lake Storage data and visualize the results in R.

Install R

You can complement the driver's performance gains from multi-threading and managed code by running the multithreaded Microsoft R Open or by running R linked against an optimized BLAS/LAPACK library. This article uses Microsoft R Open (MRO).
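To check which BLAS library your R session is actually linked against, you can inspect `sessionInfo()`. The sketch below assumes R 3.4 or later, where `sessionInfo()` reports a `BLAS` component; the helper name `blas_library()` is hypothetical, and the reported value is usually a file path whose name hints at the library in use.

```r
# Hypothetical helper: report the BLAS library this R session uses.
# Assumes R >= 3.4, where sessionInfo() includes a BLAS component.
blas_library <- function() {
  blas <- sessionInfo()$BLAS
  # Some platforms report nothing; return a readable placeholder instead.
  if (is.null(blas) || !nzchar(blas)) "unknown (not reported on this platform)" else blas
}

print(blas_library())
```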

Connect to Azure Data Lake Storage as an ODBC Data Source

The connection properties for Azure Data Lake Storage are described below, followed by separate instructions for configuring a DSN on Windows and on Linux.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

This requires an Active Directory web application registered in Azure AD. See the driver's help documentation for the steps to create one.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the TenantId property in the driver's help documentation for how to obtain this value.

- Directory: (Optional) Set this to the path that will be used to store the replicated file. If not specified, the root directory is used.
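As an alternative to a DSN, RODBC's `odbcDriverConnect()` accepts a full ODBC connection string. The sketch below assumes the driver accepts the property names listed above as `Key=Value` pairs and is registered under the name used in the Linux DSN example; the helper `adls_conn_str()` and all property values are placeholders.

```r
# Hypothetical helper: join named connection properties into an ODBC
# connection string of the form "Driver={...};Key=Value;Key=Value".
adls_conn_str <- function(props,
                          driver = "CData ODBC Driver for Azure Data Lake Storage") {
  stopifnot(is.list(props), !is.null(names(props)))
  pairs <- paste0(names(props), "=", unlist(props, use.names = FALSE))
  paste0("Driver={", driver, "};", paste(pairs, collapse = ";"))
}

# Placeholder values for a Gen 1 account (OAuth through an Azure AD app).
gen1 <- list(
  Schema            = "ADLSGen1",
  Account           = "myAccount",
  OAuthClientId     = "myClientId",
  OAuthClientSecret = "myClientSecret",
  TenantId          = "myTenantId"
)

cs <- adls_conn_str(gen1)
# With the driver installed:
# conn <- RODBC::odbcDriverConnect(cs)
```

This sketch does not escape values; a value containing a semicolon would need quoting according to the driver's connection-string rules.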

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key used to authenticate calls to the API. See the AccessKey property in the driver's help documentation for how to obtain this value.

- Directory: (Optional) Set this to the path that will be used to store the replicated file. If not specified, the root directory is used.
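The two property lists above can be checked before you try to connect. This minimal sketch encodes the required properties per schema (Directory is optional, so it is left out) and reports which ones are missing or empty. The function name and the `ADLS_ACCESS_KEY` environment variable are hypothetical; reading the key from the environment simply avoids hard-coding it in a script.

```r
# Required properties per schema, taken from the Gen 1 and Gen 2 lists.
adls_required <- list(
  ADLSGen1 = c("Schema", "Account", "OAuthClientId", "OAuthClientSecret", "TenantId"),
  ADLSGen2 = c("Schema", "Account", "FileSystem", "AccessKey")
)

# Hypothetical helper: names of required properties that are missing or
# empty in `props` (a named list); stops if Schema is not recognized.
missing_adls_props <- function(props) {
  schema <- props[["Schema"]]
  if (is.null(schema) || !schema %in% names(adls_required)) {
    stop("Schema must be one of: ", paste(names(adls_required), collapse = ", "))
  }
  required <- adls_required[[schema]]
  present <- vapply(required, function(k) {
    v <- props[[k]]
    !is.null(v) && nzchar(v)
  }, logical(1))
  required[!present]
}

# Hypothetical env var; Sys.getenv() returns "" when it is unset.
gen2 <- list(Schema = "ADLSGen2", Account = "myAccount",
             FileSystem = "myFileSystem", AccessKey = Sys.getenv("ADLS_ACCESS_KEY"))
missing_adls_props(gen2)  # "AccessKey" if ADLS_ACCESS_KEY is unset
```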

When you configure the DSN, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.
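If you would rather cap rows per query from R, RODBC offers a separate, client-side mechanism: `sqlQuery()` forwards a `max` argument to `sqlGetResults()`, which stops fetching after that many rows (0 means no limit). This is not the driver's Max Rows property, and the driver may still retrieve more data from the API. The wrapper `preview_query()` is hypothetical; its `query` argument exists only so it can be tried without a live connection.

```r
# Hypothetical wrapper: fetch at most `n` rows of a query for previewing.
# `max` is passed through sqlQuery() to RODBC::sqlGetResults().
preview_query <- function(conn, sql, n = 100, query = RODBC::sqlQuery) {
  stopifnot(is.numeric(n), n >= 1)
  query(conn, sql, max = n, believeNRows = FALSE, rows_at_time = 1)
}

# With a live connection:
# preview <- preview_query(conn, "SELECT FullPath, Permission FROM Resources", n = 50)
```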

Windows

If you have not already done so, specify connection properties in an ODBC DSN (data source name); this is the last step of the driver installation. You can use the Microsoft ODBC Data Source Administrator to create and configure ODBC DSNs.

Linux

If you are installing the CData ODBC Driver for Azure Data Lake Storage in a Linux environment, the driver installation predefines a system DSN. You can modify the DSN by editing the system data sources file (/etc/odbc.ini) and defining the required connection properties.

/etc/odbc.ini

```ini
[CData ADLS Source]
Driver = CData ODBC Driver for Azure Data Lake Storage
Description = My Description
Schema = ADLSGen2
Account = myAccount
FileSystem = myFileSystem
AccessKey = myAccessKey
```

For details on using these configuration files, refer to the driver's help documentation, which is installed with the driver and also available online.
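On Linux, it can help to confirm which properties a DSN actually defines before connecting. The base-R sketch below reads one `[section]` of an odbc.ini-style file into a named list. It assumes simple `Key = Value` lines and skips blank lines and `;` or `#` comments; `read_dsn()` is a hypothetical helper, not part of RODBC or the driver. Avoid printing the result anywhere the AccessKey or OAuthClientSecret could be exposed.

```r
# Hypothetical helper: read the Key = Value pairs of one DSN section
# from an odbc.ini-style file into a named list.
read_dsn <- function(path, dsn) {
  lines <- trimws(readLines(path, warn = FALSE))
  # Drop blank lines and comment lines.
  lines <- lines[nzchar(lines) & !startsWith(lines, ";") & !startsWith(lines, "#")]
  headers <- grepl("^\\[.*\\]$", lines)
  start <- which(lines == paste0("[", dsn, "]"))
  if (length(start) == 0) stop("DSN not found: ", dsn)
  # The section ends just before the next [header], or at end of file.
  next_hdr <- which(headers & seq_along(lines) > start[1])
  end <- if (length(next_hdr)) next_hdr[1] - 1 else length(lines)
  body <- lines[seq_len(end - start[1]) + start[1]]
  body <- body[grepl("=", body, fixed = TRUE)]
  keys <- trimws(sub("=.*$", "", body))
  vals <- trimws(sub("^[^=]*=", "", body))
  setNames(as.list(vals), keys)
}

# props <- read_dsn("/etc/odbc.ini", "CData ADLS Source")
# names(props)
```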

Load the RODBC Package

To use the driver from R, install the RODBC package. In RStudio, click Tools -> Install Packages and enter RODBC in the Packages box, or run install.packages("RODBC") from the R console.

After installing the RODBC package, the following line loads the package:

```r
library(RODBC)
```

Note: This article uses RODBC version 1.3-12. With Microsoft R Open, you can test with the same version by using the checkpoint capabilities of Microsoft's MRAN repository. The checkpoint() function installs packages from a snapshot of the CRAN repository hosted on MRAN; the snapshot taken Jan. 1, 2016 contains version 1.3-12. Microsoft retired MRAN in 2023, so this snapshot may no longer be available.

```r
library(checkpoint)
checkpoint("2016-01-01")
```
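After restoring the snapshot, you can confirm that the installed RODBC matches the version used in this article. `utils::compareVersion()` treats both `.` and `-` as separators, so `"1.3.12"` (the form `packageVersion()` prints) and `"1.3-12"` compare as equal. The helper name is hypothetical.

```r
# Hypothetical helper: TRUE if the installed (or supplied) RODBC version
# equals the version this article was tested with.
rodbc_is_tested_version <- function(installed = as.character(utils::packageVersion("RODBC")),
                                    tested = "1.3-12") {
  utils::compareVersion(installed, tested) == 0
}

# rodbc_is_tested_version()  # checks the RODBC package actually installed
```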

Connect to Azure Data Lake Storage Data as an ODBC Data Source

You can connect to a DSN in R with the following line:

```r
conn <- odbcConnect("CData ADLS Source")
```
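`odbcConnect()` returns -1 (and emits warnings) instead of an `"RODBC"` channel when the connection fails, and an open channel should be closed with `odbcClose()` when you are done. The hypothetical helper `with_dsn()` below handles both; its `connect` and `disconnect` arguments default to the RODBC functions and exist only so the helper can be tried without a driver.

```r
# Hypothetical helper: open a DSN, run f(conn), and always close the
# channel afterwards, even if f() throws an error.
with_dsn <- function(dsn, f,
                     connect = RODBC::odbcConnect,
                     disconnect = RODBC::odbcClose) {
  conn <- connect(dsn)
  # A failed odbcConnect() returns -1 rather than an "RODBC" channel.
  if (!inherits(conn, "RODBC")) stop("Could not connect to DSN: ", dsn)
  on.exit(disconnect(conn), add = TRUE)
  f(conn)
}

# With the driver installed:
# tables <- with_dsn("CData ADLS Source", RODBC::sqlTables)
```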

Schema Discovery

The driver models Azure Data Lake Storage APIs as relational tables, views, and stored procedures. Use the following line to retrieve the list of tables:

```r
sqlTables(conn)
```
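`sqlTables()` returns a data frame with the standard ODBC catalog columns, including `TABLE_NAME` and `TABLE_TYPE`. The hypothetical helper below narrows that result to the names of one or more entry types. The exact `TABLE_TYPE` values depend on what the driver reports, so check `unique(sqlTables(conn)$TABLE_TYPE)` first. To list the columns of a single table or view, RODBC also provides `sqlColumns(conn, "Resources")`.

```r
# Hypothetical helper: sorted names of catalog entries whose TABLE_TYPE
# is in `type`, taken from the data frame returned by sqlTables().
table_names <- function(tables, type = "TABLE") {
  stopifnot(all(c("TABLE_NAME", "TABLE_TYPE") %in% names(tables)))
  # %in% (rather than ==) tolerates NA types and accepts several types.
  sort(as.character(tables$TABLE_NAME[tables$TABLE_TYPE %in% type]))
}

# With a live connection:
# table_names(sqlTables(conn), type = c("TABLE", "VIEW"))
```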

Execute SQL Queries

Use the sqlQuery function to execute any SQL query supported by the Azure Data Lake Storage API.

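```r
# believeNRows = FALSE: don't rely on the driver's row count; rows_at_time = 1: fetch rows one at a time
resources <- sqlQuery(conn, "SELECT FullPath, Permission FROM Resources WHERE Type = 'FILE'", believeNRows=FALSE, rows_at_time=1)
```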
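With its default `errors = TRUE`, `sqlQuery()` does not raise an R error when a query fails; it returns a character vector of ODBC error messages instead of a data frame, which can surface later as a confusing failure. For queries that return rows, a small wrapper can turn that into an immediate error. The name `query_df()` is hypothetical, and the `query` argument exists only so the function can be tried without a live connection.

```r
# Hypothetical wrapper: run a row-returning query and stop() if RODBC
# returned error messages (a character vector) instead of a data frame.
query_df <- function(conn, sql, query = RODBC::sqlQuery) {
  res <- query(conn, sql, believeNRows = FALSE, rows_at_time = 1)
  if (!is.data.frame(res)) {
    stop("Query failed:\n", paste(res, collapse = "\n"), call. = FALSE)
  }
  res
}

# resources <- query_df(conn, "SELECT FullPath, Permission FROM Resources WHERE Type = 'FILE'")
```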

You can view the results in a data viewer window with the following command:

```r
View(resources)
```

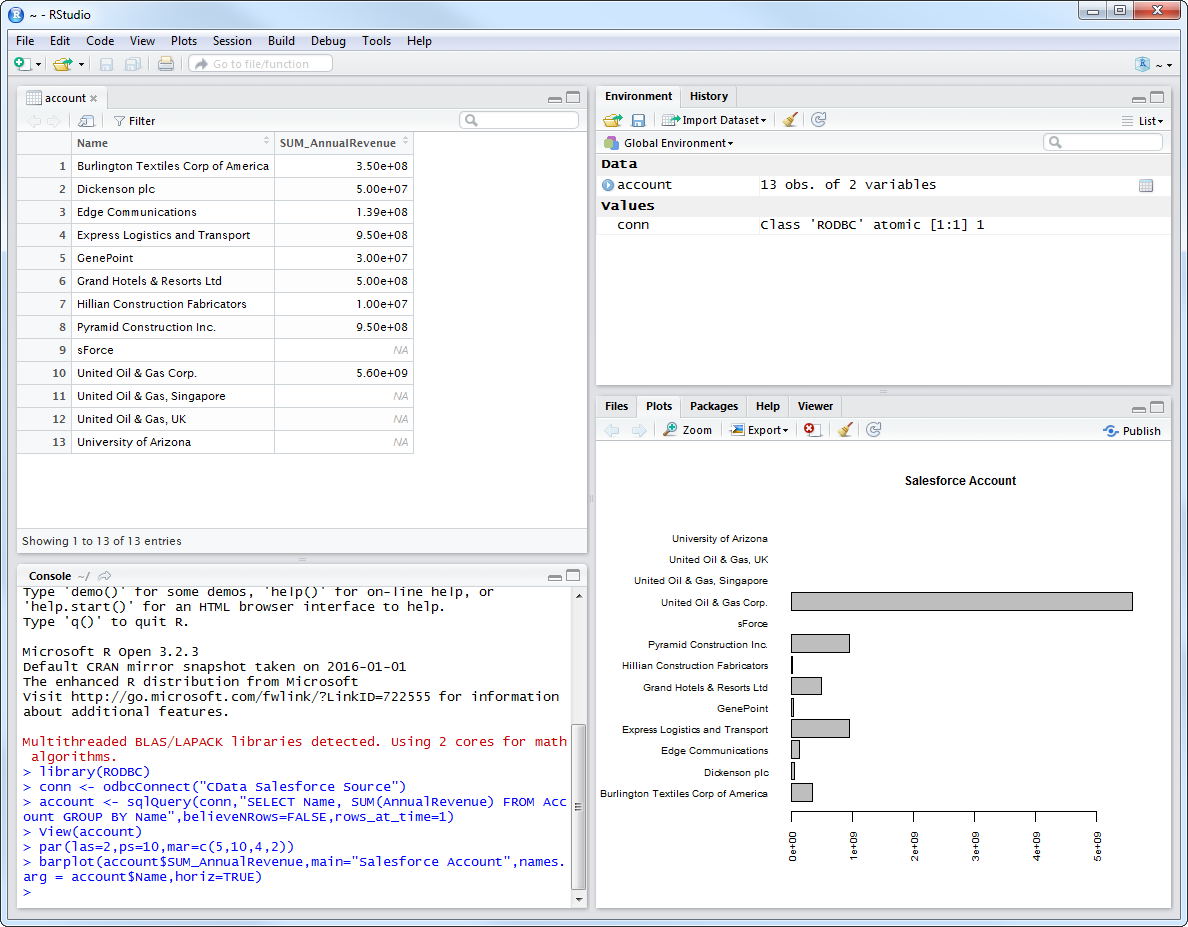

Plot Azure Data Lake Storage Data

You can now analyze Azure Data Lake Storage data with any of the data visualization packages available in the CRAN repository. You can create simple bar plots with the built-in barplot function:

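```r
# Perpendicular axis labels (las = 2) and a wide left margin for the bar labels
par(las=2, ps=10, mar=c(5,15,4,2))
# Permission is a text value, so plot the number of files per permission setting
barplot(table(resources$Permission), main="Azure Data Lake Storage Files by Permission", horiz=TRUE)
```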
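When R runs non-interactively (for example through Rscript), there is no plot window, so you may want to write the chart to a file. The sketch below opens a base-R `pdf()` device, draws a horizontal bar chart of resource counts per Permission value, and closes the device even if plotting fails. The function name and default file name are hypothetical.

```r
# Hypothetical helper: draw resource counts per Permission value into a
# PDF file and return the file path invisibly.
save_permission_plot <- function(resources, file = "adls_permissions.pdf") {
  stopifnot("Permission" %in% names(resources))
  grDevices::pdf(file, width = 8, height = 6)
  # Closing the device also discards the par() settings made below.
  on.exit(grDevices::dev.off(), add = TRUE)
  graphics::par(las = 2, ps = 10, mar = c(5, 15, 4, 2))
  graphics::barplot(table(resources$Permission),
                    main = "Azure Data Lake Storage Files by Permission",
                    horiz = TRUE)
  invisible(file)
}

# save_permission_plot(resources, "adls_permissions.pdf")
```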