How to connect and process Basecamp Data from Azure Databricks

Use CData, Azure, and Databricks to perform data engineering and data science on live Basecamp Data

Databricks is a cloud-based service that provides data processing capabilities through Apache Spark. When paired with the CData JDBC Driver, customers can use Databricks to perform data engineering and data science on live Basecamp data. This article walks through hosting the CData JDBC Driver in Azure, as well as connecting to and processing live Basecamp data in Databricks.

With built-in optimized data processing, the CData JDBC Driver offers unmatched performance for interacting with live Basecamp data. When you issue complex SQL queries to Basecamp, the driver pushes supported SQL operations, such as filters and aggregations, directly to Basecamp and uses its embedded SQL engine to process unsupported operations (often SQL functions and JOIN operations) client-side. Built-in dynamic metadata querying lets you work with and analyze Basecamp data using native data types.

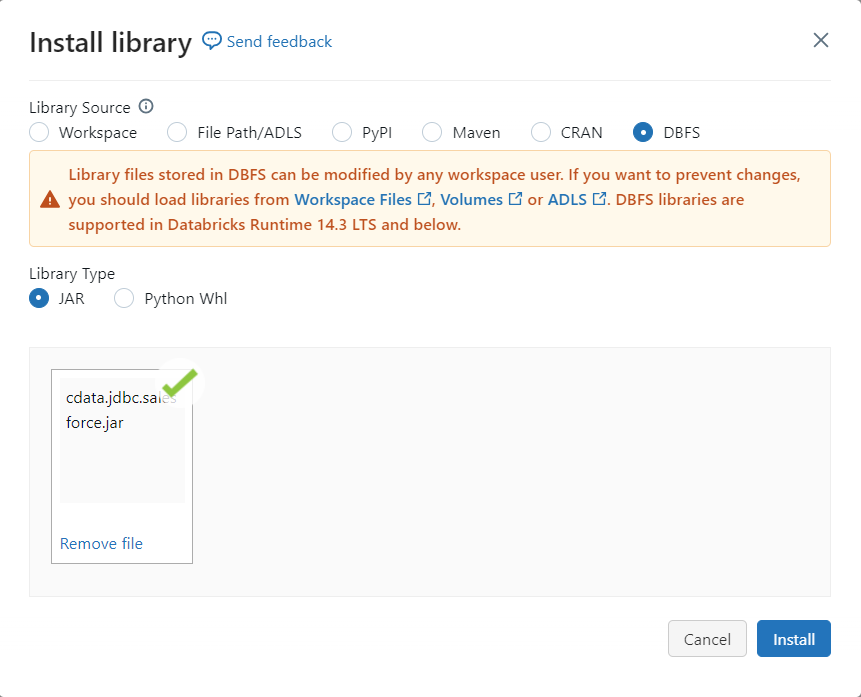

Install the CData JDBC Driver in Azure

To work with live Basecamp data in Databricks, install the driver on your Azure cluster.

- Navigate to your Databricks administration screen and select the target cluster.

- On the Libraries tab, click "Install New."

- Select "Upload" as the Library Source and "Jar" as the Library Type.

- Upload the JDBC JAR file (cdata.jdbc.basecamp.jar) from the installation location (typically C:\Program Files\CData\[product_name]\lib).

Connect to Basecamp from Databricks

With the JAR file installed, we are ready to work with live Basecamp data in Databricks. Start by creating a new notebook in your workspace. Name the notebook, select Python as the language (though Scala is available as well), and choose the cluster where you installed the JDBC driver. When the notebook launches, we can configure the connection, query Basecamp, and create a basic report.

Configure the Connection to Basecamp

Connect to Basecamp by referencing the class for the JDBC Driver and constructing a connection string to use in the JDBC URL. Additionally, you will need to set the RTK property in the JDBC URL (unless you are using a Beta driver). You can view the licensing file included in the installation for information on how to set this property.

driver = "cdata.jdbc.basecamp.BasecampDriver"
url = "jdbc:basecamp:RTK=5246...;[email protected];Password=test123;"

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Basecamp JDBC Driver. Either double-click the JAR file or run it from the command line.

java -jar cdata.jdbc.basecamp.jar

Fill in the connection properties and copy the connection string to the clipboard.

Basecamp uses basic or OAuth 2.0 authentication. To use basic authentication you will need the user and password that you use for logging in to Basecamp. To authenticate to Basecamp via OAuth 2.0, you will need to obtain the OAuthClientId, OAuthClientSecret, and CallbackURL connection properties by registering an app with Basecamp.

See the Getting Started section in the help documentation for a connection guide.

Additionally, you will need to specify the AccountId connection property. This can be copied from the URL after you log in.
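As a rough sketch of how these properties fit together, the snippet below assembles a JDBC URL for basic authentication from a dictionary. The build_basecamp_url helper and the placeholder values are hypothetical and not part of the driver; the property names (RTK, User, Password, AccountId) are the ones mentioned in this article, and the semicolon-delimited layout follows the sample URL shown earlier.

```python
def build_basecamp_url(props):
    """Join connection properties into a jdbc:basecamp: URL (hypothetical helper)."""
    # Skip unset properties so optional ones can simply be left as None.
    parts = [f"{key}={value}" for key, value in props.items() if value is not None]
    return "jdbc:basecamp:" + ";".join(parts) + ";"


# Placeholder values for illustration only; substitute your own.
url = build_basecamp_url({
    "RTK": "YOUR_RTK",
    "User": "YOUR_USER",
    "Password": "YOUR_PASSWORD",
    "AccountId": "YOUR_ACCOUNT_ID",
})
print(url)
```

In a shared workspace, you may prefer to read the credentials from a Databricks secret scope (for example with dbutils.secrets.get) rather than typing them into the notebook.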

Load Basecamp Data

Once the connection is configured, you can load Basecamp data as a dataframe using the CData JDBC Driver and the connection information.

remote_table = spark.read.format("jdbc") \
    .option("driver", driver) \
    .option("url", url) \
    .option("dbtable", "Projects") \
    .load()
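If you load more than one Basecamp table, the reader call can be wrapped in a small function. This is only a sketch: jdbc_options and load_basecamp_table are hypothetical names, and spark is assumed to be the SparkSession that Databricks notebooks provide.

```python
def jdbc_options(driver, url, table):
    """Collect the Spark JDBC reader options for one table (hypothetical helper)."""
    return {"driver": driver, "url": url, "dbtable": table}


def load_basecamp_table(spark, driver, url, table):
    """Return a DataFrame for `table`; `spark` is the notebook's SparkSession."""
    # DataFrameReader.options accepts the options as keyword arguments.
    return spark.read.format("jdbc").options(**jdbc_options(driver, url, table)).load()


# In a notebook cell, with driver and url already defined:
# remote_table = load_basecamp_table(spark, driver, url, "Projects")
```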

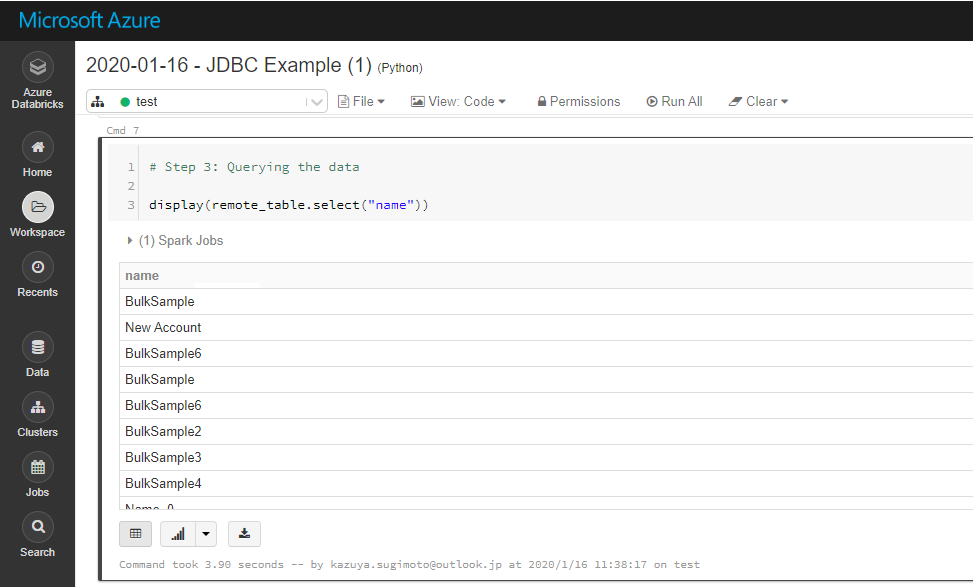

Display Basecamp Data

Check the loaded Basecamp data by calling the display function.

display(remote_table.select("Name"))

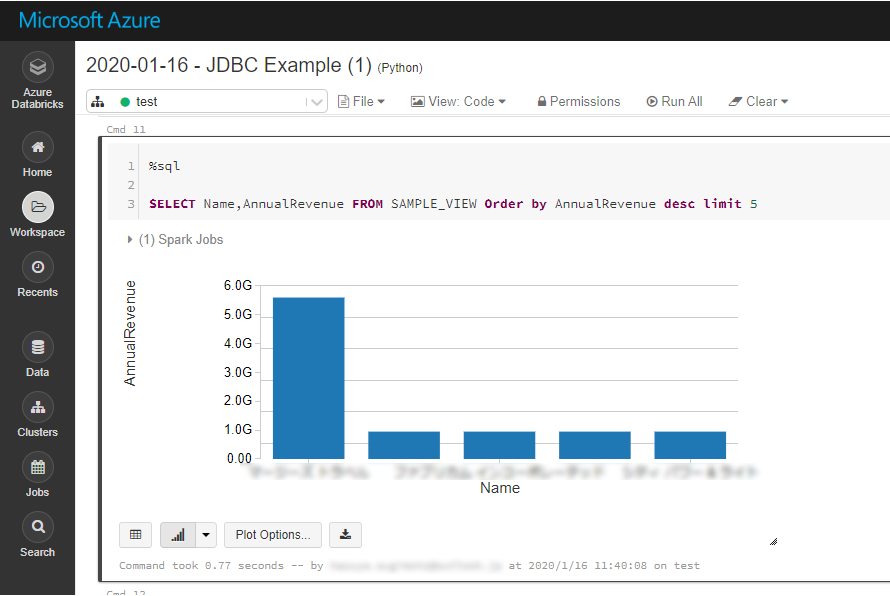

Analyze Basecamp Data in Azure Databricks

If you want to process data with Databricks SparkSQL, register the loaded data as a Temp View.

remote_table.createOrReplaceTempView("SAMPLE_VIEW")

The SparkSQL below retrieves the Basecamp data for analysis.

%sql
SELECT Name, DocumentsCount FROM SAMPLE_VIEW
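A similar query can also be issued from a Python cell with spark.sql, which returns a DataFrame you can pass to display. The sketch below assumes the SAMPLE_VIEW temp view registered earlier and the Name and DocumentsCount columns used in this article; top_projects_sql and show_top_projects are hypothetical helpers.

```python
def top_projects_sql(view, limit=10):
    """Build a query listing the projects with the most documents."""
    return (
        f"SELECT Name, DocumentsCount FROM {view} "
        f"ORDER BY DocumentsCount DESC LIMIT {int(limit)}"
    )


def show_top_projects(spark, view="SAMPLE_VIEW", limit=10):
    """Run the query on the notebook's SparkSession and return a DataFrame."""
    return spark.sql(top_projects_sql(view, limit))


# In a notebook cell:
# display(show_top_projects(spark))
```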

The data from Basecamp is available only in the notebook where it was loaded. To share it with other users, save it as a table.

remote_table.write.format("parquet").saveAsTable("SAMPLE_TABLE")
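Note that rerunning a saveAsTable call fails once SAMPLE_TABLE exists, because Spark's default save mode raises an error for an existing table. One option, sketched below, is to set the save mode explicitly; whether overwriting is appropriate depends on how the table is used. save_snapshot is a hypothetical helper, not part of the driver or of Databricks.

```python
VALID_MODES = {"overwrite", "append", "ignore", "error", "errorifexists"}


def save_snapshot(df, table_name, mode="overwrite"):
    """Persist a DataFrame as a Parquet-backed table using the given save mode."""
    if mode not in VALID_MODES:
        raise ValueError(f"unsupported save mode: {mode}")
    # With mode="overwrite", an existing table of the same name is replaced.
    df.write.format("parquet").mode(mode).saveAsTable(table_name)


# In a notebook cell:
# save_snapshot(remote_table, "SAMPLE_TABLE")
```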

Download a free, 30-day trial of the CData JDBC Driver for Basecamp and start working with your live Basecamp data in Azure Databricks. Reach out to our Support Team if you have any questions.