Build Phoenix-Connected ETL Processes in Google Data Fusion

Load the CData JDBC Driver into Google Data Fusion and create ETL processes with access to live Phoenix data.

Google Data Fusion allows users to perform self-service data integration to consolidate disparate data. Uploading the CData JDBC Driver for Phoenix enables users to access live Phoenix data from within their Google Data Fusion pipelines. While the CData JDBC Driver can pipe Phoenix data to any data store natively supported in Google Data Fusion, this article walks through piping data from Phoenix to Google BigQuery.

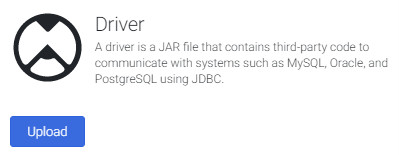

Upload the CData JDBC Driver for Phoenix to Google Data Fusion

Upload the CData JDBC Driver for Phoenix to your Google Data Fusion instance to work with live Phoenix data. Because Google Data Fusion restricts JDBC driver file names, copy or rename the JAR file to match the format driver-version.jar, for example cdataapachephoenix-2020.jar.
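If you prefer to script the copy rather than rename the file by hand, the following Java sketch builds a name in the driver-version.jar form and copies the JAR under that name. The source file name cdata.jdbc.apachephoenix.jar, the class name DriverJarRenamer, and the exact name pattern it checks are assumptions for illustration; Google Data Fusion's own validation rules may be stricter or looser.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;
import java.util.regex.Pattern;

public class DriverJarRenamer {
    // Assumed shape of an acceptable name: lowercase letters/digits, a hyphen,
    // a version made of digits and dots, then ".jar" (e.g. cdataapachephoenix-2020.jar).
    private static final Pattern NAME = Pattern.compile("[a-z0-9]+-[0-9]+(\\.[0-9]+)*\\.jar");

    // Builds "<driver>-<version>.jar" and rejects names that do not fit the assumed pattern.
    static String jarName(String driver, String version) {
        String name = driver + "-" + version + ".jar";
        if (!NAME.matcher(name).matches()) {
            throw new IllegalArgumentException("Not in driver-version.jar form: " + name);
        }
        return name;
    }

    // Copies the original JAR next to itself under the new name; the original is left in place.
    static Path copyAs(Path source, String driver, String version) throws IOException {
        Path target = source.resolveSibling(jarName(driver, version));
        return Files.copy(source, target, StandardCopyOption.REPLACE_EXISTING);
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical default location of the downloaded driver; pass the real path as args[0].
        Path source = Paths.get(args.length > 0 ? args[0] : "cdata.jdbc.apachephoenix.jar");
        System.out.println("Created " + copyAs(source, "cdataapachephoenix", "2020"));
    }
}
```

Copying rather than renaming keeps the original JAR available for the built-in connection string designer, which is launched from that file.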

- Open your Google Data Fusion instance

- Click the "+" button to add an entity and upload a driver


- On the "Upload driver" tab, drag or browse to the renamed JAR file.

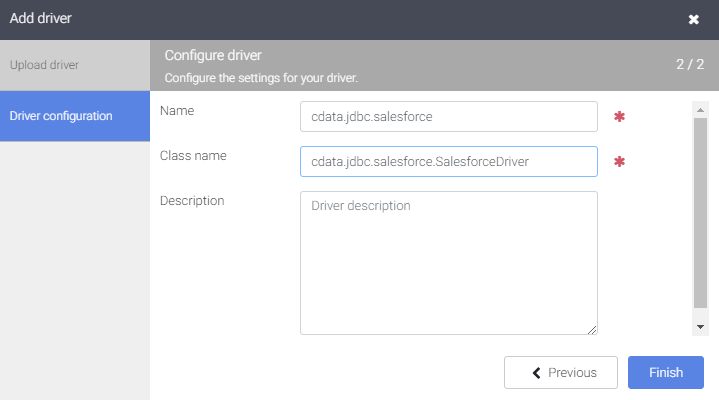

- On the "Driver configuration" tab:

- Name: Enter a name for the driver (for example, cdata.jdbc.apachephoenix) and make a note of it

- Class name: Set the JDBC class name to cdata.jdbc.apachephoenix.ApachePhoenixDriver


- Click "Finish"

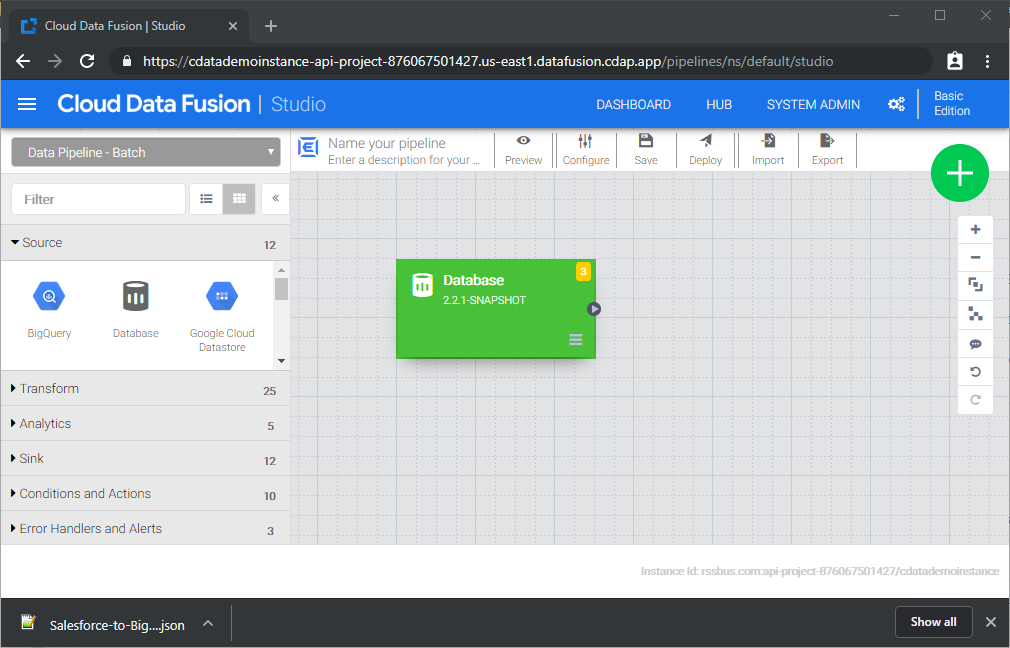
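A mistyped class name is a common reason a driver fails to load, so it can be worth confirming that the class exists in the JAR before entering it in Data Fusion. This is a minimal sketch that assumes you run it with the renamed JAR on the classpath (on Linux or macOS, for example, java -cp cdataapachephoenix-2020.jar:. DriverClassCheck); the class name DriverClassCheck is hypothetical, and the driver class it checks by default is the one given in the driver configuration step.

```java
import java.sql.Driver;

public class DriverClassCheck {
    // Returns true if the named class is on the classpath and implements java.sql.Driver.
    // initialize=false locates the class without running its static initializer.
    static boolean isJdbcDriver(String className) {
        try {
            Class<?> c = Class.forName(className, false, DriverClassCheck.class.getClassLoader());
            return Driver.class.isAssignableFrom(c);
        } catch (ClassNotFoundException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        String driverClass = args.length > 0 ? args[0] : "cdata.jdbc.apachephoenix.ApachePhoenixDriver";
        System.out.println(driverClass + (isJdbcDriver(driverClass)
                ? " is a JDBC driver on the classpath"
                : " was not found on the classpath or is not a java.sql.Driver"));
    }
}
```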

Connect to Phoenix Data in Google Data Fusion

With the JDBC Driver uploaded, you are ready to work with live Phoenix data in Google Data Fusion pipelines.

- Navigate to the Pipeline Studio to create a new Pipeline

- From the "Source" options, click "Database" to add a source for the JDBC Driver

![Adding a database source]()

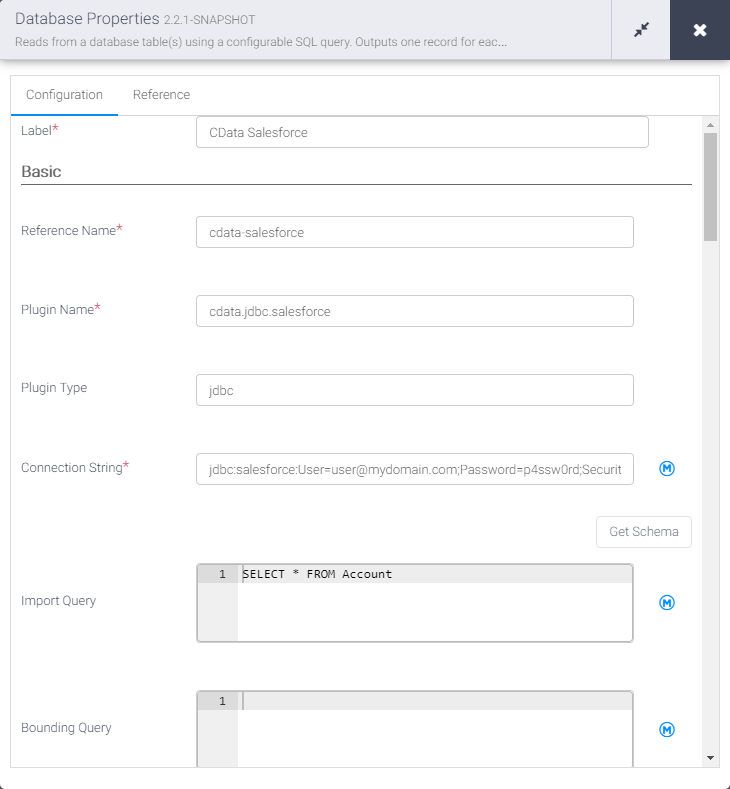

- Click "Properties" on the Database source to edit the properties

NOTE: To use the JDBC Driver in Google Data Fusion, you will need a license (full or trial) and a Runtime Key (RTK). For more information on obtaining this license (or a trial), contact our sales team.

- Set the Label

- Set Reference Name to a value for any future references (e.g., cdata-apachephoenix)

- Set Plugin Type to "jdbc"

- Set Connection String to the JDBC URL for Phoenix. For example:

jdbc:apachephoenix:RTK=5246...;Server=localhost;Port=8765;

Connect to Apache Phoenix via the Phoenix Query Server. Set the Server property, and the Port property if it differs from the default, to connect to Apache Phoenix. The Server property is typically the host name or IP address of the server hosting Apache Phoenix.

Authenticating to Apache Phoenix

By default, no authentication is used (plain). If authentication is configured on your server, set AuthScheme to NEGOTIATE and, if necessary, set the User and Password properties to authenticate through Kerberos.
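The connection string is the jdbc:apachephoenix: prefix followed by semicolon-terminated Name=Value pairs. As a minimal sketch, the helper below assembles one from an ordered map so the Server, Port, and optional Kerberos settings described above are easy to audit. The class and method names are hypothetical, "YOUR_RTK" is a placeholder for your own Runtime Key, and the helper does not escape values that contain semicolons.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class PhoenixUrl {
    // Joins properties as "Name=Value;" pairs after the prefix, preserving insertion order.
    // Values are not escaped, so avoid ';' inside them.
    static String build(Map<String, String> props) {
        StringBuilder url = new StringBuilder("jdbc:apachephoenix:");
        for (Map.Entry<String, String> e : props.entrySet()) {
            url.append(e.getKey()).append('=').append(e.getValue()).append(';');
        }
        return url.toString();
    }

    // No AuthScheme means the default (plain). With kerberos=true, AuthScheme=NEGOTIATE is
    // added, plus User and Password only when they are supplied (they may not be necessary).
    static Map<String, String> props(String rtk, String server, int port,
                                     boolean kerberos, String user, String password) {
        Map<String, String> p = new LinkedHashMap<>();
        p.put("RTK", rtk);
        p.put("Server", server);
        p.put("Port", Integer.toString(port));
        if (kerberos) {
            p.put("AuthScheme", "NEGOTIATE");
            if (user != null) p.put("User", user);
            if (password != null) p.put("Password", password);
        }
        return p;
    }

    public static void main(String[] args) {
        System.out.println(build(props("YOUR_RTK", "localhost", 8765, false, null, null)));
        System.out.println(build(props("YOUR_RTK", "localhost", 8765, true, "alice", null)));
    }
}
```

The first call prints jdbc:apachephoenix:RTK=YOUR_RTK;Server=localhost;Port=8765;, the same shape as the example URL, and the second appends AuthScheme=NEGOTIATE;User=alice;.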

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Phoenix JDBC Driver. Either double-click the JAR file or execute it from the command line:

java -jar cdata.jdbc.apachephoenix.jar

Fill in the connection properties and copy the connection string to the clipboard.


- Set Import Query to a SQL query that extracts the data you want from Phoenix, for example:

SELECT * FROM MyTable

![Configuring the database source]()

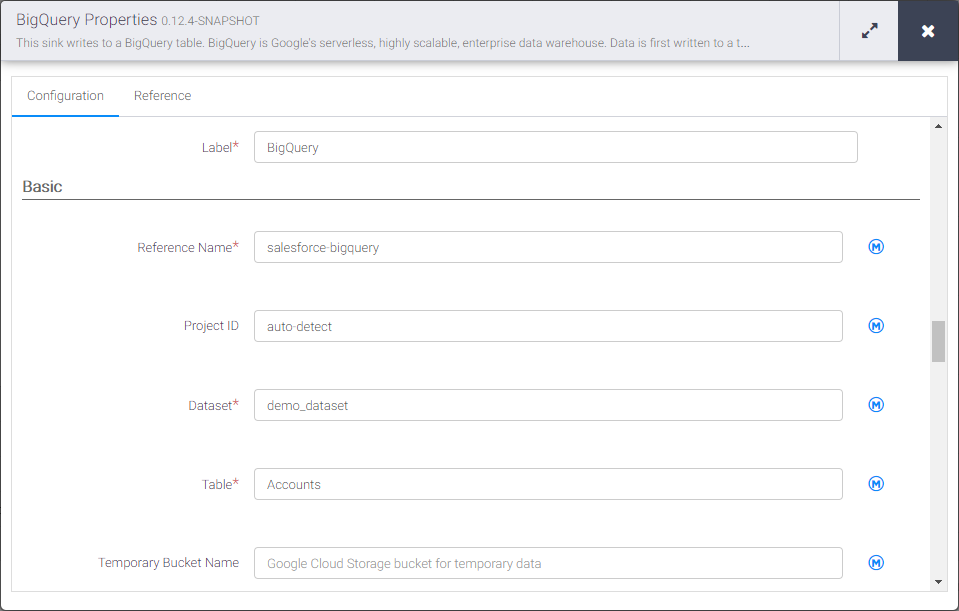
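Because the Database source runs the Import Query through the JDBC driver, it can help to try the connection string and query outside Data Fusion first. The sketch below uses only the standard java.sql API and assumes the renamed driver JAR is on the classpath; it takes the JDBC URL and query as arguments and prints the column labels followed by up to ten rows. The class name ImportQueryCheck and the row limit are illustrative choices.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.ArrayList;
import java.util.List;

public class ImportQueryCheck {
    // Runs the query and returns one row of column labels followed by up to maxRows
    // data rows, each as a list of string values (SQL NULL becomes Java null).
    static List<List<String>> preview(String url, String query, int maxRows) throws SQLException {
        List<List<String>> out = new ArrayList<>();
        try (Connection conn = DriverManager.getConnection(url);
             Statement stmt = conn.createStatement()) {
            stmt.setMaxRows(maxRows);
            try (ResultSet rs = stmt.executeQuery(query)) {
                ResultSetMetaData md = rs.getMetaData();
                int cols = md.getColumnCount();
                List<String> header = new ArrayList<>();
                for (int i = 1; i <= cols; i++) {
                    header.add(md.getColumnLabel(i));
                }
                out.add(header);
                while (rs.next()) {
                    List<String> row = new ArrayList<>();
                    for (int i = 1; i <= cols; i++) {
                        row.add(rs.getString(i));
                    }
                    out.add(row);
                }
            }
        }
        return out;
    }

    public static void main(String[] args) throws SQLException {
        // args[0]: the JDBC URL, e.g. jdbc:apachephoenix:RTK=...;Server=localhost;Port=8765;
        // args[1]: the Import Query, e.g. SELECT * FROM MyTable
        for (List<String> row : preview(args[0], args[1], 10)) {
            System.out.println(String.join(" | ", row));
        }
    }
}
```

If the driver JAR is missing from the classpath, DriverManager.getConnection throws an SQLException reporting that no suitable driver was found, which points to a classpath problem rather than a Phoenix one.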

- From the "Sink" tab, click "BigQuery" to add a destination sink (this example uses Google BigQuery)

- Click "Properties" on the BigQuery sink to edit the properties

- Set the Label

- Set Reference Name to a value like apachephoenix-bigquery

- Set Project ID to a specific Google BigQuery Project ID (or leave as the default, "auto-detect")

- Set Dataset to a specific Google BigQuery dataset

- Set Table to the name of the table you wish to insert Phoenix data into

![Configuring the BigQuery sink]()

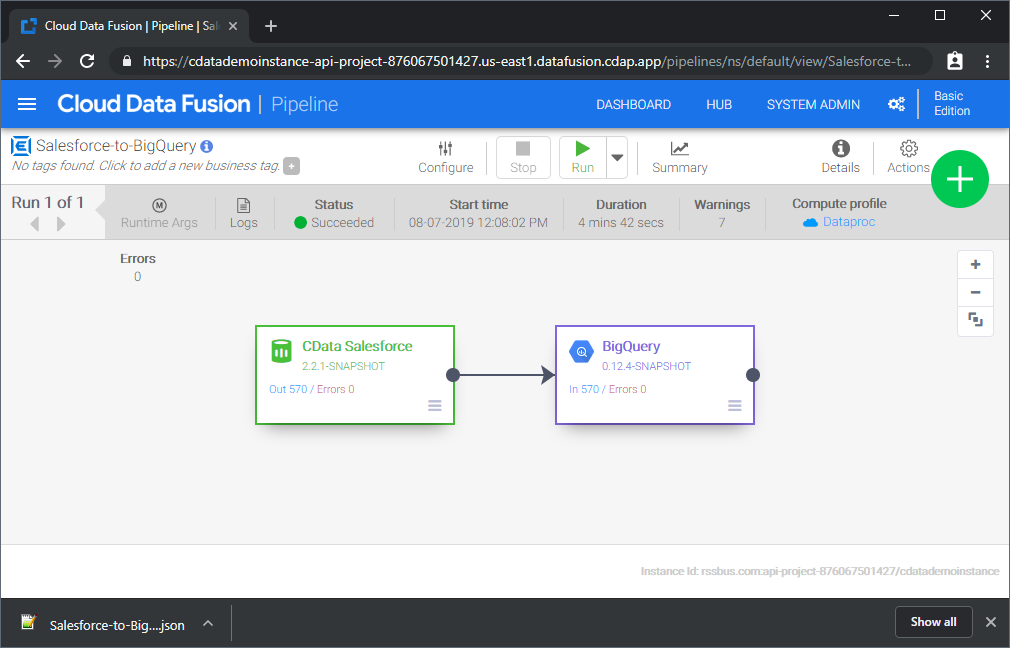

With the Source and Sink configured, you are ready to pipe Phoenix data into Google BigQuery. Save and deploy the pipeline. When you run the pipeline, Google Data Fusion will request live data from Phoenix and import it into Google BigQuery.

While this is a simple pipeline, you can create more complex Phoenix pipelines with transforms, analytics, conditions, and more. Download a free, 30-day trial of the CData JDBC Driver for Phoenix and start working with your live Phoenix data in Google Data Fusion today.