How to import Spark Data into Apache Solr

Use the CData JDBC Driver for Spark in the Data Import Handler to create an automated import of Spark data into the Apache Solr enterprise search platform.

The Apache Solr platform is a popular, blazing-fast, open source enterprise search solution built on Apache Lucene.

Apache Solr is equipped with the Data Import Handler (DIH), which can import data from databases and from XML, CSV, and JSON files. When paired with the CData JDBC Driver for Spark, DIH can import Spark data into Apache Solr. In this article, we show step by step how to use the CData JDBC Driver in the Apache Solr Data Import Handler and import Spark data for use in enterprise search. The steps use Solr 8.5.2, which still bundles DIH; DIH was deprecated in Solr 8.6 and removed from the Solr 9 distribution.

Create an Apache Solr Core and a Schema for Importing Spark

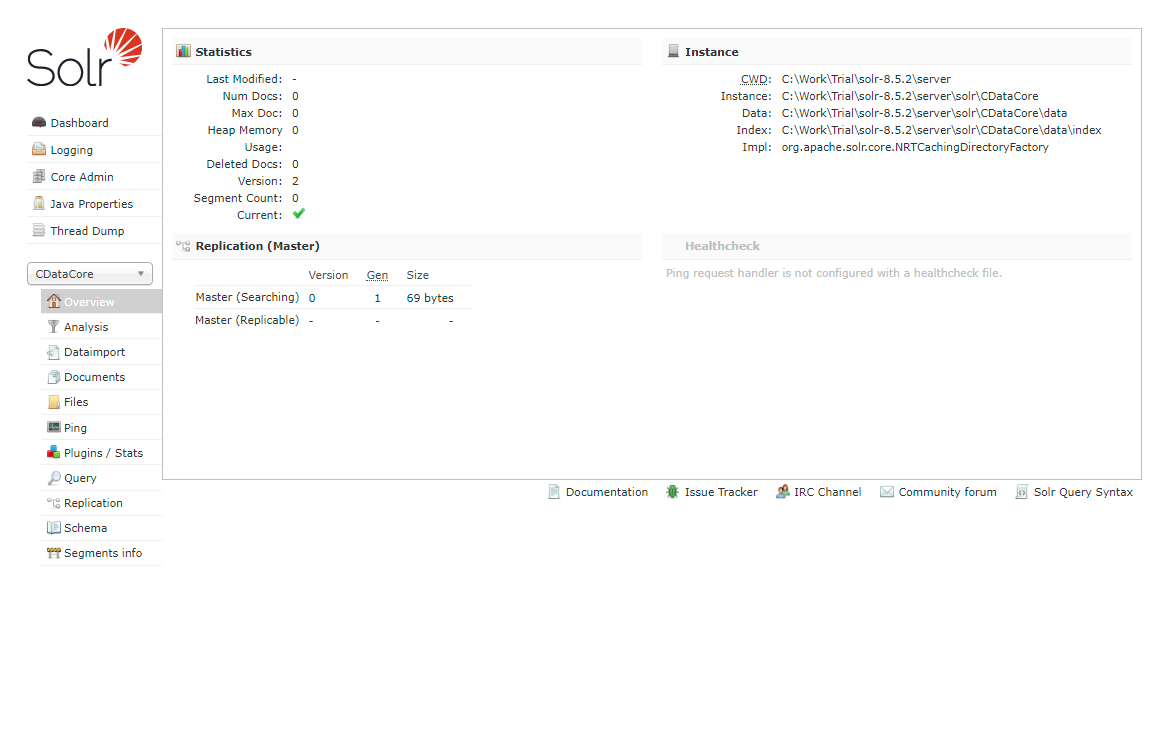

- Run Apache Solr and create a Core.

```
> solr create -c CDataCore
```

For this article, Solr is running as a standalone instance in the local environment, and you can access the core at this URL: http://localhost:8983/solr/#/CDataCore/core-overview

- Create a schema consisting of "field" objects that represent the columns of the Spark data to be imported, plus a unique key for the entity. LastModifiedDate, if it exists in Spark, is used for incremental updates; if it does not exist, you cannot run the deltaQuery described in a later section. Save the schema in the managed-schema file created by Apache Solr. The unique key is declared with a uniqueKey element, where SparkSQLUniqueKey is a placeholder for your key column:

```xml
<uniqueKey>SparkSQLUniqueKey</uniqueKey>
```

- Install the CData Spark JDBC Driver by copying the JAR and license file (cdata.jdbc.sparksql.jar and cdata.jdbc.sparksql.lic) from the driver installation directory to the Solr library directory:

  - From (CData JDBC JAR file): C:\Program Files\CData\CData JDBC Driver for Spark ####\lib
  - To (Apache Solr): solr-8.5.2\server\lib
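The schema step can also be scripted. The Python sketch below is a minimal illustration, not part of the CData or Solr tooling: it prints managed-schema field entries and a uniqueKey element for a hypothetical column list. The column names mirror the placeholders used in this article, using Id as the key follows the import configuration later in this article, and the Solr field types (string and pdate, both defined in Solr 8's default configset) are assumptions to adjust for your actual Spark columns.

```python
import xml.etree.ElementTree as ET

# Hypothetical column list mirroring this article's placeholder columns;
# replace the names and Solr field types with those of your Spark table.
COLUMNS = {
    "Id": "string",
    "SparkSQLColumn1": "string",
    "SparkSQLColumn2": "string",
    "LastModifiedDate": "pdate",
}


def schema_fragment(columns, unique_key):
    """Return <field> elements plus a <uniqueKey> element as an XML string."""
    if unique_key not in columns:
        raise ValueError(f"unique key {unique_key!r} is not one of the columns")
    parts = []
    for name, field_type in columns.items():
        attrs = {
            "name": name,
            "type": field_type,
            "indexed": "true",
            "stored": "true",
        }
        if name == unique_key:
            # Solr's uniqueKey field must be single-valued; required="true"
            # makes Solr reject documents that lack a value for it.
            attrs["required"] = "true"
            attrs["multiValued"] = "false"
        parts.append(ET.tostring(ET.Element("field", attrs), encoding="unicode"))
    key = ET.Element("uniqueKey")
    key.text = unique_key
    parts.append(ET.tostring(key, encoding="unicode"))
    return "\n".join(parts)


print(schema_fragment(COLUMNS, unique_key="Id"))
```

The printed lines can be pasted into managed-schema; whichever column you choose as the key must be one that the import populates for every row.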

Now we are ready to use Spark data in Solr.

Define an Import of Spark to Apache Solr

In this section, we walk through configuring the Data Import Handler.

- Modify the solrconfig.xml file of the created core: add the JAR file reference and the DIH RequestHandler definition.

```xml
<lib dir="${solr.install.dir:../../../..}/dist/" regex="solr-dataimporthandler-.*\.jar" />

<requestHandler name="/dataimport" class="org.apache.solr.handler.dataimport.DataImportHandler">
  <lst name="defaults">
    <str name="config">solr-data-config.xml</str>
  </lst>
</requestHandler>
```

- Next, create a solr-data-config.xml file in the same directory as solrconfig.xml. In this article, we retrieve a table from Spark, but you can also use a custom SQL query to request data. The driver class and a sample JDBC connection string are shown in the sample code below.

```xml
<dataConfig>
  <dataSource driver="cdata.jdbc.sparksql.SparkSQLDriver" url="jdbc:sparksql:Server=127.0.0.1;" />
  <document>
    <entity name="Customers"
            query="SELECT Id,SparkSQLColumn1,SparkSQLColumn2,SparkSQLColumn3,SparkSQLColumn4,SparkSQLColumn5,SparkSQLColumn6,SparkSQLColumn7,LastModifiedDate FROM Customers"
            deltaQuery="SELECT Id FROM Customers where LastModifiedDate >= '${dataimporter.last_index_time}'"
            deltaImportQuery="SELECT Id,SparkSQLColumn1,SparkSQLColumn2,SparkSQLColumn3,SparkSQLColumn4,SparkSQLColumn5,SparkSQLColumn6,SparkSQLColumn7,LastModifiedDate FROM Customers where Id=${dataimporter.delta.Id}">
      <field column="Id" name="Id" />
      <field column="SparkSQLColumn1" name="SparkSQLColumn1" />
      <field column="SparkSQLColumn2" name="SparkSQLColumn2" />
      <field column="SparkSQLColumn3" name="SparkSQLColumn3" />
      <field column="SparkSQLColumn4" name="SparkSQLColumn4" />
      <field column="SparkSQLColumn5" name="SparkSQLColumn5" />
      <field column="SparkSQLColumn6" name="SparkSQLColumn6" />
      <field column="SparkSQLColumn7" name="SparkSQLColumn7" />
      <field column="LastModifiedDate" name="LastModifiedDate" />
    </entity>
  </document>
</dataConfig>
```

- In the query attribute, set the SQL query that selects the data from Spark. deltaQuery returns the Id values of rows whose LastModifiedDate is on or after the time of the previous import (${dataimporter.last_index_time}), and deltaImportQuery fetches each of those rows by Id (${dataimporter.delta.Id}). Solr uses these two queries for incremental updates, from the second import of the same entity onward.

- After all settings are done, restart Solr.

```
> solr stop -all
> solr start
```
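To make the deltaQuery and deltaImportQuery pair concrete, the Python sketch below replays the same two-step logic against an in-memory SQLite table standing in for the Spark Customers table. It is an illustration only: the rows are hypothetical, only one SparkSQLColumn is kept, and SQLite bind parameters replace DIH's ${dataimporter.last_index_time} and ${dataimporter.delta.Id} placeholders. The timestamps use the yyyy-MM-dd HH:mm:ss layout that DIH uses for last_index_time by default, so plain string comparison orders them correctly.

```python
import sqlite3

# In-memory stand-in for the Spark "Customers" table (hypothetical data).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE Customers "
    "(Id INTEGER PRIMARY KEY, SparkSQLColumn1 TEXT, LastModifiedDate TEXT)"
)
conn.executemany(
    "INSERT INTO Customers VALUES (?, ?, ?)",
    [
        (1, "alpha", "2020-06-01 09:00:00"),
        (2, "beta", "2020-06-01 09:00:00"),
        (3, "gamma", "2020-06-03 12:30:00"),  # modified after the last import
    ],
)

COLUMNS = ("Id", "SparkSQLColumn1", "LastModifiedDate")


def delta_import(conn, last_index_time):
    """Mimic DIH's two-step delta: find changed keys, then fetch each row."""
    # Step 1 (deltaQuery): keys of rows modified since the last import.
    changed_ids = [
        row[0]
        for row in conn.execute(
            "SELECT Id FROM Customers WHERE LastModifiedDate >= ?",
            (last_index_time,),
        )
    ]
    # Step 2 (deltaImportQuery): fetch the full row for each changed key,
    # the role ${dataimporter.delta.Id} plays in the DIH configuration.
    docs = []
    for pk in changed_ids:
        row = conn.execute(
            "SELECT Id, SparkSQLColumn1, LastModifiedDate FROM Customers WHERE Id = ?",
            (pk,),
        ).fetchone()
        docs.append(dict(zip(COLUMNS, row)))
    return docs


docs = delta_import(conn, last_index_time="2020-06-02 00:00:00")
print(docs)
```

Only row 3, modified after the assumed last import time, is returned; that is the set of documents a delta-import would re-index, while rows 1 and 2 stay as they were indexed by the earlier full import.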

Run a DataImport of Spark Data

- Execute DataImport from the URL below:

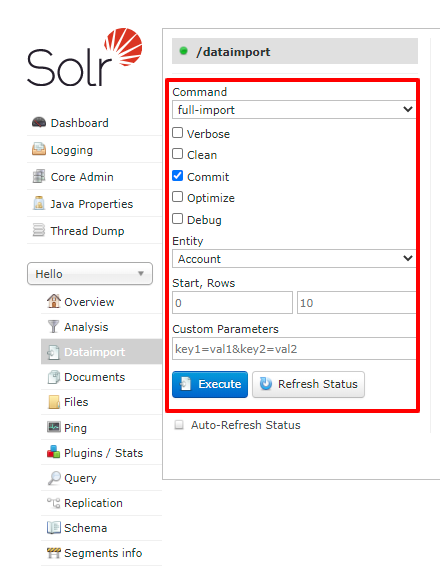

http://localhost:8983/solr/#/CDataCore/dataimport//dataimport![Load Spark data to Solr using Data Import.]()

- Select the "full-import" Command, choose the table from Entity, and click "Execute."

![Execute full import in Solr.]()

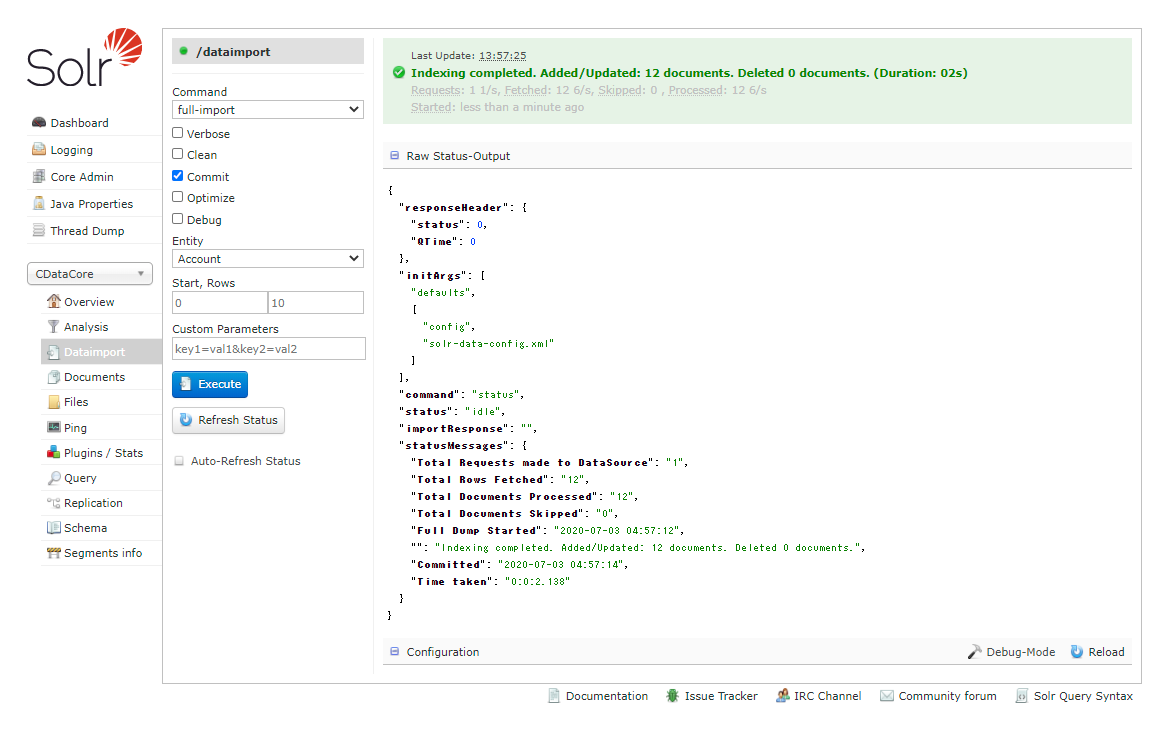

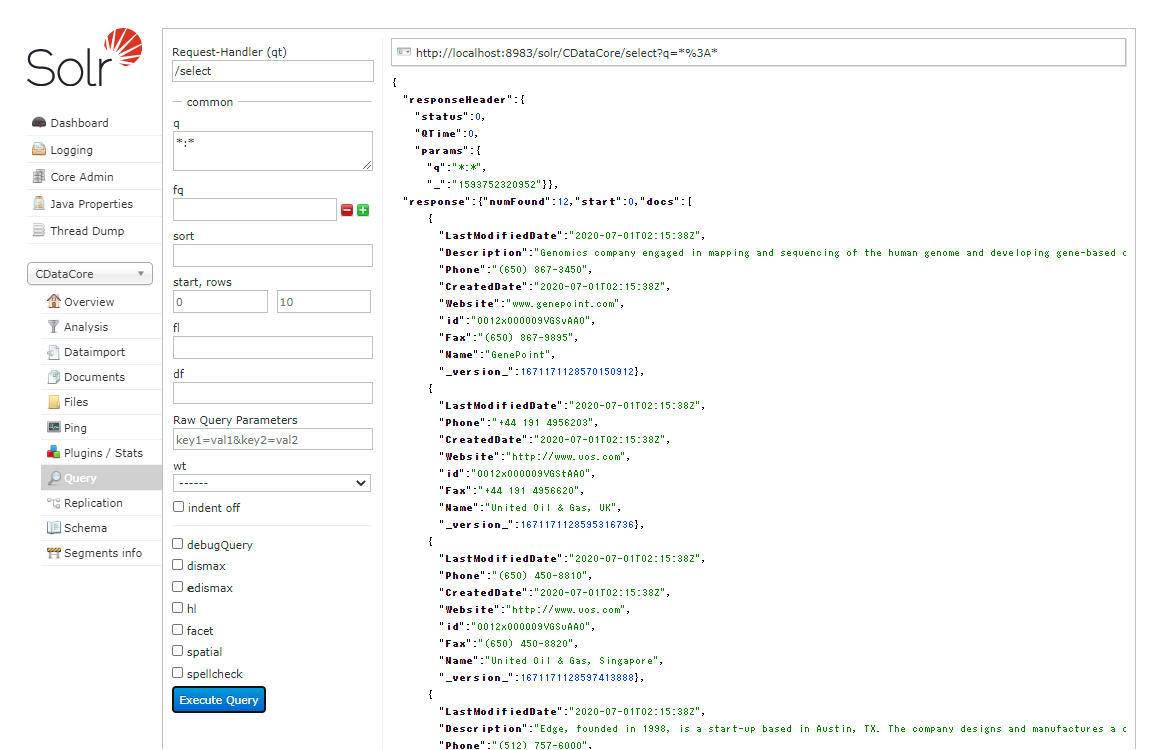

- Check the result of the import on the Query page.

![Check the full import result of Spark.]()

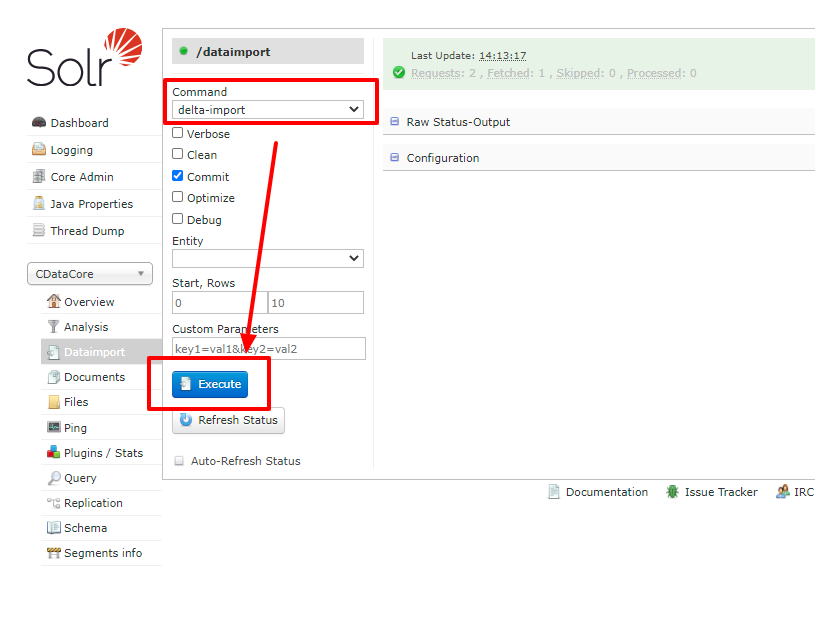

- Try an incremental update using deltaQuery. Modify some data in the original Spark data set, then select the "delta-import" command from the DataImport page this time and click "Execute."

![Execute Delta import in Solr.]()

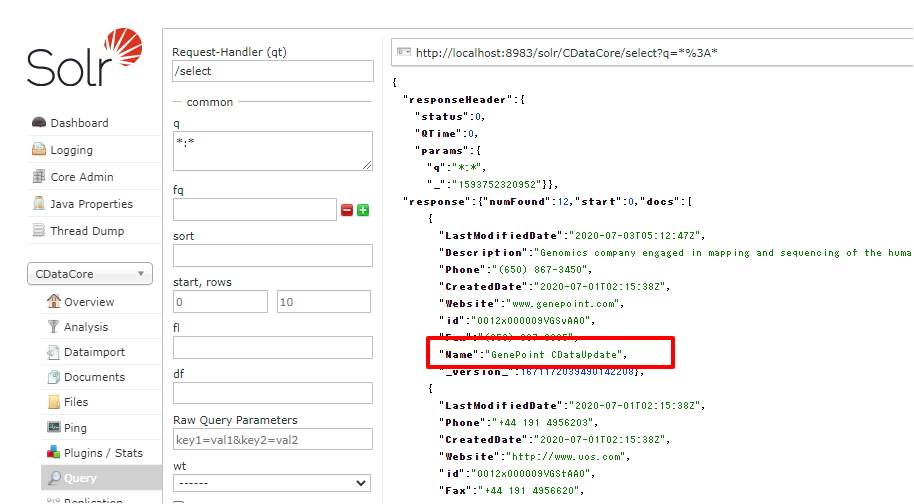

- Check the result of the incremental update.

![Check the delta import result of Spark.]()
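The same commands can also be sent to the request handler directly instead of through the admin UI. The Python sketch below assumes the local standalone setup used in this article, where the handler registered as /dataimport is served at http://localhost:8983/solr/CDataCore/dataimport (the #/CDataCore/dataimport//dataimport address above is the admin UI page for it). It uses DIH's command, entity, and commit request parameters and its status command; the helper names, polling interval, initial wait, and timeout are illustrative choices, not part of Solr.

```python
import json
import time
import urllib.parse
import urllib.request

SOLR_BASE = "http://localhost:8983/solr"  # assumed local, standalone Solr
CORE = "CDataCore"


def dih_url(command, **params):
    """Build a URL for the core's /dataimport request handler."""
    query = {"command": command, "wt": "json", **params}
    return f"{SOLR_BASE}/{CORE}/dataimport?{urllib.parse.urlencode(query)}"


def get_json(url):
    """Send a GET request and decode the JSON response body."""
    with urllib.request.urlopen(url) as resp:
        return json.load(resp)


def run_import(command="full-import", entity="Customers",
               poll_seconds=2.0, timeout=600.0):
    """Start an import, then poll the status command until DIH is idle."""
    get_json(dih_url(command, entity=entity, commit="true"))
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        # Wait before each check so the import thread has time to start.
        time.sleep(poll_seconds)
        status = get_json(dih_url("status"))
        if status.get("status") == "idle":
            # Return the statusMessages section (rows fetched, time taken, ...).
            return status.get("statusMessages", {})
    raise TimeoutError(f"{command} did not finish within {timeout} seconds")


def count_docs():
    """Return numFound for a match-all query, like the admin UI Query page."""
    query = urllib.parse.urlencode({"q": "*:*", "rows": 0})
    return get_json(f"{SOLR_BASE}/{CORE}/select?{query}")["response"]["numFound"]
```

For example, calling run_import("delta-import") and then count_docs() repeats the incremental-update step above and reports how many documents the core holds afterward.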

With the CData JDBC Driver for Spark, you can create an automated import of Spark data into Apache Solr. Download a free, 30-day trial of any of the 200+ CData JDBC Drivers and get started today.