Create Datasets from Spark in Domo Workbench and Build Visualizations of Spark Data in Domo

Use the CData ODBC Driver for Spark to create datasets from Spark data in Domo Workbench and then build visualizations in the Domo service.

Domo helps you manage, analyze, and share data across your entire organization, enabling decision makers to identify and act on strategic opportunities. Domo Workbench provides a secure, client-side solution for uploading your on-premises data to Domo. The CData ODBC Driver for Spark links Domo Workbench to operational Spark data. You can build datasets from Spark data using standard SQL queries in Workbench and then create visualizations of Spark data in the Domo service that update whenever the dataset job runs.

The CData ODBC Drivers offer unmatched performance for interacting with live Spark data in Domo due to optimized data processing built into the driver. When you issue complex SQL queries from Domo to Spark, the driver pushes supported SQL operations, like filters and aggregations, directly to Spark and utilizes the embedded SQL Engine to process unsupported operations (often SQL functions and JOIN operations) client-side. With built-in dynamic metadata querying, you can visualize and analyze Spark data using native Domo data types.
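To illustrate why pushdown matters, the Python sketch below contrasts a query that lets the data source filter and aggregate with a naive approach that pulls every row and counts it client-side. It assumes any DB-API 2.0 connection (for example, one opened with the third-party pyodbc package against your Spark DSN). The `Country` column is hypothetical, and the in-memory SQLite `demo_connection` is only a stand-in so the functions can be tried without Spark.

```python
import sqlite3
from collections import Counter
from typing import Any

# Hypothetical query: "Country" is an assumed column of the Customers table.
# The WHERE filter and GROUP BY aggregation are the kind of work a driver can push down.
PUSHDOWN_SQL = (
    "SELECT Country, COUNT(*) AS CustomerCount "
    "FROM Customers "
    "WHERE Country IS NOT NULL "
    "GROUP BY Country"
)


def counts_pushed_down(conn: Any) -> dict[str, int]:
    """Let the data source filter and aggregate; one row per country comes back."""
    cur = conn.cursor()
    cur.execute(PUSHDOWN_SQL)
    result = {country: count for country, count in cur.fetchall()}
    cur.close()
    return result


def counts_client_side(conn: Any) -> dict[str, int]:
    """For comparison only: fetch every row, then filter and count in Python."""
    cur = conn.cursor()
    cur.execute("SELECT Country FROM Customers")
    result = dict(Counter(row[0] for row in cur.fetchall() if row[0] is not None))
    cur.close()
    return result


def demo_connection() -> sqlite3.Connection:
    """In-memory SQLite stand-in for the Spark DSN, used only to try the functions."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Customers (Id INTEGER, Country TEXT)")
    conn.executemany(
        "INSERT INTO Customers VALUES (?, ?)",
        [(1, "US"), (2, "US"), (3, "DE"), (4, None)],
    )
    return conn
```

Both functions return the same counts, but with the first one only a single row per country is returned to the client. Whether a particular filter or aggregate is actually pushed to Spark is decided by the driver.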

Connect to Spark as an ODBC Data Source

If you have not already, first specify connection properties in an ODBC DSN (data source name). This is the last step of the driver installation. You can use the Microsoft ODBC Data Source Administrator to create and configure ODBC DSNs.

Set the Server, Database, User, and Password connection properties to connect to SparkSQL.
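If you want to test these properties from a script as well, they can be expressed as an ODBC connection string of `key=value;` pairs. The sketch below assembles one and, following the common ODBC convention, wraps values containing special characters in braces and doubles any `}` inside them. The host, database, and credentials are placeholders, and which characters trigger bracing is a deliberately conservative choice.

```python
# Characters treated as requiring braces (conservative: '=' is often tolerated unbraced).
_SPECIAL = set(";{}=")


def quote_odbc_value(value: str) -> str:
    """Wrap a value in braces when needed, doubling any closing brace inside it."""
    if any(ch in _SPECIAL for ch in value) or value != value.strip():
        return "{" + value.replace("}", "}}") + "}"
    return value


def build_connection_string(props: dict[str, str]) -> str:
    """Join key=value pairs with semicolons, e.g. Server, Database, User, Password."""
    return "".join(f"{key}={quote_odbc_value(val)};" for key, val in props.items())
```

The resulting string could be passed to `pyodbc.connect()`. For a DSN-less string you would also need a `Driver` keyword; use the exact driver name shown in the ODBC Data Source Administrator rather than guessing it.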

When you configure the DSN, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.
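Before using the DSN in Workbench, you can sanity-check it from a script. This Python sketch opens the DSN with the third-party pyodbc package and reads at most `limit` rows with the standard DB-API `fetchmany()` call. Unlike the driver's Max Rows property, `fetchmany()` only stops reading on the client side, so treat it as a preview aid rather than a substitute. The DSN name `CData SparkSQL Sys` matches the one selected in Workbench later in this article; the SQLite `demo_connection` is a stand-in for offline testing.

```python
import sqlite3
from typing import Any


def connect_to_dsn(dsn: str = "CData SparkSQL Sys") -> Any:
    """Open the ODBC DSN with pyodbc (third-party; imported lazily so the rest runs without it)."""
    import pyodbc

    return pyodbc.connect(f"DSN={dsn}")


def preview_query(conn: Any, sql: str, limit: int = 10) -> tuple[list[str], list[tuple]]:
    """Run sql and return the column names plus at most `limit` rows."""
    cur = conn.cursor()
    cur.execute(sql)
    columns = [desc[0] for desc in cur.description]
    rows = [tuple(row) for row in cur.fetchmany(limit)]
    cur.close()
    return columns, rows


def demo_connection() -> sqlite3.Connection:
    """In-memory SQLite stand-in for the Spark DSN, for trying preview_query offline."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Customers (Id INTEGER, Name TEXT)")
    conn.executemany(
        "INSERT INTO Customers VALUES (?, ?)",
        [(i, f"Customer {i}") for i in range(1, 26)],
    )
    return conn
```

Against the real DSN, `preview_query(connect_to_dsn(), "SELECT * FROM Customers", limit=5)` would return the column names and first rows that Workbench's own preview is expected to show.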

After creating a DSN, you will need to create a dataset for Spark in Domo Workbench using the Spark DSN and build a visualization in the Domo service based on the dataset.

Build a Dataset for Spark Data

Follow the steps below to build a dataset in Domo Workbench from a Spark table, using the CData ODBC Driver for Spark.

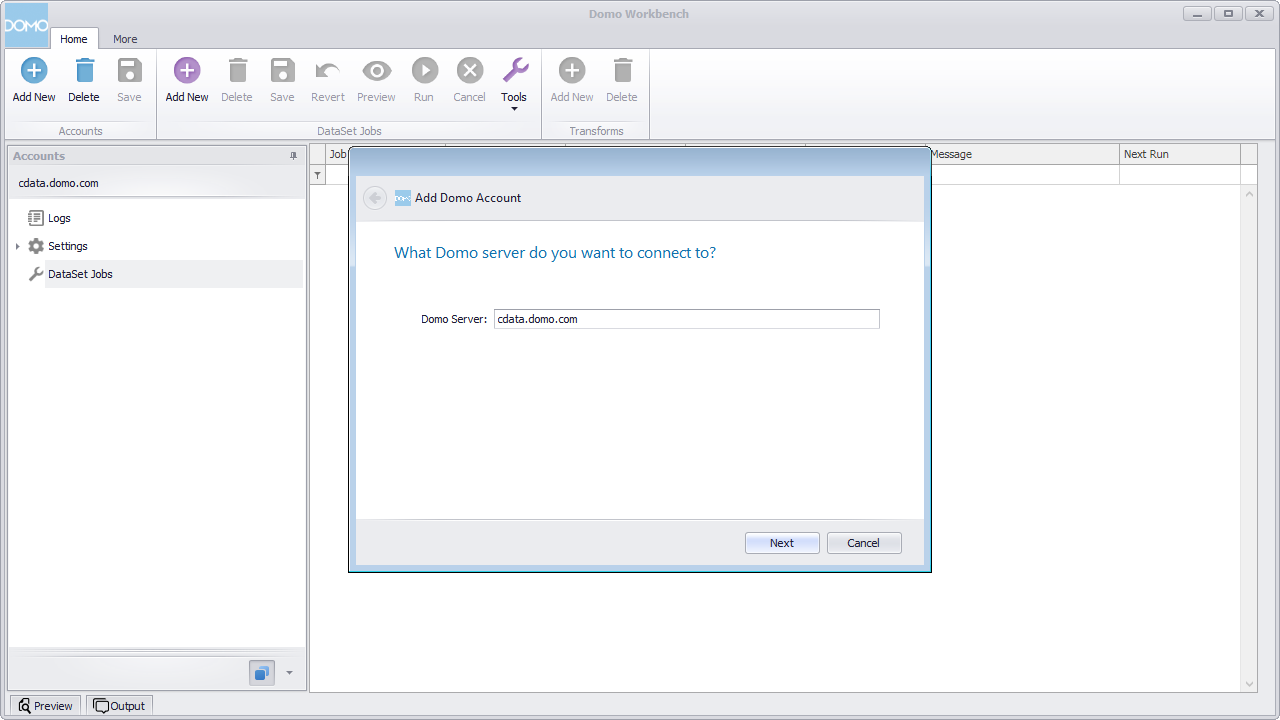

- Open Domo Workbench and, if you have not already, add your Domo service server to Workbench. In the Accounts submenu, click Add New, type in the server address (i.e., domain.domo.com) and click through the wizard to authenticate.

![Connecting to the Domo Service.]()

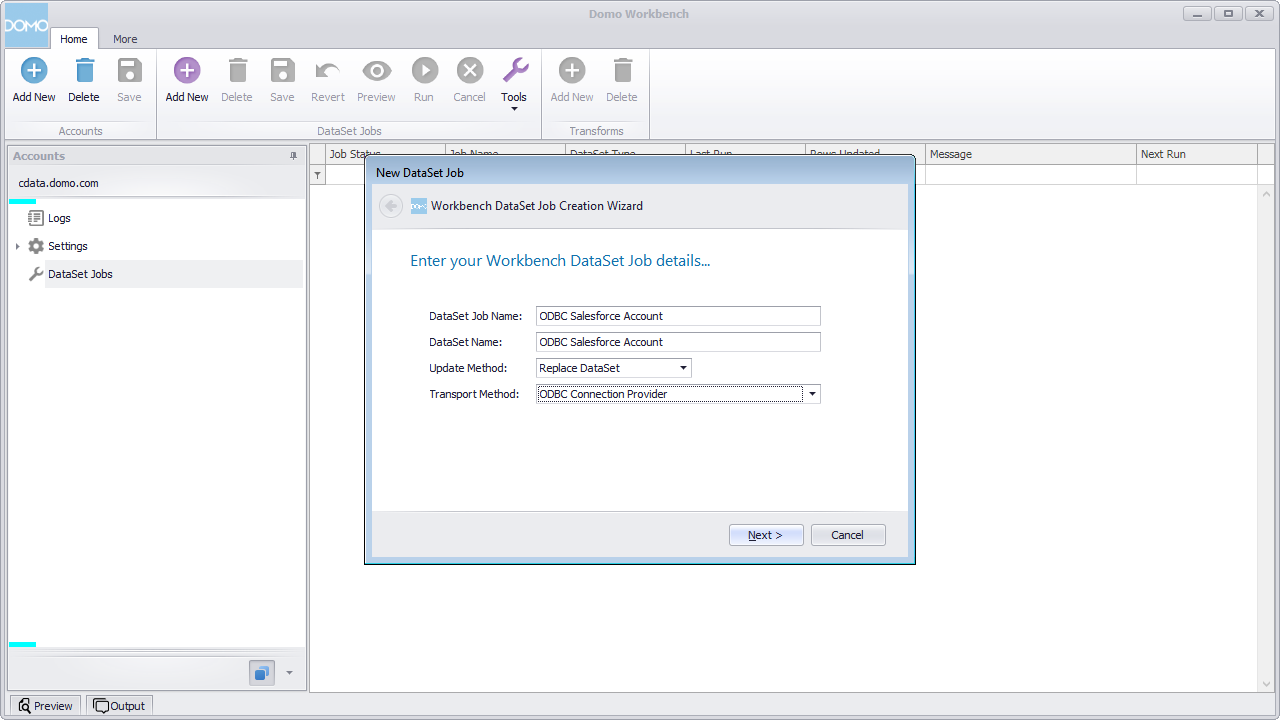

- In the DataSet Jobs submenu, click Add New.

- Name the dataset job (i.e., ODBC Spark Customers), select ODBC Connection Provider as the transport method, and click through the wizard.

![Configuring the DataSet Job.]()

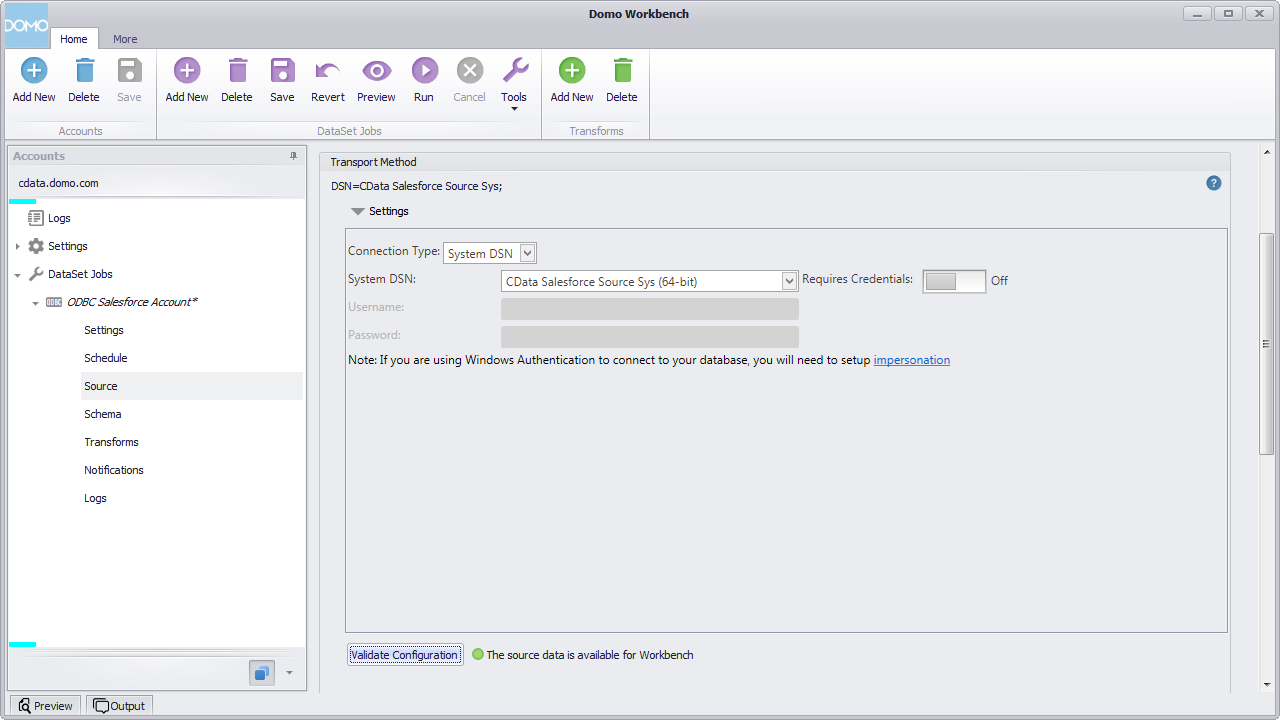

- In the newly created DataSet Job, navigate to Source and click to configure the settings.

- Select System DSN for the Connection Type.

- Select the previously configured DSN (CData SparkSQL Sys) for the System DSN.

- Click to validate the configuration.

![Configuring the Source Settings.]()
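If the DSN does not appear in the System DSN list, one quick check is to list the DSNs the ODBC driver manager knows about. The sketch below uses pyodbc's `dataSources()`, which returns a mapping of DSN names to driver descriptions. On Windows, 32-bit and 64-bit DSNs are registered separately, so the listing reflects the bitness of the Python interpreter you run it with, and it may include user DSNs as well as system DSNs.

```python
from typing import Mapping


def installed_dsns() -> dict[str, str]:
    """Return {DSN name: driver description} via pyodbc (third-party, imported lazily)."""
    import pyodbc

    return pyodbc.dataSources()


def check_dsn(sources: Mapping[str, str], dsn: str = "CData SparkSQL Sys") -> str:
    """Return the driver registered for `dsn`, or raise LookupError listing what exists."""
    if dsn not in sources:
        available = ", ".join(sorted(sources)) or "(none)"
        raise LookupError(f"ODBC DSN {dsn!r} not found; available: {available}")
    return sources[dsn]
```

Usage: `check_dsn(installed_dsns())` returns the driver behind `CData SparkSQL Sys`, or an error naming the DSNs that do exist.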

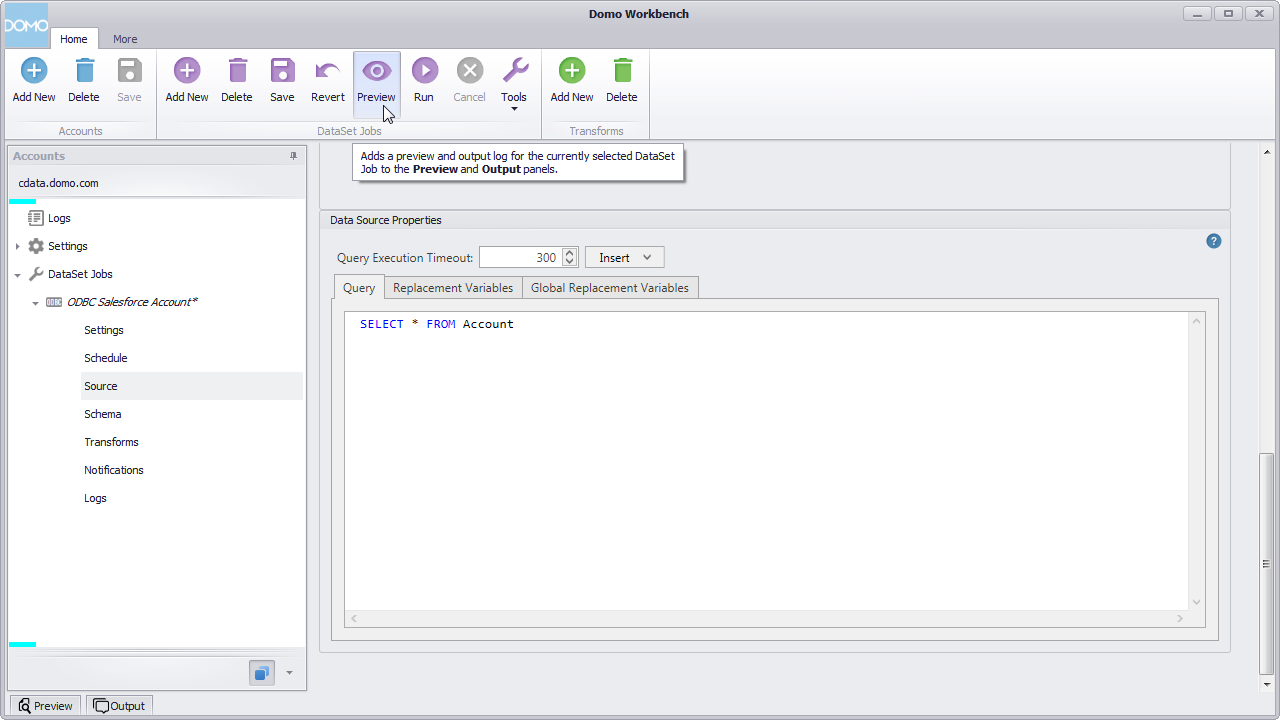

- Below the settings, set the Query to a SQL query:

SELECT * FROM CustomersNOTE: By connecting to Spark data using an ODBC driver, you simply need to know SQL in order to get your data, circumventing the need to know Spark-specific APIs or protocols. - Click preview.

![Querying Spark Data.]()

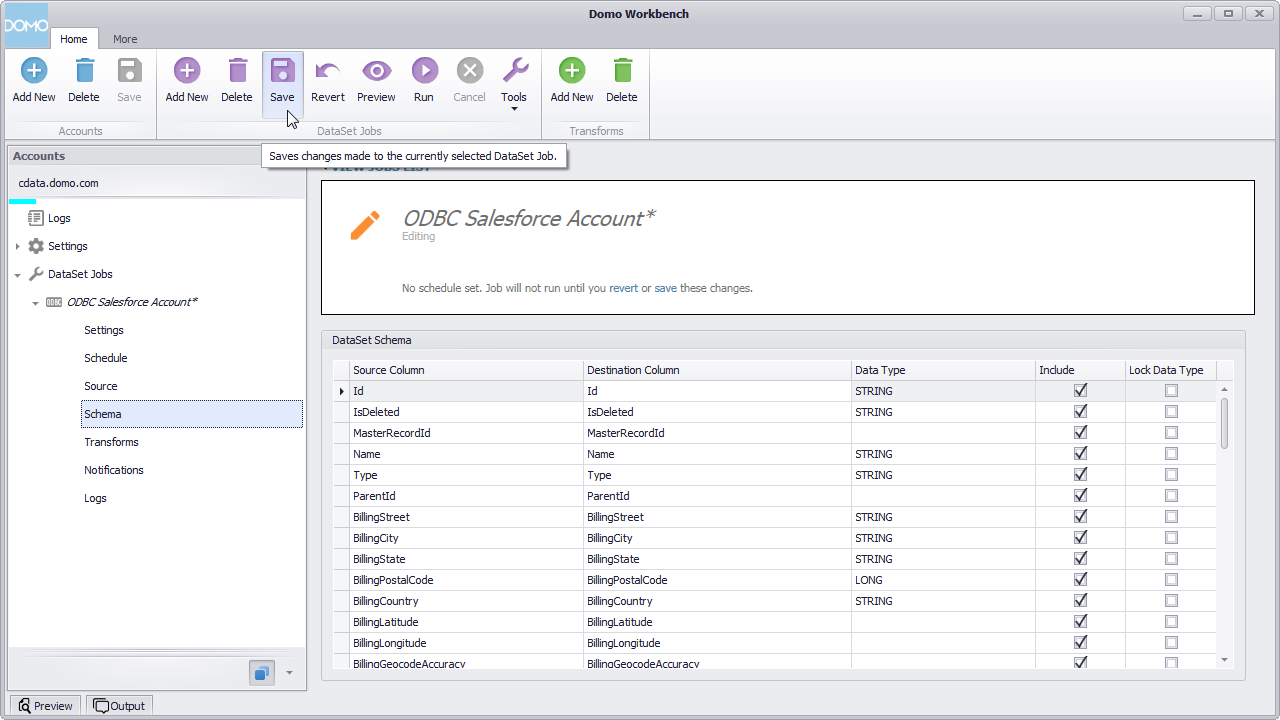

- Check over the generated schema, add any transformations, then save and run the dataset job.

![Save and Run the Configured DataSet Job (Salesforce is shown).]()
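Workbench infers a schema from the query result. To cross-check it, this sketch samples the first rows of the same query through any DB-API connection and records which Python types actually occur in each column. A column with no recorded types was NULL in every sampled row, and one with several types may deserve an explicit transformation. The sample size, the column names, and the SQLite stand-in are illustrative assumptions.

```python
import sqlite3
from typing import Any


def sample_column_types(conn: Any, sql: str, sample_size: int = 100) -> dict[str, set[str]]:
    """Map each result column to the Python type names seen in up to sample_size rows."""
    cur = conn.cursor()
    cur.execute(sql)
    columns = [desc[0] for desc in cur.description]
    seen: dict[str, set[str]] = {name: set() for name in columns}
    for row in cur.fetchmany(sample_size):
        for name, value in zip(columns, row):
            if value is not None:  # NULLs say nothing about the column's type
                seen[name].add(type(value).__name__)
    cur.close()
    return seen


def demo_connection() -> sqlite3.Connection:
    """In-memory SQLite stand-in with hypothetical columns, for offline testing."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Customers (Id INTEGER, Name TEXT, Balance REAL, Notes TEXT)")
    conn.executemany(
        "INSERT INTO Customers VALUES (?, ?, ?, ?)",
        [(1, "Acme", 120.5, None), (2, "Globex", 80.0, None)],
    )
    return conn
```

Note that duplicate column names in a result would collapse into one dictionary key, so give computed columns distinct aliases.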

Once the dataset job has run, the dataset is accessible from the Domo service, where you can build visualizations, reports, and more based on Spark data.

Create Data Visualizations

With the DataSet Job saved and run in Domo Workbench, we are ready to build visualizations of the Spark data in the Domo service.

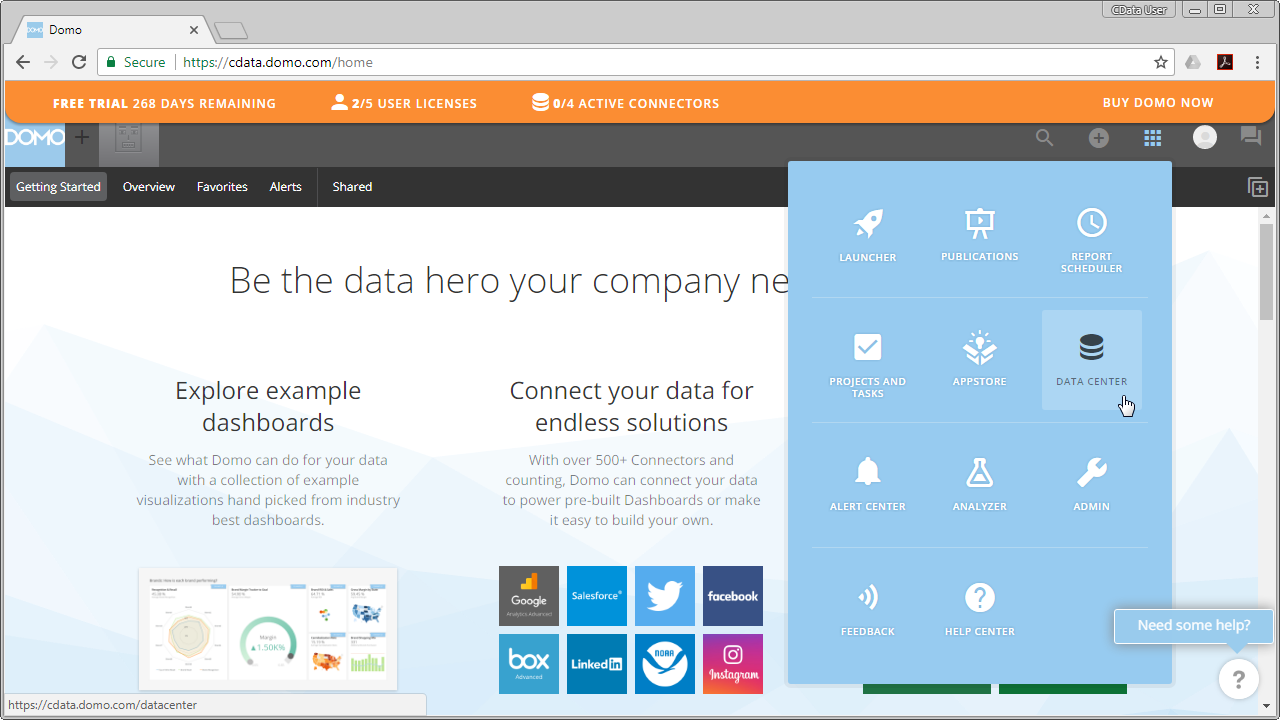

- Navigate to the Data Center.

![Accessing the Data Center (Salesforce is shown).]()

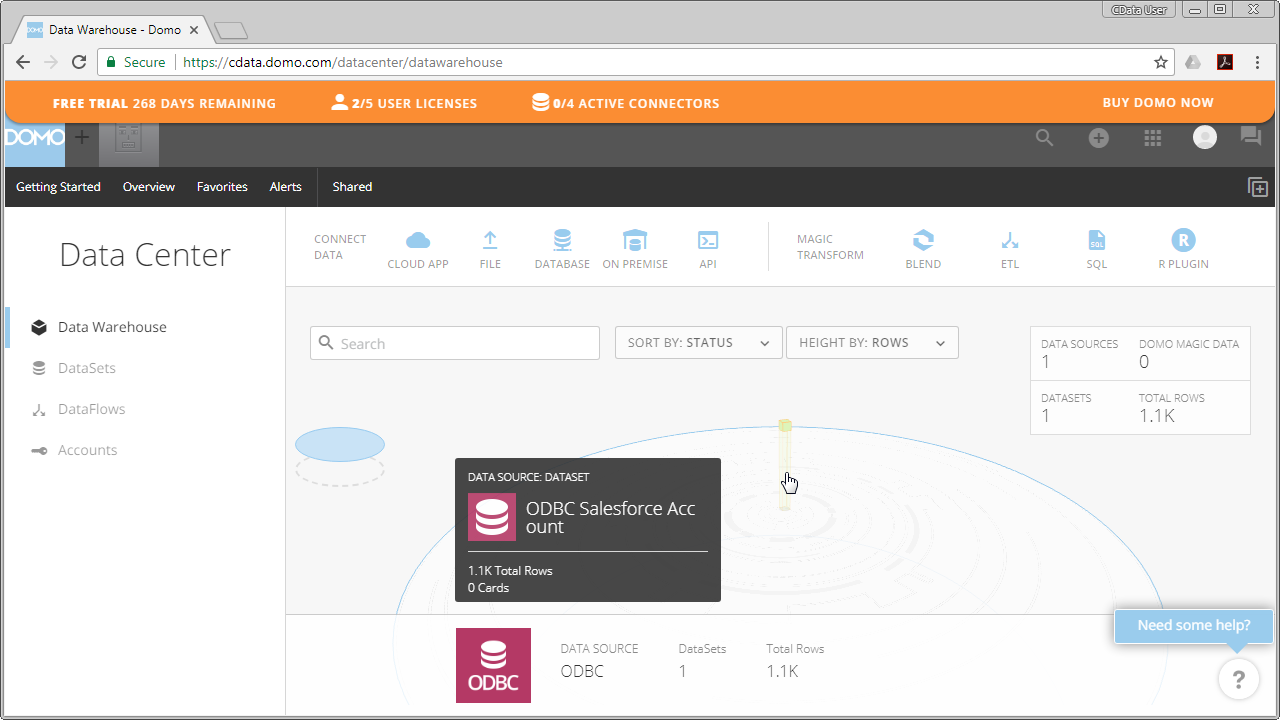

- In the data warehouse, select the ODBC data source and drill down to our new dataset.

![Selecting the Spark Dataset (Salesforce is shown).]()

- With the dataset selected, choose to create a visualization.

- In the new card:
  - Drag a Dimension to the X Value.
  - Drag a Measure to the Y Value.
  - Choose a Visualization.

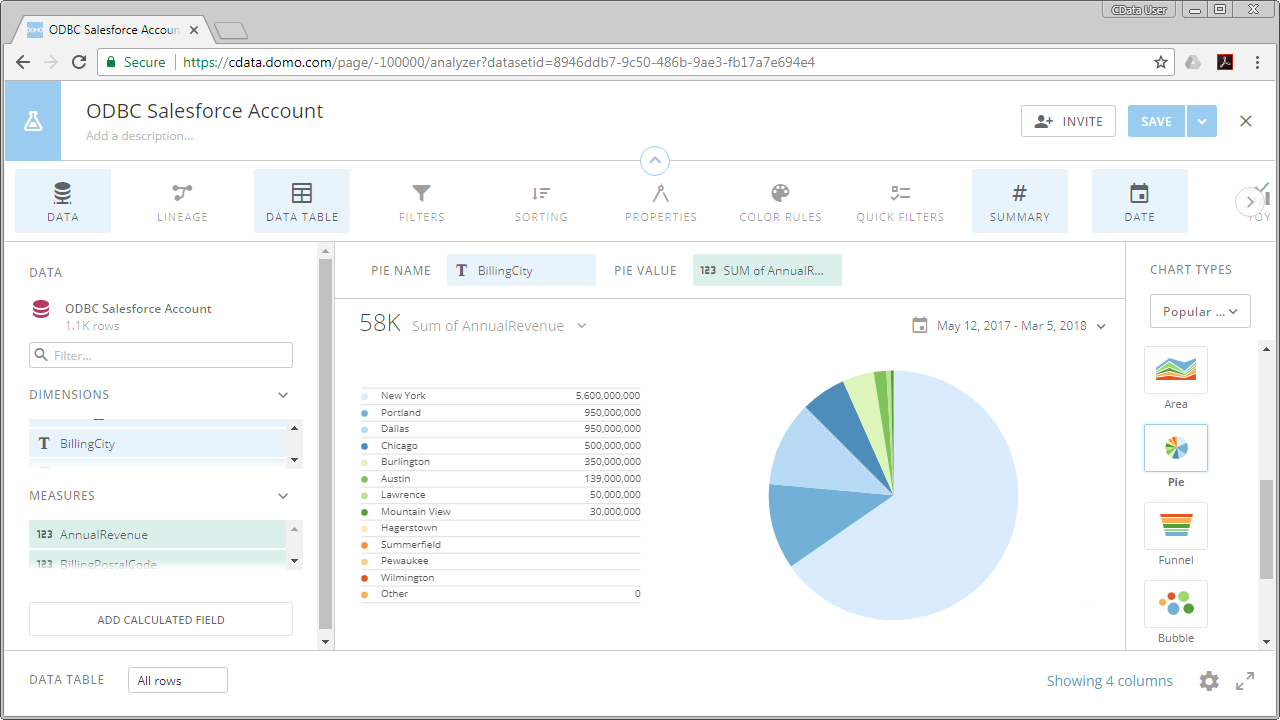

![Visualizing Spark Data in Domo (Salesforce is shown).]()
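Conceptually, a bar card with a Dimension on the X axis and a Measure on the Y axis groups rows by the dimension and aggregates the measure. The Python sketch below reproduces that calculation for spot-checking a card against source rows. It assumes a Sum aggregation (Domo offers others as well) and hypothetical `Country` and `Balance` columns; rows whose measure is NULL are skipped.

```python
from collections import defaultdict
from typing import Any, Iterable, Mapping


def card_series(rows: Iterable[Mapping[str, Any]], dimension: str, measure: str) -> dict[Any, float]:
    """Sum `measure` for each distinct `dimension` value, skipping NULL measures."""
    totals: defaultdict = defaultdict(float)
    for row in rows:
        value = row.get(measure)
        if value is None:
            continue
        totals[row.get(dimension)] += float(value)
    return dict(totals)
```

For example, `card_series(rows, "Country", "Balance")` yields one total per country, which should match the bar heights if the card sums `Balance` by `Country`.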

With the CData ODBC Driver for Spark, you can build custom datasets based on Spark data using only SQL in Domo Workbench and then build and share visualizations and reports through the Domo service.