Access Azure Data Lake Storage Data in Mule Applications Using the CData JDBC Driver

Create a simple Mule Application that uses HTTP and SQL with CData JDBC drivers to create a JSON endpoint for Azure Data Lake Storage data.

The CData JDBC Driver for Azure Data Lake Storage connects Azure Data Lake Storage data to Mule applications, enabling read access with familiar SQL queries. With the JDBC Driver, you can create Mule applications that back up, transform, report on, and analyze Azure Data Lake Storage data.

This article demonstrates how to use the CData JDBC Driver for Azure Data Lake Storage inside a Mule project to create a Web interface for Azure Data Lake Storage data. The resulting application lets you request Azure Data Lake Storage data with an HTTP request and returns the results as JSON. The same procedure can be used with any CData JDBC Driver to create a Web interface for the 200+ available data sources.

- Create a new Mule Project in Anypoint Studio.

- Add an HTTP Connector to the Message Flow.

- Configure the address for the HTTP Connector.

![Add and Configure the HTTP Connector]()

- Add a Database Select Connector to the same flow, after the HTTP Connector.

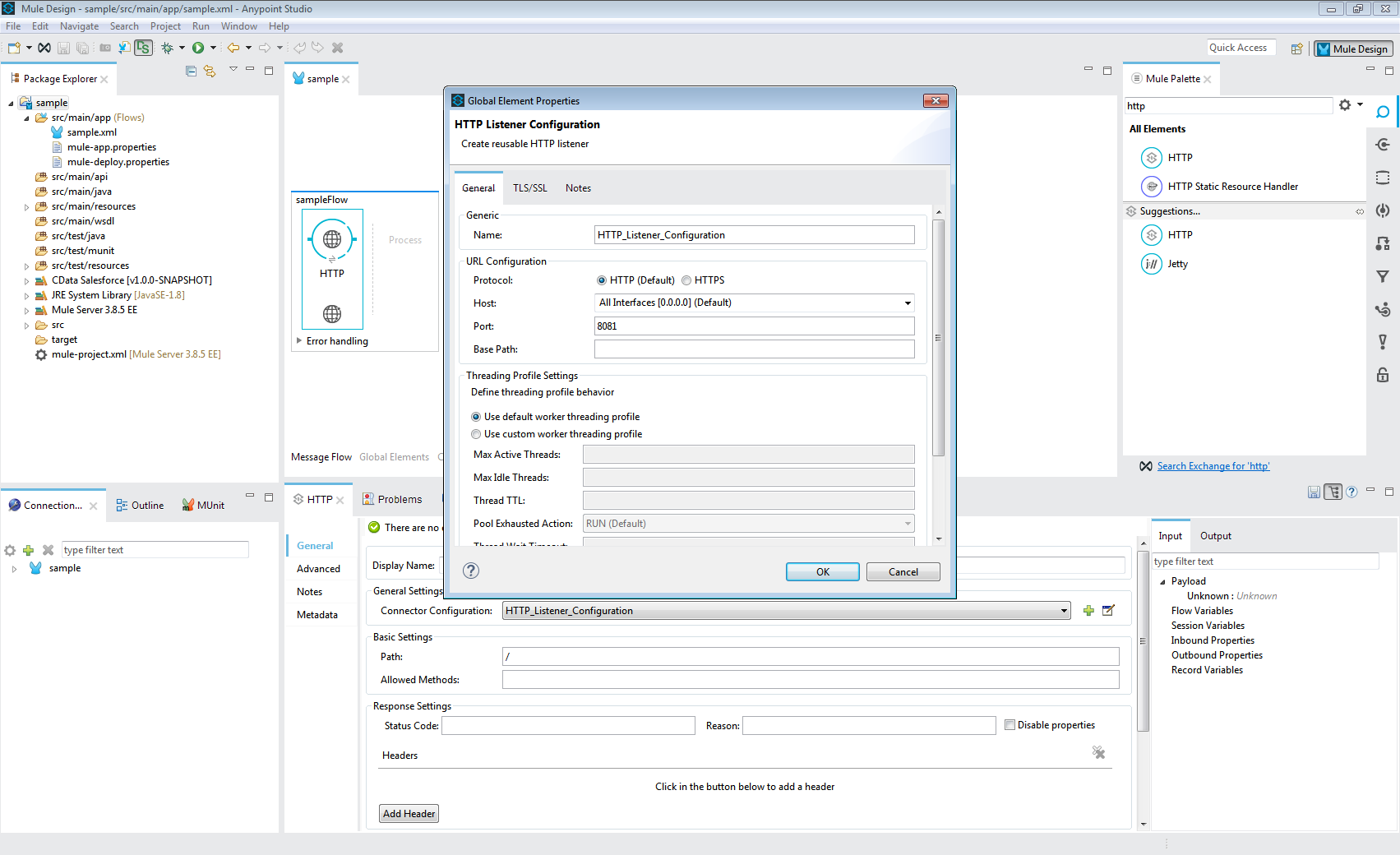

- Create a new Connection (or edit an existing one) and configure the properties.

- Set Connection to "Generic Connection".

- Select the CData JDBC Driver JAR file in the Required Libraries section (e.g. cdata.jdbc.adls.jar).

![Adding the JAR file (Salesforce is shown).]()

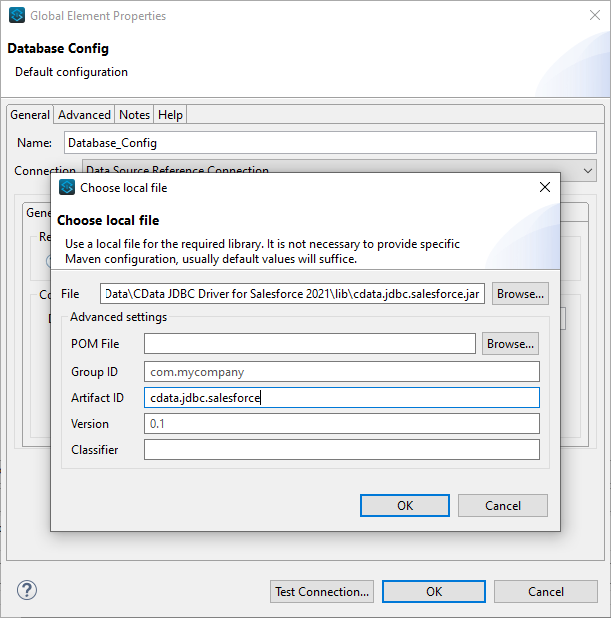

- Set the URL to the connection string for Azure Data Lake Storage.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

For this, an Active Directory web application is required. Create one in Azure AD before continuing.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the TenantId property in the driver documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key used to authenticate calls to the API. See the AccessKey property in the driver documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Azure Data Lake Storage JDBC Driver. Either double-click the JAR file or execute it from the command line:

    java -jar cdata.jdbc.adls.jar

Fill in the connection properties and copy the connection string to the clipboard.
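If you prefer to assemble the URL by hand, the properties listed above can be joined into a semicolon-separated string. The following is a minimal sketch, not the driver's own API: the `jdbc:adls:` prefix is an assumption inferred from the driver JAR name, the class and method names are hypothetical, and the account values are placeholders. Compare the result with the string produced by the connection string designer before relying on it.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.stream.Collectors;

class AdlsJdbcUrl {
    // Assumption: URL prefix inferred from the JAR name (cdata.jdbc.adls.jar).
    static final String PREFIX = "jdbc:adls:";

    // Joins properties as Key=Value pairs separated by semicolons.
    // Values containing ';' are not escaped in this sketch.
    static String build(Map<String, String> props) {
        return props.entrySet().stream()
                .map(e -> e.getKey() + "=" + e.getValue())
                .collect(Collectors.joining(";", PREFIX, ";"));
    }

    // Gen 1: OAuth properties from the Azure AD application.
    static String gen1(String account, String clientId, String clientSecret, String tenantId) {
        Map<String, String> p = new LinkedHashMap<>();
        p.put("Schema", "ADLSGen1");
        p.put("Account", account);
        p.put("OAuthClientId", clientId);
        p.put("OAuthClientSecret", clientSecret);
        p.put("TenantId", tenantId);
        return build(p);
    }

    // Gen 2: file system plus access key.
    static String gen2(String account, String fileSystem, String accessKey) {
        Map<String, String> p = new LinkedHashMap<>();
        p.put("Schema", "ADLSGen2");
        p.put("Account", account);
        p.put("FileSystem", fileSystem);
        p.put("AccessKey", accessKey);
        return build(p);
    }

    public static void main(String[] args) {
        // Placeholder values; substitute your own account details.
        System.out.println(gen2("myaccount", "myfilesystem", "myaccesskey"));
    }
}
```

The optional Directory property can be added to either map in the same way when you do not want to use the root directory.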

- Set the Driver class name to cdata.jdbc.adls.ADLSDriver.

![A configured Database Connection (Salesforce is shown).]()

- Click Test Connection.

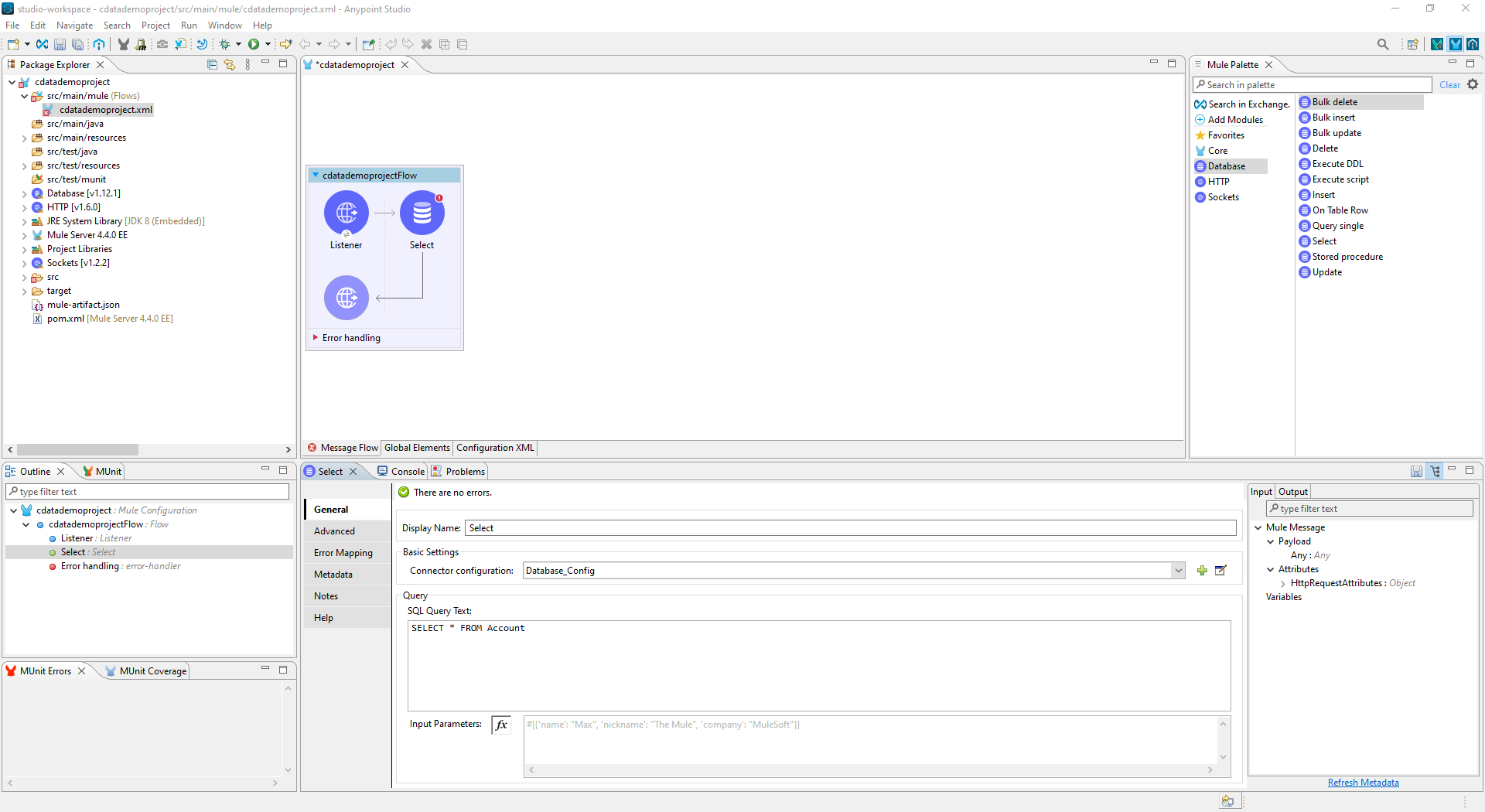

- Set the SQL Query Text to a SQL query to request Azure Data Lake Storage data. For example:

    SELECT FullPath, Permission FROM Resources WHERE Type = 'FILE'

![Configure the Select object (Salesforce is Shown)]()

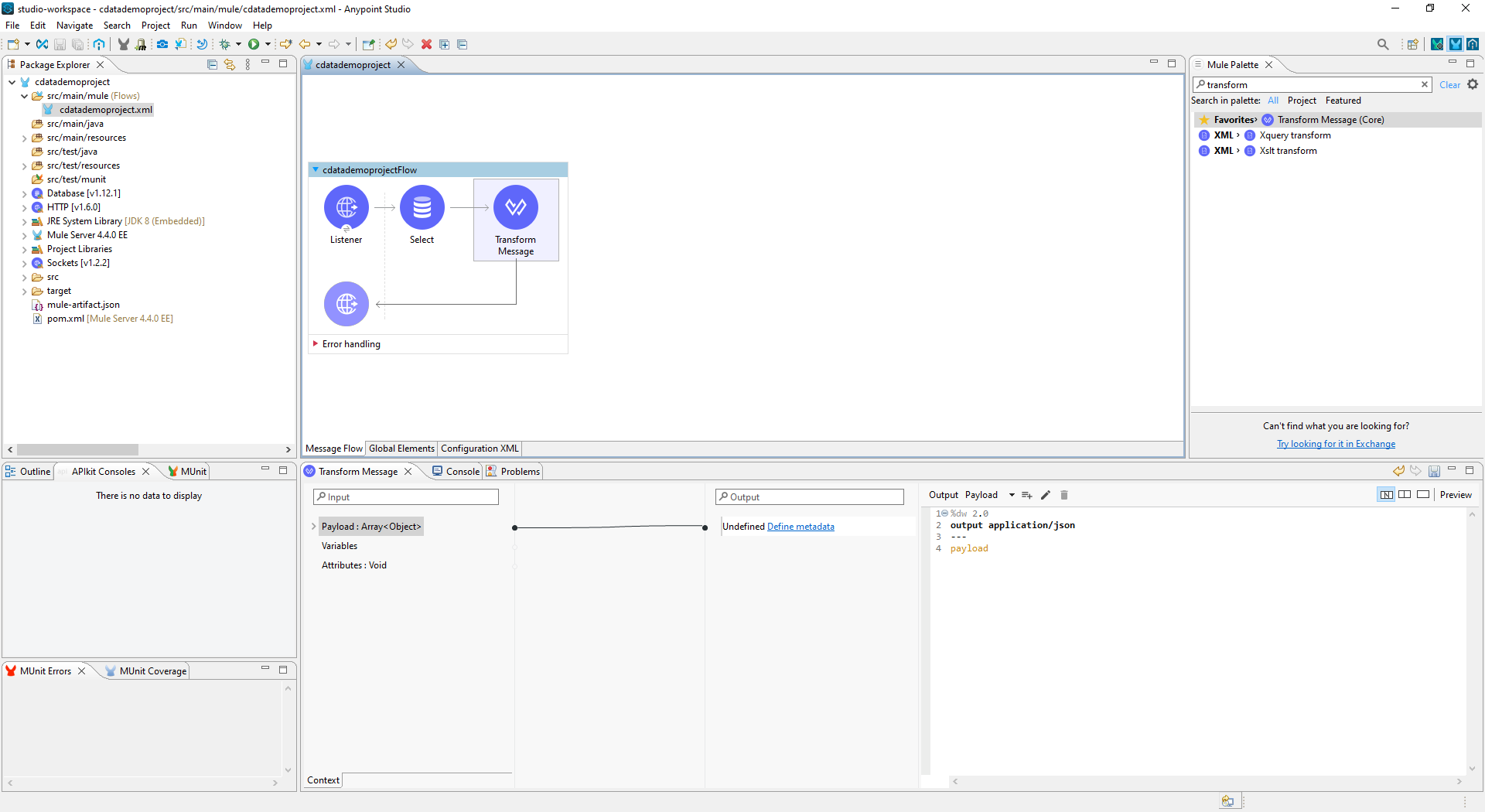
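To check the connection string and query outside Anypoint Studio, you can run the same SELECT through plain JDBC. This is a rough sketch under a few assumptions: the driver JAR is on the classpath and registers itself with `DriverManager` (otherwise load the driver class named above first), the JDBC URL is passed as the first command-line argument, and the class name `AdlsQueryCheck` is hypothetical.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class AdlsQueryCheck {
    // The example query from this article.
    static final String QUERY =
            "SELECT FullPath, Permission FROM Resources WHERE Type = 'FILE'";

    // Runs the query and returns each row as an ordered column-to-value map,
    // similar in shape to the payload the Database Select operation produces.
    static List<Map<String, Object>> fetch(String jdbcUrl) throws SQLException {
        List<Map<String, Object>> rows = new ArrayList<>();
        try (Connection conn = DriverManager.getConnection(jdbcUrl);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery(QUERY)) {
            ResultSetMetaData meta = rs.getMetaData();
            while (rs.next()) {
                Map<String, Object> row = new LinkedHashMap<>();
                for (int i = 1; i <= meta.getColumnCount(); i++) {
                    row.put(meta.getColumnLabel(i), rs.getObject(i));
                }
                rows.add(row);
            }
        }
        return rows;
    }

    public static void main(String[] args) {
        if (args.length == 0) {
            System.err.println("Usage: java AdlsQueryCheck <jdbc-url>");
            return;
        }
        try {
            for (Map<String, Object> row : fetch(args[0])) {
                System.out.println(row);
            }
        } catch (SQLException e) {
            // Raised when the driver is missing or the connection fails.
            System.err.println("Query failed: " + e.getMessage());
        }
    }
}
```

If the driver is not on the classpath, `DriverManager.getConnection` throws an `SQLException`, which is a quick way to spot a missing JAR before debugging the Mule flow itself.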

- Add a Transform Message Component to the flow.

- Set the Output script to the following to convert the payload to JSON:

    %dw 2.0
    output application/json
    ---
    payload

![Add the Transform Message Component to the Flow]()

- To view your Azure Data Lake Storage data, navigate to the address you configured for the HTTP Connector (localhost:8081 by default): http://localhost:8081. The Azure Data Lake Storage data is available as JSON in your Web browser and in any other tool capable of consuming JSON endpoints.
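The endpoint can also be consumed from code. The sketch below uses the standard `java.net.http.HttpClient` (Java 11 or later) to request the JSON and print it. It assumes the Mule application is running locally at the default address with the listener on the root path; the class name `MuleEndpointClient` is hypothetical.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

class MuleEndpointClient {
    // Sends a GET request and returns the response body as a string.
    static String fetch(String url) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(URI.create(url))
                .header("Accept", "application/json")
                .GET()
                .build();
        HttpResponse<String> response =
                client.send(request, HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IOException("Unexpected HTTP status " + response.statusCode());
        }
        return response.body();
    }

    public static void main(String[] args) throws Exception {
        // Default address from the article; pass another URL as the first argument.
        String url = args.length > 0 ? args[0] : "http://localhost:8081/";
        System.out.println(fetch(url));
    }
}
```

If you configured a different host, port, or path on the HTTP Connector, pass that address as the first argument.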

At this point, you have a simple Web interface for working with Azure Data Lake Storage data (as JSON data) in custom apps and a wide variety of BI, reporting, and ETL tools. Download a free, 30-day trial of the JDBC Driver for Azure Data Lake Storage and see the CData difference in your Mule Applications today.