Azure Data Lake Storage Reporting in OBIEE with the Azure Data Lake Storage JDBC Driver

Deploy the Azure Data Lake Storage JDBC driver on OBIEE to provide real-time reporting across the enterprise.

The CData JDBC Driver for Azure Data Lake Storage is a standard database driver that can integrate real-time access to Azure Data Lake Storage data into your Java-based reporting server. This article shows how to deploy the driver to Oracle Business Intelligence Enterprise Edition (OBIEE) and create reports on Azure Data Lake Storage data that reflect any changes.

Deploy the JDBC Driver

Follow the steps below to add the JDBC driver to WebLogic's classpath.

For WebLogic 12.2.1, simply place the driver JAR and .lic file into DOMAIN_HOME\lib; for example, ORACLE_HOME\user_projects\domains\MY_DOMAIN\lib. These files will be added to the server classpath at startup.

You can also add the driver to the classpath manually; this is required for earlier versions of WebLogic. Prepend the following to PRE_CLASSPATH in setDomainEnv.cmd (Windows) or setDomainEnv.sh (Unix). This script is located in the bin subfolder of the domain folder; for example, ORACLE_HOME\user_projects\domains\MY_DOMAIN\bin.

set PRE_CLASSPATH=your-installation-directory\lib\cdata.jdbc.adls.jar;%PRE_CLASSPATH%

Restart all servers; for example, run the stop and start commands in DOMAIN_HOME\bitools\bin.
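After the restart, one way to confirm that the driver JAR is actually visible is a small class-loading check run with the same classpath. The sketch below is only an illustration: the class name DriverClasspathCheck and its helper method are hypothetical, and only the driver class name cdata.jdbc.adls.ADLSDriver is taken from this article.

```java
// Hypothetical helper; only the driver class name comes from this article.
class DriverClasspathCheck {

    // Returns true if the named class can be loaded from the current classpath.
    static boolean isLoadable(String className) {
        try {
            Class.forName(className);
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            // Class is missing, or it was found but could not be linked or initialized.
            return false;
        }
    }

    public static void main(String[] args) {
        String driverClass = "cdata.jdbc.adls.ADLSDriver";
        System.out.println(driverClass + (isLoadable(driverClass)
                ? " is on the classpath"
                : " was NOT found on the classpath"));
    }
}
```

Running it with the driver JAR on the classpath should report that the class is available; if it does not, recheck the PRE_CLASSPATH entry or the contents of DOMAIN_HOME\lib.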

Create a JDBC Data Source for Azure Data Lake Storage

After deploying the JDBC driver, you can create a JDBC data source from BI Publisher.

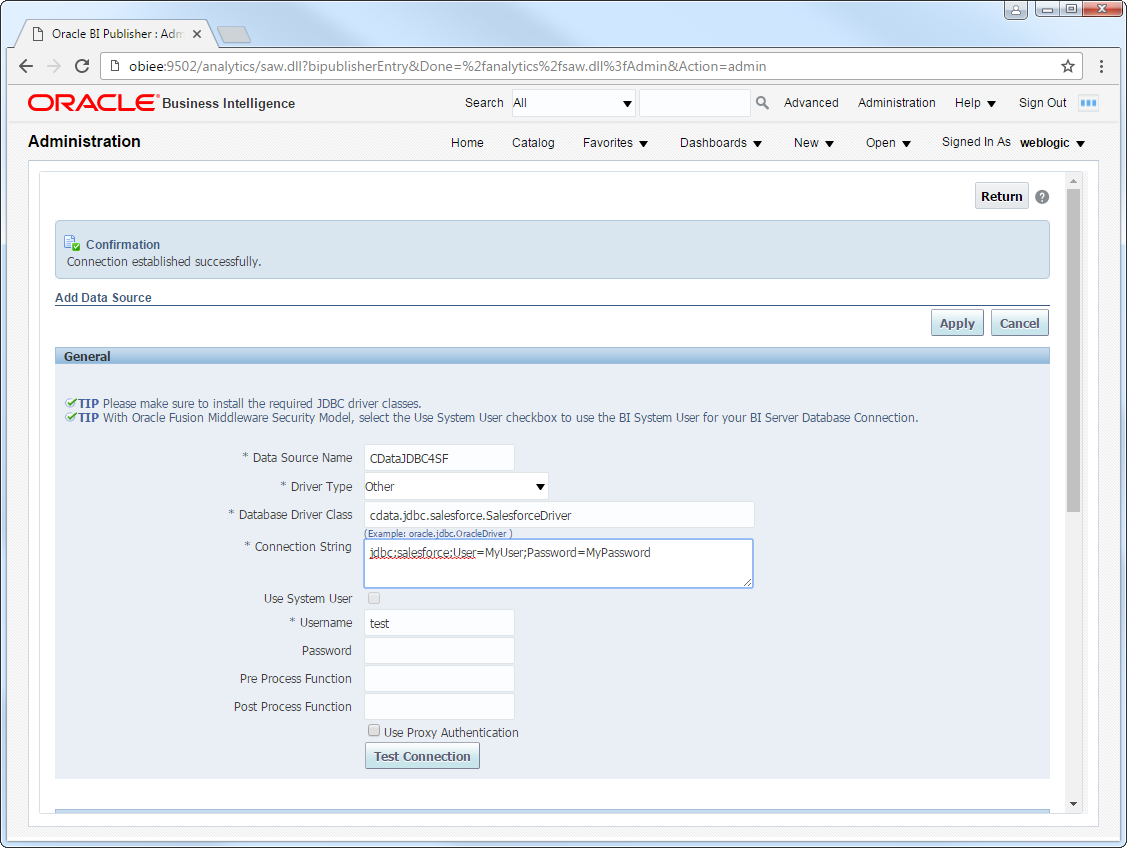

- Log in to BI Publisher (for example, at http://localhost:9502/analytics) and click Administration -> Manage BI Publisher.

- Click JDBC Connection -> Add Data Source.

- Enter the following information:

- Data Source Name: Enter the name that users will select when they connect to this data source in their reports.

- Driver Type: Select Other.

- Database DriverClass: Enter the driver class, cdata.jdbc.adls.ADLSDriver.

- Connection String: Enter the JDBC URL.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

This requires an Active Directory web application.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the driver documentation for the TenantId property for details on how to obtain it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
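The Gen 1 properties above end up as Key=Value pairs in the JDBC URL, separated by semicolons after the jdbc:adls: prefix. The following is a minimal sketch of assembling such a URL in Java; the class name AdlsGen1Url and all property values are placeholders, and the sketch assumes that values contain no semicolons (escaping is not handled).

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical helper for illustration; not part of the driver.
class AdlsGen1Url {

    // Joins the properties as Key=Value pairs separated by semicolons.
    // Assumption: values contain no semicolons, so no escaping is applied.
    static String buildUrl(Map<String, String> props) {
        return "jdbc:adls:" + props.entrySet().stream()
                .map(e -> e.getKey() + "=" + e.getValue())
                .collect(Collectors.joining(";"));
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the properties in insertion order.
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Schema", "ADLSGen1");
        props.put("Account", "myAccount");               // placeholder value
        props.put("OAuthClientId", "myClientId");        // placeholder value
        props.put("OAuthClientSecret", "myClientSecret"); // placeholder value
        props.put("TenantId", "myTenantId");             // placeholder value
        System.out.println(buildUrl(props));
    }
}
```

Directory is left out here, in which case the root directory is used, as noted above.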

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key used to authenticate calls to the API. See the driver documentation for the AccessKey property for details on how to obtain it.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
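Because the two schemas need different properties, it can help to check a set of connection properties before pasting the URL into BI Publisher. The sketch below is a hypothetical pre-flight check, not something the driver provides: the class AdlsRequiredProperties is made up for illustration, and it treats Directory as optional because the root directory is used when it is not specified.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical pre-flight check; property names are the ones listed in this article.
class AdlsRequiredProperties {

    static final List<String> GEN1 = Arrays.asList(
            "Schema", "Account", "OAuthClientId", "OAuthClientSecret", "TenantId");
    static final List<String> GEN2 = Arrays.asList(
            "Schema", "Account", "FileSystem", "AccessKey");

    // Returns the required property names that are absent or empty for the chosen Schema.
    static List<String> missing(Map<String, String> props) {
        String schema = props.get("Schema");
        List<String> required;
        if ("ADLSGen1".equals(schema)) {
            required = GEN1;
        } else if ("ADLSGen2".equals(schema)) {
            required = GEN2;
        } else {
            throw new IllegalArgumentException("Schema must be ADLSGen1 or ADLSGen2");
        }
        List<String> result = new ArrayList<>();
        for (String name : required) {
            String value = props.get(name);
            if (value == null || value.trim().isEmpty()) {
                result.add(name);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        Map<String, String> props = new HashMap<>();
        props.put("Schema", "ADLSGen2");
        props.put("Account", "myAccount"); // placeholder value
        System.out.println("Missing properties: " + missing(props));
    }
}
```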

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Azure Data Lake Storage JDBC Driver. Either double-click the JAR file or run it from the command line:

java -jar cdata.jdbc.adls.jar

Fill in the connection properties and copy the connection string to the clipboard.

When you configure the JDBC URL, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.

A typical JDBC URL is below:

jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;AccessKey=myAccessKey;InitiateOAuth=GETANDREFRESH

- Username: Enter the username.

- Password: Enter the password.

- In the Security section, select the allowed user roles.

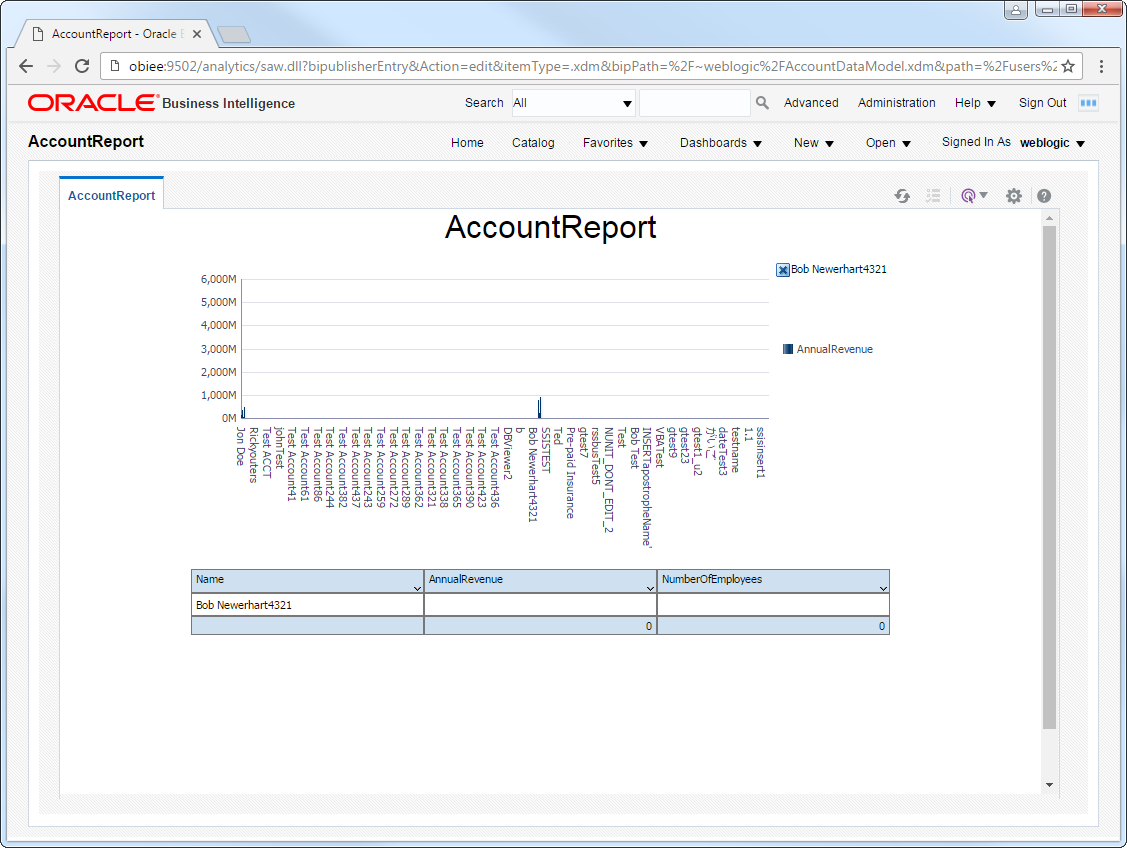
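Before relying on the data source in reports, the same JDBC URL can be tried outside OBIEE with plain java.sql code. The sketch below is an illustration under stated assumptions: the class AdlsConnectionCheck and the helper withMaxRows are hypothetical, the URL values are the placeholders from the typical URL above, and the property key is assumed to be spelled MaxRows in the connection string. It needs the driver JAR on the classpath to connect; without it, the program only reports the failure.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;

// Hypothetical connectivity check; not part of the driver or of OBIEE.
class AdlsConnectionCheck {

    // Appends a row limit to the URL.
    // Assumption: the connection property key is spelled "MaxRows".
    static String withMaxRows(String jdbcUrl, int maxRows) {
        if (maxRows <= 0) {
            throw new IllegalArgumentException("maxRows must be positive");
        }
        String separator = jdbcUrl.endsWith(";") ? "" : ";";
        return jdbcUrl + separator + "MaxRows=" + maxRows;
    }

    public static void main(String[] args) {
        // Pass a real URL as the first argument; the default uses placeholder values.
        String baseUrl = args.length > 0 ? args[0]
                : "jdbc:adls:Schema=ADLSGen2;Account=myAccount;FileSystem=myFileSystem;"
                + "AccessKey=myAccessKey;InitiateOAuth=GETANDREFRESH";
        String url = withMaxRows(baseUrl, 100);
        try (Connection conn = DriverManager.getConnection(url)) {
            System.out.println("Connected to " + conn.getMetaData().getDatabaseProductName());
        } catch (SQLException e) {
            System.out.println("Connection failed: " + e.getMessage());
        }
    }
}
```

A small row limit like this keeps sample queries quick while a report is being designed.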

Create Real-Time Azure Data Lake Storage Reports

You can now create reports and analyses based on real-time Azure Data Lake Storage data. Follow the steps below to use the standard report wizard to create an interactive report that reflects any changes to Azure Data Lake Storage data.

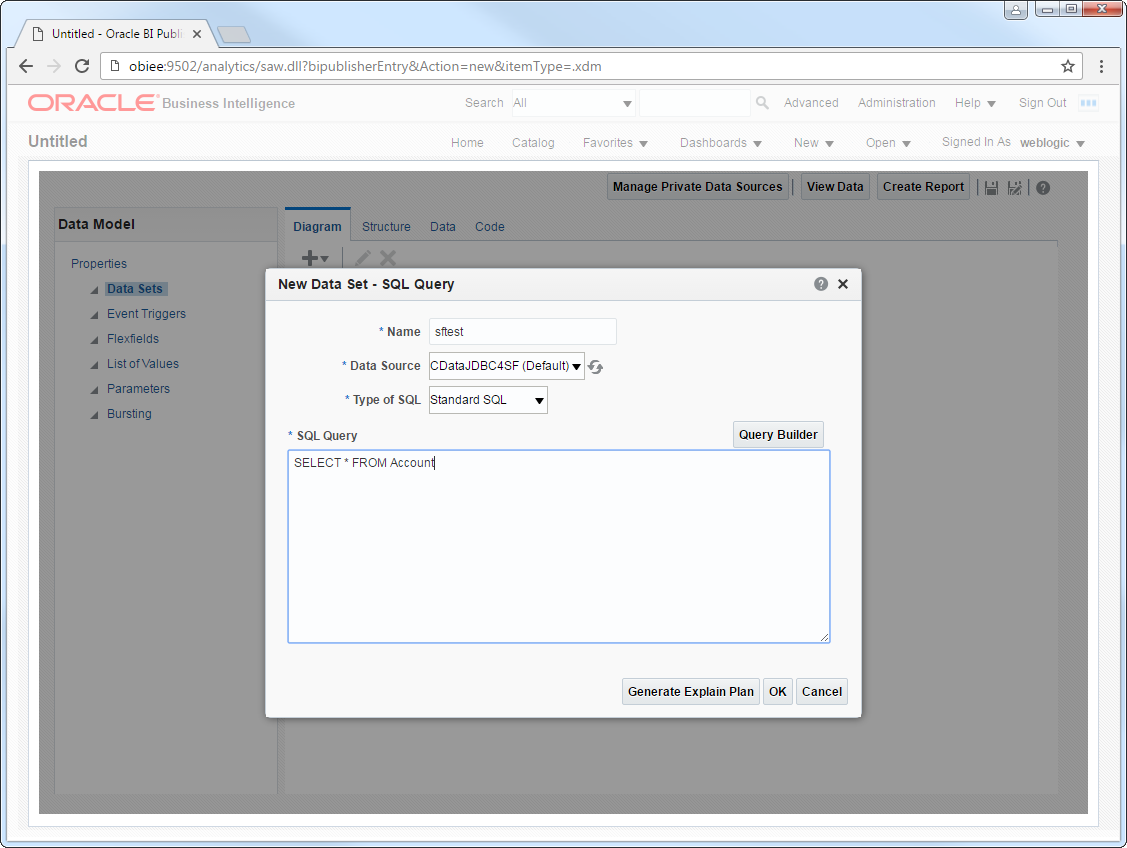

- On the global header, click New -> Data Model.

- On the Diagram tab, select SQL query in the menu.

- Enter a name for the query and in the Data Source menu select the Azure Data Lake Storage JDBC data source you created.

- Select standard SQL and enter a query like the following:

SELECT FullPath, Permission FROM Resources WHERE Type = 'FILE'

- Click View Data to generate the sample data to be used as you build your report.

- Select the number of rows to include in the sample data, click View, and then click Save As Sample Data.

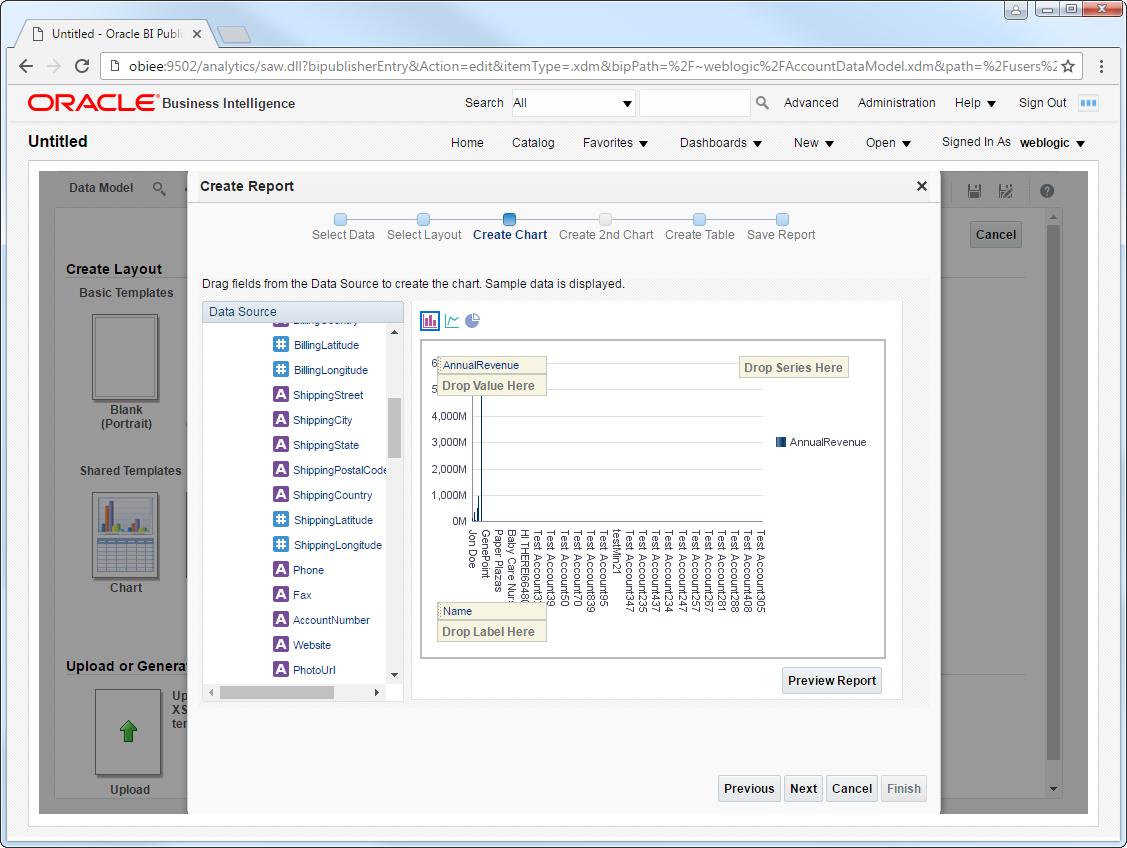

- Click Create Report -> Use Data Model.

- Select Guide Me and on the Select Layout page select the report objects you want to include. In this example we select Chart and Table.

- Drop a numeric column like Permission onto the Drop Value Here box on the y-axis. Drop a dimension column like FullPath onto the Drop Label Here box on the x-axis.

- Click Refresh to pick up any changes to the Azure Data Lake Storage data.
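To preview what the data model's query returns without going through the report wizard, the same SQL can be run over JDBC. This is a minimal sketch, assuming the driver JAR is on the classpath and a working JDBC URL is passed as the first argument; the class AdlsResourceQuery and its formatRow helper are hypothetical, while the query text is the one used in the steps above.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

// Hypothetical preview tool; not part of the driver or of OBIEE.
class AdlsResourceQuery {

    // The query from the data model step in this article.
    static final String QUERY =
            "SELECT FullPath, Permission FROM Resources WHERE Type = 'FILE'";

    // Formats one result row as tab-separated text.
    static String formatRow(String fullPath, String permission) {
        return fullPath + "\t" + permission;
    }

    public static void main(String[] args) {
        if (args.length == 0) {
            System.out.println("Usage: java AdlsResourceQuery <jdbc-url>");
            return;
        }
        // try-with-resources closes the result set, statement, and connection in turn.
        try (Connection conn = DriverManager.getConnection(args[0]);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery(QUERY)) {
            while (rs.next()) {
                System.out.println(formatRow(rs.getString("FullPath"),
                        rs.getString("Permission")));
            }
        } catch (SQLException e) {
            System.out.println("Query failed: " + e.getMessage());
        }
    }
}
```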