Create Databricks-Connected Nintex Workflows

Use CData Connect Cloud to connect to Databricks data from Nintex Workflow Cloud and build custom workflows using live Databricks data.

Nintex Workflow Cloud is a cloud-based platform where you can design workflows to automate simple or complex processes using drag-and-drop interactions — without writing any code. When paired with CData Connect Cloud, you get instant, cloud-to-cloud access to Databricks data for business applications. This article shows how to create a virtual database for Databricks in Connect Cloud and build a simple workflow from Databricks data in Nintex.

CData Connect Cloud provides a pure, cloud-to-cloud interface for Databricks, allowing you to build workflows from live Databricks data in Nintex Workflow Cloud — without replicating the data to a natively supported database. Nintex allows you to access data directly using SQL queries. Using optimized data processing out of the box, CData Connect Cloud pushes all supported SQL operations (filters, JOINs, etc.) directly to Databricks, leveraging server-side processing to quickly return the requested Databricks data.

Configure Databricks Connectivity for Nintex

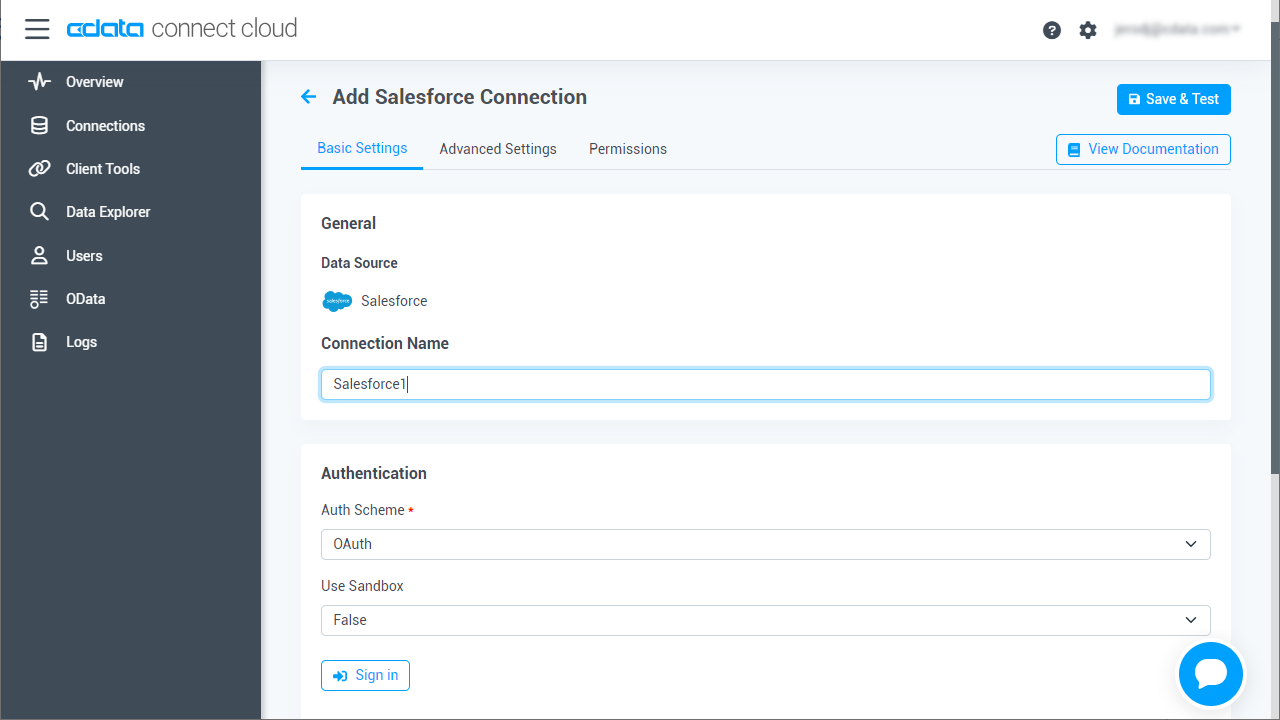

Connectivity to Databricks from Nintex is made possible through CData Connect Cloud. To work with Databricks data from Nintex, we start by creating and configuring a Databricks connection.

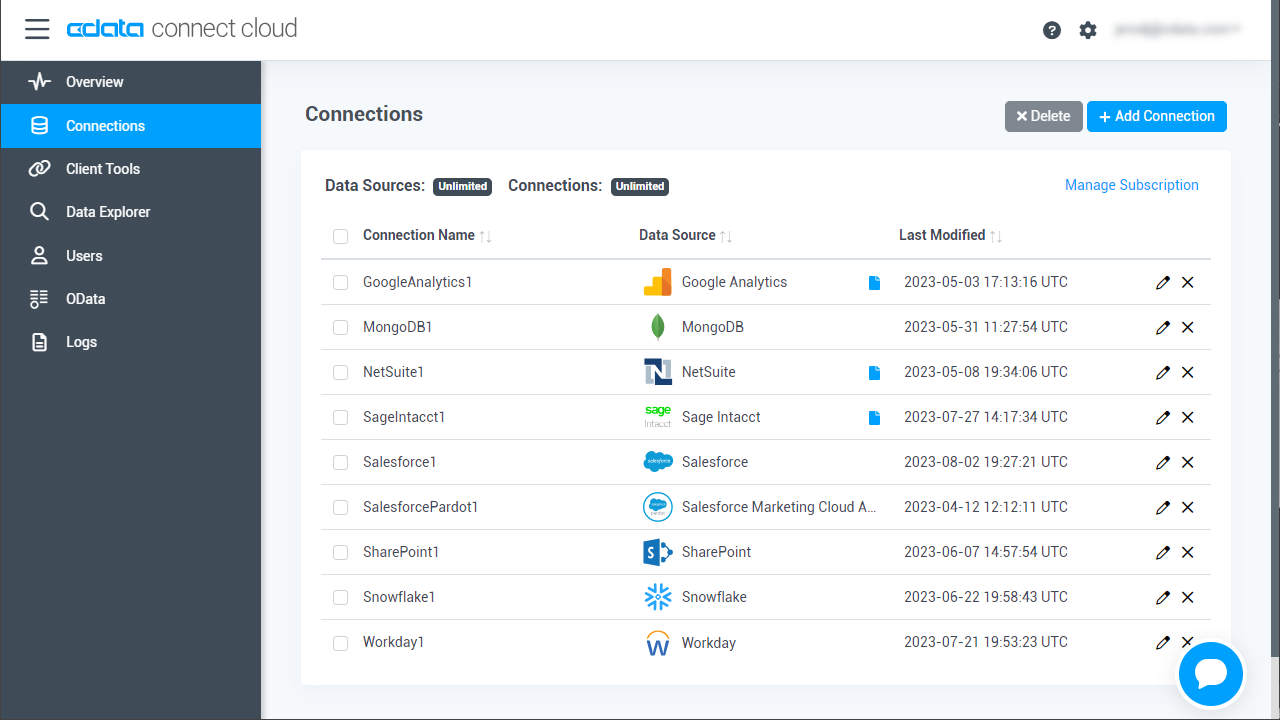

- Log into Connect Cloud, click Connections and click Add Connection

![Adding a Connection]()

- Select "Databricks" from the Add Connection panel

![Selecting a data source]()

-

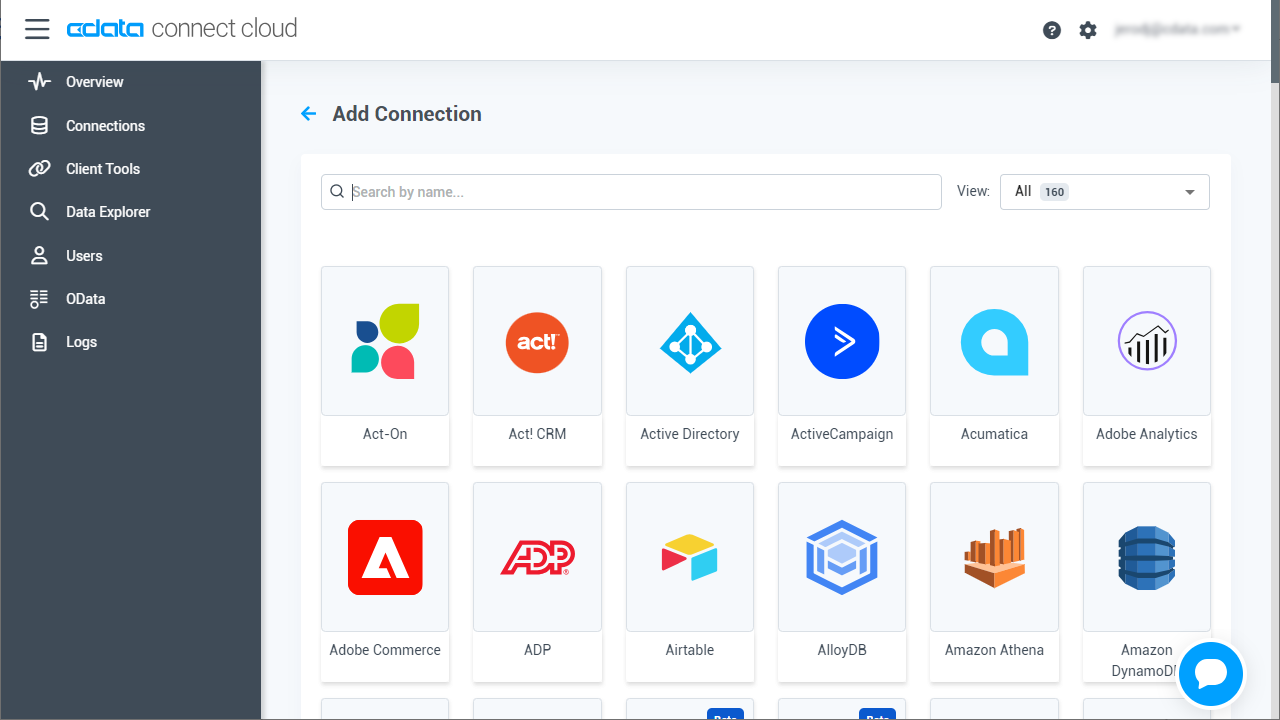

Enter the necessary authentication properties to connect to Databricks.

To connect to a Databricks cluster, set the properties as described below.

Note: You can find the required values in your Databricks instance by navigating to Clusters, selecting the desired cluster, and opening the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).

![Configuring a connection (Salesforce is shown)]()

- Click Create & Test

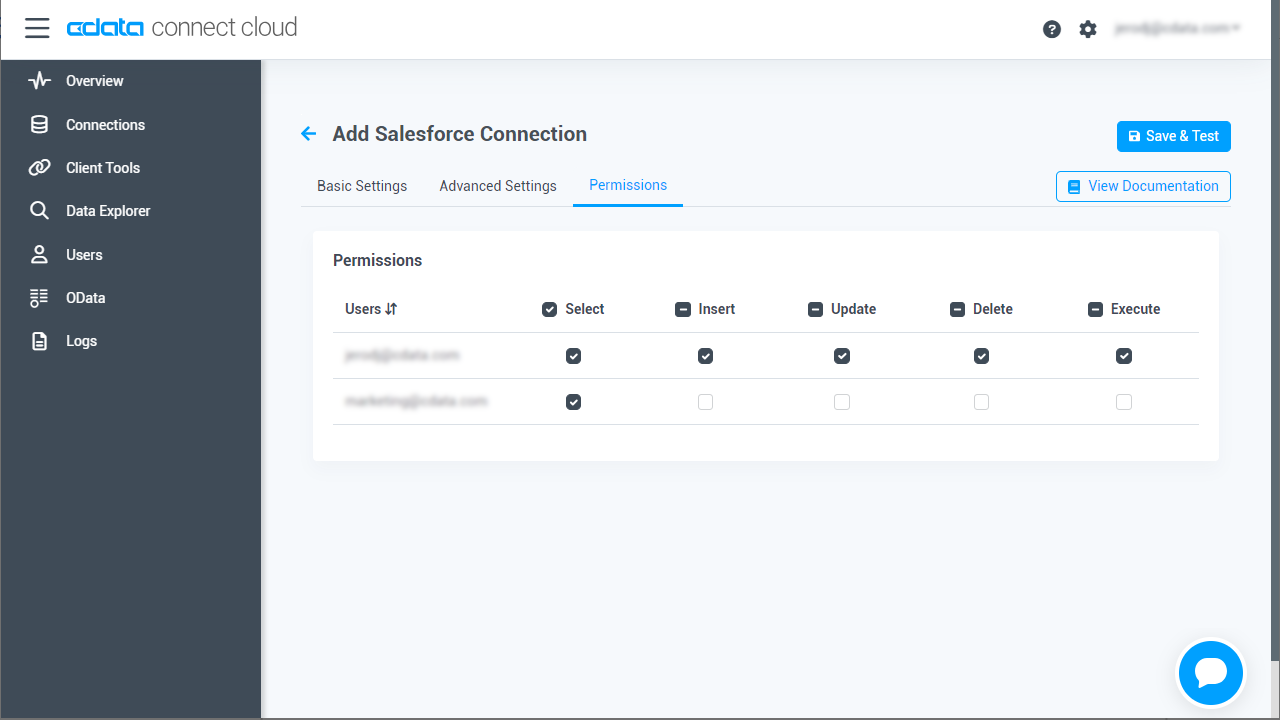

- Navigate to the Permissions tab in the Add Databricks Connection page and update the User-based permissions.

![Updating permissions]()
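As a rough illustration of the three connection properties above, the sketch below collects them in Python and tidies up a Server value that was pasted as a full URL. The helper names (`normalize_server`, `connection_properties`) and the sample hostname are hypothetical, and the assumption that the Server field expects a bare hostname rests only on the "Server Hostname" wording above. Connect Cloud itself needs no code for this step.

```python
from urllib.parse import urlparse


def normalize_server(value: str) -> str:
    """Return a bare hostname from whatever was copied out of the Databricks UI."""
    text = value.strip()
    # urlparse only fills in .hostname when a scheme separator is present.
    if "://" not in text:
        text = "https://" + text
    host = urlparse(text).hostname
    if not host:
        raise ValueError(f"could not find a hostname in {value!r}")
    return host


def connection_properties(server: str, http_path: str, token: str) -> dict[str, str]:
    """Collect the Server, HTTPPath, and Token properties (placeholder values only)."""
    props = {
        "Server": normalize_server(server),
        "HTTPPath": http_path.strip(),
        "Token": token.strip(),
    }
    # Report every empty property at once rather than failing on the first.
    missing = [name for name, val in props.items() if not val]
    if missing:
        raise ValueError("missing properties: " + ", ".join(missing))
    return props
```

Running the values through a check like this before typing them into the connection form can catch a stray `https://` prefix or an empty token early.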

Add a Personal Access Token

If you are connecting from a service, application, platform, or framework that does not support OAuth authentication, you can create a Personal Access Token (PAT) to use for authentication. As a best practice, create a separate PAT for each service to keep access granular.

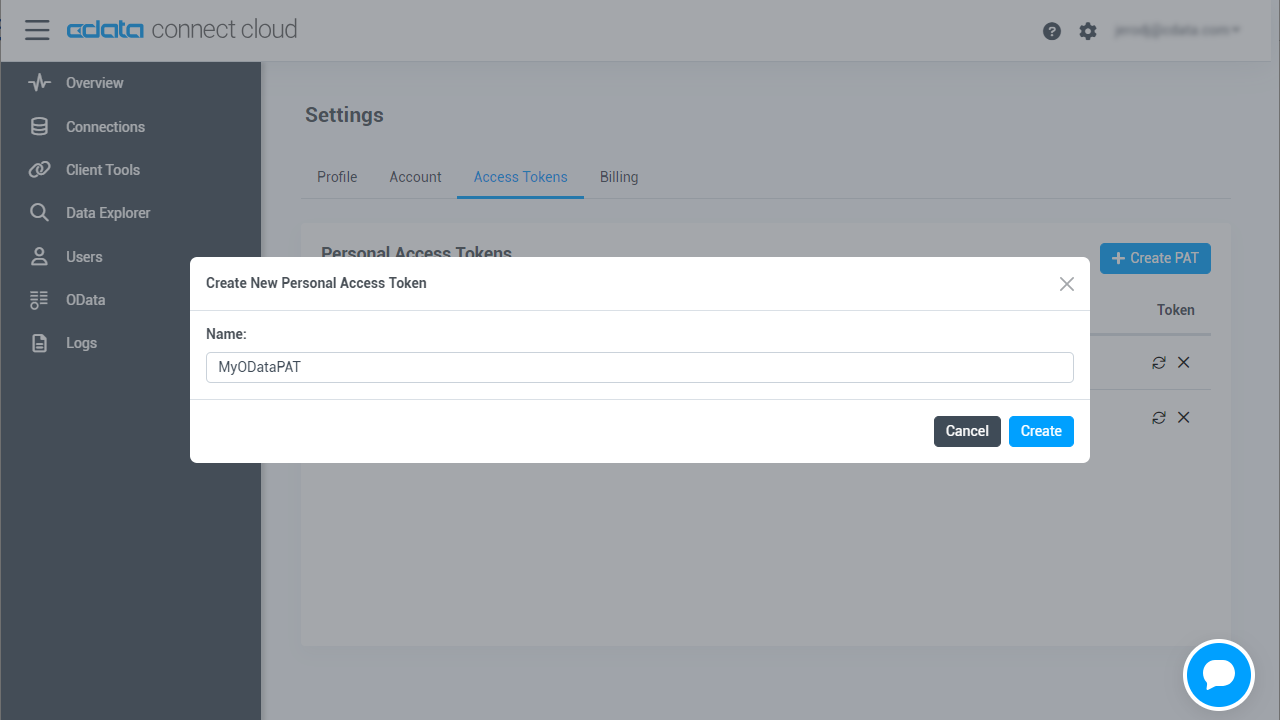

- Click on your username at the top right of the Connect Cloud app and click User Profile.

- On the User Profile page, scroll down to the Personal Access Tokens section and click Create PAT.

- Give your PAT a name and click Create.

![Creating a new PAT]()

- The personal access token is only visible at creation, so be sure to copy it and store it securely for future use.
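One possible way to follow the "store it securely" advice when a script needs the PAT is to keep it out of source code and read it from an environment variable. The sketch below assumes a variable named `CONNECT_CLOUD_PAT`, which is a hypothetical name and not something Connect Cloud defines.

```python
import os


def load_pat(var_name: str = "CONNECT_CLOUD_PAT") -> str:
    """Read the PAT from the environment instead of hard-coding it."""
    pat = os.environ.get(var_name, "").strip()
    if not pat:
        raise RuntimeError(f"set {var_name} to your Connect Cloud PAT first")
    return pat


def mask(secret: str, visible: int = 4) -> str:
    """Hide all but the last few characters so the token is safe to log."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

A password manager or a secrets vault serves the same purpose; the environment variable is only the simplest option to demonstrate.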

With the connection configured, you are ready to connect to Databricks data from Nintex Workflow Cloud.

Connect to Databricks from Nintex

The steps below outline creating a new connection to Databricks data from Nintex (via Connect Cloud).

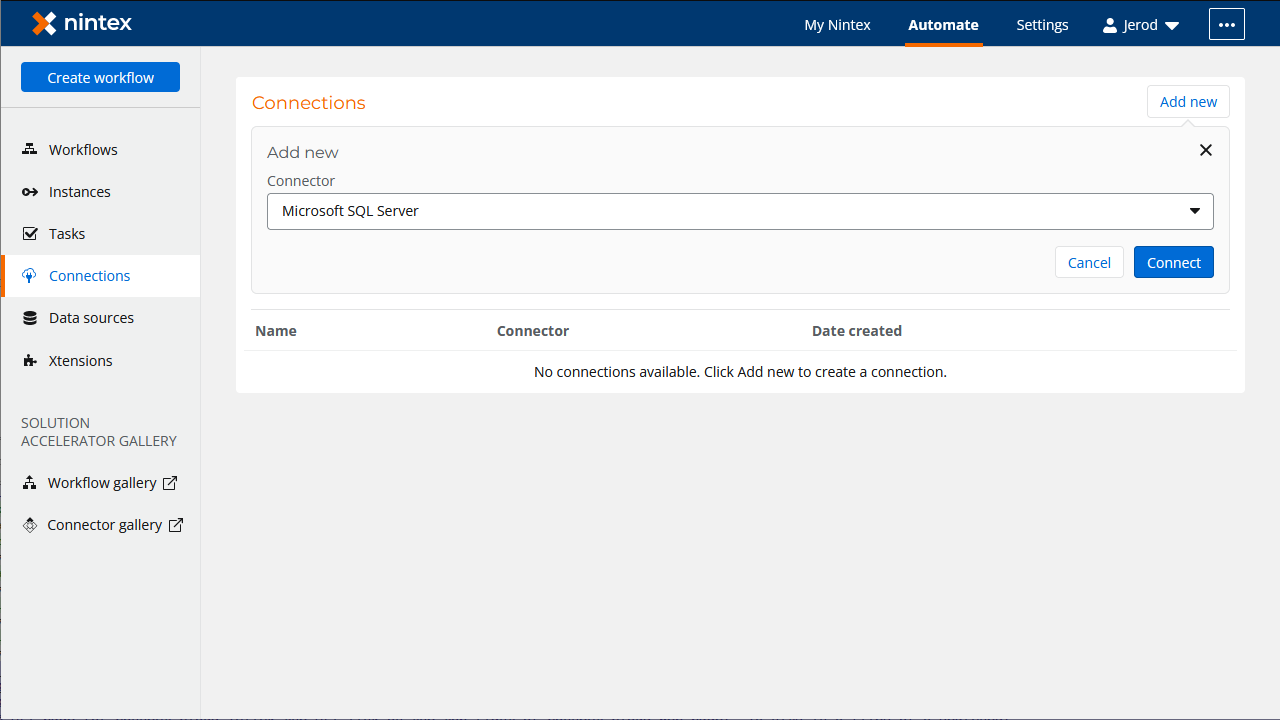

- Log into Nintex Workflow Cloud

- In the Connections tab, click "Add new"

- Select "Microsoft SQL Server" as the connector and click "Connect"

![Adding a new SQL Server Connection]()

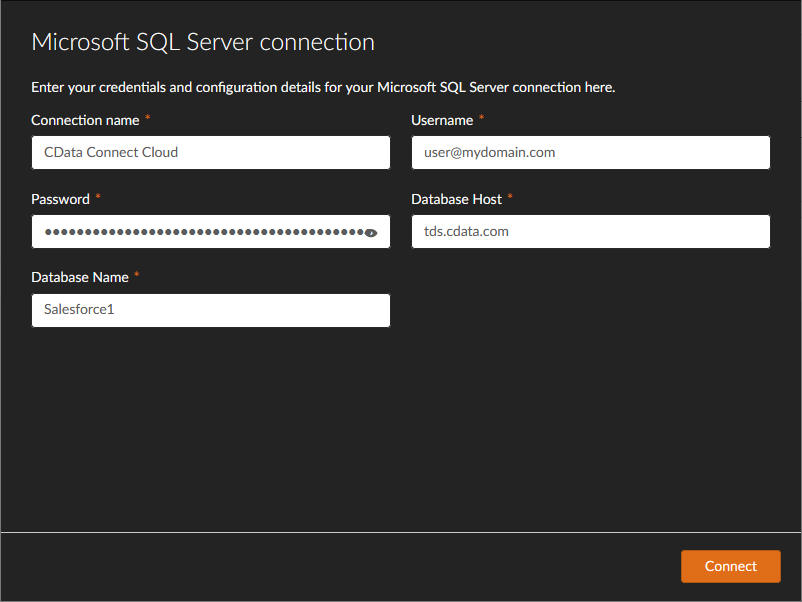

- In the SQL Server connection wizard, set the following properties:

- Connection Name: a name for the Connect Cloud connection

- Username: a Connect Cloud username (e.g., user@example.com)

- Password: the Connect Cloud user's PAT

- Database Host: tds.cdata.com

- Database Name: the Databricks connection (e.g., Databricks1)

![Configuring the Connection to Connect Cloud]()

- Click "Connect"

- Configure the connection permissions and click "Save permissions"

![Configuring permissions and saving the Connection]()
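The wizard fields above can be summarized as a small checklist. The sketch below is only an illustration: the `NintexSqlServerConnection` class and its `problems` method are hypothetical names, not part of Nintex or Connect Cloud, and the sample values are placeholders. The one fixed value, `tds.cdata.com`, comes from the Database Host step above.

```python
from dataclasses import dataclass, fields

CONNECT_CLOUD_HOST = "tds.cdata.com"  # Database Host value given in the steps above


@dataclass
class NintexSqlServerConnection:
    """Mirror of the five fields in the SQL Server connection wizard."""

    connection_name: str
    username: str        # Connect Cloud username
    password: str        # the Connect Cloud user's PAT, not the account password
    database_name: str   # name of the Databricks connection, e.g. Databricks1
    database_host: str = CONNECT_CLOUD_HOST

    def problems(self) -> list[str]:
        """Return a list of human-readable issues; an empty list means all fields look filled in."""
        issues = [
            f"{f.name} is empty"
            for f in fields(self)
            if not getattr(self, f.name).strip()
        ]
        host = self.database_host.strip()
        if host and host != CONNECT_CLOUD_HOST:
            issues.append(f"database_host should be {CONNECT_CLOUD_HOST}")
        return issues
```

For example, `NintexSqlServerConnection("Connect Cloud", "user@example.com", "", "Databricks1").problems()` reports that the password (the PAT) was left empty.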

Create a Simple Databricks Workflow

With the connection to CData Connect Cloud configured, we are ready to build a simple workflow to access Databricks data. Start by clicking the "Create workflow" button.

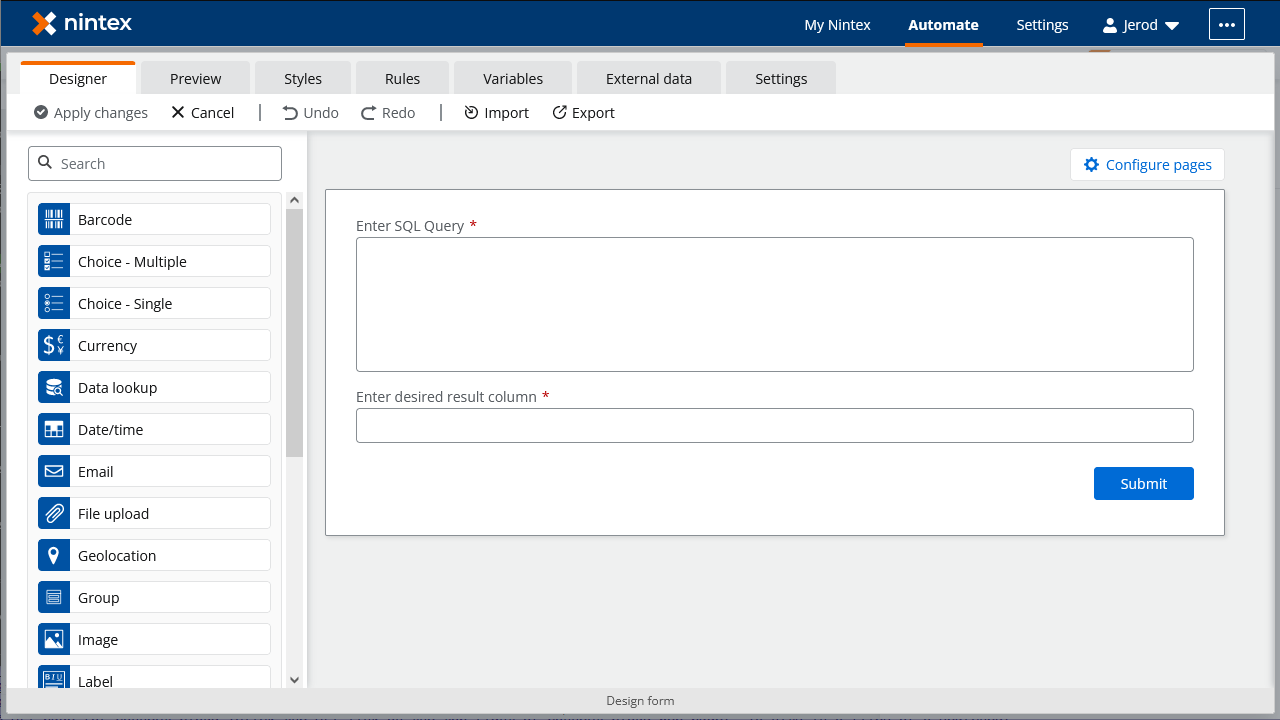

Configure the Start Event Action

- Click the start event task and select the "Form" event

- Click "Design form"

- Drag a "Text - Long" element onto the Form and click the element to configure it

- Set "Title" to "Enter SQL query"

- Set "Required" to true

- Drag a "Text - Short" element onto the Form and click the element to configure it

- Set "Title" to "Enter desired result column"

- Set "Required" to true

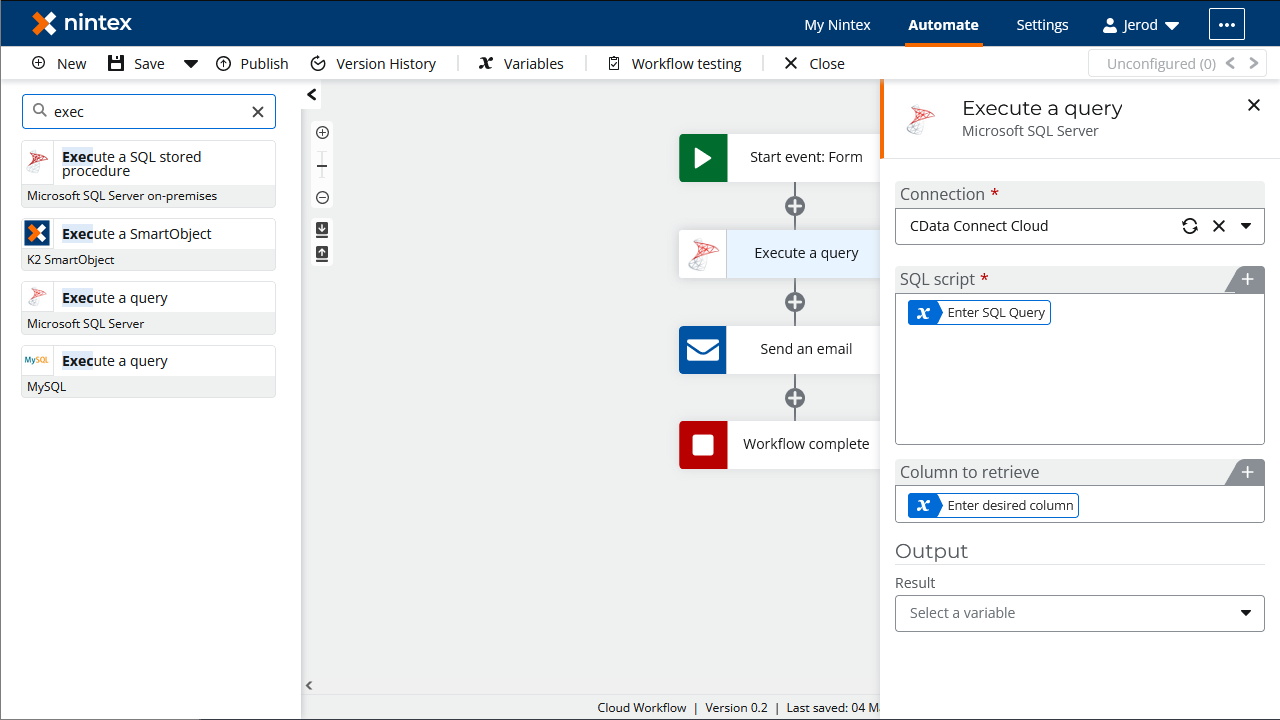

Configure an "Execute a Query" Action

- Add an "Execute a query" action after the "Start event: Form" action and click to configure the action

- Set "SQL Script" to the "Enter SQL Query" variable from the "Start event" action

- Set "Column to retrieve" to the "Enter desired result column" variable from the "Start event" action

- Set "Retrieved column" to a new variable (e.g., "values")

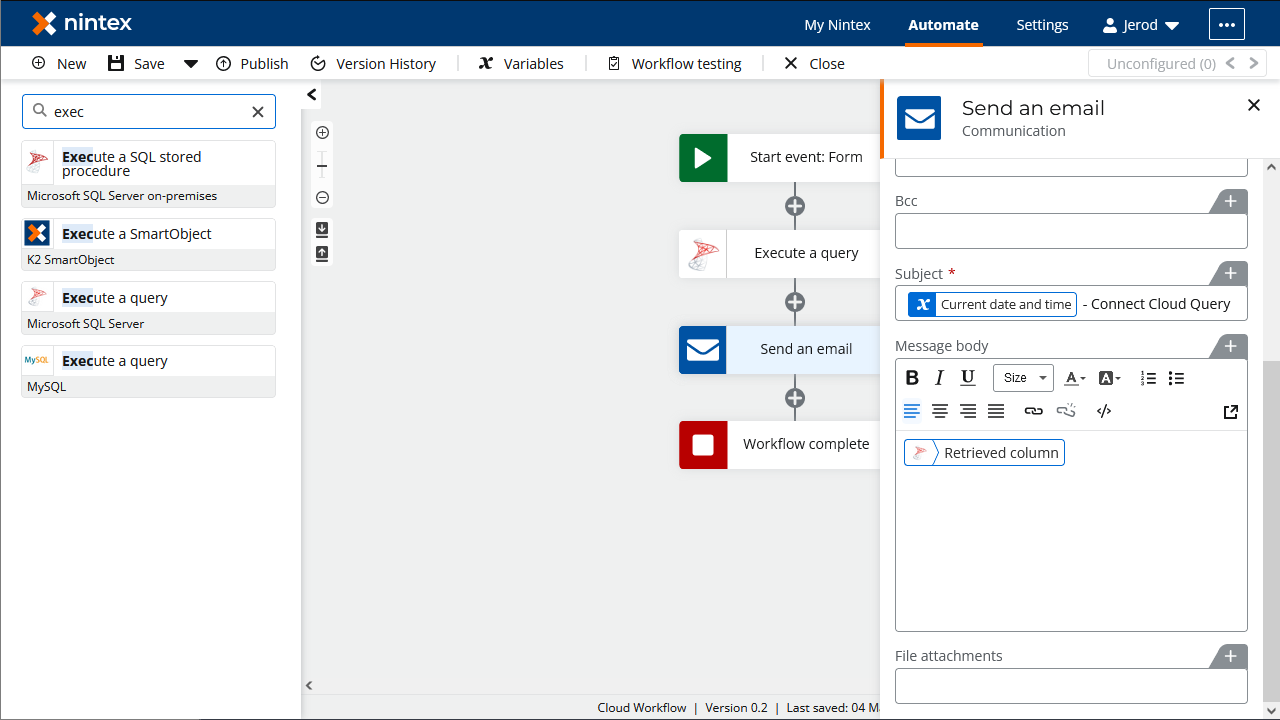

Configure a "Send an Email" Action

- Add a "Send an email" action after the "Execute a query" action and click to configure the action

- Set the "Recipient email address"

- Set the "Subject"

- Set the "Message body" to the variable created for the retrieved column

Once you configure the actions, click "Save," name the Workflow, and click "Save" again. You now have a simple workflow that will query Databricks using SQL and send an email with the results.
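To make the data flow of this workflow concrete, here is a minimal sketch that imitates its three stages in Python: the form inputs (a SQL query and a result column), the "Execute a query" step, and the email body. It runs against an in-memory SQLite database as a stand-in, not against Databricks or Connect Cloud, and the `customers` table, its rows, and the function names are all made up for illustration.

```python
import sqlite3


def execute_query(conn, sql_script, column_to_retrieve):
    """Mimic "Execute a query": run the script and keep one column of the result."""
    cursor = conn.execute(sql_script)
    names = [d[0] for d in cursor.description]
    if column_to_retrieve not in names:
        raise KeyError(f"{column_to_retrieve!r} is not in the result columns {names}")
    idx = names.index(column_to_retrieve)
    return [row[idx] for row in cursor.fetchall()]


def message_body(values):
    """Mimic "Send an email": the retrieved column becomes the message body."""
    return "\n".join(str(v) for v in values)


# Stand-in data source; in the real workflow the query goes to Databricks.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (name TEXT, city TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [("Ada", "London"), ("Grace", "New York")],
)

# The two form inputs: "Enter SQL query" and "Enter desired result column".
values = execute_query(conn, "SELECT name, city FROM customers ORDER BY name", "city")
body = message_body(values)
```

The sketch also shows why the form asks for the column separately: the query may return several columns, but only the one named in "Column to retrieve" ends up in the workflow variable.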

To learn more about live data access to 100+ SaaS, Big Data, and NoSQL sources directly from your cloud applications, check out the CData Connect Cloud page. Sign up for a free trial and reach out to our Support Team if you have any questions.