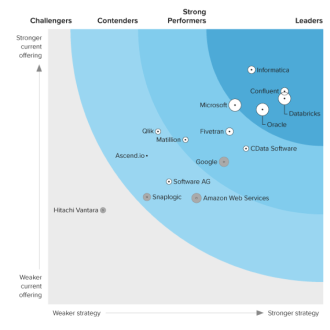

Discover how a bimodal integration strategy can address the major data management challenges facing your organization today.

Get the Report →Automated Continuous Kafka Replication to Databricks

Use CData Sync for automated, continuous, customizable Kafka replication to Databricks.

Always-on applications rely on automatic failover capabilities and real-time data access. CData Sync integrates live Kafka data into your Databricks instance, allowing you to consolidate all of your data into a single location for archiving, reporting, analytics, machine learning, artificial intelligence and more.

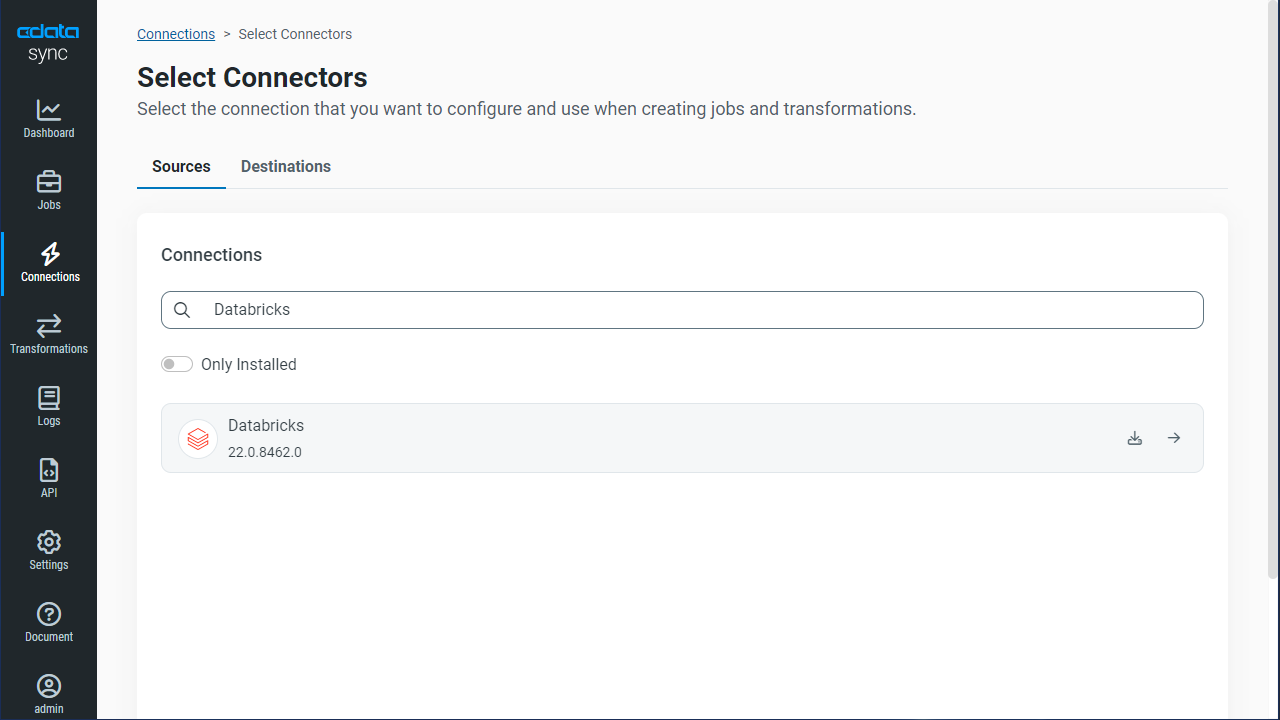

Configure Databricks as a Replication Destination

Using CData Sync, you can replicate Kafka data to Databricks. To add a replication destination, navigate to the Connections tab.

- Click Add Connection.

- Select Databricks as a destination.


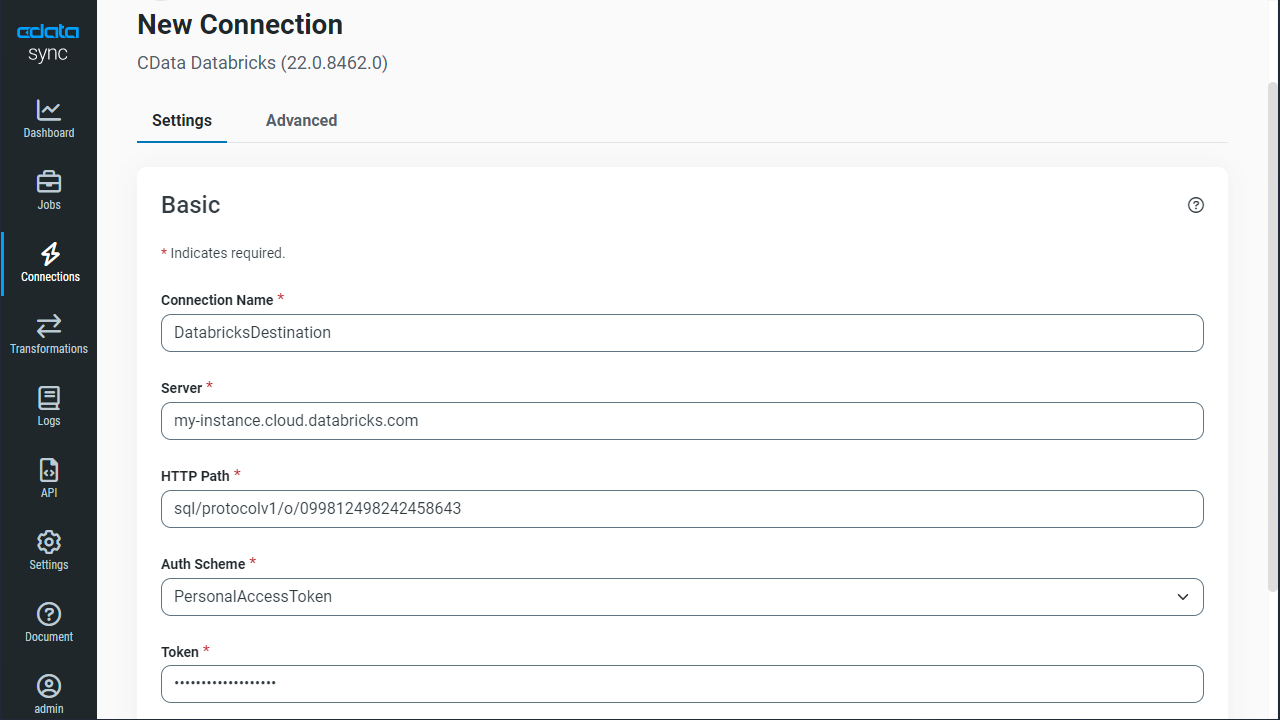

- Enter the necessary connection properties. To connect to a Databricks cluster, set the properties as described below.

Note: You can find the required values in your Databricks instance: navigate to Clusters, select the desired cluster, and open the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token. You can obtain this value on the User Settings page of your Databricks instance, under the Access Tokens tab.

- Click Test Connection to ensure that the connection is configured properly.


- Click Save Changes.
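
Before entering the values in the connection dialog, it can help to check that all three are present. The following is a minimal sketch, not part of CData Sync: only the property names Server, HTTPPath, and Token come from the steps above, while the function name, the sample values, and the semicolon-separated Name=Value layout are assumptions made for illustration.

```python
# Hypothetical pre-flight check. Only the property names (Server, HTTPPath,
# Token) come from the article; everything else is illustrative.
REQUIRED_PROPERTIES = ("Server", "HTTPPath", "Token")


def build_connection_string(properties: dict) -> str:
    """Validate the required properties and join them as Name=Value pairs."""
    missing = [name for name in REQUIRED_PROPERTIES if not properties.get(name)]
    if missing:
        raise ValueError("Missing connection properties: " + ", ".join(missing))
    # Assumed layout: semicolon-separated Name=Value pairs.
    return ";".join(f"{name}={properties[name]}" for name in REQUIRED_PROPERTIES) + ";"


if __name__ == "__main__":
    example = {
        "Server": "your-workspace.example.com",  # placeholder Server Hostname
        "HTTPPath": "your/http/path",            # placeholder HTTP Path
        "Token": "your-personal-access-token",   # placeholder token
    }
    print(build_connection_string(example))
```

A missing or empty value raises a `ValueError` that names the absent properties, so the problem surfaces before Test Connection does.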

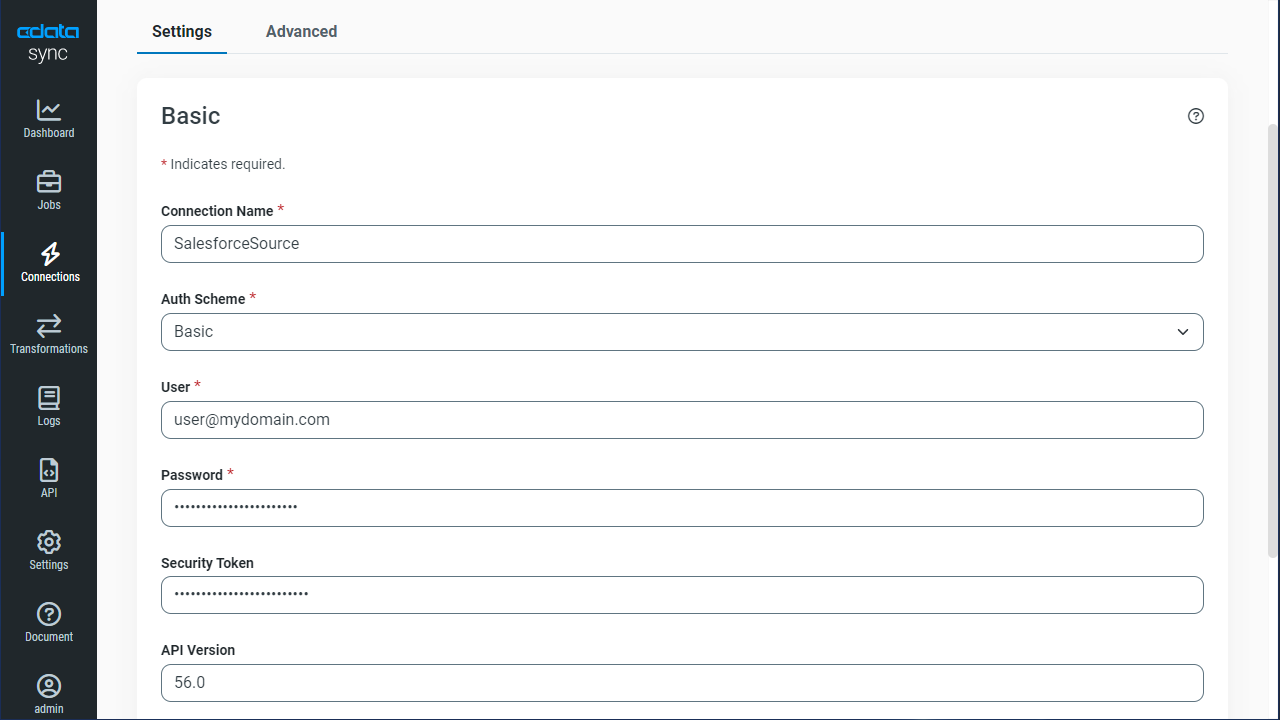

Configure the Kafka Connection

To add a connection to Kafka, navigate to the Connections tab.

- Click Add Connection.

- Select a source (Kafka).

- Configure the connection properties.

Set the BootstrapServers and Topic properties to specify the address of your Apache Kafka server and the topic you want to interact with.

Authentication Mechanisms

- SASL Plain: Set the User and Password properties, and set AuthScheme to 'Plain'.

- SASL SSL: Set the User and Password properties, set AuthScheme to 'Scram', and set UseSSL to true.

- SSL: Set the SSLCert and SSLCertPassword properties, and set UseSSL to true.

- Kerberos: Set the User and Password properties, and set AuthScheme to 'Kerberos'.

You may be required to trust the server certificate. In such cases, specify the TrustStorePath and the TrustStorePassword if necessary.


- Click Connect to ensure that the connection is configured properly.

- Click Save Changes.
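
The mechanisms listed above each require a different combination of properties, which the sketch below collects in one place. The property names and values (BootstrapServers, Topic, User, Password, SSLCert, SSLCertPassword, AuthScheme, UseSSL) are taken from this article; the lookup table, the function name, and the sample values are hypothetical and not part of CData Sync.

```python
# Hypothetical lookup of the properties each mechanism needs, as described
# in the list above. "required" values are supplied by the user; "fixed"
# values are the settings the article prescribes.
AUTH_REQUIREMENTS = {
    "SASL Plain": {"required": ("User", "Password"),
                   "fixed": {"AuthScheme": "Plain"}},
    "SASL SSL": {"required": ("User", "Password"),
                 "fixed": {"AuthScheme": "Scram", "UseSSL": "true"}},
    "SSL": {"required": ("SSLCert", "SSLCertPassword"),
            "fixed": {"UseSSL": "true"}},
    "Kerberos": {"required": ("User", "Password"),
                 "fixed": {"AuthScheme": "Kerberos"}},
}


def kafka_properties(mechanism, bootstrap_servers, topic, **credentials):
    """Return the full property set for one mechanism, or raise ValueError."""
    if mechanism not in AUTH_REQUIREMENTS:
        raise ValueError(f"Unknown mechanism: {mechanism}")
    rule = AUTH_REQUIREMENTS[mechanism]
    missing = [name for name in rule["required"] if name not in credentials]
    if missing:
        raise ValueError(f"{mechanism} requires: " + ", ".join(missing))
    properties = {"BootstrapServers": bootstrap_servers, "Topic": topic}
    properties.update({name: credentials[name] for name in rule["required"]})
    properties.update(rule["fixed"])
    return properties


if __name__ == "__main__":
    # Sample values are placeholders.
    print(kafka_properties("SASL SSL", "localhost:9092", "orders",
                           User="kafka_user", Password="kafka_password"))
```

If the server certificate must be trusted, TrustStorePath and TrustStorePassword would be added on top of whichever set the function returns.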

Configure Replication Queries

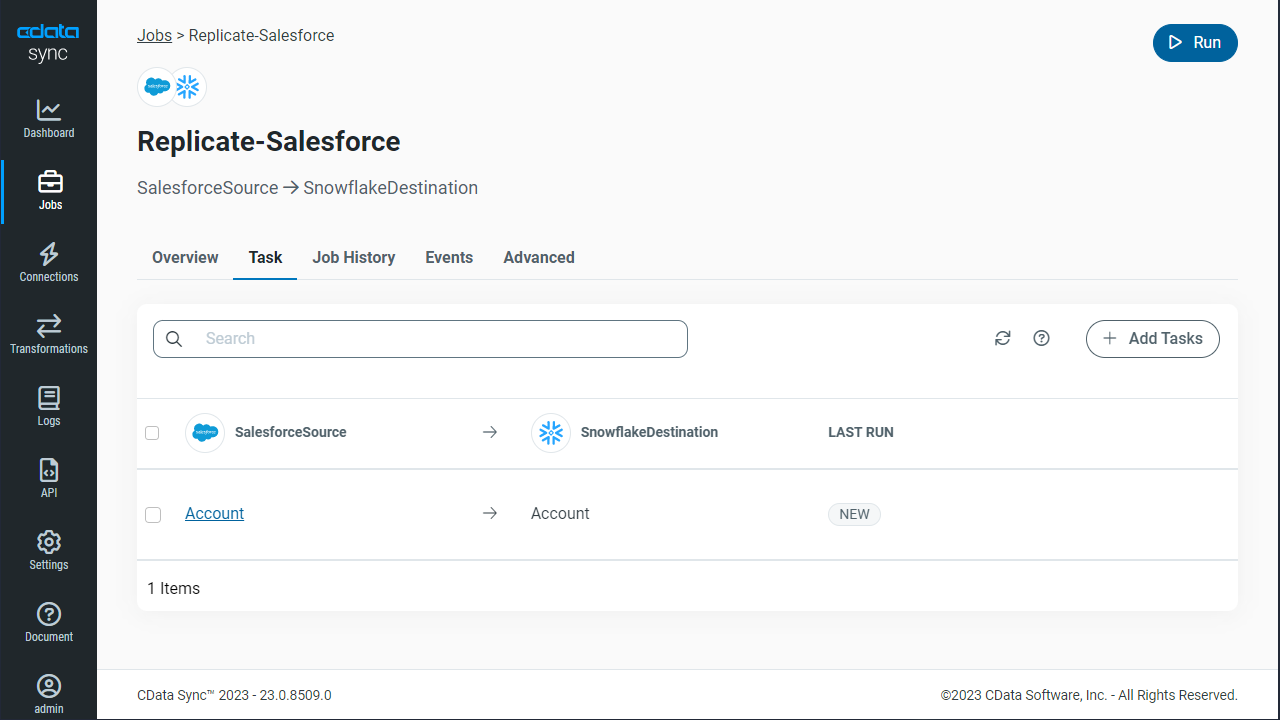

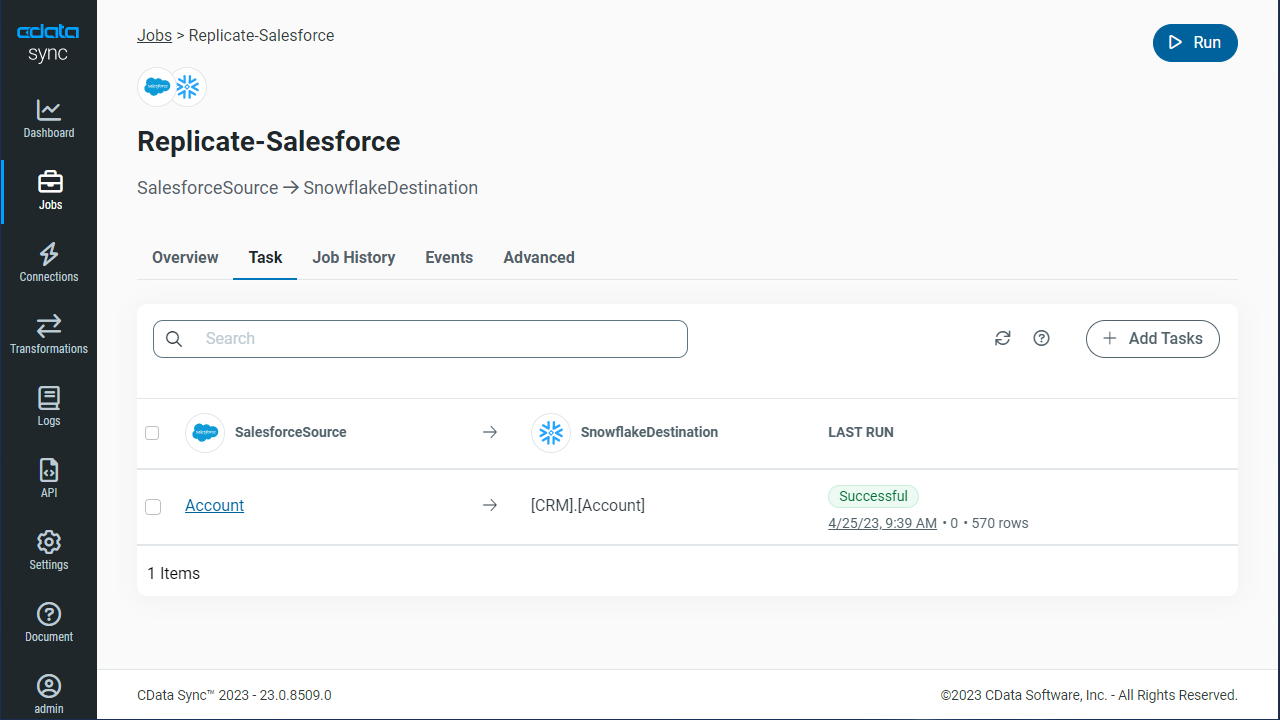

CData Sync enables you to control replication with a point-and-click interface and with SQL queries. For each replication you wish to configure, navigate to the Jobs tab and click Add Job. Select the Source and Destination for your replication.

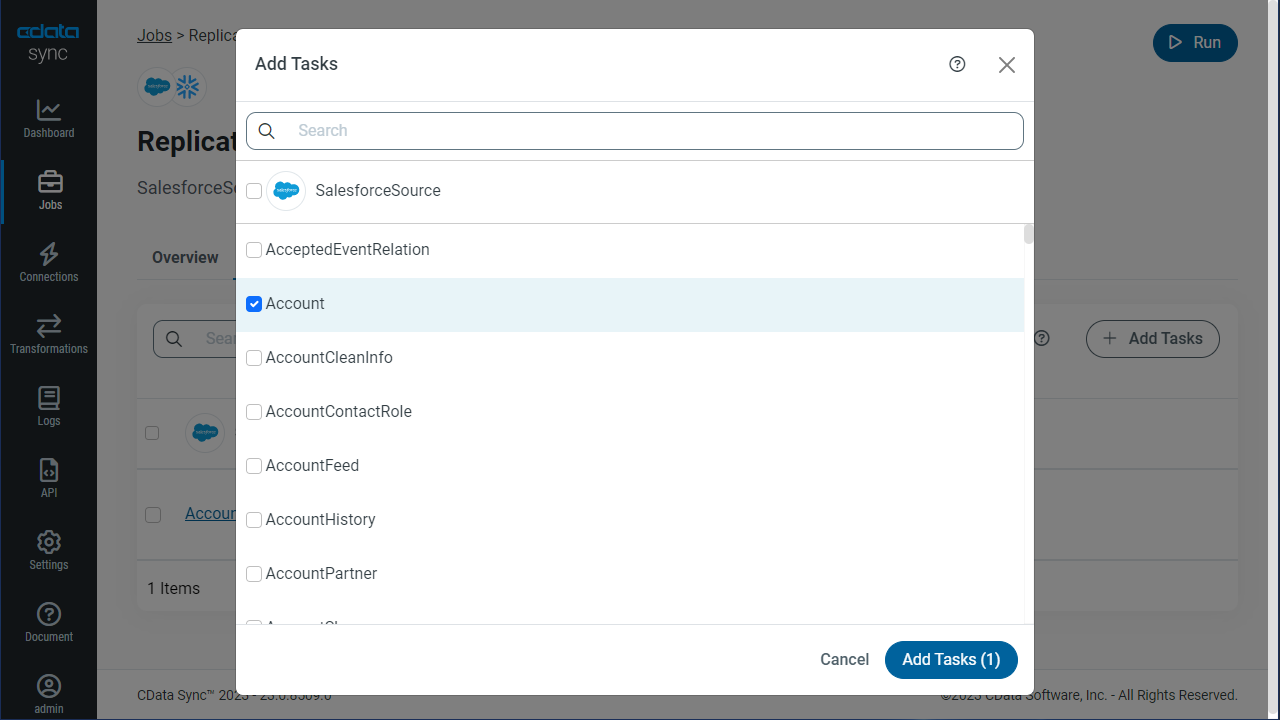

Replicate Entire Tables

To replicate an entire table, click Add Tables in the Tables section, choose the table(s) you wish to replicate, and click Add Selected Tables.

Customize Your Replication

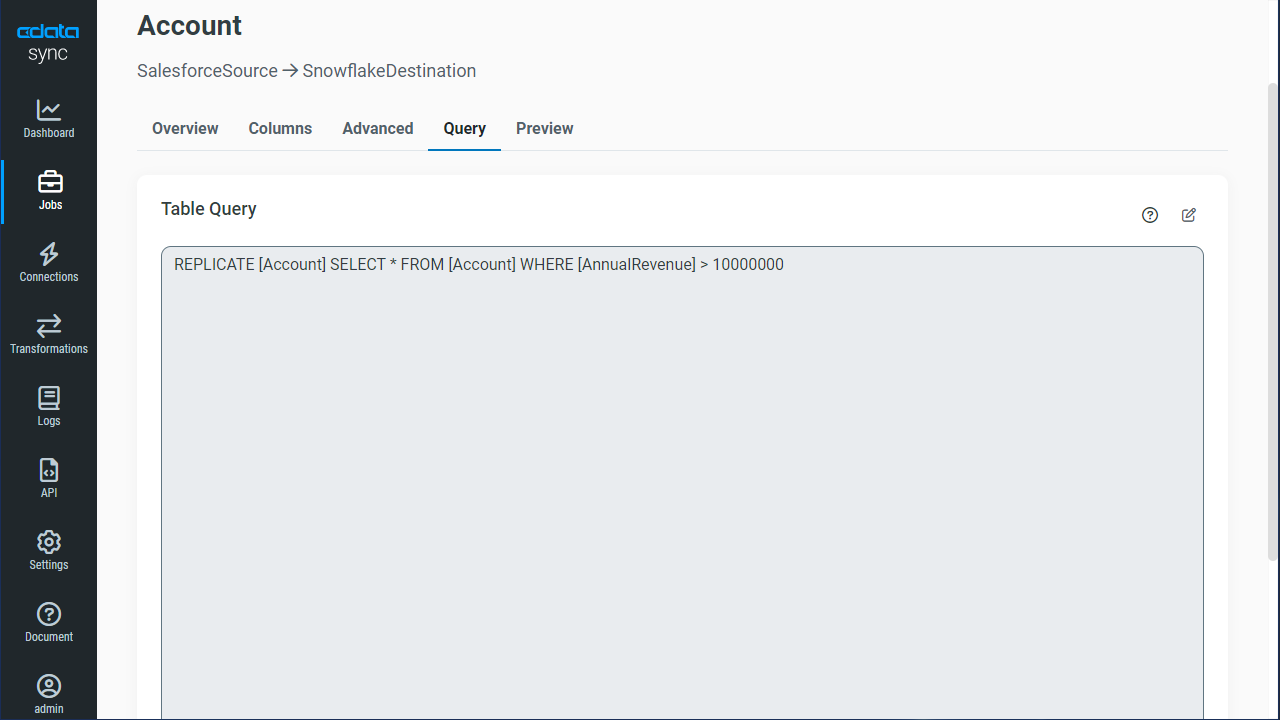

You can use the Columns and Query tabs of a task to customize your replication. The Columns tab allows you to specify which columns to replicate, rename the columns at the destination, and even perform operations on the source data before replicating. The Query tab allows you to add filters, grouping, and sorting to the replication.
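
To make the effect of these options concrete, here is a plain-Python sketch of what column selection, renaming, filtering, and sorting do to a set of records. It illustrates the concepts only and is not how CData Sync implements them; the function name, the sample records, and their field names are all hypothetical.

```python
def customize(records, columns, where=None, order_by=None):
    """Filter, sort, and project records.

    columns maps each source column to its name at the destination;
    source columns that are not listed are dropped.
    """
    rows = [r for r in records if where is None or where(r)]
    if order_by is not None:
        rows = sorted(rows, key=lambda r: r[order_by])
    return [{dest: r[src] for src, dest in columns.items()} for r in rows]


if __name__ == "__main__":
    # Hypothetical messages read from a Kafka topic.
    messages = [
        {"id": 2, "amount": 5.0, "region": "EU"},
        {"id": 1, "amount": 20.0, "region": "US"},
        {"id": 3, "amount": 12.5, "region": "EU"},
    ]
    result = customize(
        messages,
        columns={"id": "order_id", "amount": "total"},  # select and rename
        where=lambda r: r["region"] == "EU",            # filter
        order_by="amount",                              # sort
    )
    print(result)
```

In this sketch, selecting and renaming corresponds to the Columns tab, and the filter and sort correspond to the Query tab.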

Schedule Your Replication

In the Schedule section, you can set a job to run automatically at a specified interval, ranging from once every 10 minutes to once every month.

Once you have configured the replication job, click Save Changes. You can configure any number of jobs to manage the replication of your Kafka data to Databricks.