Connect to Databricks Data in Aqua Data Studio

Access Databricks data from tools in Aqua Data Studio such as the Visual Query Builder and the Table Data Editor.

The CData JDBC Driver for Databricks integrates Databricks data with wizards and analytics in IDEs like Aqua Data Studio. This article shows how to connect to Databricks data through the connection manager and execute queries.

Create a JDBC Data Source

You can use the connection manager to define connection properties and save them in a new JDBC data source. The Databricks data source can then be accessed from Aqua Data Studio tools.

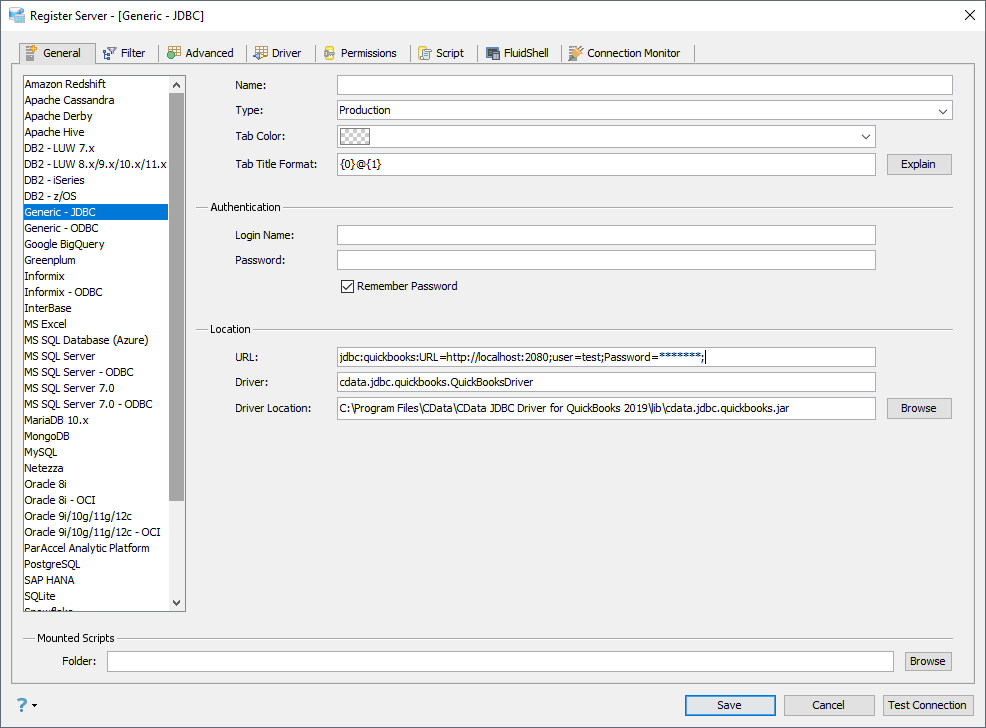

- In Aqua Data Studio, select Register Server from the Servers menu.

- In the Register Server form, select the 'Generic - JDBC' connection.

- Enter the following JDBC connection properties:

- Name: Enter a name for the data source; for example, Databricks.

- Driver Location: Click the Browse button and select the cdata.jdbc.databricks.jar file, located in the lib subfolder of the installation directory.

- Driver: Enter the Driver's class name, cdata.jdbc.databricks.DatabricksDriver.

- URL: Enter the JDBC URL, which starts with jdbc:databricks: and is followed by a semicolon-separated list of connection properties.

To connect to a Databricks cluster, set the properties as described below.

Note: You can find the needed values in your Databricks instance by navigating to Clusters, selecting the desired cluster, and opening the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).
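The URL assembly described above can be sketched in a few lines of Java. This is a minimal illustration, not part of the CData driver: the class DatabricksUrlBuilder and its methods are hypothetical, the values are placeholders, and it assumes no property value contains a semicolon, so no quoting or escaping is attempted.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper (not part of the CData driver) that assembles a
// jdbc:databricks: URL from the cluster properties described above.
final class DatabricksUrlBuilder {

    private static final String PREFIX = "jdbc:databricks:";

    // Appends each property as Name=Value; in insertion order.
    // Assumption: values are non-empty and contain no ';', so no quoting is applied.
    static String build(Map<String, String> props) {
        StringBuilder url = new StringBuilder(PREFIX);
        for (Map.Entry<String, String> e : props.entrySet()) {
            String value = e.getValue();
            if (value == null || value.isEmpty() || value.indexOf(';') >= 0) {
                throw new IllegalArgumentException("Unsupported value for " + e.getKey());
            }
            url.append(e.getKey()).append('=').append(value).append(';');
        }
        return url.toString();
    }

    // Server, HTTPPath and Token are the three properties listed in the steps above.
    static String forCluster(String server, String httpPath, String token) {
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Server", server);
        props.put("HTTPPath", httpPath);
        props.put("Token", token);
        return build(props);
    }

    public static void main(String[] args) {
        // Placeholder values; copy the real ones from your cluster's JDBC/ODBC tab.
        // Prints: jdbc:databricks:Server=MyServer;HTTPPath=MyHTTPPath;Token=MyToken;
        System.out.println(forCluster("MyServer", "MyHTTPPath", "MyToken"));
    }
}
```

A LinkedHashMap is used so the properties appear in the order they were added, which keeps the generated URL easy to compare against the one produced by the connection string designer.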

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Databricks JDBC Driver. Either double-click the JAR file or execute it from the command line:

java -jar cdata.jdbc.databricks.jar

Fill in the connection properties and copy the connection string to the clipboard.

![Using the built-in connection string designer to generate a JDBC URL (Salesforce is shown.)]()

A typical JDBC URL is below:

jdbc:databricks:Server=127.0.0.1;Port=443;TransportMode=HTTP;HTTPPath=MyHTTPPath;UseSSL=True;User=MyUser;Password=MyPassword;
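Because the URL is just a prefix followed by Name=Value pairs separated by semicolons, a copied connection string can be split back into its properties to check it before pasting it into the Register Server form. The sketch below is a hypothetical helper, not a CData API; it assumes values are unquoted and contain no semicolons, and it stores property names exactly as written even though the driver may treat them case-insensitively.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper that splits a jdbc:databricks: URL back into its
// Name=Value connection properties, e.g. to check a copied connection string.
final class DatabricksUrlParser {

    private static final String PREFIX = "jdbc:databricks:";

    static Map<String, String> parse(String url) {
        if (url == null || !url.startsWith(PREFIX)) {
            throw new IllegalArgumentException("URL must start with " + PREFIX);
        }
        Map<String, String> props = new LinkedHashMap<>();
        // split() drops trailing empty strings, so the final ';' is harmless;
        // empty segments elsewhere (";;") are skipped explicitly.
        for (String pair : url.substring(PREFIX.length()).split(";")) {
            if (pair.trim().isEmpty()) {
                continue;
            }
            int eq = pair.indexOf('=');
            if (eq <= 0) {
                throw new IllegalArgumentException("Malformed property: " + pair);
            }
            props.put(pair.substring(0, eq).trim(), pair.substring(eq + 1).trim());
        }
        return props;
    }

    public static void main(String[] args) {
        String url = "jdbc:databricks:Server=127.0.0.1;Port=443;TransportMode=HTTP;"
                + "HTTPPath=MyHTTPPath;UseSSL=True;User=MyUser;Password=MyPassword;";
        for (Map.Entry<String, String> e : parse(url).entrySet()) {
            // Mask secrets so they are not echoed to the console.
            String key = e.getKey();
            boolean secret = key.equalsIgnoreCase("Password") || key.equalsIgnoreCase("Token");
            System.out.println(key + " = " + (secret ? "****" : e.getValue()));
        }
    }
}
```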

![The JDBC data source, defined by the JAR path, driver class, and JDBC URL. (QuickBooks is shown.)]()

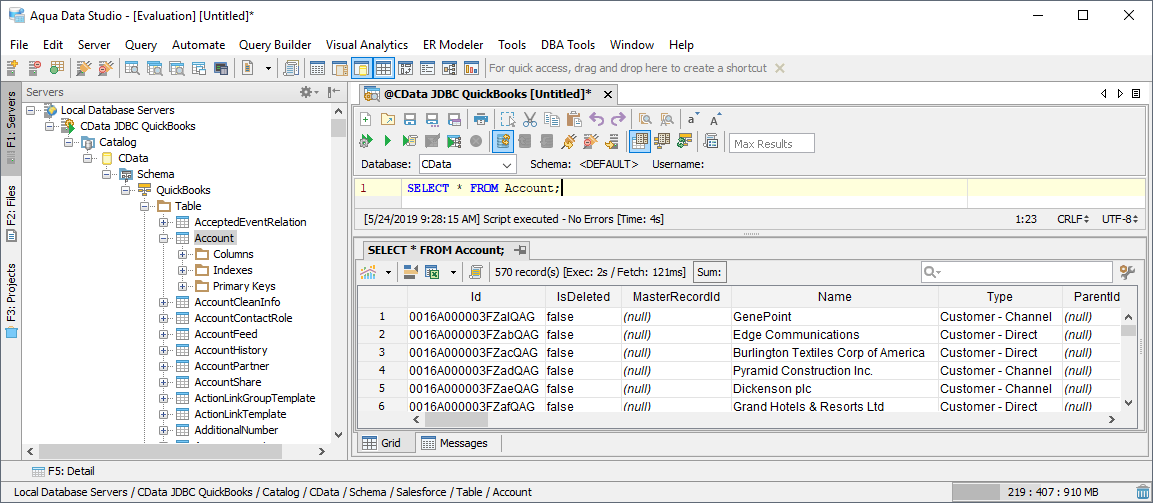

Query Databricks Data

You can now query the tables exposed by the Databricks data source, for example from the Visual Query Builder or the Table Data Editor.
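The same driver class and URL can also be exercised from plain JDBC, which may help confirm the connection works before building queries in Aqua Data Studio. This is a sketch under stated assumptions: cdata.jdbc.databricks.jar is on the classpath, the URL holds placeholder values, the class and the previewSql helper are hypothetical, table names are used unquoted, and the LIMIT clause is assumed to be accepted by the driver's SQL dialect.

```java
import java.sql.Connection;
import java.sql.DatabaseMetaData;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.sql.Statement;

final class QueryDatabricks {

    // Hypothetical helper: a row-limited preview query for one table.
    // Assumptions: the driver accepts LIMIT and the table name needs no quoting.
    static String previewSql(String table, int maxRows) {
        if (maxRows <= 0) {
            throw new IllegalArgumentException("maxRows must be positive");
        }
        return "SELECT * FROM " + table + " LIMIT " + maxRows;
    }

    public static void main(String[] args) {
        // Placeholder URL; pass the one entered in Aqua Data Studio as the first argument.
        String url = args.length > 0 ? args[0]
                : "jdbc:databricks:Server=MyServer;HTTPPath=MyHTTPPath;Token=MyToken;";
        try {
            // Driver class name as given in the Register Server steps.
            Class.forName("cdata.jdbc.databricks.DatabricksDriver");
        } catch (ClassNotFoundException e) {
            System.err.println("Driver JAR not on classpath: " + e.getMessage());
            return;
        }
        try (Connection conn = DriverManager.getConnection(url)) {
            DatabaseMetaData meta = conn.getMetaData();
            String firstTable = null;
            // List every table the driver exposes (null types = all table types).
            try (ResultSet tables = meta.getTables(null, null, "%", null)) {
                while (tables.next()) {
                    String name = tables.getString("TABLE_NAME");
                    System.out.println("Table: " + name);
                    if (firstTable == null) {
                        firstTable = name;
                    }
                }
            }
            if (firstTable == null) {
                return;
            }
            // Print up to 10 rows of the first table, columns separated by " | ".
            try (Statement stmt = conn.createStatement();
                 ResultSet rs = stmt.executeQuery(previewSql(firstTable, 10))) {
                ResultSetMetaData cols = rs.getMetaData();
                while (rs.next()) {
                    StringBuilder row = new StringBuilder();
                    for (int i = 1; i <= cols.getColumnCount(); i++) {
                        if (i > 1) {
                            row.append(" | ");
                        }
                        row.append(rs.getString(i));
                    }
                    System.out.println(row);
                }
            }
        } catch (SQLException e) {
            System.err.println("Query failed: " + e.getMessage());
        }
    }
}
```

Listing tables through DatabaseMetaData avoids hard-coding a table name, since the tables exposed depend on your workspace.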
