CData Software - Knowledge Base

Latest Articles

- Replicate Data from Multiple Files in an Amazon S3 Bucket Using CData Sync

- Replicate Data from Multiple Local Files Using CData Sync

- Driver Guide: Marketing Analytics Predefined Reports

- Displaying Data from Related Tables Using Angular with Connect Server

- Deploying CData Sync in a Kubernetes Environment

- Excel Add-In Getting Started Guide

Latest KB Entries

- Jetty Security Notice Overview

- Upsert Salesforce Data Using External Id in SSIS

- NuGet Repository Overview

- SAP Drivers Overview

- Embedded Web Server (.NET) - Potential Medium Security Vulnerability

- Configuring Incremental Replication in CData Sync

ODBC Drivers

- [ article ] Import Google Spreadsheets data into FileMaker Pro

- [ article ] Use the CData ODBC Driver for NetSuite in ...

- [ article ] A Comparison of Drivers for Azure Cosmos DB

- [ article ] Using CData ODBC drivers from SharePoint Excel ...

JDBC Drivers

- [ article ] Access SAP Tables and SAP Queries using CData SAP ...

- [ article ] A Comparison of the CData and Sun JDBC-ODBC ...

- [ article ] Use the CData JDBC Driver for Salesforce in ...

- [ article ] A Performance Comparison of Drivers for MongoDB

SSIS Components

- [ article ] Access SAP Tables and SAP Queries using CData SAP ...

- [ kb ] SSIS Components: The PerformUpgrade Method Failed

- [ article ] How to connect Salesforce to SQL Server with SSIS

- [ article ] Import Salesforce Data into SQL Server using SSIS

ADO.NET Providers

- [ article ] Connect SharePoint to SQL Server through SSIS

- [ article ] Faster Reporting using the CData Provider for ...

- [ article ] Accessing QuickBooks with Entity Framework 6

- [ article ] REST Easy with CData Drivers

BizTalk Adapters

- [ article ] How to Generate Updategrams with the CData BizTalk ...

- [ article ] How to Execute Stored Procedures with the CData ...

- [ article ] Standards-Based Access to NoSQL Data Sources

- [ article ] Configure a One-Way Send Port for the CData ...

Excel Add-Ins

- [ article ] Excel Files as Data Sources Using CData ADO.NET ...

- [ article ] Video: Connecting with MongoDB Data from Microsoft ...

- [ article ] Insert Invoices with the Excel Add-In for ...

- [ article ] Transfer data from Excel to QuickBooks using the ...

API Server

- [ article ] Access Salesforce Data in SharePoint External ...

- [ article ] Connect to Dynamics NAV with the CData OData ...

- [ article ] Building Dynamic React Apps with Database Data

- [ article ] Building Dynamic D3.js Apps with Database Data

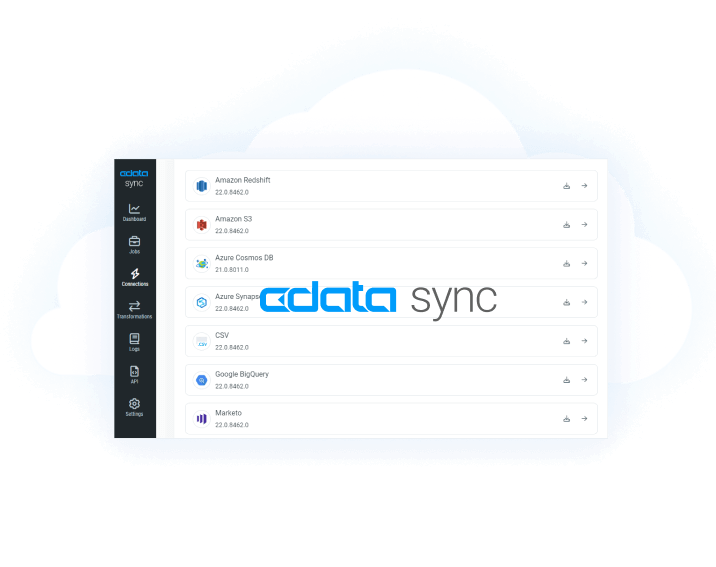

Data Sync

- [ article ] Use CData Sync to Replicate NetSuite Data to ...

- [ kb ] How to Replicate Data Between MySQL and SQL Server ...

- [ kb ] Configuring Incremental Replication in CData Sync

- [ article ] Launch the CData Sync AMI on Amazon Web Services

Windows PowerShell

- [ article ] Reconciling Authorize.net Transactions with ...

- [ article ] Import QuickBooks Online Data to QuickBooks ...

- [ article ] PowerShell Cmdlets Getting Started Guide

- [ article ] Query Google Calendars, Contacts, and Documents ...